AI-Powered DBTL Cycles: Accelerating Systems Metabolic Engineering for Next-Generation Therapeutics

Abstract

This article provides a comprehensive introduction to the Design-Build-Test-Learn (DBTL) cycle, a foundational framework in modern systems metabolic engineering. Tailored for researchers, scientists, and drug development professionals, it explores the evolution of DBTL from a traditional iterative process to an AI-informed, automated paradigm. We cover foundational principles, detailing its role in optimizing microbial cell factories for the production of valuable compounds, from platform chemicals to complex pharmaceuticals. The article delves into advanced methodologies, including the integration of machine learning for zero-shot design and the use of cell-free systems for high-throughput testing. It also addresses common challenges and optimization strategies, illustrated with real-world case studies such as the efficient production of dopamine in E. coli and C5 chemicals in Corynebacterium glutamicum. Finally, we present a comparative analysis of the DBTL framework's validation and its transformative impact on the bioeconomy and clinical research.

The DBTL Cycle Demystified: Core Principles and Evolutionary Impact on Metabolic Engineering

Defining the Design-Build-Test-Learn (DBTL) Framework in Synthetic Biology

The Design-Build-Test-Learn (DBTL) cycle is a systematic, iterative framework central to synthetic biology and metabolic engineering for developing and optimizing biological systems [1] [2]. This engineering-based approach provides a structured pipeline for reprogramming organisms to produce valuable compounds, such as pharmaceuticals or biofuels, by applying genetic modifications [2] [3]. The cycle begins with the Design of genetic constructs, proceeds to the Build phase where DNA is assembled and introduced into a host chassis, continues to the Test phase where performance is experimentally measured, and concludes with the Learn phase, where data is analyzed to inform the next design iteration [4]. The power of the DBTL framework lies in its iterative nature; each cycle incorporates knowledge from the previous one, progressively refining the biological system toward a desired objective [5] [6]. Automation and machine learning (ML) are now revolutionizing this workflow, enabling high-throughput experimentation and sophisticated data analysis that dramatically accelerate the pace of biological engineering [7] [2] [6].

The Four Phases of the DBTL Cycle

Design Phase

The Design phase involves creating a detailed blueprint for the genetic construct or system intended to achieve a specific biological function. This phase relies on domain knowledge, expertise, and computational tools to model the desired outcome [4]. Key design activities include:

- Protein Design: Selecting natural enzymes or designing novel proteins to perform specific catalytic functions [7].

- Genetic Design: Translating amino acid sequences into optimized coding sequences (CDS), designing regulatory elements like ribosome binding sites (RBS), and planning operon architecture [7].

- Assembly Design: Deconstructing plasmid designs into DNA fragments and planning their assembly, considering factors such as restriction enzyme sites, overhang sequences, and GC content [7].

Modern synthetic biology leverages software platforms to automate and enhance this process. These tools can generate detailed DNA assembly protocols, optimize the use of existing lab inventory to reduce costs, and ensure compatibility among DNA fragments, which is critical for complex combinatorial libraries [7].
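As a rough illustration of the assembly-design checks described above, the following Python sketch screens a DNA fragment for GC content and for internal restriction sites on either strand. The enzyme panel, the 30-70% GC window, and all function names are assumptions chosen for the example; they are not settings taken from any of the cited software platforms.

```python
# Hypothetical pre-assembly check for DNA fragments (illustrative only).
# The enzyme panel and GC window below are assumptions, not values from the article.

RESTRICTION_SITES = {
    "BsaI": "GGTCTC",
    "BsmBI": "CGTCTC",
    "EcoRI": "GAATTC",
}

_COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq):
    """Return the reverse complement of a DNA sequence (A/C/G/T only)."""
    return seq.upper().translate(_COMPLEMENT)[::-1]


def gc_content(seq):
    """Fraction of G and C bases in the sequence."""
    seq = seq.upper()
    if not seq:
        raise ValueError("empty sequence")
    return (seq.count("G") + seq.count("C")) / len(seq)


def find_sites(seq, sites=RESTRICTION_SITES):
    """Return {enzyme: [0-based start positions]} for sites on either strand."""
    seq = seq.upper()
    hits = {}
    for enzyme, site in sites.items():
        patterns = {site, reverse_complement(site)}
        positions = sorted(
            i for i in range(len(seq) - len(site) + 1)
            if seq[i:i + len(site)] in patterns
        )
        if positions:
            hits[enzyme] = positions
    return hits


def check_fragment(seq, gc_min=0.30, gc_max=0.70):
    """Summarize GC content and internal restriction sites for one fragment."""
    gc = gc_content(seq)
    return {"gc": gc, "gc_ok": gc_min <= gc <= gc_max, "sites": find_sites(seq)}


if __name__ == "__main__":
    print(check_fragment("ATGGGTCTCAAAGAATTCTTT"))
```

A fragment that reports internal sites for the enzyme used in the assembly would typically be recoded or assigned to a different assembly strategy before ordering.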

Build Phase

The Build phase translates the in silico design into a physical biological entity. This involves synthesizing DNA constructs, assembling them into plasmids or other vectors, and introducing them into a characterization system, such as bacteria, yeast, or cell-free systems [4] [6]. Precision is paramount, as minor errors can lead to significant deviations in the final outcome [7]. Automation is key in this phase:

- Automated Liquid Handlers: Instruments from companies like Tecan, Beckman Coulter, and Hamilton Robotics provide high-precision pipetting for PCR setup, DNA normalization, and plasmid preparation [7].

- Integrated Software Platforms: These orchestrate the entire build process, managing protocols, tracking samples across lab equipment, and handling high-throughput, plate-based workflows [7].

- DNA Synthesis Providers: Partnerships with companies like Twist Bioscience and IDT streamline the integration of custom DNA sequences into automated lab workflows [7].

The shift towards rapid cell-free expression systems is also notable. These systems use protein biosynthesis machinery from cell lysates or purified components to express proteins directly from DNA templates, bypassing time-intensive cloning steps and enabling high-throughput testing [4].

Test Phase

In the Test phase, the engineered biological constructs are experimentally measured to determine the efficacy of the Design and Build phases [4]. This phase often represents a throughput bottleneck in the DBTL cycle, which is now being addressed through automation and high-throughput analytics [6]. Core technologies include:

- High-Throughput Screening (HTS): Automated liquid handling systems and plate readers enable precise and rapid assay setups for thousands of samples [7].

- Multi-Omics Technologies: Next-Generation Sequencing (NGS) platforms provide rapid genotypic analysis, while automated mass spectrometry and NMR enable comprehensive proteomic and metabolomic profiling [7] [6].

- Data Management: Software platforms act as a centralized hub, collecting data from various analytical equipment and transforming raw data into formats ready for in-depth analysis and machine learning [7].

The application of microfluidics has further accelerated this phase, with platforms like DropAI screening over 100,000 picoliter-scale reactions to generate vast datasets [4].

Learn Phase

The Learn phase is where data collected during testing is analyzed to extract insights and inform the next DBTL cycle. The goal is to understand the underlying mechanisms or discover statistical patterns that link genetic design to phenotypic outcome [6]. Machine learning (ML) has become a powerful tool for this phase, processing large, complex datasets to uncover patterns that are not apparent through manual analysis [2]. Applications include:

- Predictive Modeling: Using ML models to make accurate genotype-to-phenotype predictions, guiding subsequent metabolic engineering designs [5] [7].

- Feature Analysis: Advanced ML can provide reasons for its predictions, deepening the understanding of biological relationships and accelerating the derivation of design principles [2].

The integration of ML can be so impactful that a paradigm shift to "LDBT" has been proposed, where Learning based on large datasets precedes Design, potentially reducing the need for multiple iterative cycles [4].

DBTL in Action: An Experimental Case Study

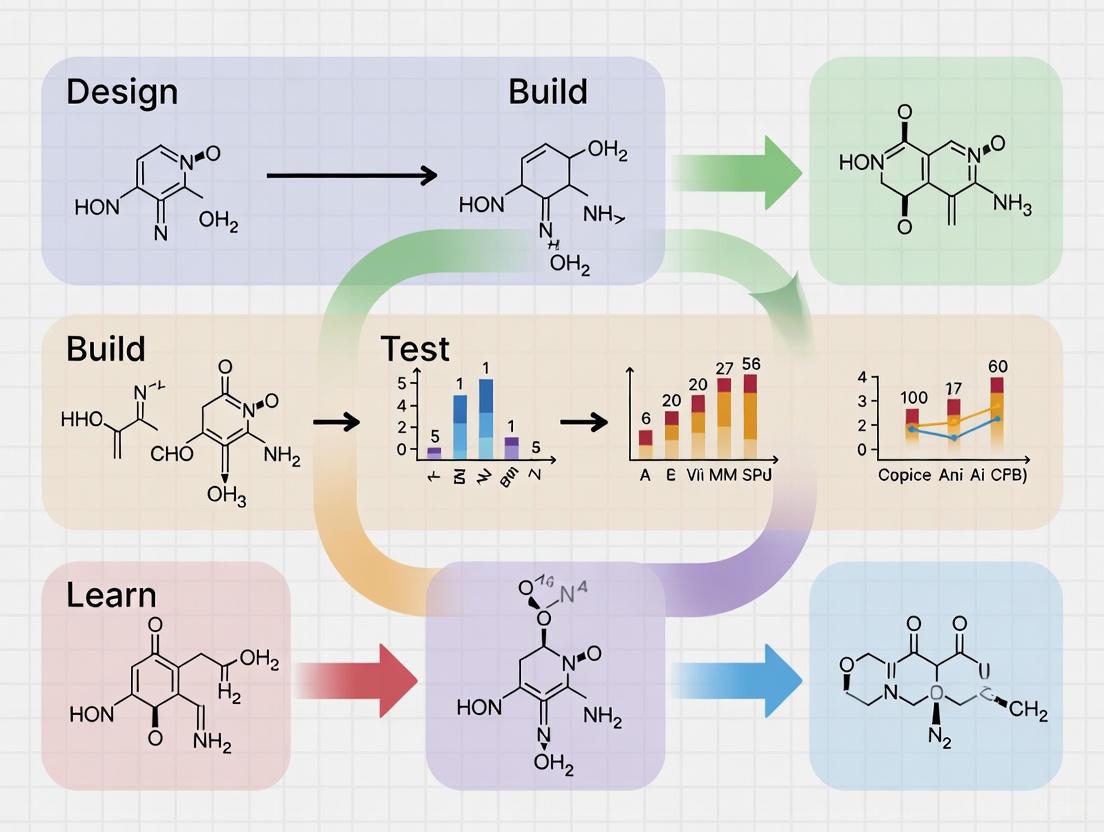

A recent study demonstrating the development of a dopamine production strain in E. coli provides a clear example of a knowledge-driven DBTL cycle in metabolic engineering [8]. The following diagram outlines the core workflow of an iterative DBTL cycle, as implemented in such studies.

Diagram 1: The iterative DBTL cycle for metabolic engineering.

Detailed Experimental Protocol

The successful development of a high-yield dopamine production strain, achieving 69.03 ± 1.2 mg/L, was accomplished through the following methodology [8]:

- Host Strain Engineering: The E. coli production host (FUS4.T2) was first engineered for high-level production of the dopamine precursor, L-tyrosine. This involved genomic modifications that deplete the transcriptional dual regulator TyrR (the L-tyrosine repressor) and a mutation in tyrA that removes feedback inhibition in the L-tyrosine pathway [8].

- Pathway Design: A synthetic pathway was constructed by introducing two key genes: the native E. coli gene encoding 4-hydroxyphenylacetate 3-monooxygenase (HpaBC), which converts L-tyrosine to L-DOPA, and the L-DOPA decarboxylase gene (Ddc) from Pseudomonas putida, which catalyzes the formation of dopamine from L-DOPA [8].

- In Vitro Prototyping (Learn-first approach): Before in vivo DBTL cycling, the relative expression levels of HpaBC and Ddc were investigated in vitro using a crude cell lysate system. This knowledge-driven step helped assess enzyme compatibility and inform the initial design for the in vivo environment [8].

- In Vivo Fine-Tuning via RBS Engineering: The learning from the in vitro tests was translated into the in vivo strain through high-throughput ribosome binding site (RBS) engineering. A library of RBS sequences with modulated Shine-Dalgarno (SD) sequences was constructed to precisely control the translation initiation rate (TIR) and fine-tune the expression levels of HpaBC and Ddc without altering the secondary structure of the mRNA [8].

- Cultivation and Analysis: Engineered strains were cultivated in a defined minimal medium with controlled carbon sources. Dopamine production was analyzed using high-performance liquid chromatography (HPLC) to quantify titers [8].
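The RBS-engineering step in the protocol above amounts to enumerating variants of the Shine-Dalgarno (SD) region while holding the flanking sequence fixed. A minimal sketch of that enumeration is shown below. The degenerate pattern, the flanking sequences, and the function names are hypothetical placeholders; the study's actual library design is not reproduced here.

```python
from itertools import product

# Standard IUPAC degenerate nucleotide codes.
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT",
    "K": "GT", "M": "AC", "B": "CGT", "D": "AGT",
    "H": "ACT", "V": "ACG", "N": "ACGT",
}


def expand_degenerate(pattern):
    """Expand a degenerate pattern into every concrete sequence it encodes."""
    pools = [IUPAC[base] for base in pattern.upper()]
    return ["".join(combo) for combo in product(*pools)]


def build_rbs_library(upstream, sd_pattern, spacer, start_codon="ATG"):
    """Return one record per SD variant, with the flanking context unchanged."""
    library = []
    for sd in expand_degenerate(sd_pattern):
        library.append({
            "sd": sd,
            "sequence": upstream + sd + spacer + start_codon,
        })
    return library


if __name__ == "__main__":
    # Hypothetical inputs: a 6-nt SD pattern with two degenerate positions.
    for variant in build_rbs_library("TTTAAG", "AGGRSG", "AAACAT"):
        print(variant["sd"], variant["sequence"])
```

Because only the SD positions vary, library size grows as the product of the degeneracies at each position, which keeps a small design tractable for plate-based screening.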

Table 1: Key Research Reagent Solutions for Microbial Metabolic Engineering

| Reagent / Material | Function in Experiment |
|---|---|
| Minimal Medium (e.g., defined glucose medium) [8] | Provides precise nutrients for microbial growth and product formation, enabling accurate metabolic flux analysis. |
| Antibiotics (e.g., Ampicillin, Kanamycin) [8] | Maintains selective pressure to ensure plasmid retention in the production host throughout cultivation. |
| Inducers (e.g., IPTG) [8] | Triggers the expression of genes in inducible genetic circuits, allowing controlled timing of metabolic pathway activation. |
| DNA Assembly Kit (e.g., Gibson Assembly) [7] | Enables seamless and high-efficiency assembly of multiple DNA fragments into a functional plasmid vector. |
| RBS Library [8] | A collection of DNA sequences that allow for fine-tuning of gene expression levels without changing the coding sequence. |
| Cell-Free System (crude cell lysate) [8] [4] | Allows for rapid prototyping and testing of enzyme pathways without the constraints of a living cell. |

Advanced Topics and Future Directions

The Role of Automation and Biofoundries

The integration of automation throughout the DBTL cycle has given rise to industrialized platforms known as biofoundries [2] [6]. These facilities integrate automated equipment and software to execute high-throughput DBTL cycles with minimal manual intervention, dramatically increasing the speed and scale of biological engineering [9] [6]. Automated biofoundries address key limitations of artisanal research by improving consistency, reducing human error, and allowing researchers to focus on intellectual tasks [6].

Machine Learning and the LDBT Paradigm

Machine learning is transforming the DBTL cycle, particularly the Learn phase. ML algorithms can analyze vast experimental datasets to uncover complex genotype-phenotype relationships and predict optimal designs [5] [7] [2]. This has led to a proposal for a reordered "LDBT" cycle (Learn-Design-Build-Test), where learning from large datasets or pre-trained models precedes design [4]. For instance, zero-shot predictions from protein language models (e.g., ESM) and structure-based design tools (e.g., ProteinMPNN) can generate functional protein designs without any initial experimental data for that specific protein, potentially collapsing multiple DBTL cycles into a single turn [4].
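To make "zero-shot" concrete, one scoring rule commonly used with masked protein language models ranks a variant by the log-likelihood ratio between mutant and wild-type residues at the mutated positions. The notation below is an assumption introduced for illustration and is not taken from the cited work: $M$ is the set of mutated positions, $x^{\mathrm{wt}}$ and $x^{\mathrm{mt}}$ are the wild-type and mutant sequences, and $x_{\setminus M}$ is the sequence with the positions in $M$ masked.

```latex
\[
  s\left(x^{\mathrm{mt}}\right)
  = \sum_{i \in M}
    \left[
      \log p\left(x_i = x_i^{\mathrm{mt}} \mid x_{\setminus M}\right)
      - \log p\left(x_i = x_i^{\mathrm{wt}} \mid x_{\setminus M}\right)
    \right]
\]
```

A positive score means the model considers the mutant residues more plausible than the wild-type ones in that context. No measurements of the target protein are needed to compute it, which is what allows learning to precede design.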

Table 2: Comparison of Traditional DBTL and Emerging LDBT Approaches

| Aspect | Traditional DBTL Cycle | LDBT & ML-Augmented Cycle |
|---|---|---|
| Learning Basis | Data from previous cycle's Build-Test phases [5]. | Pre-trained models on megascale datasets; zero-shot prediction [4]. |
| Primary Bottleneck | Build-Test phases are slow and resource-intensive [6]. | Data quality and quantity for training robust models [2] [4]. |
| Iteration Speed | Multiple cycles (often many) required [4]. | Potential for single-cycle success; much faster iteration [4]. |
| Predictive Power | Limited, often relies on trial-and-error [2]. | High, enabled by pattern recognition in high-dimensional data [5] [4]. |

Computational and Modeling Frameworks

Computational tools are vital for managing the complexity of biological design. Kinetic models, constraint-based models like Flux Balance Analysis (FBA), and whole-cell models provide a mechanistic framework to simulate pathway behavior before embarking on costly experiments [5] [10]. These models can be used to in silico test machine learning methods and DBTL strategies, providing a "ground truth" that is difficult to obtain with real-world experiments due to cost and time constraints [5]. Software platforms now offer end-to-end support for the entire DBTL cycle, from design and inventory management to data analysis and machine learning [7].
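For readers unfamiliar with constraint-based modeling, the standard FBA problem can be written as a linear program. The symbols are chosen here for illustration: $S$ is the stoichiometric matrix (metabolites by reactions), $v$ the vector of reaction fluxes, $c$ the objective weights (for example, selecting a biomass or product-export reaction), and $v^{\min}$, $v^{\max}$ the flux bounds.

```latex
\[
  \max_{v} \; c^{\top} v
  \quad \text{subject to} \quad
  S v = 0,
  \qquad
  v^{\min} \le v \le v^{\max}
\]
```

The equality constraint encodes the steady-state assumption that intracellular metabolites do not accumulate, while the bounds capture reaction reversibility and uptake limits. Simulated designs can be compared by changing the bounds (for example, setting a knocked-out reaction's bounds to zero) and re-solving.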

The Design-Build-Test-Learn (DBTL) cycle represents a fundamental shift in metabolic engineering, moving from traditional linear approaches to an iterative, data-driven framework for microbial strain development. This paradigm leverages advancements in automation, artificial intelligence (AI), and synthetic biology to systematically optimize complex biological systems. By continuously refining hypotheses with experimental data, the DBTL cycle enables researchers to navigate vast design spaces efficiently, accelerating the development of strains for sustainable bioproduction. This whitepaper examines the core principles of the DBTL framework, its implementation in modern biofoundries, and its impact through recent case studies in enzyme and metabolite engineering.

The DBTL Cycle: Core Components and Workflow

The DBTL cycle is an iterative methodology for engineering biological systems. Its power lies in the continuous refinement of designs based on data and learning from previous iterations.

Design

In the Design phase, researchers specify genetic modifications using computational tools and prior knowledge. This involves selecting DNA components (e.g., promoters, ribosomal binding sites (RBS), coding sequences) to create genetic designs predicted to improve strain performance [5]. Modern approaches incorporate machine learning (ML) and large language models (LLMs) to propose optimized enzyme variants or pathway configurations [11].

Build

The Build phase translates digital designs into physical biological entities. This involves DNA synthesis, assembly, and introduction into host organisms. Automation is crucial here, with robotic platforms enabling high-throughput strain construction. For example, an automated pipeline for Saccharomyces cerevisiae achieved a throughput of ~2,000 transformations per week, a 10-fold increase over manual methods [12].

Test

In the Test phase, engineered strains are cultured and evaluated for performance metrics such as titer, yield, and rate (TYR), where rate refers to productivity [5]. This phase often employs high-throughput analytical techniques like liquid chromatography-mass spectrometry (LC-MS) for rapid quantification of target molecules [12].
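The three metrics are simple ratios, and a short sketch makes the definitions explicit. The function and field names, the units, and the example numbers are assumptions for illustration; they are not data from the cited studies.

```python
from typing import NamedTuple


class Metrics(NamedTuple):
    titer_g_per_l: float        # product concentration at harvest
    yield_g_per_g: float        # product formed per substrate consumed
    rate_g_per_l_h: float       # volumetric productivity


def titer_yield_rate(product_g, volume_l, substrate_consumed_g, time_h):
    """Compute titer, yield, and volumetric productivity for one cultivation."""
    if min(volume_l, substrate_consumed_g, time_h) <= 0:
        raise ValueError("volume, substrate, and time must be positive")
    titer = product_g / volume_l
    return Metrics(
        titer_g_per_l=titer,
        yield_g_per_g=product_g / substrate_consumed_g,
        rate_g_per_l_h=titer / time_h,
    )


if __name__ == "__main__":
    # Made-up example: 2 g product in 0.5 L from 10 g substrate over 24 h.
    print(titer_yield_rate(2.0, 0.5, 10.0, 24.0))
```

Reporting all three together matters because a strain can improve on one metric while regressing on another, for example reaching a higher titer only by consuming more substrate.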

Learn

The Learn phase involves analyzing experimental data to extract insights. Machine learning models are trained on the collected data to identify patterns, predict the performance of untested designs, and recommend improved designs for the next cycle [5] [11]. This transforms raw data into actionable knowledge, closing the loop.
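One way to see how the four phases close the loop is a toy simulation. In the sketch below, a design is a pair of expression levels for two pathway genes, Build and Test are replaced by a made-up noisy response function, and Learn fits a simple additive model that ranks untested designs for the next cycle. The response surface, the additive model, and the greedy batch selection are all illustrative assumptions; the cited studies use real measurements and richer models.

```python
import random
from itertools import product
from statistics import mean

LEVELS = range(5)                                # 5 expression levels per gene
DESIGN_SPACE = list(product(LEVELS, LEVELS))     # 25 candidate designs


def simulated_test(design, rng):
    """Stand-in for Build + Test: an invented response surface plus noise."""
    a, b = design
    return 10 - (a - 3) ** 2 - (b - 1) ** 2 + rng.gauss(0, 0.3)


def learn(results):
    """Fit an additive model: mean deviation per level at each position."""
    overall = mean(list(results.values()))
    effects = []
    for pos in range(2):
        per_level = {}
        for lvl in LEVELS:
            vals = [y for d, y in results.items() if d[pos] == lvl]
            per_level[lvl] = (mean(vals) - overall) if vals else 0.0
        effects.append(per_level)
    return overall, effects


def predict(model, design):
    overall, effects = model
    return overall + sum(effects[i][lvl] for i, lvl in enumerate(design))


def run_dbtl(cycles=4, batch=5, seed=0):
    rng = random.Random(seed)
    results = {}
    batch_designs = rng.sample(DESIGN_SPACE, batch)    # Design: random first batch
    for _ in range(cycles):
        for design in batch_designs:                   # Build + Test
            results[design] = simulated_test(design, rng)
        model = learn(results)                         # Learn
        untested = [d for d in DESIGN_SPACE if d not in results]
        untested.sort(key=lambda d: predict(model, d), reverse=True)
        batch_designs = untested[:batch]               # Design: next batch
    best = max(results, key=results.get)
    return best, results[best], len(results)


if __name__ == "__main__":
    print(run_dbtl())
```

The selection rule here is purely exploitative. Practical recommendation strategies usually reserve part of each batch for exploration so that an early, misleading model does not lock the search into a poor region of the design space.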

Quantitative Advancements in DBTL Cycle Performance

The implementation of automated, AI-powered DBTL cycles has led to dramatic improvements in the speed and efficiency of strain and enzyme development. The following table summarizes key performance metrics from recent studies.

Table 1: Performance Metrics of Advanced DBTL Platforms

| Engineering Target | Platform/Strategy | Timeframe | Improvement | Key Enabling Technology | Citation |
|---|---|---|---|---|---|
| Halide Methyltransferase (AtHMT) | Autonomous AI-powered platform | 4 weeks | 16-fold improvement in ethyltransferase activity | Protein LLM (ESM-2) & iBioFAB automation | [11] |
| Phytase (YmPhytase) | Autonomous AI-powered platform | 4 weeks | 26-fold improvement in activity at neutral pH | Epistasis model (EVmutation) & robotic screening | [11] |
| Yeast Strain Construction | Automated robotic pipeline | 1 week | 2,000 transformations/week (10x manual throughput) | Hamilton VANTAGE integrated system | [12] |
| Dopamine Production | Knowledge-driven DBTL cycle | N/A | 2.6 to 6.6-fold improvement over state-of-the-art | In vitro lysate studies & high-throughput RBS engineering | [13] |

Case Study: Knowledge-Driven DBTL for Dopamine Production in E. coli

A recent study demonstrated the power of a knowledge-driven DBTL cycle to optimize dopamine production in E. coli, achieving a final titer of 69.03 ± 1.2 mg/L, a 2.6 to 6.6-fold improvement over previous state-of-the-art methods [13]. The workflow integrated upstream in vitro experiments to inform the initial in vivo design, accelerating the learning process.

Experimental Protocol and Workflow

The following diagram outlines the specific experimental workflow used in the knowledge-driven DBTL cycle for dopamine production.

Key Methodological Details:

- Host Strain Engineering: The base E. coli production strain (FUS4.T2) was engineered for high L-tyrosine production by depleting the transcriptional repressor TyrR and mutating chorismate mutase/prephenate dehydrogenase (TyrA) to relieve its feedback inhibition [13].

- Pathway Enzymes: The dopamine pathway consisted of:

  - HpaBC (4-hydroxyphenylacetate 3-monooxygenase, native to E. coli): Converts L-tyrosine to L-DOPA.

  - Ddc (L-DOPA decarboxylase, from Pseudomonas putida): Converts L-DOPA to dopamine [13].

- Fine-Tuning Mechanism: Instead of full pathway re-design, RBS engineering was used to precisely modulate the translation initiation rates of hpaBC and ddc. The SD sequence was altered without interfering with secondary structures to generate a library of expression strengths [13].

- Analytical Method: Dopamine titers were quantified using high-performance liquid chromatography (HPLC) or LC-MS, adapted for high-throughput screening of the strain library [13].

The Scientist's Toolkit: Essential Research Reagents and Solutions

The following table catalogs key reagents, molecular tools, and hardware essential for implementing advanced DBTL cycles in metabolic engineering.

Table 2: Essential Research Reagents and Solutions for DBTL Cycles

| Reagent / Tool / Solution | Function in DBTL Cycle | Specific Example / Note |
|---|---|---|
| Ribosome Binding Site (RBS) Libraries | Fine-tunes translation initiation rate and enzyme expression levels in a pathway. | Modulating the Shine-Dalgarno sequence; tools like UTR Designer can assist [13]. |
| Promoter Libraries | Provides a range of transcription strengths for pathway gene regulation. | Inducible (e.g., pGAL1 in yeast) or constitutive promoters of varying strengths [12]. |
| Cell-Free Protein Synthesis (CFPS) Systems | Enables rapid in vitro testing of enzyme expression and pathway function, bypassing cellular constraints. | Used for upstream, knowledge-driven design before in vivo strain construction [13]. |
| Automated Robotic Platforms | Executes high-throughput, reproducible pipetting, transformations, and assays in the Build and Test phases. | Hamilton Microlab VANTAGE; integrated with off-deck hardware (thermal cyclers, sealers) [12]. |
| Liquid Chromatography-Mass Spectrometry (LC-MS) | Precisely quantifies target metabolite titers and pathway intermediates from cultured strains. | Critical for high-throughput screening in the Test phase; methods can be optimized for speed [12]. |
| Machine Learning (ML) Models | Learns from experimental data to predict high-performing designs, guiding the Learn and Design phases. | Gradient boosting and random forest models perform well in low-data regimes [5] [11]. |

The Future: Autonomous DBTL Cycles and AI Integration

The next frontier of DBTL cycles is full autonomy, integrating AI and robotics to form a closed-loop system. A generalized platform for AI-powered autonomous enzyme engineering demonstrated this capability, using protein LLMs and epistasis models for design, a biofoundry (iBioFAB) for build and test, and ML to learn and propose subsequent variants [11]. This platform required only an input protein sequence and a fitness function, engineering enzymes with significant activity improvements in just four weeks [11]. Such systems minimize human intervention and the bias it can introduce, dramatically accelerating the pace of biological discovery and optimization.

The transition from linear to iterative DBTL cycles has fundamentally revolutionized strain development. By embracing a framework of continuous learning powered by automation and artificial intelligence, metabolic engineers can now systematically tackle the complexity of biological systems. This approach drastically reduces development timelines and experimental costs while achieving performance improvements that were previously unattainable. As DBTL methodologies become more accessible and autonomous, they promise to be the cornerstone of sustainable biomanufacturing for chemicals, materials, and therapeutics.

The Design-Build-Test-Learn (DBTL) cycle has emerged as the fundamental framework for modern biological engineering, serving as the critical conduit through which metabolic engineering, systems biology, and synthetic biology converge. This iterative process provides the structural methodology for optimizing biological systems toward specific production goals, from sustainable biofuels to pharmaceutical compounds [14] [13]. Systems metabolic engineering represents the integration of systems biology's analytical approaches with metabolic engineering's production objectives, enhanced by synthetic biology's precise genetic toolset. Within this integrated framework, the DBTL cycle functions as the operational engine that drives continuous improvement and innovation.

The power of the DBTL methodology lies in its recursive nature, where each iteration refines understanding and enhances system performance. As exemplified in microbial co-culture systems, this approach enables researchers to compartmentalize complex biochemical tasks across different microbial species, achieving notable successes such as a 40% increase in bioethanol yield compared to monocultures by segregating sugar fermentation and carbon fixation pathways [15]. Similarly, the DBTL framework has facilitated the optimization of microbial production strains for diverse compounds, including dopamine, where a knowledge-driven DBTL approach resulted in a 2.6 to 6.6-fold improvement over previous production methods [13].

This technical guide examines the core principles, methodologies, and applications of the DBTL cycle within integrated systems metabolic engineering, providing researchers with both theoretical foundations and practical protocols for implementing this powerful framework.

The Core Principles of the DBTL Cycle

The DBTL cycle represents a systematic approach to biological engineering that transforms the design and optimization of biological systems into a structured, iterative process. Each phase of the cycle contributes distinct capabilities that collectively enable precise engineering of metabolic pathways and cellular functions.

Design Phase

The Design phase initiates the DBTL cycle by establishing a clear objective and developing a rational plan based on specific hypotheses or prior knowledge. This stage leverages computational tools, domain expertise, and biological insight to specify the genetic components and systems required to achieve the desired metabolic function [16]. In metabolic engineering applications, this typically involves selecting appropriate enzymes, designing expression cassettes with suitable promoters and ribosome binding sites (RBS), and planning assembly strategies. The Design phase has been revolutionized by advances in machine learning and modeling, with protein language models (e.g., ESM, ProGen) and structure-based design tools (e.g., ProteinMPNN) enabling zero-shot prediction of protein structures and functions, thereby accelerating the creation of novel biocatalysts [4].

Build Phase

The Build phase translates theoretical designs into biological reality through molecular biology techniques. This involves DNA synthesis, plasmid assembly, and transformation of engineered constructs into host organisms [16]. Recent advances have focused on automating and scaling the Build process through biofoundries and robotic integration. For example, automated strain construction pipelines for Saccharomyces cerevisiae can achieve up to 2,000 transformations per week—a 10-fold increase over manual methods [12]. These automated workflows employ standardized protocols, such as the lithium acetate/ssDNA/PEG transformation method adapted to a 96-well format, with integrated robotic arms managing liquid handling, heat shock, and plating procedures [12].
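Moving a transformation protocol to a 96-well format means every construct needs a plate and well address that the liquid handler and downstream analysis can share. The sketch below shows one simple way to generate such a map. The row-by-row fill order, the construct names, and the function names are assumptions for illustration and do not describe the cited pipeline's software.

```python
import math
from string import ascii_uppercase

ROWS, COLS = 8, 12                     # standard 96-well plate geometry
WELLS_PER_PLATE = ROWS * COLS


def well_name(index):
    """Map a 0-based position (0..95) to a well label, filling row by row."""
    if not 0 <= index < WELLS_PER_PLATE:
        raise ValueError("index must be in 0..95")
    row, col = divmod(index, COLS)
    return f"{ascii_uppercase[row]}{col + 1}"


def plates_needed(n_constructs):
    """Number of 96-well plates required for n constructs."""
    return math.ceil(n_constructs / WELLS_PER_PLATE)


def plate_map(constructs):
    """Return (plate_number, well, construct) tuples in fill order."""
    layout = []
    for i, construct in enumerate(constructs):
        plate, position = divmod(i, WELLS_PER_PLATE)
        layout.append((plate + 1, well_name(position), construct))
    return layout


if __name__ == "__main__":
    names = [f"construct_{i:04d}" for i in range(100)]   # hypothetical IDs
    for entry in plate_map(names)[94:98]:
        print(entry)
    print(plates_needed(2000), "plates for 2,000 transformations")
```

At the quoted throughput of 2,000 transformations per week, this layout corresponds to 21 plates, ignoring any wells reserved for controls.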

Test Phase

The Test phase focuses on quantitative characterization of the engineered system's performance through various analytical methods. This includes measuring metabolite production, assessing growth characteristics, and evaluating functional outputs. Advanced high-throughput screening methods have dramatically accelerated this phase, with approaches ranging from simple absorbance measurements for compounds like flaviolin to sophisticated LC-MS analysis for verifying verazine production in engineered yeast strains [17] [12]. Cell-free expression systems have emerged as particularly valuable tools for rapid testing, enabling protein synthesis without time-intensive cloning steps and facilitating high-throughput sequence-to-function mapping of protein variants [4].

Learn Phase

The Learn phase represents the critical knowledge extraction step where experimental data is analyzed to generate insights about system behavior. This phase determines whether the design performed as expected and identifies causes of success or failure [16]. Modern Learn phases increasingly incorporate machine learning and statistical analysis to identify patterns and relationships within complex datasets. For example, Explainable Artificial Intelligence techniques have been employed to identify key media components influencing production, revealing unexpectedly that common salt (NaCl) was the most important factor for flaviolin production in Pseudomonas putida [17]. The knowledge generated in this phase directly informs the subsequent Design phase, creating a virtuous cycle of improvement.
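The idea of ranking factors by their influence on a model's predictions can be illustrated with permutation importance, a simple model-agnostic technique. It is used here as a stand-in and is not necessarily the explainability method applied in the cited study. The model, its coefficients, and the data below are entirely synthetic; only the feature names echo the media-optimization setting.

```python
import random


def mse(model, X, y):
    """Mean squared error of model predictions over the dataset."""
    return sum((model(row) - target) ** 2 for row, target in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats=20, seed=0):
    """Average increase in MSE when one feature column is shuffled."""
    rng = random.Random(seed)
    baseline = mse(model, X, y)
    importances = []
    for j in range(len(X[0])):
        increases = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            increases.append(mse(model, X_perm, y) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances


FEATURES = ["NaCl", "glucose", "phosphate"]


def toy_model(row):
    """Invented response: strong NaCl effect, weak glucose effect, no phosphate effect."""
    return 5.0 * row[0] + 1.0 * row[1] + 0.0 * row[2]


_rng = random.Random(1)
X = [[_rng.random() for _ in FEATURES] for _ in range(30)]   # synthetic media recipes
y = [toy_model(row) for row in X]                            # synthetic responses
scores = permutation_importance(toy_model, X, y)
ranking = sorted(zip(FEATURES, scores), key=lambda pair: pair[1], reverse=True)

if __name__ == "__main__":
    for name, score in ranking:
        print(f"{name}: {score:.3f}")
```

Shuffling a feature the model ignores leaves the error unchanged, so its importance is zero, while shuffling an influential feature degrades predictions in proportion to its effect. With a model trained on real screening data, the same ranking step is what surfaces unexpected drivers of production.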

Table 1: Key Tools and Technologies Enhancing the DBTL Cycle

| DBTL Phase | Technology | Application | Impact |
|---|---|---|---|
| Design | Protein Language Models (ESM, ProGen) | Zero-shot prediction of protein structure and function | Accelerated enzyme design without extensive experimental screening [4] |
| Design | UTR Designer | RBS engineering for translation optimization | Precise fine-tuning of gene expression in synthetic pathways [13] |
| Build | Automated Robotic Workstations | High-throughput strain construction | 2,000 yeast transformations/week vs. 200 manually [12] |
| Build | Cell-Free Expression Systems | Rapid protein synthesis without cloning | >1 g/L protein in <4 hours; toxic product expression [4] |
| Test | Droplet Microfluidics | Ultra-high-throughput screening | >100,000 picoliter-scale reactions screened [4] |
| Test | LC-MS Methods | Metabolite quantification | Rapid detection (19 min for verazine vs. 50 min previously) [12] |
| Learn | Explainable AI | Identification of critical production factors | Revealed NaCl as key factor in flaviolin production [17] |
| Learn | Knowledge-Driven DBTL | In vitro prototyping before in vivo implementation | 2.6 to 6.6-fold improvement in dopamine production [13] |

Quantitative Applications and Outcomes

The implementation of integrated DBTL cycles has yielded substantial improvements in bioproduction across multiple domains. The following table summarizes key quantitative achievements demonstrating the efficacy of this approach.

Table 2: Notable DBTL Applications and Performance Metrics

| Application Area | Host Organism | Engineering Strategy | Outcome | Reference |
|---|---|---|---|---|
| Next-Gen Biofuels | Engineered Clostridium spp. | CRISPR-Cas genome editing; de novo pathway engineering | 3-fold increase in butanol yield; 91% biodiesel conversion efficiency | [14] |
| Dopamine Production | E. coli FUS4.T2 | Knowledge-driven DBTL; RBS engineering | 69.03 ± 1.2 mg/L (34.34 ± 0.59 mg/g biomass); 2.6-6.6-fold improvement | [13] |
| Flaviolin Production | Pseudomonas putida KT2440 | Machine learning-led media optimization | 60-70% increase in titer; 350% increase in process yield | [17] |
| Bioethanol Production | S. cerevisiae & C. autoethanogenum co-culture | Modular division of labor in co-culture system | 40% increase in yield compared to monoculture | [15] |
| Artemisinin Precursor | S. cerevisiae & P. pastoris co-culture | Pathway compartmentalization | 2.8 g/L titer (15-fold improvement over monoculture) | [15] |
| Verazine Production | Saccharomyces cerevisiae | Automated library screening of 32 genes | 2.0 to 5-fold increase with top-performing genes | [12] |

DBTL Cycle Diagram

Enhanced DBTL Frameworks: From Knowledge-Driven to LDBT

Traditional DBTL cycles typically begin with limited prior knowledge, requiring multiple iterations to achieve optimal performance. Recent advancements have introduced modified frameworks that accelerate this process through strategic incorporation of upfront knowledge and computational power.

Knowledge-Driven DBTL

The knowledge-driven DBTL cycle incorporates upstream in vitro investigation before embarking on full in vivo engineering. This approach was successfully implemented for dopamine production in E. coli, where cell lysate studies were first conducted to assess enzyme expression levels and identify potential bottlenecks [13]. The resulting data informed the subsequent in vivo RBS engineering strategy, enabling precise fine-tuning of the dopamine pathway. This knowledge-forward approach demonstrated that GC content in the Shine-Dalgarno sequence significantly impacts RBS strength, leading to the development of a high-efficiency dopamine production strain with dramatically reduced optimization cycles [13].

LDBT Paradigm

A more radical restructuring of the traditional cycle has been proposed as LDBT (Learn-Design-Build-Test), where machine learning precedes design based on large biological datasets [4]. This paradigm leverages the predictive power of pre-trained models to generate functional designs that require minimal subsequent iteration. The LDBT framework capitalizes on zero-shot predictors capable of designing proteins with desired functions without additional training, potentially transitioning synthetic biology toward a "Design-Build-Work" model more akin to established engineering disciplines [4].

Enhanced DBTL Frameworks Diagram

Experimental Protocols and Methodologies

Knowledge-Driven DBTL for Dopamine Production

The successful application of knowledge-driven DBTL for dopamine production in E. coli exemplifies the integrated experimental approach [13]:

In Vitro Investigation Phase:

- Prepare crude cell lysate systems from production host (E. coli FUS4.T2)

- Set up reaction buffer containing 0.2 mM FeCl₂, 50 μM vitamin B₆, and 1 mM L-tyrosine or 5 mM L-DOPA in 50 mM phosphate buffer (pH 7)

- Express heterologous genes hpaBC (encoding 4-hydroxyphenylacetate 3-monooxygenase) and ddc (encoding L-DOPA decarboxylase) using pJNTN plasmid system

- Quantify enzyme activities and pathway flux to identify rate-limiting steps
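
The reaction buffer recipe above can be converted into pipetting volumes with the dilution relation C1·V1 = C2·V2. The following is a minimal sketch, not the protocol from [13]: the stock concentrations and the 1 mL reaction volume are hypothetical values chosen for illustration, and the "remainder" entry simply stands for whatever fills the rest of the volume (water, lysate, or other additions).

```python
# Final concentrations taken from the protocol text, in mM (50 uM vitamin B6 = 0.05 mM).
FINAL_MM = {"FeCl2": 0.2, "vitamin_B6": 0.05, "L-tyrosine": 1.0, "phosphate": 50.0}

# ASSUMPTION: stock concentrations in mM are illustrative only, not from the source.
STOCK_MM = {"FeCl2": 20.0, "vitamin_B6": 5.0, "L-tyrosine": 2.0, "phosphate": 500.0}


def stock_volumes_ul(final_mm, stock_mm, total_ul):
    """Return microlitres of each stock for a reaction of total_ul, using C1*V1 = C2*V2."""
    volumes = {}
    for name, c_final in final_mm.items():
        c_stock = stock_mm[name]
        if c_stock < c_final:
            raise ValueError(f"stock of {name} is more dilute than the target")
        volumes[name] = c_final * total_ul / c_stock
    remainder = total_ul - sum(volumes.values())
    if remainder < 0:
        raise ValueError("stocks are too dilute to fit in the reaction volume")
    volumes["remainder"] = remainder  # water, lysate, or other additions
    return volumes


if __name__ == "__main__":
    for component, volume in stock_volumes_ul(FINAL_MM, STOCK_MM, 1000.0).items():
        print(f"{component}: {volume:.1f} uL")
```

The same function covers the L-DOPA variant of the buffer by swapping the substrate entry in both dictionaries.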

In Vivo Implementation:

- Engineer the production host by depleting the transcriptional dual regulator TyrR and mutating tyrA to relieve feedback inhibition of chorismate mutase/prephenate dehydrogenase, thereby increasing L-tyrosine production

- Implement RBS library for fine-tuning expression of hpaBC and ddc genes

- Use UTR Designer for modulating RBS sequences, focusing on Shine-Dalgarno sequence optimization

- Cultivate engineered strains in minimal medium containing 20 g/L glucose, 10% 2xTY medium, and appropriate supplements

- Analyze dopamine production using HPLC with detection at 280 nm

Automated Strain Construction Protocol

The automated workflow for high-throughput yeast strain construction demonstrates the integration of robotics into the Build phase [12]:

Automated Transformation Protocol:

- Program Hamilton Microlab VANTAGE with Venus software for liquid handling

- Prepare competent Saccharomyces cerevisiae cells in 96-well format

- Set up transformation mixture with optimized lithium acetate/ssDNA/PEG ratios

- Execute heat shock using integrated thermal cycler (Inheco ODTC)

- Perform washing steps with selective media

- Plate transformations using automated colony picker (QPix 460)

- Incubate plates at 30°C for 2-3 days

- Pick colonies for high-throughput culturing in 96-deep-well plates

Analytical Validation:

- Develop chemical extraction method using Zymolyase-mediated cell lysis

- Implement organic solvent extraction for metabolite recovery

- Establish rapid LC-MS method for verazine quantification (19-minute runtime)

- Normalize titers to cell density for production comparison
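
The last step, normalizing titers to cell density, can be sketched as below. This is an illustration under stated assumptions: the text does not say how cell density is measured, so OD600 is assumed here, and the strain names and values are hypothetical placeholders rather than data from [12].

```python
def normalize_titer(titer_mg_per_l, od600):
    """Return titer per unit of optical density (mg/L per OD600 unit)."""
    if od600 <= 0:
        raise ValueError("cell density must be positive")
    return titer_mg_per_l / od600


def rank_strains(measurements):
    """Sort strain names by normalized titer, best first.

    measurements maps strain name -> (titer in mg/L, OD600).
    """
    normalized = {
        name: normalize_titer(titer, od) for name, (titer, od) in measurements.items()
    }
    return sorted(normalized, key=normalized.get, reverse=True)


if __name__ == "__main__":
    # HYPOTHETICAL readings used only to show the calculation.
    demo = {"strain_A": (10.0, 4.0), "strain_B": (9.0, 2.0)}
    print(rank_strains(demo))
```

Normalization matters when strains grow to different densities: in the placeholder data, the strain with the lower raw titer ranks first once its lower cell density is taken into account.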

Machine Learning-Led Media Optimization

The integration of machine learning into media optimization represents a powerful application of the Learn phase [17]:

Semi-Automated Pipeline:

- Utilize automated liquid handler to combine stock solutions for 15 media components

- Dispense media designs in triplicate/quadruplicate in 48-well plates

- Inoculate with engineered P. putida strain

- Cultivate in automated cultivation platform (BioLector) for 48 hours

- Measure flaviolin production via absorbance at 340 nm

- Store production data and media designs in Experiment Data Depot (EDD)

- Employ Automated Recommendation Tool (ART) for media design recommendation

- Iterate through multiple DBTL cycles with algorithm-guided experiments
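
The replicate layout in the pipeline above determines how many media designs fit on one plate: 16 in triplicate or 12 in quadruplicate on 48 wells. The sketch below assigns designs to wells under two assumptions that are not from the source: the plate is addressed as 6 rows by 8 columns, and replicates occupy consecutive wells (a real run may prefer randomized positions to limit edge effects). It does not touch EDD or ART, whose interfaces are not described here.

```python
from string import ascii_uppercase


def plate_wells(rows=6, cols=8):
    """Well names for a 48-well plate in row-major order (A1 ... F8)."""
    return [f"{ascii_uppercase[r]}{c + 1}" for r in range(rows) for c in range(cols)]


def assign_replicates(n_designs, replicates, wells):
    """Map each design index to a block of consecutive wells, one well per replicate."""
    needed = n_designs * replicates
    if needed > len(wells):
        raise ValueError(f"{needed} wells needed but only {len(wells)} available")
    return {
        design: wells[design * replicates:(design + 1) * replicates]
        for design in range(n_designs)
    }


if __name__ == "__main__":
    layout = assign_replicates(n_designs=16, replicates=3, wells=plate_wells())
    print(layout[0], layout[15])
```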

Explainable AI Analysis:

- Apply SHAP (SHapley Additive exPlanations) or similar methods to identify feature importance

- Validate key findings through controlled experiments

- Scale optimal conditions to bioreactor systems
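
To make the feature-importance step concrete, the sketch below computes exact Shapley values for a toy response function by averaging each feature's marginal contribution over all feature orderings. It illustrates the quantity that SHAP estimates and is not the SHAP library itself; the function `toy_model`, its three media components, and all numbers are hypothetical. Exact enumeration is only feasible for a handful of features, so a 15-component medium would require the approximations that dedicated tools provide.

```python
from itertools import permutations


def shapley_values(predict, x, baseline):
    """Exact Shapley attribution of predict(x) - predict(baseline) to each feature.

    Features not yet added to a coalition are held at their baseline value.
    """
    n = len(x)
    phi = [0.0] * n
    orderings = list(permutations(range(n)))
    for order in orderings:
        current = list(baseline)
        previous = predict(current)
        for i in order:
            current[i] = x[i]          # add feature i to the coalition
            value = predict(current)
            phi[i] += value - previous  # marginal contribution of feature i
            previous = value
    return [total / len(orderings) for total in phi]


def toy_model(media):
    """HYPOTHETICAL response surface with one interaction term (salt x iron)."""
    salt, glucose, iron = media
    return 2.0 * salt + 0.5 * glucose + salt * iron


if __name__ == "__main__":
    print(shapley_values(toy_model, x=[1.0, 2.0, 3.0], baseline=[0.0, 0.0, 0.0]))
```

The attributions sum to the difference between the prediction and the baseline prediction, and the interaction term is split equally between the two components involved.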

Essential Research Reagent Solutions

The successful implementation of DBTL cycles in systems metabolic engineering relies on specialized reagents and materials that enable precise genetic manipulation and analysis.

Table 3: Essential Research Reagents for DBTL Implementation

| Reagent/Material | Specification | Function in DBTL Cycle | Example Application |
|---|---|---|---|
| pSEVA261 Backbone | Medium-low copy number plasmid | Stable expression vector with reduced background signal | PFOA biosensor construction in E. coli [18] |
| pET Plasmid System | T7 expression system | High-level protein expression for in vitro testing | Dopamine pathway enzyme expression [13] |
| pESC-URA Plasmid | S. cerevisiae GAL1 promoter | Inducible expression in yeast | Verazine biosynthetic pathway [12] |
| Amicon Ultra Filters | 100k MWCO | Extracellular vesicle/exosome isolation | L. rhamnosus exosome isolation [16] |
| Hamilton Microlab VANTAGE | Robotic liquid handling | Automated strain construction | High-throughput yeast transformation [12] |
| LuxCDEAB Operon | Bioluminescence reporter | Biosensor signal generation | PFOA detection system [18] |
| Cell-Free Expression System | Crude lysate or purified | Rapid protein synthesis without cloning | In vitro pathway prototyping [4] |
| Zymolyase | Lytic enzyme preparation | Yeast cell wall digestion | Metabolite extraction from S. cerevisiae [12] |

Future Perspectives and Emerging Trends

The continued convergence of metabolic engineering, systems biology, and synthetic biology through the DBTL framework is driving several transformative trends. The integration of machine learning and artificial intelligence throughout the DBTL cycle is transitioning from enhancement to central function, with pre-trained models increasingly capable of zero-shot design of biological parts and systems [4]. This shift toward data-driven predictive biology promises to reduce iteration requirements and accelerate the engineering timeline.

Automation and biofoundries are expanding access to high-throughput capabilities, with standardized workflows and shared resources enabling broader implementation of automated DBTL cycles [12]. The development of modular, user-customizable interfaces for robotic systems makes these technologies increasingly accessible to research teams without specialized engineering expertise.

Cell-free systems continue to emerge as powerful platforms for rapid prototyping, particularly when combined with microfluidics for ultra-high-throughput screening [4]. These systems bypass cellular constraints and enable direct measurement of enzyme activities and pathway fluxes, providing critical data for the Learn phase that directly informs in vivo implementation.

Microbial co-cultures represent another frontier in metabolic engineering, enabling modular division of labor that addresses fundamental challenges in metabolic burden and incompatible pathway requirements [15]. Engineering effective consortia requires application of DBTL principles at the community level, with careful attention to population dynamics and cross-species interactions.

Finally, the expansion of systems thinking beyond cellular engineering to encompass broader impacts—including environmental, social, and healthcare systems—signals a maturation of the field [19]. This holistic approach recognizes that technological solutions must be integrated within broader contexts to achieve meaningful impact, particularly in applications involving healthcare delivery and sustainable biomanufacturing.

As these disciplines continue to converge through the structured framework of the DBTL cycle, the capacity to engineer biological systems for addressing global challenges in medicine, energy, and sustainability will continue to accelerate, ushering in a new era of biological design.

Metabolic engineering, defined as the use of genetic engineering to modify the metabolism of an organism, has undergone a radical transformation since its emergence in the early 1990s [20]. This field has evolved from initial efforts focused on modifying single enzymes to comprehensive systems-level approaches that integrate computational biology, synthetic biology, and high-throughput automation. The historical progression from traditional to advanced systems metabolic engineering represents a fundamental shift in how microbial cell factories are designed and optimized for industrial production, enabling the bio-based manufacturing of chemicals, materials, and fuels from renewable resources with unprecedented efficiency [21]. This evolution has been characterized by three distinct waves of innovation, each building upon the previous to address increasingly complex challenges in strain development.

The integration of the Design-Build-Test-Learn (DBTL) cycle has been particularly instrumental in advancing systems metabolic engineering. This iterative framework provides a systematic methodology for the discovery and optimization of biosynthetic pathways, allowing researchers to continuously refine microbial strains through successive rounds of computational design, genetic construction, performance testing, and data-driven learning [22]. The adoption of DBTL cycles, enhanced by automation and machine learning, has dramatically accelerated the development of efficient bioprocesses, reducing the time and resources required to achieve commercially viable production strains [5]. This article traces the historical progression of metabolic engineering through its three major waves of development, examines the core principles and implementation of the DBTL framework, and explores the advanced toolkits that define contemporary systems metabolic engineering.

The Three Waves of Metabolic Engineering Innovation

First Wave: Rational Pathway Engineering

The first wave of metabolic engineering, beginning in the 1990s, established the foundational principle that natural pathways could be enumerated and assessed for converting specific substrates to target products [23]. Early metabolic engineering efforts relied predominantly on rational approaches to pathway analysis and flux optimization, focusing on redirecting cellular metabolism toward desired products through sequential genetic modifications. A landmark example from this period was the overproduction of lysine in Corynebacterium glutamicum. Through metabolic flux analysis, researchers identified pyruvate carboxylase and aspartokinase as potential bottlenecks in the biosynthetic pathway. By overexpressing both enzymes simultaneously, they achieved a 150% increase in lysine productivity while maintaining the same growth rate as the control strain [23]. This approach demonstrated the power of targeted genetic interventions but was limited by its dependence on existing knowledge of pathway regulation and enzyme kinetics.

During this initial phase, metabolic engineering strategies primarily involved:

- Sequential debottlenecking of rate-limiting steps in pathways of interest [5]

- Overexpression of key enzymes in biosynthetic pathways [23]

- Knockout of competing metabolic pathways [24]

- Heterologous gene expression to introduce new capabilities [22]

While these rational approaches achieved notable successes, they faced fundamental limitations due to incomplete knowledge of metabolic networks, particularly regarding the regulation of individual pathway elements and overall cell physiology [5]. The inherent complexity of cellular metabolism often led to unexpected outcomes, as perturbations in one part of the network could produce counterintuitive effects in distant pathways. Despite these challenges, the first wave established metabolic engineering as a distinct discipline and demonstrated its potential for industrial biotechnology.

Second Wave: Systems Biology Integration

The second wave of metabolic engineering emerged in the 2000s with the integration of systems biology technologies, particularly genome-scale metabolic models (GEMs) [23]. This holistic approach was pioneered by researchers such as Bernhard Ø. Palsson, who developed frameworks that mechanistically link genotype to phenotype in order to explore the metabolic potential of cell factories [23]. Genome-scale models enabled researchers to simulate cellular metabolism at an unprecedented scale, identifying non-obvious targets for genetic engineering that would be difficult to discover through rational approaches alone.

The application of systems biology tools expanded metabolic engineering capabilities for producing a wider range of chemicals, including fuels, materials, and pharmaceutical ingredients [23]. Notable achievements from this period included:

- Predictive Modeling: Genome-scale Saccharomyces cerevisiae and Escherichia coli metabolic models predicted strategies for bioethanol production [23].

- Multi-target Optimization: Algorithms identified key gene knockout targets for production of compounds like cubebol, L-threonine, and L-valine [23].

- Flux Analysis: Techniques like flux scanning based on enforced objective flux identified overexpression targets for enhancing lycopene production [23].

The second wave represented a significant shift from local pathway optimization to global network analysis, acknowledging that cellular metabolism functions as an integrated system rather than a collection of independent pathways. This systemic perspective enabled more sophisticated engineering strategies that accounted for complex interactions and regulatory mechanisms across the entire metabolic network.

Third Wave: Synthetic Biology and Systems Metabolic Engineering

The third, and current, wave of metabolic engineering began in the 2010s with the pioneering work of Jay D. Keasling on artemisinin production [23]. This wave is characterized by the full integration of synthetic biology with metabolic engineering, enabling the design, construction, and optimization of complete metabolic pathways using synthetic nucleic acid elements for producing both natural and non-natural chemicals [23]. Systems metabolic engineering represents the maturation of this approach, combining in silico and experimental strategies to analyze and engineer microorganisms globally, with an efficiency not otherwise attainable [21].

Key differentiators of third-wave systems metabolic engineering include:

- Combinatorial Pathway Optimization: Simultaneous optimization of multiple pathway genes rather than sequential debottlenecking [5]

- Automated DBTL Cycles: Implementation of iterative design-build-test-learn pipelines with increasing automation [22]

- Machine Learning Integration: Application of advanced algorithms to learn from experimental data and propose new designs [5]

- Multi-level Engineering: Strategies operating at multiple hierarchies, including part, pathway, network, genome, and cell levels [23]

This modern approach has dramatically expanded the array of attainable products, including advanced biofuels [25], pharmaceuticals like opioids and vinblastine [23], commodity chemicals, and complex natural products. Systems metabolic engineering has emerged as a major driver toward bio-based production from renewables and represents one of the core technologies of global green growth [21].

Table 1: Historical Progression of Metabolic Engineering Approaches

| Wave | Time Period | Key Technologies | Representative Achievements | Limitations |
|---|---|---|---|---|
| First Wave: Rational Engineering | 1990s | Pathway enumeration, Flux analysis, Targeted gene knockout/overexpression | 150% increase in lysine productivity in C. glutamicum [23] | Limited by knowledge gaps, unexpected network interactions |
| Second Wave: Systems Biology | 2000s | Genome-scale models, Flux balance analysis, In silico strain design | Genome-scale models for bioethanol production in S. cerevisiae [23] | Limited capacity for de novo pathway design |
| Third Wave: Systems Metabolic Engineering | 2010s-present | Synthetic biology, Automated DBTL cycles, Machine learning, Combinatorial optimization | Artemisinin production in yeast [23], 500-fold improvement in pinocembrin production through automated DBTL [22] | Computational complexity, data management challenges |

The DBTL Cycle: Core Framework for Systems Metabolic Engineering

Design Principles and Computational Tools

The Design phase in systems metabolic engineering has evolved from simple pathway selection to sophisticated computational workflows that integrate multiple tools for pathway prediction, enzyme selection, and DNA part design. For any given target compound, modern pipelines utilize specialized software such as RetroPath for automated pathway selection and Selenzyme for enzyme selection [22]. These tools enable the systematic identification of potential biosynthetic routes and suitable enzyme candidates based on biochemical rules and substrate specificity.

Following enzyme selection, reusable DNA parts are designed with simultaneous optimization of ribosome-binding sites and enzyme coding regions using tools like PartsGenie [22]. Genes and regulatory parts are then combined in silico into large combinatorial libraries of pathway designs. To manage the resulting combinatorial explosion, statistical methods such as Design of Experiments (DoE) are employed to reduce libraries to smaller representative sets. This approach allows efficient exploration of the design space with tractable numbers of samples for laboratory construction and screening [22]. For example, in one documented flavonoid production project, a combinatorial design of 2592 possible configurations was successfully reduced to just 16 representative constructs using DoE based on orthogonal arrays combined with a Latin square for positional arrangement of genes, achieving a compression ratio of 162:1 [22].
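
The arithmetic behind the 162:1 compression ratio, and the idea of a Latin square for gene positions, can be sketched as follows. The factorization used here (4 backbone levels, 3 promoter options at each of 3 sites, and 4! gene orders) is an assumption chosen because it reproduces the 2592 figure; the text itself does not spell out the factor levels. The cyclic construction is only one simple way to build a Latin square and is not claimed to be the arrangement used in [22], nor does this sketch generate the orthogonal array that selects the 16 constructs.

```python
from math import factorial


def design_space_size(backbone_levels, promoter_levels, promoter_sites, n_genes):
    """Full-factorial library size: backbones x promoter combinations x gene orders."""
    return backbone_levels * promoter_levels ** promoter_sites * factorial(n_genes)


def cyclic_latin_square(items):
    """Square in which every item appears exactly once per row and once per column."""
    n = len(items)
    return [[items[(row + col) % n] for col in range(n)] for row in range(n)]


if __name__ == "__main__":
    # ASSUMED factor levels; see the note above.
    full = design_space_size(backbone_levels=4, promoter_levels=3, promoter_sites=3, n_genes=4)
    reduced = 16
    print(f"{full} designs reduced to {reduced}: compression {full // reduced}:1")
    for row in cyclic_latin_square(["PAL", "4CL", "CHS", "CHI"]):
        print(row)
```

Each row of the square is a gene order in which every gene occupies every position exactly once across the four rows, which is what lets a small set of constructs sample positional effects evenly.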

Build Methodologies and Automation

The Build phase has been transformed by advances in DNA synthesis and assembly technologies. Modern DBTL pipelines begin with commercial DNA synthesis, followed by automated part preparation via PCR, and robotic setup for pathway assembly using methods such as ligase cycling reaction [22]. After transformation in microbial hosts, candidate plasmid clones undergo quality control through high-throughput automated purification, restriction digest analysis by capillary electrophoresis, and sequence verification.

Automation is a critical factor in the Build phase, with robotic platforms handling increasingly complex assembly operations. While some manual interventions remain in current workflows (such as PCR clean-up and host-cell transformation), the trend is toward full automation of these processes [22]. The modular nature of these pipelines allows for flexibility in adopting new assembly methods and accommodates species-specific requirements for different microbial hosts through adjustments to regulatory elements, codon optimization, and experimental methods.

Test Platforms and Analytical Methods

The Test phase involves introducing constructs into selected production chassis and running automated multi-well growth and induction protocols. Detection of target products and key intermediates begins with automated extraction followed by quantitative screening using advanced analytical methods such as fast ultra-performance liquid chromatography coupled to tandem mass spectrometry with high mass resolution [22]. Data extraction and processing are typically handled by custom-developed scripts, often in open-source platforms like R.

A complementary development for the Test phase is the use of mechanistic kinetic models to simulate metabolic pathway behavior and generate data for benchmarking machine learning methods [5]. In these models, changes in intracellular metabolite concentrations over time are described by ordinary differential equations, with each reaction flux given by a kinetic rate law derived from mass action principles. Pathway elements such as enzyme concentrations or catalytic properties can then be changed in silico, creating simulated environments in which optimization strategies are tested before laboratory implementation [5].
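
A minimal sketch of such a kinetic model is shown below: a hypothetical two-step pathway S → I → P with mass-action fluxes, integrated with a classical fourth-order Runge-Kutta scheme in plain Python. The pathway, the rate constants, and the enzyme levels are illustrative assumptions and do not reproduce the model used in [5].

```python
def pathway_rates(state, enzymes, k=(1.0, 0.5)):
    """Time derivatives (dS/dt, dI/dt, dP/dt) for S -> I -> P with mass-action fluxes."""
    s, i, _p = state
    e1, e2 = enzymes
    v1 = k[0] * e1 * s  # flux of S -> I, proportional to enzyme 1 and substrate
    v2 = k[1] * e2 * i  # flux of I -> P, proportional to enzyme 2 and intermediate
    return (-v1, v1 - v2, v2)


def simulate(state, enzymes, t_end=10.0, dt=0.01):
    """Integrate the pathway ODEs from t = 0 to t_end with classical RK4 steps."""
    def shifted(base, slope, factor):
        return tuple(x + factor * d for x, d in zip(base, slope))

    for _ in range(int(round(t_end / dt))):
        k1 = pathway_rates(state, enzymes)
        k2 = pathway_rates(shifted(state, k1, 0.5 * dt), enzymes)
        k3 = pathway_rates(shifted(state, k2, 0.5 * dt), enzymes)
        k4 = pathway_rates(shifted(state, k3, dt), enzymes)
        state = tuple(
            x + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
            for x, a, b, c, d in zip(state, k1, k2, k3, k4)
        )
    return state


if __name__ == "__main__":
    start = (10.0, 0.0, 0.0)  # initial S, I, P in arbitrary concentration units
    for e2 in (1.0, 2.0):     # in silico change of the second enzyme's level
        s, i, p = simulate(start, enzymes=(1.0, e2))
        print(f"E2={e2}: S={s:.3f} I={i:.3f} P={p:.3f}")
```

Changing an enzyme level and re-running the simulation plays the role of building and testing a new strain, which is what makes such models useful for comparing learning strategies without laboratory cost.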

Learn Strategies and Machine Learning Integration

The Learn phase represents the knowledge-generating component of the DBTL cycle, where data from the Test phase are analyzed to identify relationships between design factors and production outcomes. Statistical methods and machine learning algorithms play crucial roles in this process, enabling the extraction of meaningful patterns from complex datasets. In the flavonoid production case study, statistical analysis of the initial library revealed that vector copy number had the strongest significant effect on pinocembrin levels, followed by a positive effect of the chalcone isomerase promoter strength [22]. These insights directly informed the design parameters for the subsequent DBTL cycle.
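
One simple way to rank design factors from a screened library is a main-effects comparison: average the response at each level of a factor and look at the spread between levels. The sketch below uses a tiny synthetic data set invented for illustration; the factor names echo the case study, but the numbers are not measurements from [22], and the published analysis may well have used a different statistical procedure.

```python
from collections import defaultdict
from statistics import mean


def main_effects(runs, factors, response):
    """For each factor, return (mean response per level, spread between best and worst level)."""
    effects = {}
    for factor in factors:
        by_level = defaultdict(list)
        for run in runs:
            by_level[run[factor]].append(run[response])
        level_means = {level: mean(values) for level, values in by_level.items()}
        spread = max(level_means.values()) - min(level_means.values())
        effects[factor] = (level_means, spread)
    return effects


# SYNTHETIC toy library (titers in mg/L), for illustration only.
RUNS = [
    {"copy_number": "low", "chi_promoter": "weak", "titer": 0.01},
    {"copy_number": "low", "chi_promoter": "strong", "titer": 0.03},
    {"copy_number": "high", "chi_promoter": "weak", "titer": 0.08},
    {"copy_number": "high", "chi_promoter": "strong", "titer": 0.12},
]

if __name__ == "__main__":
    for factor, (means, spread) in main_effects(RUNS, ["copy_number", "chi_promoter"], "titer").items():
        print(factor, means, round(spread, 3))
```

A larger spread suggests a stronger influence on the response; in a real campaign this would be backed by a significance test before constraining the next design round.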

Machine learning has shown particular promise in the Learn phase for recommending new strain designs for subsequent DBTL cycles. Studies comparing different algorithms have demonstrated that gradient boosting and random forest models outperform other methods in the low-data regime typical of early DBTL cycles [5]. These methods have also proven robust to training set biases and experimental noise. The integration of recommendation algorithms that balance exploration and exploitation further enhances the efficiency of the iterative optimization process [5].
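
The balance between exploration and exploitation can be illustrated with an upper-confidence-bound style score: each candidate design is ranked by its mean predicted titer plus a multiple of the disagreement among ensemble members. This is a commonly used heuristic offered here as a sketch, not necessarily the recommendation algorithm evaluated in [5]. The candidate names and prediction values are hypothetical; in practice the per-member predictions might come from the individual trees of a random forest.

```python
from statistics import mean, pstdev


def recommend(ensemble_predictions, n_pick, kappa=1.0):
    """Pick the n_pick designs with the highest mean + kappa * std score.

    ensemble_predictions maps design name -> list of predictions, one per ensemble member.
    kappa = 0 is pure exploitation; larger kappa favors uncertain designs (exploration).
    """
    scored = sorted(
        ((mean(preds) + kappa * pstdev(preds), name)
         for name, preds in ensemble_predictions.items()),
        reverse=True,
    )
    return [name for _score, name in scored[:n_pick]]


# HYPOTHETICAL ensemble predictions for three candidate designs.
PREDICTIONS = {
    "design_A": [6.0, 6.0, 6.0],  # confident, good
    "design_B": [3.0, 5.0, 8.0],  # uncertain, possibly better
    "design_C": [4.0, 4.0, 4.0],  # confident, mediocre
}

if __name__ == "__main__":
    print("exploit:", recommend(PREDICTIONS, n_pick=1, kappa=0.0))
    print("explore:", recommend(PREDICTIONS, n_pick=1, kappa=1.0))
```

With `kappa=0.0` the confident design is chosen; with `kappa=1.0` the uncertain design overtakes it, which is how a recommender can spend part of each cycle probing poorly characterized regions of the design space.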

Diagram 1: The iterative Design-Build-Test-Learn (DBTL) cycle in systems metabolic engineering. The cycle integrates computational design, genetic construction, performance testing, and data-driven learning for continuous strain improvement [5] [22].

Implementation Case Studies

Flavonoid Production in E. coli

The application of an automated DBTL pipeline for flavonoid production in E. coli demonstrates the power of iterative systems metabolic engineering. The project targeted (2S)-pinocembrin, a key precursor to diverse flavonoids, using a pathway comprising four enzymes: phenylalanine ammonia-lyase (PAL), chalcone synthase (CHS), chalcone isomerase (CHI), and 4-coumarate:CoA ligase (4CL) [22]. The initial DBTL cycle designed a combinatorial library covering a wide range of variants, including four expression levels through vector backbone selection, varying promoter strengths for each gene, and 24 positional permutations of the four genes. This resulted in 2592 possible configurations, which was reduced to 16 representative constructs using DoE.

Screening this initial library revealed pinocembrin titers ranging from 0.002 to 0.14 mg L⁻¹, with statistical analysis identifying vector copy number as the strongest factor influencing production, followed by CHI promoter strength [22]. Based on these insights, a second DBTL cycle was implemented with modified design constraints: (1) high copy number origin for all constructs, (2) fixed positioning of CHI at the beginning of the pathway, (3) variable positioning and promoter strengths for 4CL and CHS, and (4) fixed positioning of PAL at the pathway end. This targeted approach achieved a reported 500-fold improvement in production titers, reaching competitive levels of up to 88 mg L⁻¹ [22].
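
The fold-improvement figure depends on which baseline is used, as the short calculation below shows. Dividing the best second-cycle titer by the best first-cycle titer quoted above gives a ratio of roughly 629, somewhat higher than the reported 500-fold; the baseline behind the reported figure is not stated in this text, so the difference is noted here without interpretation.

```python
def fold_improvement(new_titer, baseline_titer):
    """Ratio of an improved titer to a baseline titer (both in the same units)."""
    if baseline_titer <= 0:
        raise ValueError("baseline titer must be positive")
    return new_titer / baseline_titer


if __name__ == "__main__":
    best_cycle_1 = 0.14  # mg/L, highest titer in the initial library
    best_cycle_2 = 88.0  # mg/L, highest titer after the second cycle
    print(f"best-to-best ratio: {fold_improvement(best_cycle_2, best_cycle_1):.0f}x")
```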

Organic Acid Bioproduction

Systems metabolic engineering has driven significant advances in organic acid production, as exemplified by recent achievements in strain engineering:

Table 2: Selected Organic Acid Production Achievements through Metabolic Engineering

| Organic Acid | Host Organism | Titer (g/L) | Key Metabolic Engineering Strategies | Reference |
|---|---|---|---|---|
| Lactic Acid | Corynebacterium glutamicum | 212-264 | Modular pathway engineering for both L- and D-lactic acid enantiomers | [23] |
| 3-Hydroxypropionic Acid | Corynebacterium glutamicum | 62.6 | Substrate engineering, genome editing engineering | [23] |
| Succinic Acid | E. coli | 153.36 | Modular pathway engineering, high-throughput genome engineering, codon optimization | [23] |
| Pyruvic Acid | Lactococcus lactis | 54.6 | Substrate engineering, chassis engineering | [23] |

For pyruvate production, metabolic engineers have employed strategies including the disruption of the pyruvate decarboxylase gene (KmPDC1) and glycerol-3-phosphate dehydrogenase gene (KmGPD1) in Kluyveromyces marxianus, coupled with overexpression of mth1 and its variants [24]. Additional approaches have utilized acid-resistant, pyruvate-tolerant strains of Klebsiella oxytoca with integration of the NADH oxidase gene (nox) to inhibit lactic acid production and regenerate NAD⁺ [24]. These examples illustrate how systems metabolic engineering integrates multiple modification strategies to achieve high-titer production of target compounds.

Advanced Biofuel Production

The progression of metabolic engineering is particularly evident in biofuel production, which has evolved through multiple generations:

- First-generation biofuels utilized food crops like corn and sugarcane, employing conventional fermentation and distillation processes [25].

- Second-generation biofuels leveraged non-food lignocellulosic biomass through enzymatic hydrolysis and fermentation [25].

- Third-generation biofuels employed algal systems with photobioreactors and hydrothermal liquefaction [25].

- Fourth-generation biofuels utilize genetically modified algae and synthetic biology approaches, including CRISPR-based genome editing and synthetic pathways for advanced hydrocarbons [25].

Notable achievements in advanced biofuel production include 91% biodiesel conversion efficiency from lipids and a three-fold increase in butanol yield in engineered Clostridium species, alongside approximately 85% xylose-to-ethanol conversion in engineered S. cerevisiae [25]. These advances demonstrate how systems metabolic engineering enables the optimization of microorganisms for enhanced substrate processing and industrial resilience.

Essential Research Tools and Reagents

The implementation of systems metabolic engineering relies on a sophisticated toolkit of research reagents and computational resources. The table below details key resources essential for executing advanced metabolic engineering projects.

Table 3: Research Reagent Solutions for Systems Metabolic Engineering

| Research Reagent / Tool | Function | Application Example |
|---|---|---|
| RetroPath [22] | In silico pathway design | Automated selection of biosynthetic pathways for target compounds |
| Selenzyme [22] | Enzyme selection | Computational selection of suitable enzymes for pathway steps |
| PartsGenie [22] | DNA part design | Design of reusable DNA parts with optimized RBS and coding sequences |
| Ligase Cycling Reaction | DNA assembly | Automated pathway assembly for combinatorial libraries |
| Mechanistic Kinetic Models [5] | Pathway simulation | In silico testing of metabolic pathway behavior and optimization strategies |
| UPLC-MS/MS [22] | Analytical screening | Quantitative detection of target products and pathway intermediates |
| CRISPR-Cas Systems [25] | Genome editing | Precise genetic modifications in host organisms |
| Orthogonal Array Design [22] | Library reduction | Statistical reduction of combinatorial libraries to tractable sizes |
| Gradient Boosting/Random Forest [5] | Machine learning | Predicting strain performance and recommending new designs |

Future Perspectives and Concluding Remarks

The historical progression from traditional metabolic engineering to advanced systems metabolic engineering represents a fundamental transformation in how microbial cell factories are conceived, designed, and optimized. This evolution has been characterized by increasing integration of computational tools, automation, and data-driven approaches, culminating in the current paradigm of iterative DBTL cycles enhanced by machine learning. As the field continues to advance, several emerging trends are likely to shape its future trajectory:

- AI-Driven Strain Optimization: Increased application of artificial intelligence for enzyme and pathway discovery, with machine learning models becoming increasingly sophisticated at predicting metabolic behavior and optimizing strain designs [5] [25].

- Multi-Omics Integration: Deeper integration of multi-omics data (genomics, transcriptomics, proteomics, metabolomics) to create more comprehensive models of cellular metabolism [24] [23].

- Automation and Scale: Continued advancement in automation technologies enabling higher-throughput DBTL cycles with reduced manual intervention [22].

- Non-Model Organisms: Expansion of metabolic engineering capabilities beyond traditional model organisms to encompass a wider range of industrially relevant hosts [24].

- Sustainable Bioproduction: Enhanced focus on circular economy principles, including waste stream utilization and carbon-negative manufacturing processes [25].

The DBTL cycle has emerged as the central organizing framework for modern systems metabolic engineering, providing a structured methodology for continuous strain improvement. By integrating computational design, automated construction, high-throughput testing, and machine learning, this iterative approach has dramatically accelerated the development of microbial cell factories for diverse applications. As these technologies continue to mature, systems metabolic engineering is poised to play an increasingly vital role in the transition toward sustainable bio-based manufacturing across chemical, material, and fuel industries.

Diagram 2: Historical progression of metabolic engineering through three distinct waves of innovation, from rational pathway engineering to modern systems metabolic engineering [23].

Key Components and Workflow of a Single DBTL Iteration

The Design-Build-Test-Learn (DBTL) cycle represents a foundational framework in synthetic biology and systems metabolic engineering, providing an iterative, systematic approach for engineering biological systems. This cycle enables researchers to design genetic constructs, build them in microbial hosts, test their performance, and learn from the data to inform subsequent design iterations. Recent advances in machine learning, automation, and high-throughput technologies have transformed traditional DBTL approaches, significantly accelerating the engineering of microbial cell factories for producing fine chemicals and pharmaceuticals. This technical guide examines the core components and workflow of a single DBTL iteration within the context of modern metabolic engineering research.

Core Components of the DBTL Cycle

A single DBTL iteration comprises four interconnected phases, each with distinct objectives, methodologies, and outputs that collectively drive the strain engineering process forward.

Design Phase

The Design phase involves in silico specification of genetic designs based on project objectives and prior knowledge. Modern Design workflows integrate computational tools for pathway selection, enzyme choice, and DNA part design. Key tools include RetroPath for automated pathway selection from target compounds [22], Selenzyme for enzyme selection [22], and PartsGenie for designing reusable DNA parts with optimized ribosome-binding sites and codon-optimized coding regions [22].

Machine learning has revolutionized this phase, with protein language models like ESM-2 and ProtBert enabling zero-shot prediction of protein structures and functions, potentially reordering the cycle to LDBT (Learn-Design-Build-Test) in some applications [26]. These models capture evolutionary relationships from millions of protein sequences, allowing prediction of beneficial mutations without additional experimental training data [26] [27]. For combinatorial library design, statistical methods like Design of Experiments (DoE) dramatically reduce the number of constructs needed to explore large design spaces, achieving compression ratios of 162:1 or higher [22].

Build Phase

The Build phase translates digital designs into physical biological constructs. Automated platforms enable high-throughput DNA assembly using methods such as ligase cycling reaction (LCR) [22]. Commercial DNA synthesis provides gene fragments, followed by automated pathway assembly on robotic platforms [22]. Constructs are then transformed into microbial chassis, with quality control performed via automated plasmid purification, restriction digest, and sequence verification [22].

Emerging approaches leverage cell-free expression systems to accelerate the Build and Test phases. These systems use transcription-translation machinery from cell lysates or purified components to express proteins without time-consuming cloning steps, enabling protein yields exceeding 1 g/L in under 4 hours [26]. When combined with liquid handling robots and microfluidics, cell-free systems allow ultra-high-throughput screening of thousands of protein variants [26].

Test Phase

The Test phase involves experimental characterization of built constructs to measure performance against target metrics. For metabolic engineering, this typically includes cultivating strains in automated multi-well platforms, followed by metabolite extraction and quantitative analysis using techniques like ultra-performance liquid chromatography coupled to tandem mass spectrometry (UPLC-MS/MS) [22].

Advanced analytical methods have dramatically increased testing throughput and resolution. The RespectM method, for instance, uses mass spectrometry imaging to detect metabolites at single-cell resolution, acquiring data from 500 cells per hour [28]. This approach captures metabolic heterogeneity within cell populations, generating thousands of single-cell metabolomics data points that provide deeper insights into pathway performance and identify metabolic subpopulations [28].

Learn Phase

The Learn phase represents the knowledge extraction component, where Test data are analyzed to identify relationships between design parameters and performance outcomes. Statistical methods and machine learning algorithms process experimental data to determine key factors influencing production titers [22]. For example, in a flavonoid production case study, statistical analysis revealed that vector copy number and chalcone isomerase promoter strength had the most significant effects on pinocembrin titers [22].
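As a rough illustration of how such a statistical analysis ranks design factors, the sketch below estimates main effects in a two-level factorial design. The factor names echo the case study, but the third factor, the coded levels, and all titer values are synthetic assumptions invented for this example; this is not the analysis used in [22].

```python
from itertools import product
from statistics import mean

# Two-level factors coded as -1 (low) / +1 (high). Names are illustrative.
factors = ["copy_number", "chi_promoter", "gene_order"]


def synthetic_titer(levels):
    """Made-up response: strong copy-number and promoter effects, weak order effect."""
    copy_number, chi_promoter, gene_order = levels
    return 10.0 + 4.0 * copy_number + 2.5 * chi_promoter + 0.2 * gene_order


# Full 2^3 factorial design with its (synthetic) measured titers.
design = list(product([-1, 1], repeat=len(factors)))
titers = [synthetic_titer(run) for run in design]


def main_effects(design, responses, names):
    """Main effect = mean response at the high level minus mean at the low level."""
    effects = {}
    for j, name in enumerate(names):
        high = mean(r for run, r in zip(design, responses) if run[j] == 1)
        low = mean(r for run, r in zip(design, responses) if run[j] == -1)
        effects[name] = high - low
    return effects


effects = main_effects(design, titers, factors)

# Rank factors by the absolute size of their effect on titer.
ranked = sorted(effects, key=lambda k: abs(effects[k]), reverse=True)
print(ranked)
```

With real data the design is usually fractional rather than full, so effects are estimated by regression and accompanied by significance tests instead of simple level means.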

Machine learning approaches have enhanced this phase considerably. Deep neural networks trained on single-cell metabolomics data can establish heterogeneity-powered learning models that predict optimal metabolic engineering strategies [28]. These models identify minimal genetic interventions needed to achieve target metabolite production, effectively reshaping the traditional DBTL cycle [28].

Quantitative Analysis of DBTL Performance

The impact of iterative DBTL cycling is demonstrated through measurable improvements in production metrics. The following table summarizes performance data from a published DBTL case study on pinocembrin production in E. coli:

Table 1: Performance Improvement Across DBTL Iterations for Pinocembrin Production in E. coli [22]

| DBTL Cycle | Number of Constructs | Max Titer (mg L⁻¹) | Fold Improvement | Key Optimized Parameters |
|---|---|---|---|---|
| Initial Library | 16 | 0.14 | Baseline | Wide exploration of design space |
| Second Iteration | Not specified | 88 | ~500x | High-copy origin, optimized CHI positioning and promoter strength |

The implementation of automated, integrated DBTL pipelines has demonstrated significant operational efficiencies:

Table 2: Impact of Workflow Automation on DBTL Efficiency [29]

| Metric | Manual Processes | Automated Workflow | Improvement |
|---|---|---|---|
| Sample processing time | Baseline | 5x faster | About 80% less time per sample |
| Manual error rate | Baseline | 50% reduction | Half as many manual errors |
| Data traceability | Limited | Enhanced with full audit trails | Qualitative improvement |

Detailed Experimental Methodologies

Automated Pathway Assembly Protocol

The Build phase employs standardized protocols for high-throughput genetic construction. The following methodology is adapted from an automated DBTL pipeline for flavonoid production [22]:

- DNA Preparation: Commercial synthesis of gene fragments followed by PCR amplification of parts.

- Robotic Assembly Setup: Ligase cycling reaction (LCR) assembly using worklists generated by PlasmidGenie software.

- Transformation: Chemical transformation of assembled constructs into E. coli DH5α.

- Quality Control: Automated plasmid purification, restriction digest analysis via capillary electrophoresis, and sequence verification.

- Repository Registration: All constructs deposited in JBEI-ICE repository with unique identifiers for sample tracking.
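The worklist step in the protocol above can be pictured with a small sketch that turns construct definitions into liquid-handler transfer instructions. The column names, plate layout, part names, and construct IDs are all hypothetical; this is not the PlasmidGenie output format, only an illustration of the idea of generating transfers from digital designs.

```python
import csv
import io


def well_name(index, n_cols=12):
    """Convert a 0-based index into a 96-well plate position such as 'A1' or 'H12'."""
    row, col = divmod(index, n_cols)
    if row >= 8:
        raise ValueError("index exceeds a 96-well plate")
    return f"{'ABCDEFGH'[row]}{col + 1}"


def build_worklist(constructs, part_wells, volume_ul=1.0):
    """Return one transfer row per (construct, part) pair.

    constructs: dict mapping construct ID -> ordered list of part names
    part_wells: dict mapping part name -> source well on the parts plate
    """
    rows = []
    for dest_index, (construct_id, parts) in enumerate(constructs.items()):
        dest_well = well_name(dest_index)
        for part in parts:
            rows.append({
                "construct": construct_id,
                "source_well": part_wells[part],
                "dest_well": dest_well,
                "volume_ul": volume_ul,
            })
    return rows


def to_csv(rows):
    """Serialize the worklist as CSV text for import into a liquid handler."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0].keys()))
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Placeholder part and construct names (assumptions for this example).
part_wells = {"backbone_hi": "A1", "promoter_strong": "A2", "geneA": "A3", "geneB": "A4"}
constructs = {
    "pDEMO001": ["backbone_hi", "promoter_strong", "geneA", "geneB"],
    "pDEMO002": ["backbone_hi", "promoter_strong", "geneB", "geneA"],
}
worklist = build_worklist(constructs, part_wells)
print(to_csv(worklist))
```

Keeping the construct ID in every row means each physical transfer can be traced back to its registered design, which is the same goal the repository registration step serves.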

Analytics and Screening Methodology

The Test phase employs comprehensive analytical workflows to assess strain performance [22]:

- Cultivation: Automated 96-deepwell plate cultivation with standardized media and induction protocols.

- Metabolite Extraction: Automated extraction of target compounds from cultures.

- Quantitative Analysis: UPLC-MS/MS with high mass resolution for precise quantification of target products and intermediates.

- Data Processing: Custom R scripts for data extraction and processing.
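The quantification step typically relies on a calibration curve built from standards of known concentration. The sketch below shows that idea in Python with a linear least-squares fit; it is not the custom R code referenced above, and the concentrations and peak areas are synthetic values chosen to lie exactly on a line.

```python
def fit_calibration(concentrations, peak_areas):
    """Ordinary least-squares fit of peak_area = slope * concentration + intercept."""
    n = len(concentrations)
    mean_x = sum(concentrations) / n
    mean_y = sum(peak_areas) / n
    sxx = sum((x - mean_x) ** 2 for x in concentrations)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(concentrations, peak_areas))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def quantify(peak_area, slope, intercept):
    """Invert the calibration line to estimate concentration from a peak area."""
    return (peak_area - intercept) / slope


# Synthetic standards (mg/L) and detector responses (arbitrary units).
standard_conc = [0.0, 1.0, 5.0, 10.0, 50.0]
standard_area = [20.0, 220.0, 1020.0, 2020.0, 10020.0]  # constructed as 200*x + 20

slope, intercept = fit_calibration(standard_conc, standard_area)
sample_titer = quantify(4420.0, slope, intercept)
print(round(slope, 3), round(intercept, 3), round(sample_titer, 3))
```

In practice, peak areas are often normalized to an internal standard before fitting, and samples that fall outside the calibrated range are diluted and re-run rather than extrapolated.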

Machine Learning-Guided Learning Phase

Modern Learn phases incorporate sophisticated data analysis techniques [28]:

- Data Preparation: Processing of single-cell metabolomics data representing metabolic heterogeneity.

- Model Training: Deep neural network training on metabolomics data to establish predictive models.

- Pattern Identification: Analysis of trained models to identify key metabolic engineering targets.

- Design Optimization: Recommendation of minimal genetic interventions to achieve production targets.

Workflow Visualization

DBTL Cycle Workflow: This diagram illustrates the iterative four-phase DBTL cycle with key activities in each phase and their interconnected relationships.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of DBTL cycles requires specialized reagents, software tools, and analytical platforms. The following table catalogues essential solutions used in modern DBTL pipelines:

Table 3: Essential Research Reagents and Solutions for DBTL Implementation

| Category | Item/Solution | Function/Application | Example Sources/Platforms |
|---|---|---|---|
| DNA Construction | Ligase Cycling Reaction (LCR) Reagents | High-efficiency DNA assembly without traditional restriction enzymes | Custom formulations [22] |
| DNA Construction | Commercial Gene Fragments | Source of standardized genetic parts for pathway assembly | Various synthetic biology providers [22] |
| Analytical Standards | Quantitative Metabolite Standards | Calibration and quantification of target compounds in analytical assays | Commercial chemical suppliers [22] |
| Analytical Standards | Stable Isotope-Labeled Internal Standards | Precise quantification via mass spectrometry | Cambridge Isotope Laboratories, etc. [22] |
| Cell Culture | Specialized Growth Media | Optimized cultivation for production strains | Custom formulations per organism [22] |
| Cell Culture | Induction Reagents | Pathway induction (e.g., IPTG, arabinose) | Various biochemical suppliers [22] |
| Software Tools | Pathway Design Platforms | In silico pathway design and enzyme selection | RetroPath, Selenzyme [22] |
| Software Tools | DNA Part Design Tools | Optimization of regulatory elements and coding sequences | PartsGenie [22] |
| Software Tools | Data Analysis Platforms | Processing of analytical data and machine learning | R scripts, Python ML libraries [22] [28] |
| Specialized Reagents | MALDI Matrix Compounds | Matrix for mass spectrometry imaging in single-cell analysis | RespectM method [28] |
| Specialized Reagents | Cell-Free Expression Systems | Rapid in vitro protein synthesis and testing | PURExpress, homemade extracts [26] |

Advanced Methodologies in Modern DBTL Cycles

Integration of Machine Learning and AI

Machine learning has transformed traditional DBTL approaches in several ways. Protein language models (ESM, ProtBert) enable zero-shot prediction of protein stability, solubility, and function from sequence data alone [26] [27]. Models like ProteinMPNN and MutCompute use deep neural networks trained on protein structures to predict stabilizing mutations and design novel sequences [26]. Ensemble methods combining multiple prediction approaches, such as ESM-SECP for protein-DNA binding site prediction, integrate sequence-feature-based predictors with sequence-homology-based predictors to improve accuracy [27].
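One widely used way to obtain zero-shot predictions from a protein language model is to compare the probability the model assigns to a mutant residue with the probability it assigns to the wild-type residue at the same position. The sketch below shows only that scoring step. The per-position probabilities are made-up numbers standing in for model output, and no real model or library API is called.

```python
import math


def mutation_score(position_probs, wild_type, mutant):
    """Log-likelihood ratio log p(mutant) - log p(wild type) at one position.

    position_probs: dict mapping amino-acid letter -> model probability at the
    position. Positive scores mean the model prefers the mutant residue.
    """
    return math.log(position_probs[mutant]) - math.log(position_probs[wild_type])


def rank_mutations(sequence, per_position_probs):
    """Score every single substitution covered by the mock model, best first."""
    scored = []
    for pos, wild_type in enumerate(sequence):
        probs = per_position_probs[pos]
        for mutant in probs:
            if mutant != wild_type:
                label = f"{wild_type}{pos + 1}{mutant}"
                scored.append((label, mutation_score(probs, wild_type, mutant)))
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Made-up probabilities for a 3-residue toy sequence; a real protein language
# model would supply a distribution over all 20 amino acids at each position.
sequence = "MKV"
mock_probs = [
    {"M": 0.90, "L": 0.05, "V": 0.05},
    {"K": 0.20, "R": 0.60, "E": 0.20},
    {"V": 0.50, "I": 0.40, "A": 0.10},
]
ranking = rank_mutations(sequence, mock_probs)
print(ranking[0])
```

Because the score needs no labeled fitness data, candidate mutations can be ranked before any construct is built, which is what makes a Learn-first ordering of the cycle plausible.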

Single-Cell Analysis and Metabolic Heterogeneity

Traditional bulk measurements obscure cellular heterogeneity, limiting learning potential. Advanced single-cell methodologies like RespectM provide large single-cell metabolomics datasets (4,321 cells in one study) that reveal metabolic subpopulations and dynamics [28]. Heterogeneity-powered learning uses deep neural networks trained on these single-cell data to identify optimal metabolic engineering strategies that account for population variation [28]. Pseudo-time analysis and trajectory mapping capture dynamic metabolic changes across cell populations, identifying key branching points in metabolic networks [28].

Automation and Biofoundries

Integrated automation platforms connect each DBTL phase through streamlined data and material transfer. Automated worklist generation enables seamless transition from digital designs to physical construction [22]. Centralized data repositories (e.g., JBEI-ICE) provide sample tracking and data management across cycles [22]. Modular platform design allows replacement of individual components as technology evolves while maintaining overall workflow integrity [22].

The DBTL cycle is a powerful framework for the systematic engineering of biological systems in metabolic engineering research. A single iteration encompasses design using computational tools and machine learning, construction via automated DNA assembly, characterization through advanced analytics, and knowledge extraction via statistical analysis and machine learning. Recent advances in machine learning, single-cell analysis, and automation have dramatically accelerated DBTL cycles; in the pinocembrin case study, iterative optimization raised production titers roughly 500-fold [22]. The integration of these technologies continues to evolve the DBTL paradigm, with emerging approaches like LDBT (Learn-Design-Build-Test) potentially reshaping the fundamental cycle structure. As these methodologies mature, they promise to further accelerate the development of microbial cell factories for sustainable production of fine chemicals, pharmaceuticals, and biofuels.

From Code to Cell: Advanced DBTL Tools and Real-World Applications in Bioproduction

In the field of systems metabolic engineering, the Design-Build-Test-Learn (DBTL) cycle has emerged as a fundamental framework for engineering microbial cell factories. This iterative process involves designing genetic modifications, building engineered strains, testing their performance, and learning from the data to inform the next design cycle. Biofoundries represent the technological evolution of this framework—highly automated, integrated facilities that leverage robotic automation and computational analytics to streamline and accelerate synthetic biology research and applications [30]. These facilities are transforming traditional, labor-intensive bioprocess development into a rapid, high-throughput endeavor essential for establishing sustainable alternatives to the petrochemical industry [31] [32].

The core challenge in conventional metabolic engineering lies in the time-consuming and costly nature of the DBTL process. Genetic improvement projects that previously required five to 10 years can now be completed in just six to 12 months through biofoundry automation [32]. This dramatic acceleration is made possible by integrating state-of-the-art technology and advanced instrumentation into an interconnected infrastructure that supports multidisciplinary research and enables rapid design, construction, and testing of genetically reprogrammed organisms for biotechnology applications [32]. As these capabilities continue to evolve, biofoundries are playing an increasingly crucial role in advancing biomanufacturing across pharmaceuticals, sustainable chemicals, and materials production.

The Build Phase: High-Throughput Strain Construction

Automated Genetic Design and Assembly

The build phase in a biofoundry encompasses the automated, high-throughput construction of biological components predefined in the design phase. This process transforms digital genetic designs into physical DNA constructs ready for testing. Biofoundries employ sophisticated software-driven design tools such as j5 DNA assembly design software and Cello for manipulating and assembling DNA sequences and designing new genetic circuits [30]. More recently, open-source solutions like AssemblyTron have emerged as affordable automation packages that integrate j5 DNA assembly design outputs with Opentrons liquid handling systems for automated DNA assembly [30].

A key innovation in modern biofoundries is the development of standardized software libraries such as SynBiopython, created by the Software Working Group of the Global Biofoundry Alliance (GBA) to standardize development efforts in DNA design and assembly across biofoundries [30]. This standardization is critical for enabling reproducible, scalable synthetic biology research. The GBA, established in 2019 and comprising over 30 member biofoundries worldwide, coordinates activities and promotes open-source models that allow new technologies and capabilities to be widely shared across the research community [32] [30].

Robotic Automation Platforms

Biofoundries utilize integrated robotic systems that enable unprecedented throughput in strain construction. For instance, Lesaffre, a private company with substantial biofoundry facilities, has implemented a system comprising more than 100 interconnected programmable instruments supporting eight work cells [32]. This level of automation has increased their screening capacity from approximately 10,000 yeast strains per year to an impressive 20,000 strains per day [32].

The hardware infrastructure typically includes automated platforms for high-throughput colony picking, clone screening, DNA assembly, and transformation. These systems are programmed with specially tailored software and connected to laboratory information management systems (LIMS) and electronic lab notebooks to ensure seamless data tracking and integration throughout the build process [32]. The interconnection of these systems enables a continuous workflow where genetic designs from the computational phase are automatically translated into physical DNA constructs with minimal human intervention.

Table 1: Key Technologies in Automated Strain Construction

| Technology Category | Specific Tools/Platforms | Function | Throughput Capacity |

|---|---|---|---|