AI-Driven DBTL Cycles: Accelerating Synthetic Biology and Biomanufacturing with Machine Learning


Abstract

This article explores the transformative integration of machine learning (ML) into the Design-Build-Test-Learn (DBTL) cycle, a core framework in synthetic biology and metabolic engineering. Aimed at researchers and drug development professionals, it details how ML is reshaping this iterative process into a more predictive and automated workflow. We cover foundational concepts like the paradigm shift to a 'Learn-Design-Build-Test' (LDBT) model, methodological advances combining ML with high-throughput cell-free testing and automated biofoundries, strategies for troubleshooting data and model limitations, and finally, a validation of these approaches through compelling case studies in enzyme and metabolic pathway engineering. The synthesis of these elements points towards a future of self-driving laboratories capable of unprecedented acceleration in biological design.

From DBTL to LDBT: A Paradigm Shift in Biological Engineering

Deconstructing the Traditional Design-Build-Test-Learn (DBTL) Cycle

The Design-Build-Test-Learn (DBTL) cycle represents a cornerstone framework in synthetic biology and metabolic engineering, providing a systematic, iterative methodology for developing and optimizing biological systems. This cyclic process enables researchers to engineer microorganisms for specific functions, such as producing valuable pharmaceuticals, biofuels, or specialty chemicals. The traditional DBTL approach begins with the rational design of biological components, followed by physical assembly and construction of genetic circuits, experimental testing of the constructed systems, and finally, analysis and learning from the generated data to inform the next design iteration [1] [2].

Within the context of modern bioengineering research, particularly in machine learning-driven optimization, the traditional DBTL framework is undergoing significant transformation. The integration of artificial intelligence and automation technologies is reshaping each phase of the cycle, enabling more predictive design, accelerated construction, high-throughput testing, and data-driven learning. This deconstruction examines the fundamental components of the traditional DBTL cycle, its evolution into next-generation paradigms, and the practical methodologies implementing these frameworks for advanced strain optimization and biological system engineering.

The Traditional DBTL Framework

The traditional DBTL cycle operates as a sequential, iterative process with distinct phases, each contributing to the progressive refinement of biological systems.

Phase 1: Design

The Design phase involves specifying the genetic elements and regulatory components required to achieve a desired biological function. This stage relies heavily on domain expertise, prior knowledge, and computational tools to model system behavior. Researchers design DNA constructs by selecting appropriate promoters, ribosomal binding sites (RBS), coding sequences, and terminators, often focusing on modular components that can be interchangeably assembled [1] [2]. In traditional metabolic engineering, this phase typically involves:

- Pathway Identification: Selecting enzymatic steps to convert substrates to desired products

- Component Selection: Choosing genetic parts with known characteristics

- Rational Engineering: Applying biological knowledge to predict beneficial modifications

Phase 2: Build

The Build phase encompasses the physical construction of the designed genetic systems. This involves DNA synthesis, assembly into plasmids or other vectors, and introduction into host organisms. Traditional building methods include:

- Molecular Cloning: Restriction enzyme-based assembly or Gibson assembly

- Plasmid Construction: Assembling genetic circuits in appropriate vectors

- Host Transformation: Introducing constructed plasmids into microbial chassis such as Escherichia coli or Pseudomonas putida [3]

Manual cloning approaches, while effective, often create bottlenecks in throughput and reproducibility, limiting the scale of combinatorial testing possible within a single DBTL cycle [2].

Phase 3: Test

The Test phase involves experimental characterization of the built biological systems to evaluate their performance against predefined metrics. This typically includes:

- Cultivation Experiments: Growing engineered strains under controlled conditions

- Product Quantification: Measuring titers, yields, and productivity of target compounds

- Functional Assays: Assessing whether the genetic circuit operates as intended [1]

In traditional workflows, testing is often low- to medium-throughput, relying on flask cultivations or small-scale bioreactors with analytical techniques like HPLC or mass spectrometry for metabolite detection and quantification.

Phase 4: Learn

The Learn phase focuses on analyzing experimental data to extract insights about system behavior and identify modifications for subsequent cycles. Traditional learning approaches include:

- Statistical Analysis: Identifying correlations between genetic modifications and performance

- Hypothesis Generation: Formulating biological explanations for observed behaviors

- Design Refinement: Determining which components to modify in the next iteration [1]

This phase typically relies heavily on researcher intuition and domain expertise, with limited computational support for predicting the outcomes of proposed modifications.

Evolution Toward Next-Generation DBTL Frameworks

The integration of machine learning and automation technologies has driven significant evolution in the traditional DBTL cycle, leading to more efficient and predictive frameworks.

The Knowledge-Driven DBTL Cycle

Recent advances incorporate upstream in vitro investigations to inform the initial design phase, creating a "knowledge-driven" DBTL approach. This methodology uses cell-free transcription-translation systems to rapidly prototype pathway components before committing to full cellular engineering [1]. As demonstrated in dopamine production optimization in E. coli, this approach involves:

- In Vitro Pathway Characterization: Testing enzyme expression and function in cell lysate systems

- RBS Engineering: Using ribosomal binding site libraries to fine-tune translation efficiency

- High-Throughput Screening: Rapidly assessing numerous genetic variants [1]

This knowledge-driven strategy achieved a 2.6 to 6.6-fold improvement in dopamine production over previous state-of-the-art approaches, highlighting the power of incorporating mechanistic understanding early in the DBTL cycle [1].

The LDBT Paradigm Shift

A more radical transformation proposes reordering the cycle entirely to LDBT (Learn-Design-Build-Test), where machine learning precedes initial design. This paradigm leverages:

- Protein Language Models: Tools like ESM and ProGen trained on evolutionary relationships in protein sequences

- Structure-Based Design: Tools such as MutCompute, which predicts residues suited to a local structural environment, and ProteinMPNN, which designs sequences that fold into a given backbone

- Zero-Shot Prediction: Generating functional protein variants without additional experimental training data [4]

This approach is particularly powerful when combined with cell-free expression systems that enable rapid testing of computationally generated designs, potentially collapsing multiple iterative cycles into a single pass through the LDBT framework [4].
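Masked protein language models such as ESM are often used for zero-shot variant ranking. A common scoring rule compares the model's log-probability of the mutant residue with that of the wild-type residue at the mutated position. The sketch below assumes those per-position probabilities have already been obtained from such a model. The toy probability table, the `mutation_score` helper, and the "A2G" notation are illustrative assumptions, not output from ESM or ProGen.

```python
import math

def mutation_score(log_probs, wt_seq, mutation):
    """Zero-shot score for a point mutation written like 'A2G' (1-based position).

    log_probs[i] maps each amino acid to its log-probability at position i
    (0-based), as a masked protein language model might provide.
    Score = log p(mutant) - log p(wild type); positive means the model
    prefers the mutant residue at that position.
    """
    wt, pos, mt = mutation[0], int(mutation[1:-1]), mutation[-1]
    if wt_seq[pos - 1] != wt:
        raise ValueError(f"{mutation}: residue {pos} is {wt_seq[pos - 1]}, not {wt}")
    column = log_probs[pos - 1]
    return column[mt] - column[wt]

# Hypothetical per-position probabilities for the toy sequence "MAK" (not model output)
toy_probs = [
    {"M": 0.90, "A": 0.05, "G": 0.05},
    {"M": 0.10, "A": 0.30, "G": 0.60},
    {"K": 0.70, "R": 0.30},
]
toy_log_probs = [{aa: math.log(p) for aa, p in col.items()} for col in toy_probs]

candidates = ["A2G", "A2M", "K3R"]
ranked = sorted(candidates, key=lambda m: mutation_score(toy_log_probs, "MAK", m), reverse=True)
print(ranked)
```

With these toy numbers the ranking is A2G, K3R, A2M. In an LDBT workflow, a ranking like this would decide which variants go forward to cell-free testing.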

Automated and Semi-Automated DBTL Implementations

The integration of automation throughout the DBTL cycle significantly enhances throughput and reproducibility. Key advancements include:

- Biofoundries: Facilities integrating laboratory automation for high-throughput genetic engineering

- Liquid Handling Robots: Enabling parallel assembly and testing of numerous variants

- Microfluidics: Allowing ultra-high-throughput screening of enzymatic reactions [4] [5]

In medium optimization for flaviolin production in Pseudomonas putida, a semi-automated DBTL pipeline incorporating active learning identified sodium chloride as a critical, previously overlooked factor, leading to 60-70% increases in titer and a 350% improvement in process yield [6].

Quantitative Analysis of DBTL Applications

Table 1: Performance Metrics from DBTL Applications in Metabolic Engineering

| Application | Host Organism | Target Compound | Performance Improvement | Key DBTL Enhancement |
|---|---|---|---|---|
| Dopamine production [1] | Escherichia coli | Dopamine | 69.03 ± 1.2 mg/L (2.6 to 6.6-fold increase) | Knowledge-driven DBTL with RBS engineering |
| Flaviolin production [6] | Pseudomonas putida | Flaviolin | 60-70% titer increase, 350% increase in process yield | Machine learning-led media optimization |
| Biosensor refactoring [5] | Escherichia coli | Biosensor performance | Improved performance and compatibility | Automated testing and characterization |
| 3-HB production [4] | Clostridium | 3-Hydroxybutyrate | 20-fold improvement | iPROBE pathway prototyping |

Table 2: Comparison of Traditional and Enhanced DBTL Approaches

| DBTL Phase | Traditional Approach | Enhanced Approach | Key Technologies |
|---|---|---|---|
| Design | Rational design based on literature | Predictive computational models | Machine learning, protein language models, kinetic modeling [4] [7] |
| Build | Manual molecular cloning | Automated DNA assembly | Biofoundries, liquid handling robots, high-throughput cloning [2] [5] |
| Test | Flask-scale cultivations | High-throughput screening | Cell-free systems, microfluidics, automated analytics [1] [4] |
| Learn | Statistical analysis, researcher intuition | Machine learning, data-driven modeling | Active learning, explainable AI, automated recommendation algorithms [7] [6] |

Experimental Protocols for DBTL Implementation

Protocol 1: Knowledge-Driven DBTL for Metabolic Pathway Optimization

This protocol outlines the methodology for implementing a knowledge-driven DBTL cycle with upstream in vitro investigation, as applied to dopamine production in E. coli [1].

Materials

- Bacterial Strains: Production host (e.g., E. coli FUS4.T2) and cloning strain (e.g., E. coli DH5α)

- Plasmids: pET system for gene storage, pJNTN for crude cell lysate system and library construction

- Media: 2xTY medium, SOC medium, minimal medium with appropriate supplements

- Buffers: Phosphate buffer (50 mM, pH 7), reaction buffer for crude cell lysate system

- Enzymes: 4-hydroxyphenylacetate 3-monooxygenase (HpaBC), L-DOPA decarboxylase (Ddc)

Methods

In Vitro Pathway Characterization:

- Prepare crude cell lysate from production host

- Test enzyme expression levels in cell-free system

- Measure substrate conversion rates for different enzyme ratios

- Identify optimal expression balance for maximal flux

In Vivo Translation:

- Design RBS library based on in vitro results

- Vary Shine-Dalgarno sequence while maintaining secondary structure

- Construct plasmid libraries with different RBS strengths

- Transform into production host
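One way to build an RBS library that varies only the Shine-Dalgarno core is to order a degenerate sequence and enumerate what it encodes. The sketch below expands IUPAC codes into concrete variants. It then orders them by identity to the AGGAGG consensus, which is only a crude stand-in for translation strength.

A few assumptions apply:
- The degenerate core "AGGRGN" and the helper names are hypothetical.
- The secondary-structure check described above is not modeled, because it would require an RNA folding tool.

```python
from itertools import product

# IUPAC nucleotide codes used in degenerate oligos
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT",
    "K": "GT", "M": "AC", "N": "ACGT",
}

def expand_degenerate(seq):
    """Return every concrete sequence encoded by a degenerate IUPAC sequence."""
    pools = [IUPAC[base] for base in seq.upper()]
    return ["".join(combo) for combo in product(*pools)]

def consensus_matches(seq, consensus="AGGAGG"):
    """Crude strength proxy: positions identical to the canonical E. coli SD core."""
    return sum(a == b for a, b in zip(seq, consensus))

# Hypothetical degenerate SD core; a real design would also check mRNA folding
library = expand_degenerate("AGGRGN")
library.sort(key=consensus_matches, reverse=True)  # stable: ties keep input order
print(len(library), library[:3])
```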

High-Throughput Screening:

- Cultivate variants in 96-well format

- Induce expression with IPTG

- Measure product formation via HPLC or colorimetric assays

- Select top performers for further analysis

Learning and Iteration:

- Analyze correlation between RBS strength and production

- Identify potential bottlenecks in pathway

- Design next-generation libraries targeting identified limitations

Protocol 2: Machine Learning-Led Media Optimization

This protocol details the semi-automated DBTL process for media optimization, as implemented for flaviolin production in P. putida [6].

Materials

- Bacterial Strain: Pseudomonas putida KT2440

- Media Components: Carbon sources, nitrogen sources, salts, trace elements

- Automation Equipment: Liquid handling robot, plate readers, automated bioreactors

- Analytical Instruments: HPLC, spectrophotometer

Methods

Initial Design of Experiments:

- Define media component space using fractional factorial design

- Select initial set of conditions covering design space

- Program liquid handling robot for media preparation
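A two-level fractional factorial design runs a full factorial on a few base factors. It then defines the remaining factors as products of base columns, trading run count for aliasing. The sketch below builds a 2^(5-2) design: 5 components in 8 runs, with D = AB and E = AC. The component names and low/high levels are hypothetical.

Because this is a resolution III design, main effects are aliased with two-factor interactions. That aliasing is one reason to follow such a screen with model-guided rounds.

```python
from itertools import product
from math import prod

def fractional_factorial(n_base, generators):
    """Two-level fractional factorial design in coded units (-1 / +1).

    n_base: number of base factors run as a full 2**n_base factorial.
    generators: for each extra factor, the indices of the base factors whose
    product defines its column (e.g. (0, 1) means D = A*B).
    """
    runs = []
    for base in product((-1, 1), repeat=n_base):
        extra = [prod(base[i] for i in gen) for gen in generators]
        runs.append(list(base) + extra)
    return runs

# Hypothetical example: 5 media components screened in 8 runs
components = ["glucose", "NH4Cl", "NaCl", "MgSO4", "KH2PO4"]
low_high = {  # hypothetical low/high levels, g/L
    "glucose": (2.0, 10.0), "NH4Cl": (0.5, 2.0), "NaCl": (0.5, 10.0),
    "MgSO4": (0.1, 0.5), "KH2PO4": (0.5, 3.0),
}
design = fractional_factorial(3, generators=[(0, 1), (0, 2)])  # D = AB, E = AC

# Translate coded levels into concentrations for the liquid handler
plate_layout = [
    {name: low_high[name][0] if level < 0 else low_high[name][1]
     for name, level in zip(components, run)}
    for run in design
]
print(len(plate_layout), plate_layout[0])
```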

Automated Build Phase:

- Prepare media variants in 96-well format using automation

- Inoculate with standardized preculture

- Transfer plates to controlled incubation system

High-Throughput Testing:

- Monitor growth curves via automated plate reading

- Sample at appropriate time points for product quantification

- Analyze flaviolin concentration using spectrophotometric methods

Active Learning Cycle:

- Train machine learning models on collected data

- Use explainable AI techniques to identify key components

- Select next media conditions predicted to improve performance

- Iterate through additional DBTL cycles until performance plateaus
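The Automated Recommendation Tool used in the flaviolin work builds a Bayesian ensemble of models. The sketch below instead swaps in a Gaussian process (GP) with an upper-confidence-bound (UCB) acquisition rule, a standard Bayesian-optimization pairing, to show the shape of the train-recommend-iterate loop above.

The following are assumptions:
- `run_plate` is a made-up objective standing in for a real plate experiment.
- The length scale, `kappa`, and batch size are arbitrary choices.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=0.3):
    """Squared-exponential covariance (unit prior variance) between rows of a and b."""
    sq_dist = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-0.5 * sq_dist / length_scale**2)

def gp_posterior(x_train, y_train, x_query, noise=1e-2):
    """GP posterior mean and standard deviation at x_query (targets standardized internally)."""
    y_mean, y_scale = y_train.mean(), y_train.std() or 1.0
    y_norm = (y_train - y_mean) / y_scale
    chol = np.linalg.cholesky(rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train)))
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_norm))  # K^-1 y_norm
    k_qt = rbf_kernel(x_query, x_train)
    v = np.linalg.solve(chol, k_qt.T)
    var = np.clip(1.0 - (v**2).sum(axis=0), 1e-12, None)
    return y_mean + y_scale * (k_qt @ alpha), y_scale * np.sqrt(var)

def recommend(x_train, y_train, candidates, batch_size=4, kappa=2.0):
    """Return the untested candidates with the highest UCB = mean + kappa * std."""
    mean, std = gp_posterior(x_train, y_train, candidates)
    order = np.argsort(mean + kappa * std)[::-1]
    tested = {tuple(row) for row in np.round(x_train, 6)}
    fresh = [i for i in order if tuple(np.round(candidates[i], 6)) not in tested]
    return candidates[fresh[:batch_size]]

# Hypothetical stand-in for a plate experiment: titer vs two coded media components
def run_plate(x):
    return np.exp(-((x[:, 0] - 0.7) ** 2 + (x[:, 1] - 0.3) ** 2) / 0.05)

rng = np.random.default_rng(0)
levels = np.linspace(0, 1, 21)
grid = np.array([[a, b] for a in levels for b in levels])
x_obs = grid[rng.choice(len(grid), size=6, replace=False)]
y_obs = run_plate(x_obs)
for cycle in range(3):  # each pass = one Learn -> Design -> Build/Test round
    batch = recommend(x_obs, y_obs, grid)
    x_obs = np.vstack([x_obs, batch])
    y_obs = np.concatenate([y_obs, run_plate(batch)])
print("best observed:", x_obs[y_obs.argmax()], round(float(y_obs.max()), 3))
```

Raising `kappa` favors exploring uncertain regions; lowering it concentrates new media designs near the current best.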

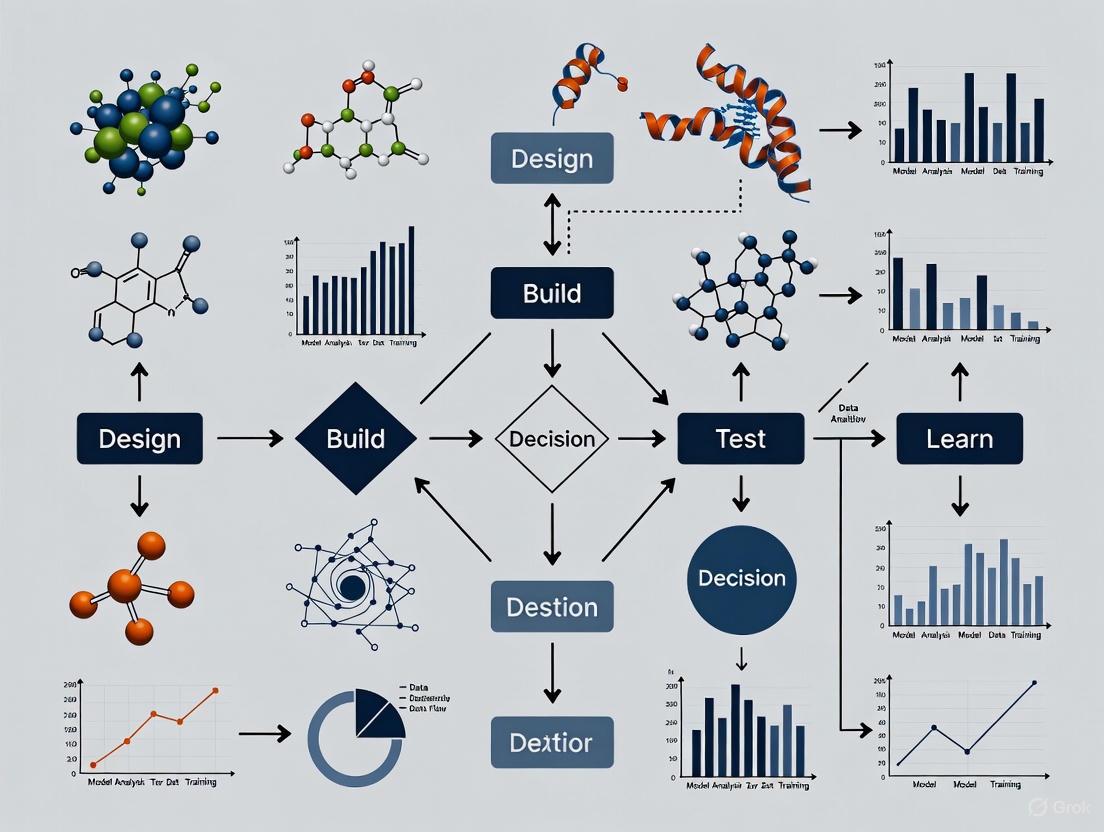

Workflow Visualization

Figure: DBTL Cycle Evolution: Traditional vs. Enhanced Approaches

Figure: LDBT Paradigm: Machine Learning-First Approach

Essential Research Reagent Solutions

Table 3: Key Research Reagents and Materials for DBTL Implementation

| Reagent/Material | Function | Application Example |
|---|---|---|
| Cell-Free Expression Systems | Rapid in vitro prototyping of pathways without cellular constraints | Testing enzyme expression levels before in vivo implementation [1] [4] |
| RBS Library Variants | Fine-tuning translation initiation rates for pathway balancing | Optimizing relative expression of dopamine pathway enzymes [1] |
| Automated DNA Assembly Kits | High-throughput construction of genetic variants | Building large promoter-RBS-gene combinatorial libraries [2] [5] |
| Specialized Production Media | Supporting optimal titers, rates, and yields | Machine learning-optimized media for flaviolin production [6] |
| Analytical Standards | Quantifying pathway intermediates and products | HPLC calibration for dopamine and L-DOPA quantification [1] |

The deconstruction of the traditional Design-Build-Test-Learn cycle reveals a dynamic framework in transition, moving from sequential, empirically driven iterations toward integrated, predictive workflows enhanced by machine learning and automation. The knowledge-driven DBTL approach demonstrates how upstream in vitro investigation can accelerate strain development, while the LDBT paradigm represents a fundamental rethinking that places machine learning at the forefront of biological design.

For researchers pursuing machine learning-driven optimization of DBTL cycles, key considerations include the selection of appropriate model organisms, implementation of high-throughput building and testing methodologies, application of explainable AI techniques for extracting biological insights, and development of closed-loop experimental systems that seamlessly integrate computational design with physical experimentation. As these technologies mature, the DBTL cycle promises to evolve from a sequential, iterative process toward a more parallelized, predictive engineering discipline capable of addressing complex biological design challenges with unprecedented efficiency and success.

The Design-Build-Test-Learn (DBTL) cycle is a cornerstone methodology in synthetic biology and biological engineering, providing a systematic, iterative framework for developing and optimizing biological systems [8]. This cyclical process begins with Design, where researchers define objectives and create a plan for the genetic system based on a specific hypothesis or prior knowledge. This is followed by the Build phase, where theoretical designs are translated into physical reality through molecular cloning and transformation into host organisms. The Test phase involves quantitative characterization of the built system through various assays. Finally, in the Learn phase, data from testing is analyzed to gain insights that inform the next Design phase, creating a continuous loop of improvement [8]. While this framework has proven valuable, the traditional implementation of DBTL cycles faces significant bottlenecks that limit the pace of biological innovation, particularly in fields like drug development where speed is critical.

Quantitative Analysis of DBTL Bottlenecks

The resource-intensive nature of classic DBTL cycles becomes evident when examining the experimental requirements for optimizing even relatively simple biological systems. The table below summarizes key quantitative challenges identified from recent studies.

Table 1: Quantitative Bottlenecks in Classic DBTL Cycles

| Bottleneck Category | Traditional Approach Requirements | Example from Literature | Impact |
|---|---|---|---|
| Combinatorial Explosion in Media Optimization | Testing 10 components at 5 levels requires 5¹⁰ (9,765,625) experiments for full combinatorial testing [9] | Flaviolin production in Pseudomonas putida [9] | Makes comprehensive optimization practically impossible |
| Strain Construction & Cloning Time | 2-3 weeks for DNA synthesis and delivery [10] | Cell-free biosensor development [10] | Significantly slows iteration speed |
| Pathway Optimization Complexity | Multiple plasmid combinations and concentration ratios requiring extensive screening [10] | Arsenic biosensor development with sense/reporter plasmids [10] | Exponential increase in experimental conditions |
| Limited Predictive Capability | Initial cycles often start without prior knowledge, requiring multiple iterations [1] | Dopamine production in E. coli [1] | Trial-and-error approach consumes resources |

The challenges extend beyond mere numbers to fundamental methodological limitations. Traditional approaches often rely on One-Factor-at-a-Time (OFAT) experimentation, which fails to capture interactions between components and can lead to suboptimal solutions [9]. Furthermore, the Build and Test phases are particularly slow, relying on time-consuming processes such as molecular cloning, cellular transformation, and cell culturing that can take days or weeks [11] [12]. This slow iteration speed means that complex biological engineering projects may require months or years to complete just a handful of DBTL cycles, dramatically slowing progress in research and development.
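Two points above can be checked in a few lines: the size of the combinatorial space, and the way OFAT can miss interactions. The run counts follow directly from the definitions. The two-component response function is a made-up example in which each component alone lowers titer but the pair raises it.

```python
from itertools import product

def full_factorial_runs(n_components, n_levels):
    """Number of runs needed to test every combination of levels."""
    return n_levels ** n_components

def ofat_runs(n_components, n_levels):
    """One-factor-at-a-time: one baseline plus (levels - 1) new runs per component."""
    return 1 + n_components * (n_levels - 1)

print(full_factorial_runs(10, 5), ofat_runs(10, 5))  # 9765625 41

def toy_titer(a, b):
    """Hypothetical response where components A and B only help in combination."""
    return 1 - a - b + 3 * a * b

# OFAT varies each component away from the baseline separately...
baseline = (0, 0)
ofat_best = max([baseline, (1, 0), (0, 1)], key=lambda p: toy_titer(*p))
# ...while the full grid also tests the combination
grid_best = max(product((0, 1), repeat=2), key=lambda p: toy_titer(*p))
print(ofat_best, grid_best)  # (0, 0) (1, 1)
```

OFAT needs only 41 runs but concludes that neither component helps. Only a design that tests the combination finds the better medium.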

Detailed Experimental Analysis: A Case Study in Media Optimization

Protocol: Machine Learning-Led Semi-Automated Media Optimization

Recent research demonstrates a protocol for overcoming DBTL bottlenecks in media optimization for secondary metabolite production [9]. The following detailed methodology highlights both the traditional limitations and modern solutions:

Experimental System Setup: Engineered Pseudomonas putida KT2440 producing flaviolin was used as the model system. Flaviolin serves as a proxy for malonyl-CoA, a precursor to polyketides and fatty acids with applications in pharmaceutical development [9].

Media Component Selection: Fifteen media components were selected for optimization, with 12-13 variable components and 2-3 fixed components. A full factorial design over just 10 of these components at 5 levels each would already require 5¹⁰ (9,765,625) combinations, which makes exhaustive testing impractical [9].

Semi-Automated Pipeline Implementation:

- Automated Media Preparation: A liquid handler combined stock solutions to create media with desired concentrations for each design, dispensing them in triplicate/quadruplicate in 48-well plates.

- Inoculation and Cultivation: Plates were inoculated with engineered P. putida and cultivated in an automated BioLector platform for 48 hours with tight control of O₂ transfer, shake speed, and humidity.

- High-Throughput Analytics: Flaviolin in culture supernatant was measured using absorbance at 340 nm as a proxy, enabling rapid phenotype acquisition.

- Data Management: Production data and media designs were stored in the Experiment Data Depot (EDD) for machine learning access.

- Active Learning Integration: The Automated Recommendation Tool (ART) collected data and recommended improved media designs, which were automatically translated to liquid handler instructions via a Jupyter notebook [9].
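Translating a recommended medium into liquid-handler transfers is essentially a dilution calculation (C_stock × V_stock = C_target × V_well) followed by a water top-up. The sketch below is hypothetical throughout: the stock concentrations, the 1 mL working volume, and the `transfer_volumes` helper are assumptions. It also ignores inoculum volume, and it does not reproduce the study's Jupyter notebook or any Biomek instruction format.

```python
def transfer_volumes(target_conc, stock_conc, well_volume_ul):
    """Volumes (uL) of each stock plus water needed to hit target concentrations.

    Uses C_stock * V_stock = C_target * V_well for every component; target and
    stock concentrations of a component must be in the same units.
    """
    volumes = {}
    for name, target in target_conc.items():
        volumes[name] = target * well_volume_ul / stock_conc[name]
    water = well_volume_ul - sum(volumes.values())
    if water < 0:
        raise ValueError("stocks too dilute: components exceed the well volume")
    volumes["water"] = water
    return volumes

# Hypothetical stock solutions (g/L) and one recommended medium design (g/L)
stocks = {"glucose": 200.0, "NaCl": 100.0, "NH4Cl": 50.0}
design = {"glucose": 10.0, "NaCl": 5.0, "NH4Cl": 1.0}
for component, ul in transfer_volumes(design, stocks, well_volume_ul=1000).items():
    print(f"{component}: {ul:.1f} uL")
```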

Key Reagents and Equipment:

- Pseudomonas putida KT2440 engineered for flaviolin production

- 15 media components (including NaCl, carbon sources, nitrogen sources, trace elements)

- Automated liquid handler (e.g., Beckman Coulter Biomek)

- BioLector automated cultivation system

- Microplate reader for Abs₃₄₀ measurements

- Experiment Data Depot (EDD) for data management

- Automated Recommendation Tool (ART) for active learning

Table 2: Research Reagent Solutions for Media Optimization Studies

| Reagent/Equipment | Function/Application | Specific Example |
|---|---|---|
| Automated Liquid Handler | Precise dispensing of media components and reagents | Beckman Coulter Biomek series [9] |
| BioLector Microbioreactor | Automated cultivation with controlled parameters (O₂, humidity, temperature) | m2p-labs BioLector [9] |
| Experiment Data Depot (EDD) | Centralized data management for DBTL cycles | EDD database system [9] |
| Cell-Free Protein Synthesis System | Rapid testing without cellular constraints | E. coli and HeLa-based CFPS systems [12] |
| Active Learning Algorithms | Intelligent selection of next experiments to run | Automated Recommendation Tool (ART) [9] |

Results and Workflow Visualization

The implementation of this semi-automated, machine learning-led approach yielded significant improvements over traditional methods. In three different optimization campaigns for flaviolin production, the system achieved 60% and 70% increases in titer, and a 350% increase in process yield [9]. Surprisingly, explainable AI techniques identified common salt (NaCl) as the most important component influencing production, with optimal concentrations near the tolerance limits of P. putida – a counterintuitive finding that might have been missed with traditional OFAT approaches [9].
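The specific explainable-AI method used in the study is not described here. The sketch below therefore swaps in permutation feature importance, one common model-agnostic technique: shuffle one input column and measure how much the model's error grows. The data are synthetic and deliberately built so that NaCl dominates. The component names, the linear model, and the helper functions are illustrative assumptions, not the study's model or results.

```python
import numpy as np

def fit_linear(x, y):
    """Ordinary least squares with an intercept; returns a predict function."""
    design = np.column_stack([np.ones(len(x)), x])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return lambda x_new: np.column_stack([np.ones(len(x_new)), x_new]) @ coef

def permutation_importance(predict, x, y, n_repeats=20, seed=0):
    """Mean increase in squared error when each feature column is shuffled."""
    rng = np.random.default_rng(seed)
    base_mse = np.mean((predict(x) - y) ** 2)
    scores = np.zeros(x.shape[1])
    for j in range(x.shape[1]):
        for _ in range(n_repeats):
            x_perm = x.copy()
            x_perm[:, j] = rng.permutation(x_perm[:, j])
            scores[j] += np.mean((predict(x_perm) - y) ** 2) - base_mse
    return scores / n_repeats

# Synthetic data (not from the study): NaCl is built in as the dominant driver
rng = np.random.default_rng(1)
names = ["glucose", "NH4Cl", "NaCl", "MgSO4"]
x = rng.uniform(0, 1, size=(60, 4))
y = 0.2 * x[:, 0] + 0.1 * x[:, 1] + 1.5 * x[:, 2] + rng.normal(0, 0.05, 60)
importance = permutation_importance(fit_linear(x, y), x, y)
for name, score in sorted(zip(names, importance), key=lambda t: -t[1]):
    print(f"{name}: {score:.3f}")
```

In practice the same procedure would be applied to whatever nonlinear model drives the recommendations. A linear model would miss an optimum near a tolerance limit.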

The workflow demonstrates how integrating automation and machine learning addresses classic DBTL bottlenecks:

Diagram 1: ML-accelerated DBTL workflow for media optimization. This integrated approach demonstrates how automation and machine learning address traditional bottlenecks at each stage of the cycle.

Emerging Solutions: Machine Learning and Automation

The Shift to LDBT: Learn-Design-Build-Test

A transformative paradigm emerging in the field is the LDBT cycle, which repositions "Learn" to the beginning of the process [11] [13]. This approach leverages machine learning models pre-trained on vast biological datasets to make zero-shot predictions about protein structures, functions, and optimal sequences before any physical experiments are conducted [11]. Protein language models such as ESM and ProGen, trained on evolutionary relationships across millions of protein sequences, can predict beneficial mutations and infer protein functions without additional training [11]. Similarly, structure-based tools such as ProteinMPNN, which designs sequences for a target backbone, and AlphaFold, which predicts the structures of candidate designs, have substantially raised the success rate of computational protein design [11].

Protocol: Fully Automated DBTL for Cell-Free Protein Synthesis

A recent breakthrough in automated DBTL implementation demonstrates the optimization of colicin M and E1 production in cell-free systems [12]:

Design Phase Automation: All Python scripts for experimental design were generated by ChatGPT-4 from non-specialist prompts without manual code editing, dramatically reducing the coding expertise and time traditionally required.

Build Phase Implementation: A fully automated liquid handling system prepares cell-free reactions using:

- E. coli-based CFPS system derived from BL21 Star (DE3) strains

- HeLa-based CFPS system for eukaryotic expression capability

- DNA templates for colicin M (29 kDa) and colicin E1 (58 kDa)

- Energy solutions (creatine phosphate-based energy regeneration)

- Amino acid mixtures and nucleotide triphosphates

Test Phase Configuration:

- Microplate reader with fluorescence and absorbance detection

- Real-time protein synthesis monitoring using GFP fusions

- Endpoint antimicrobial activity assays against sensitive E. coli strains

- Quantification of soluble protein yield via SDS-PAGE and densitometry

Learn Phase with Active Learning:

- Cluster Margin (CM) sampling algorithm selects experiments that are both uncertain and diverse

- Gaussian process models predict protein yield based on CFPS composition

- Automated retraining after each batch of experiments

- Integration with Galaxy platform for FAIR (Findable, Accessible, Interoperable, Reusable) data compliance [12]

This automated platform achieved a 2- to 9-fold increase in colicin yield in just four DBTL cycles, demonstrating the dramatic acceleration possible through integrated automation and machine learning [12].
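The core idea behind Cluster Margin sampling is to choose a batch that is both uncertain and diverse. It can be sketched in three steps:
1. Keep the most uncertain candidates.
2. Cluster them.
3. Take the most uncertain member of each cluster.

This is a simplified stand-in that uses k-means. It differs in detail from the published Cluster-Margin algorithm, which clusters with hierarchical agglomerative clustering and scores classifier margins. The candidate compositions and uncertainty values are random placeholders for, for example, GP posterior standard deviations.

```python
import numpy as np

def kmeans(points, k, n_iter=50, seed=0):
    """Plain k-means (Lloyd's algorithm); returns a cluster label per point."""
    rng = np.random.default_rng(seed)
    centers = points[rng.choice(len(points), size=k, replace=False)]
    for _ in range(n_iter):
        dists = ((points[:, None, :] - centers[None, :, :]) ** 2).sum(axis=-1)
        labels = dists.argmin(axis=1)
        for c in range(k):
            if np.any(labels == c):  # keep the old center if a cluster empties
                centers[c] = points[labels == c].mean(axis=0)
    return labels

def uncertain_and_diverse(candidates, uncertainty, batch_size=4, pool_factor=5):
    """Select up to batch_size candidates that are both uncertain and spread out.

    1. keep the batch_size * pool_factor most uncertain candidates;
    2. group that pool into batch_size clusters;
    3. take the most uncertain candidate from each cluster.
    """
    pool = np.argsort(uncertainty)[::-1][: batch_size * pool_factor]
    labels = kmeans(candidates[pool], batch_size)
    chosen = []
    for c in np.unique(labels):
        members = pool[labels == c]
        chosen.append(members[np.argmax(uncertainty[members])])
    return np.array(chosen)

# Placeholder CFPS compositions (3 coded components) and per-candidate uncertainty
rng = np.random.default_rng(2)
candidates = rng.uniform(0, 1, size=(200, 3))
uncertainty = rng.uniform(0, 1, size=200)  # e.g. GP posterior standard deviations
batch = uncertain_and_diverse(candidates, uncertainty)
print(batch, uncertainty[batch].round(2))
```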

Knowledge-Driven DBTL for Metabolic Engineering

An alternative approach to reducing DBTL bottlenecks is the knowledge-driven DBTL cycle, which incorporates upstream in vitro investigations to inform the initial design phase [1]. In developing an optimized dopamine production strain in E. coli, researchers first used cell-free transcription-translation systems to test different relative enzyme expression levels before moving to in vivo engineering [1]. This strategy provided mechanistic insights into pathway bottlenecks and informed rational RBS engineering, ultimately achieving 2.6 to 6.6-fold improvements over state-of-the-art dopamine production strains [1].

Diagram 2: Classic DBTL bottlenecks versus modern solutions. The traditional cycle (red) suffers from limited knowledge and manual processes, while modern approaches (green) leverage machine learning and automation to accelerate each phase.

The classic DBTL cycle, while methodologically sound, faces fundamental limitations in its traditional implementation. The combinatorial explosion of biological design space, time-consuming manual processes in building and testing phases, and limited predictive capability collectively create significant bottlenecks that slow research progress and consume substantial resources. However, emerging methodologies demonstrate that these limitations can be effectively addressed through integrated approaches combining machine learning, automation, and strategic experimental design. The implementation of active learning algorithms, automated liquid handling, high-throughput analytics, and computational predictive models can dramatically accelerate DBTL cycles, reducing optimization timelines from months to weeks while improving overall outcomes. For researchers and drug development professionals, adopting these advanced DBTL methodologies represents a critical pathway to accelerating biological innovation and overcoming traditional constraints in synthetic biology and metabolic engineering.

Introducing the Machine Learning Revolution in Synthetic Biology

The convergence of machine learning (ML) and synthetic biology is revolutionizing how we understand, design, and engineer biological systems. This integration is transforming the traditional Design-Build-Test-Learn (DBTL) cycle from a slow, iterative process into a rapid, predictive, and automated pipeline. ML algorithms are now capable of navigating the vast complexity of biological design spaces, making accurate predictions about system behavior, and optimizing genetic constructs with minimal human intervention. This paradigm shift is accelerating the development of novel therapeutics, sustainable biomaterials, and efficient bioprocesses, framing synthetic biology not just as an engineering discipline but as an information science. This article details the specific applications and experimental protocols underpinning this machine learning revolution, providing researchers with the tools to implement these advanced techniques in their own automated DBTL cycle optimization research.

ML Applications Across the Automated DBTL Cycle

The application of machine learning is enhancing every stage of the DBTL cycle, creating a more integrated and efficient workflow for bioengineering.

Design: Predictive Modeling and De Novo Generation

In the Design phase, ML models are used to predict the function of genetic parts and systems before physical construction, and can even generate entirely new biological sequences.

- Genomic Foundation Models: Large-scale AI models, such as Evo 2, are trained on DNA sequences from over 100,000 species. This provides a "generalist understanding of the tree of life" that can be fine-tuned for tasks like predicting the effects of genetic mutations or designing new genomes, including ones as long as simple bacterial genomes [14].

- Protein and Molecule Design: Deep learning models enable de novo protein design and the creation of new chemical entities for drug discovery. For instance, automated workflows can generate and computationally profile billions of novel molecular structures to identify promising drug candidates in a matter of days [15] [16].

- Biological Circuit Design: AI-driven tools contribute to increased efficiency and precision in designing complex biological circuits, such as those used in DNA-based neural networks [15].

Build: Optimizing Bioprocesses

In the Build phase, ML shifts from digital design to optimizing the physical construction of biological systems, particularly in biomanufacturing.

- Bioprocess Optimization: ML algorithms, including artificial neural networks (ANNs), are used to optimize critical bioprocesses like Chinese Hamster Ovary (CHO) cell cultivation. One study demonstrated that an ANN could suggest cultivation conditions that increased final monoclonal antibody titers by up to 48% [17].

- Supply Chain and Manufacturing: AI and ML enhance biomanufacturing through predictive maintenance, real-time demand forecasting, and inventory optimization, minimizing waste and ensuring timely delivery of products [18].

Test: Enhanced Analysis and Monitoring

The Test phase is augmented by ML's ability to analyze complex datasets and enable real-time monitoring.

- Clinical Trial Optimization: In biopharma, ML models analyze Electronic Health Records (EHRs) to streamline patient recruitment for clinical trials, predict patient dropouts, and identify diverse participant cohorts. This can cut trial duration by up to 10% [18].

- Real-Time Bioprocess Monitoring: Digital twins and soft-sensing technologies allow for real-time control and increased operational precision in complex bioprocess environments, such as fermentation in biorefineries [19].

Learn: Creating Molecular Memory

The Learn phase is where the most unconventional work is happening: ML principles are being embedded into molecular systems themselves.

- Autonomous Molecular Learning: Groundbreaking research has demonstrated a DNA-based neural network that can autonomously perform supervised learning in vitro. The system uses DNA strand displacement to learn from molecular training examples, forming stable molecular memories that it uses to classify new patterns without external computation [20] [21].
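The sketch below is a purely computational analogue of the supervised-learning behavior described above. It stores class "memories" by summing labeled training patterns, then classifies a new 100-bit pattern by a winner-take-all comparison of weighted sums. It does not model DNA strand displacement, and the published system's learning rule may differ. The prototypes, noise level, and helper names are hypothetical.

```python
import random

N_BITS = 100  # pattern size reported for the DNA system

def train(examples):
    """Learning step: sum the training patterns of each class into a weight vector.

    examples: list of (pattern, label), each pattern a list of 0/1 bits.
    The summed vectors play the role of the stored molecular memories.
    """
    memory = {}
    for pattern, label in examples:
        weights = memory.setdefault(label, [0] * N_BITS)
        for i, bit in enumerate(pattern):
            weights[i] += bit
    return memory

def classify(memory, pattern):
    """Winner-take-all: the class whose weights give the largest weighted sum wins."""
    scores = {label: sum(w * b for w, b in zip(weights, pattern))
              for label, weights in memory.items()}
    return max(scores, key=scores.get)

def noisy(prototype, flip_prob, rng):
    """Copy a prototype pattern, flipping each bit with probability flip_prob."""
    return [b ^ (rng.random() < flip_prob) for b in prototype]

rng = random.Random(0)
proto_a = [1] * 50 + [0] * 50
proto_b = [0] * 50 + [1] * 50
examples = ([(noisy(proto_a, 0.1, rng), "A") for _ in range(5)]
            + [(noisy(proto_b, 0.1, rng), "B") for _ in range(5)])
memory = train(examples)
print(classify(memory, noisy(proto_a, 0.2, rng)), classify(memory, noisy(proto_b, 0.2, rng)))
```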

Table 1: Quantitative Impact of Machine Learning on Key Biotechnological Applications

| Application Area | Key Metric | Impact of ML | Source |
|---|---|---|---|
| Drug Discovery | Proportion of new drugs discovered with AI | Estimated 30% by 2025 | [18] |
| CHO Cell Bioprocessing | Increase in final mAb titer | Up to 48% | [17] |
| Clinical Trials | Reduction in trial duration | Up to 10% | [18] |
| DNA Neural Networks | Pattern classification complexity | 100-bit, two-class system | [20] |

Experimental Protocols & Methodologies

Protocol: Optimizing a CHO Cell Cultivation Process with an Artificial Neural Network

This protocol details the use of an ANN to improve cell growth and recombinant protein yield in an industrial CHO cell process [17].

1. Objective: To increase monoclonal antibody (mAb) titer in a CHO cell cultivation process by using an ANN to identify optimized cultivation conditions.

2. Reagents and Equipment:

- CHO cell line expressing the target mAb

- Standard cell culture media and bioreactors

- Analytics for measuring cell density and viability (e.g., an automated cell counter) and mAb titer (e.g., HPLC, ELISA)

- Computing environment for machine learning (e.g., Python with TensorFlow/PyTorch)

3. Procedure:

- Step 1: Data Collection. Compile a comprehensive dataset from historical and newly generated CHO cell cultivation runs. Key parameters include:

- Input variables: Temperature, pH, dissolved oxygen, nutrient concentrations, feeding schedules.

- Output variables: Final cell density, viability, and mAb titer.

- Step 2: Model Selection and Training.

- Choose an ANN architecture suitable for regression analysis.

- Split the dataset into training (e.g., 80%) and validation (e.g., 20%) sets.

- Train the ANN to predict the output variables (cell growth and mAb titer) based on the input process parameters.

- Step 3: In-Silico Optimization.

- Use the trained ANN to run simulations and predict the performance outcome of thousands of untested condition combinations.

- Identify the set of input parameters that the model predicts will yield the highest mAb titer.

- Step 4: Experimental Validation.

- Perform new cultivation runs using the ML-suggested optimal conditions.

- Measure the final mAb titer and cell growth and compare them to the model's predictions and the performance of the previous standard protocol.

4. Expected Outcome: The validation experiments should confirm that the ML-optimized process leads to a statistically significant increase in final mAb titer compared to the pre-optimization process [17].
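Steps 2 and 3 can be illustrated with a deliberately small, plain-Python sketch. It is not the model from [17]: the two inputs (temperature and pH), the synthetic response surface `true_titer`, the eight-unit hidden layer, the learning rate, and the grid of candidate conditions are all hypothetical choices made for illustration. A production workflow would use a framework such as TensorFlow or PyTorch, many more process variables, and real run data.

```python
import math
import random

random.seed(0)


def true_titer(temp, ph):
    # HYPOTHETICAL response surface standing in for real bioreactor data:
    # titer peaks near 36.5 degC and pH 7.0. Illustrative numbers only.
    return 5.0 * math.exp(-((temp - 36.5) ** 2) / 2.0 - ((ph - 7.0) ** 2) / 0.08)


def scale(temp, ph):
    # Map raw inputs to roughly [-1, 1] so gradient descent is well behaved.
    return [(temp - 35.0) / 3.0, (ph - 7.0) / 0.4]


# Step 1 (synthetic): "historical runs" with measurement noise.
runs = []
for _ in range(200):
    t, p = random.uniform(32.0, 38.0), random.uniform(6.6, 7.4)
    runs.append((scale(t, p), true_titer(t, p) + random.gauss(0.0, 0.1)))
split = int(0.8 * len(runs))  # 80/20 train/validation split
train, valid = runs[:split], runs[split:]

# Step 2: one-hidden-layer ANN (tanh hidden units, linear output) trained by SGD.
H = 8
w1 = [[random.gauss(0.0, 0.5) for _ in range(2)] for _ in range(H)]
b1 = [0.0] * H
w2 = [random.gauss(0.0, 0.5) for _ in range(H)]
b2 = 0.0


def forward(x):
    h = [math.tanh(w1[j][0] * x[0] + w1[j][1] * x[1] + b1[j]) for j in range(H)]
    return h, sum(w2[j] * h[j] for j in range(H)) + b2


def mse(data):
    return sum((forward(x)[1] - y) ** 2 for x, y in data) / len(data)


initial_valid_mse = mse(valid)
lr = 0.01
for _ in range(200):  # epochs
    for x, y in train:
        h, y_hat = forward(x)
        err = y_hat - y  # derivative of 0.5 * err**2 with respect to y_hat
        for j in range(H):
            grad_h = err * w2[j] * (1.0 - h[j] ** 2)  # backprop through tanh
            w2[j] -= lr * err * h[j]
            w1[j][0] -= lr * grad_h * x[0]
            w1[j][1] -= lr * grad_h * x[1]
            b1[j] -= lr * grad_h
        b2 -= lr * err
final_valid_mse = mse(valid)

# Step 3: in-silico screen of a grid of untested condition combinations.
candidates = [(32.0 + 0.1 * i, 6.6 + 0.02 * k) for i in range(61) for k in range(41)]
best_temp, best_ph = max(candidates, key=lambda c: forward(scale(*c))[1])
print(f"validation MSE: {initial_valid_mse:.3f} -> {final_valid_mse:.3f}")
print(f"suggested conditions: {best_temp:.1f} degC, pH {best_ph:.2f}")
```

Exhaustive grid search is only practical for a handful of inputs; with many process variables, random or model-guided sampling of candidate conditions is the usual substitute.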

Protocol: Implementing a DNA Neural Network for Autonomous In Vitro Learning

This protocol describes the setup for a DNA-based neural network that learns to classify molecular patterns through supervised learning, without external computation [20] [21].

1. Objective: To demonstrate that a molecular system can autonomously learn from training examples and use this memory to classify subsequent test data.

2. Research Reagent Solutions:

Table 2: Key Reagents for DNA Neural Network Implementation

| Reagent / Component | Function | Key Characteristic |
|---|---|---|
| Learning Gates | Stoichiometrically produces activator signals upon receiving input and label strands. | Engineered for irreversibility via a stable hairpin structure to prevent memory loss. |
| Activatable Weight Gates | Catalytically produces a weighted input signal; represents the network's connections. | Activated only by a specific combination of input bit and memory class for high specificity. |
| Activator Molecules (Act_i,j) | The "memory" of the system; carries both input bit (i) and class (j) information. | Transfers information from learning gates to weight gates. |
| Input Strands | Represents the data pattern to be classified (e.g., 100-bit pattern). | Shares the same molecular 'language' as the training data. |
| Label Strands | Represents the correct class for a given input pattern during training. | Consumed during the learning process. |

3. Procedure:

- Step 1: Network Assembly.

- Design and synthesize the DNA strands for all learning gates and activatable weight gates required for the classification task (e.g., a 100-bit, two-class system involves over 700 distinct DNA species).

- Step 2: Training Phase.

- Sequentially introduce training examples into the system. Each example consists of a mixture of input strands (representing the pattern) and label strands (representing the correct class).

- The learning gates bind to the input and label, irreversibly producing specific activator molecules.

- These activator molecules bind to and permanently activate their corresponding weight gates, building up the network's memory matrix over multiple examples.

- Step 3: Memory Transfer.

- Connect the memory device (containing the activated weights) to the processor network. The concentrations of activator molecules now represent the learned weights.

- Step 4: Testing Phase.

- Introduce test input patterns (without labels) into the system.

- The active weight gates interact with the test inputs to compute a weighted sum for each output class.

- A winner-take-all competition is triggered, where the output with the largest sum is amplified, producing a binary classification signal.

4. Expected Outcome: The system should correctly classify a majority of the test cases based on the patterns it learned during the training phase, demonstrating stable, autonomous molecular learning [20].
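The training and testing logic can be caricatured in software to make the information flow concrete. This is a hypothetical abstraction, not a model of the chemistry in [20]: integer counts stand in for activator and weight-gate concentrations, each co-occurrence of an ON input bit and a label adds one unit of weight, and strand-displacement kinetics, signal restoration, and the memory-transfer step are ignored. The helper names `train`, `classify`, and `noisy` are illustrative.

```python
import random

random.seed(1)


def train(examples, n_bits=100, n_classes=2):
    """Write labeled examples into a weight 'memory' (hypothetical abstraction).

    For every input bit i that is ON together with label j, one unit of
    activator Act_{i,j} is released and activates weight gate W_{i,j}.
    Activation is treated as irreversible, so weights only ever increase.
    """
    weights = [[0] * n_classes for _ in range(n_bits)]
    for pattern, label in examples:
        for i, bit in enumerate(pattern):
            if bit:
                weights[i][label] += 1
    return weights


def classify(weights, pattern):
    """Compute a weighted sum per class, then pick the winner (winner-take-all)."""
    n_classes = len(weights[0])
    sums = [sum(weights[i][j] for i, bit in enumerate(pattern) if bit) for j in range(n_classes)]
    winner = max(range(n_classes), key=lambda j: sums[j])
    return winner, sums


def noisy(pattern, flips):
    """Copy a pattern with `flips` randomly chosen bits inverted."""
    out = list(pattern)
    for i in random.sample(range(len(out)), flips):
        out[i] = 1 - out[i]
    return out


# Two hypothetical 100-bit prototypes: first half ON vs. second half ON.
proto = {0: [1] * 50 + [0] * 50, 1: [0] * 50 + [1] * 50}
training = [(noisy(proto[c], 5), c) for c in (0, 1) for _ in range(3)]
memory = train(training)

predicted, sums = classify(memory, noisy(proto[1], 10))
print(predicted, sums)
```

Because the weights only accumulate, later training examples add to, rather than overwrite, what earlier ones wrote; this mirrors the irreversibility that the learning gates are engineered for.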

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents for ML-Driven Synthetic Biology

| Category | Specific Tool / Reagent | Research Function |
|---|---|---|
| AI/ML Software Platforms | Schrödinger's AutoDesigner | Enables de novo molecular design and large-scale virtual compound screening for drug discovery [16]. |
| AI/ML Software Platforms | Evo (Evo 1, Evo 2) | A foundation model for biology that predicts effects of genetic mutations and designs new genomes [14]. |
| Specialized DNA Components | Activatable Weight Gates (DNA-based) | Serves as a programmable connection in a molecular neural network, performing multiplication and signal amplification [20]. |
| Specialized DNA Components | Learning Gates (DNA-based) | The core of molecular learning; writes training examples into the network's memory by producing activator molecules [20] [21]. |
| Cell Culture & Bioprocessing | CHO Cell Lines | Industry-standard host cells for producing recombinant therapeutic proteins; optimized using ML models [17]. |
| Cell Culture & Bioprocessing | Advanced Bioreactors with Sensors | Generate real-time data on process parameters (pH, O2, nutrients) for training ML models on bioprocess optimization [19]. |

The classical Design-Build-Test-Learn (DBTL) cycle has long served as the foundational paradigm for synthetic biology and biological engineering. However, this iterative process often encounters significant bottlenecks in its Build and Test phases, particularly when it relies on in vivo systems and traditional cloning methods. These limitations are especially constraining in modern drug development and biomanufacturing, where rapid iteration is essential.

We propose a paradigm shift to the LDBT cycle (Learn-Design-Build-Test), where machine learning (ML) precedes biological design [4] [22]. This reordering leverages the predictive power of pre-trained models on vast biological datasets to generate high-probability-of-success designs from the outset. When integrated with rapid cell-free testing platforms, this approach creates a more linear, efficient pathway from concept to functional biological system, potentially achieving in a single cycle what previously required multiple DBTL iterations [4].

This Application Note details the experimental frameworks and protocols for implementing the LDBT model, with a specific focus on its application in automated drug development research.

Core LDBT Framework and Workflow

The LDBT model fundamentally restructures the bioengineering workflow by initiating with a computational Learning phase. This foundational step utilizes machine learning models trained on evolutionary, structural, and functional biological data to inform the subsequent design of biological parts and systems [4].

The Conceptual Workflow

The following diagram illustrates the core logical flow of the LDBT cycle, highlighting its iterative, learning-driven nature.

Key Advantages Over DBTL

- Predictive Power: Machine learning models, including protein language models (e.g., ESM, ProGen) and structure-based tools (e.g., ProteinMPNN, MutCompute), can perform zero-shot prediction of protein function, stability, and expression, de-risking the initial design [4].

- Speed and Scale: Cell-free protein synthesis (CFPS) systems bypass cell culture, enabling protein expression and testing in hours rather than days, and integrate readily with automation for megascale data generation [4] [22].

- Data Efficiency: Active learning processes, where the ML algorithm selects the most informative experiments to run, dramatically reduce the number of experiments needed to find optimal solutions [9].
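The data-efficiency argument depends on an acquisition rule that decides which experiment to run next. Below is a minimal, hypothetical sketch of pool-based active learning with an upper-confidence-bound (UCB) rule over a bootstrap ensemble of k-nearest-neighbour regressors. It is not the algorithm used by any specific tool cited here, and `run_experiment` is a made-up stand-in for a real assay on a single normalized design variable.

```python
import random
import statistics

random.seed(2)


def run_experiment(x):
    # HYPOTHETICAL stand-in for a wet-lab measurement; the learner never sees
    # this formula, only the noisy values it returns. Optimum is at x = 0.7.
    return -((x - 0.7) ** 2) + random.gauss(0.0, 0.01)


def knn_predict(data, x, k=2):
    nearest = sorted(data, key=lambda d: abs(d[0] - x))[:k]
    return sum(y for _, y in nearest) / len(nearest)


def fit_ensemble(data, n_models=10, seed=0):
    # Bootstrap: each ensemble member is a resampled copy of the tested designs.
    rng = random.Random(seed)
    return [[rng.choice(data) for _ in data] for _ in range(n_models)]


def ucb(models, x, kappa=2.0):
    # Upper confidence bound: predicted mean plus a bonus for ensemble disagreement.
    preds = [knn_predict(m, x) for m in models]
    return statistics.mean(preds) + kappa * statistics.pstdev(preds)


pool = [i / 100 for i in range(101)]  # untested candidate designs in [0, 1]
tested = []
for x0 in (0.0, 0.5, 1.0):  # small initial design
    pool.remove(x0)
    tested.append((x0, run_experiment(x0)))

for round_idx in range(8):  # each round = one experiment chosen by the model
    models = fit_ensemble(tested, seed=round_idx)
    x_next = max(pool, key=lambda x: ucb(models, x))
    pool.remove(x_next)
    tested.append((x_next, run_experiment(x_next)))

best_x, best_y = max(tested, key=lambda d: d[1])
print(f"ran {len(tested)} of 101 possible experiments; best x = {best_x:.2f}")
```

The `kappa` parameter sets the balance: larger values favour designs where the ensemble disagrees (exploration), smaller values favour designs with the highest predicted outcome (exploitation).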

Experimental Protocols for LDBT Implementation

This section provides detailed methodologies for establishing an integrated LDBT pipeline for protein or pathway engineering.

Protocol 1: Machine Learning-Guided Protein Design

This protocol utilizes pre-trained models for zero-shot design or fine-tunes them on specific protein families.

3.1.1 Objectives

To generate functional protein variant sequences with optimized properties (e.g., stability, activity, solubility) using machine learning before any physical DNA synthesis.

3.1.2 Materials and Reagents

- Hardware: Computer workstation with GPU acceleration (recommended).

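- Software & Models: a protein language model (e.g., ESM), ProteinMPNN for structure-based design, and mutation-effect predictors such as MutCompute or Stability Oracle.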

- Input Data: Wild-type protein sequence (FASTA) and/or 3D structure (PDB file).

3.1.3 Procedure

- Problem Formulation: Define the target property (e.g., "improved thermostability at 60°C," "increased kcat for substrate X").

- Model Selection:

- For zero-shot design based on evolutionary information, use a protein language model (e.g., ESM).

- For structure-based sequence design, use ProteinMPNN, providing a backbone structure.

- For mutation effect prediction, use tools like MutCompute or Stability Oracle.

- In Silico Screening:

- Generate a library of candidate sequences (10^3 - 10^6 variants).

- Score and rank all candidates using the predictive model(s).

- Filter the top candidates (e.g., 100-500 variants) using orthogonal computational filters (e.g., aggregation propensity, solubility predictors).

- Output: A finalized list of DNA sequences for synthesis, representing the highest-confidence designs.
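The generate, score, rank, and filter sequence can be sketched as follows. The scoring function is a placeholder, not ESM, ProteinMPNN, MutCompute, or Stability Oracle, and the hydrophobic-fraction filter is a crude, assumed proxy for aggregation propensity; the wild-type sequence is hypothetical. Replacing `hypothetical_score` with a real model's output is the intended extension point.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
HYDROPHOBIC = set("AILMFVW")  # crude aggregation proxy (an assumption)


def single_mutants(wt):
    """Yield (label, sequence) for every single-point substitution, e.g. 'K2E'."""
    for pos, wt_aa in enumerate(wt):
        for aa in AMINO_ACIDS:
            if aa != wt_aa:
                yield f"{wt_aa}{pos + 1}{aa}", wt[:pos] + aa + wt[pos + 1:]


def hypothetical_score(seq):
    # PLACEHOLDER for a real model score (e.g., a language-model likelihood or a
    # predicted stability change). Rewards charged residues, for illustration only.
    return sum(seq.count(aa) for aa in "DEKR") - 0.1 * seq.count("P")


def passes_filter(seq, max_hydrophobic_fraction=0.4):
    # Orthogonal filter: reject sequences that look too hydrophobic.
    return sum(aa in HYDROPHOBIC for aa in seq) / len(seq) <= max_hydrophobic_fraction


def screen(wt, shortlist_size=20):
    library = list(single_mutants(wt))  # generate
    ranked = sorted(library, key=lambda m: hypothetical_score(m[1]), reverse=True)  # score, rank
    shortlist = ranked[:shortlist_size]  # keep the top candidates
    final = [m for m in shortlist if passes_filter(m[1])]  # orthogonal filter
    return library, final


library, final = screen("MKTAYIAKQR")  # hypothetical 10-residue wild type
print(len(library), [label for label, _ in final])
```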

Protocol 2: High-Throughput Build & Test via Cell-Free Protein Synthesis

This protocol outlines a semi-automated pipeline for rapid expression and testing of ML-designed variants, adapted from published workflows [22] [9].

3.2.1 Objectives

To rapidly express and characterize hundreds to thousands of protein variants or pathway enzymes without in vivo cloning and culture.

3.2.2 Materials and Reagents

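- Research Reagent Solutions: a cell-free expression system (e.g., E. coli extract or PURExpress), a DNA template for each variant, assay substrates, and an automated liquid handler.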

3.2.3 Procedure

- DNA Template Preparation:

- Synthesize gene variants as linear DNA fragments via PCR or as array-synthesized oligonucleotides pooled and cloned. For cell-free systems, linear templates are often sufficient [22].

- Reaction Assembly:

- Use an automated liquid handler to mix cell-free master mix with individual DNA templates in a 48-, 96-, or 384-well plate.

- Include positive and negative controls (e.g., template for a known fluorescent protein, no-template control).

- Expression and Incubation:

- Incubate plates in a controlled environment (e.g., 30°C for 4-24 hours) in an automated shaker/incubator like a BioLector, which can monitor biomass or fluorescence in real-time [9].

- Testing and Assay:

- For expression yield: Use SDS-PAGE, Western Blot, or fluorescent fusion tags.

- For enzymatic activity: Add substrate linked to a colorimetric or fluorescent readout directly to the reaction.

- For pathway output: Use HPLC or GC-MS on reaction supernatants for authoritative validation of yields [9].

- Data Collection and Curation:

- Automate data extraction from plate readers and instruments.

- Store all data (designs, sequences, and results) in a structured database like the Experiment Data Depot (EDD) for traceability and model retraining [9].
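Reaction assembly and data curation both depend on a consistent mapping between wells, samples, and controls. This is a hypothetical sketch of one simple convention: fill a 96-well plate in row-major order, then normalize each variant by subtracting the mean no-template-control (NTC) signal and dividing by the background-corrected positive-control signal. It is not the pipeline described in [22] or [9], and the readings are invented. Placing all controls in the first row is only for brevity; real layouts often scatter controls to expose edge and position effects.

```python
from statistics import mean
from string import ascii_uppercase

ROWS, COLS = ascii_uppercase[:8], range(1, 13)
WELLS = [f"{r}{c}" for r in ROWS for c in COLS]  # A1 ... H12, row-major


def layout(variants, n_pos=4, n_neg=4):
    """Assign positive controls, no-template controls (NTC), then variants to wells."""
    names = ["POS"] * n_pos + ["NTC"] * n_neg + list(variants)
    if len(names) > len(WELLS):
        raise ValueError("too many samples for one 96-well plate")
    return dict(zip(WELLS, names))


def normalize(plate, readings):
    """Subtract mean NTC background, then scale by the background-corrected POS mean."""
    bg = mean(readings[w] for w, n in plate.items() if n == "NTC")
    pos = mean(readings[w] for w, n in plate.items() if n == "POS") - bg
    return {n: (readings[w] - bg) / pos for w, n in plate.items() if n not in ("POS", "NTC")}


plate = layout([f"var{i:03d}" for i in range(88)])
# Hypothetical raw fluorescence: background 100, positive control 1100.
readings = {w: 100.0 for w in plate}
for w, n in plate.items():
    if n == "POS":
        readings[w] = 1100.0
    elif n == "var000":
        readings[w] = 600.0
scores = normalize(plate, readings)
print(scores["var000"])  # → 0.5
```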

Integrated LDBT Technical Pipeline

The practical implementation of the LDBT cycle involves a tight integration of computational and physical workflows, as shown in the following technical pipeline.

Case Study & Performance Data

Media Optimization for Flaviolin Production in P. putida

A 2025 study demonstrated the power of the LDBT approach for optimizing culture media, a critical but often slow step in bioprocess development [9]. The goal was to maximize flaviolin production.

4.1.1 LDBT Implementation

- Learn: The Automated Recommendation Tool (ART), an active learning ML algorithm, was used to select the most informative media compositions to test next based on previous results.

- Design: ART recommended media designs with varying concentrations of 12-13 components.

- Build & Test: A semi-automated pipeline was used, where a liquid handler prepared media, an automated bioreactor (BioLector) cultivated cells, and a microplate reader quantified flaviolin yield via absorbance [9].

4.1.2 Key Findings and Performance

The application of this LDBT pipeline yielded significant performance enhancements across multiple optimization campaigns.

Quantitative Performance Improvements:

| Optimization Campaign | Metric | Improvement | Citation |
|---|---|---|---|
| Campaign 1 | Flaviolin Titer | +60% | [9] |
| Campaign 2 | Flaviolin Titer | +70% | [9] |
| Campaign 3 | Process Yield | +350% | [9] |

- Key Insight: Explainable AI techniques identified sodium chloride (NaCl) as the most critical media component, with an optimal concentration near the tolerance limit of P. putida, a non-intuitive finding that traditional methods would likely have missed [9].

Regulatory Considerations for Drug Development

Integrating LDBT into regulatory submissions for drug development requires careful planning. The FDA's Drug Development Tool (DDT) qualification program provides a pathway for regulatory acceptance [23].

- Context of Use (CoU): Clearly define the specific manner and purpose of the LDBT-generated data in the drug development process (e.g., for patient stratification, as a biomarker, or for safety assessment) [23] [24].

- Early Engagement: Proactively consult with health authorities (e.g., FDA) during the development of an LDBT-derived endpoint to ensure alignment on validation requirements [24].

- Validation and Fit-for-Purpose: The level of validation for the ML model and the associated biological assay must be sufficient to support its CoU. This includes demonstrating analytical validation (reliability, accuracy) and clinical validation (association with clinical endpoints) [24].

- Real-World Example: The EMA's qualification of "stride velocity 95th centile" as a primary endpoint for Duchenne Muscular Dystrophy studies demonstrates the regulatory utility of digitally derived endpoints and suggests a route to similar acceptance of LDBT-generated evidence [24].

The Scientist's Toolkit: Essential Research Reagents & Platforms

Successful implementation of the LDBT model relies on a suite of computational and experimental tools.

| Tool Category | Example | Specific Function in LDBT |
|---|---|---|
| ML Protein Design | ESM-3, ProGen | Protein language models for zero-shot prediction and sequence generation [4]. |
| ML Protein Design | ProteinMPNN | Structure-based sequence design for fixed protein backbones [4]. |
| ML Protein Design | Stability Oracle, DeepSol | Predicts mutation effects on protein stability (ΔΔG) and solubility [4]. |
| Active Learning | Automated Recommendation Tool (ART) | Selects optimal experiments to run to maximize information gain and efficiency [9]. |
| Cell-Free System | E. coli Extract, PURExpress | Rapid, modular platform for protein expression without living cells [4] [22]. |
| Automation Hardware | Liquid Handling Robot | Enables high-throughput assembly of DNA constructs or cell-free reactions [22] [9]. |
| Automation Hardware | BioLector | Provides parallel, monitored microbioreactor cultivation with online fluorescence/OD measurements [9]. |
| Data Management | Experiment Data Depot (EDD) | Centralized database for storing and linking designs, builds, and test results [9]. |

The integration of Machine Learning (ML) into biological research has transformed our ability to decipher complex biological systems, accelerating discovery and innovation. ML is a branch of artificial intelligence focused on building computational systems that learn from data, enhancing their performance without explicit programming. A central goal of ML is to build models that effectively generalize from training data to new, unseen data, balancing prediction accuracy with model complexity to avoid overfitting or underfitting [25]. In the context of a broader thesis on optimizing the Design-Build-Test-Learn (DBTL) cycle, ML serves as a powerful engine for the "Learn" phase. It extracts meaningful patterns from high-throughput experimental data, informing subsequent cycles of design and building to streamline the development of biological products, such as novel enzymes or microbial production strains [1].

ML techniques are broadly categorized into supervised learning, which uses labeled data for tasks like classification and regression; unsupervised learning, which identifies underlying structures in unlabeled data; and reinforcement learning, where models learn through trial-and-error interactions with an environment [25]. This review focuses on the application of core ML concepts, specifically Protein Language Models (PLMs) and fitness predictors, which are increasingly critical for advancing rational bio-design and optimizing DBTL cycles in synthetic biology and drug development.

Core Machine Learning Concepts and Algorithms

Biologists can leverage several key ML algorithms to analyze complex datasets. The selection of an algorithm often depends on the specific biological question, the nature of the data, and the trade-off between model interpretability and predictive power.

Table 1: Key Machine Learning Algorithms for Biological Research

| Algorithm | Type | Key Principle | Typical Biological Applications |
|---|---|---|---|
| Ordinary Least Squares (OLS) | Supervised / Linear | Minimizes the sum of squared differences between observed and predicted values to find a best-fit line [25]. | Quantifying trait-fitness relationships, baseline statistical modeling. |
| Random Forest | Supervised / Ensemble | Constructs a multitude of decision trees at training time and outputs the mode of their classes or mean prediction [25]. | Genomic prediction, classifying phenotypes from gene expression data. |
| Gradient Boosting Machines | Supervised / Ensemble | Builds models sequentially, where each new model corrects errors made by the previous ones [25]. | Predicting fitness from gene expression, disease prognosis. |
| Support Vector Machines (SVM) | Supervised / Kernel-based | Finds a hyperplane in a high-dimensional space that best separates classes of data points [25]. | Protein subcellular localization, classifying tissue samples. |
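For reference, the OLS criterion in the first row of the table, and the ridge-regularized variant often used when features (for example, thousands of gene-expression levels) outnumber samples, can be written as follows. The notation is introduced here rather than taken from [25]: design matrix $X$ with $n$ samples and $p$ features, response vector $y$, penalty weight $\lambda \ge 0$, and $I_p$ the $p \times p$ identity.

```latex
\hat{\beta}_{\mathrm{OLS}}
  = \arg\min_{\beta} \lVert y - X\beta \rVert_2^2
  = (X^\top X)^{-1} X^\top y

\hat{\beta}_{\mathrm{ridge}}
  = \arg\min_{\beta} \left( \lVert y - X\beta \rVert_2^2 + \lambda \lVert \beta \rVert_2^2 \right)
  = (X^\top X + \lambda I_p)^{-1} X^\top y
```

Both expressions assume centered features and response, so no separate intercept term is needed. The OLS closed form requires $X^\top X$ to be invertible, which fails whenever $p > n$; for any $\lambda > 0$ the ridge matrix $X^\top X + \lambda I_p$ is always invertible, which is why penalized models remain usable in that regime.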

Among these, ensemble methods like Random Forest and Gradient Boosting are particularly valued for their high predictive accuracy on complex biological data. In a related application, one study used ML models, including regularized regression, to predict fitness components (e.g., seed set) from gene expression data in Ivyleaf morning glory, finding that genes related to photosynthesis, stress, and light responses were key predictors of fitness [26].

Protein Language Models (PLMs): Concepts and Applications

Foundations of Protein Language Models

Protein Language Models (PLMs) are a transformative application of deep learning at the intersection of natural language processing (NLP) and biology. The conceptual similarity between protein sequences and human language is the foundation of PLMs: just as sentences are linear chains of words, proteins are linear chains of 20 common amino acids [27]. This analogy allows powerful NLP models, particularly the Transformer architecture, to be applied to protein sequences. These models are trained on millions of protein sequences through self-supervised learning, learning to generate distributed embedded representations that encode semantic and structural information about proteins [27]. A landmark model, ESM-2 (Evolutionary Scale Modeling), demonstrates how scaling up model parameters and training data leads to emergent capabilities in predicting protein structure and function [27] [28].

PLMs can be categorized based on their underlying architecture:

- Encoder-only models (e.g., ESM, ProtBert): These models, akin to BERT in NLP, are designed to encode a protein sequence into a rich, contextual representation. They are excellent for tasks like function prediction, variant effect analysis, and structure prediction [27].

- Decoder-only models (e.g., ProtGPT2): These models, following the GPT paradigm, generate new protein sequences in an autoregressive manner. They are primarily used for de novo protein design [27] [29].
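Encoder-only models are commonly used for zero-shot variant-effect prediction with a masked-marginal score: mask the mutated position, then compare the model's log-probabilities of the mutant and wild-type residues at that site. The sketch below shows only the scoring arithmetic. `toy_masked_distribution` is a hypothetical placeholder, not a real PLM; in practice its output would come from the logits of a model such as ESM-2 or ESM-1v, and the example sequence is invented.

```python
import math

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def toy_masked_distribution(masked_seq, pos):
    """HYPOTHETICAL stand-in for an encoder PLM's prediction at a masked site.

    A real model returns a softmax over its vocabulary that depends on the
    full sequence context. Here we simply use the add-one smoothed
    amino-acid composition of the unmasked residues.
    """
    context = masked_seq[:pos] + masked_seq[pos + 1:]
    counts = {aa: 1 + context.count(aa) for aa in AMINO_ACIDS}
    total = sum(counts.values())
    return {aa: c / total for aa, c in counts.items()}


def masked_marginal_score(seq, mutation, mask="#"):
    """Score a substitution written like 'L4K' (1-based position).

    score = log p(mutant | masked context) - log p(wild type | masked context);
    positive values mean the model prefers the mutant residue.
    """
    wt, pos, mt = mutation[0], int(mutation[1:-1]) - 1, mutation[-1]
    if seq[pos] != wt:
        raise ValueError(f"residue {pos + 1} is {seq[pos]}, not {wt}")
    masked = seq[:pos] + mask + seq[pos + 1:]
    probs = toy_masked_distribution(masked, pos)
    return math.log(probs[mt]) - math.log(probs[wt])


seq = "MKKLLAKK"  # hypothetical sequence
for mutation in ("L4K", "L4W"):
    print(mutation, round(masked_marginal_score(seq, mutation), 3))
```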

Applying PLMs in the DBTL Cycle

PLMs directly accelerate the "Design" and "Learn" phases of the DBTL cycle. In the Design phase, generative PLMs can create novel protein sequences tailored for a specific function. For instance, ProGen is a language model trained on 280 million protein sequences across thousands of families. When fine-tuned on lysozyme families, it generated artificial lysozymes that were functionally active despite sharing as little as 31.4% sequence identity with natural proteins [29]. This showcases the potential for rapid in silico design of novel biocatalysts.

In the Learn phase, PLMs analyze experimental results to glean deeper insights. A key challenge, however, has been the "black box" nature of these models. A novel approach from MIT researchers uses sparse autoencoders to interpret which features a PLM relies on for its predictions. The technique expands the model's internal representation into a much larger set of "neurons" while keeping only a few of them active for any given protein; spreading the information out in this way makes individual nodes easier to interpret. These nodes can then be linked to specific biological features, such as protein family or molecular function, providing novel biological insights and increasing trust in the model's predictions [28].

Figure 1: A simplified workflow of Protein Language Models (PLMs), showing how they are built and applied to different tasks that feed into the DBTL cycle. Encoder models are typically used for analysis ("Learn"), while decoder models are used for creation ("Design").
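The sparse-autoencoder idea can be made concrete with a small sketch of its training objective: a ReLU encoder maps a PLM embedding into a wider dictionary of features, a decoder reconstructs the embedding, and an L1 penalty pushes most feature activations to zero. This is a generic formulation under stated assumptions (tied decoder weights, random untrained parameters, hypothetical sizes and embedding), not necessarily the architecture used in [28].

```python
import random

random.seed(3)
D_MODEL, D_DICT = 4, 16  # dictionary 4x wider than the embedding (hypothetical sizes)

# Random, untrained parameters; training would adjust them to minimize sae_loss.
W_enc = [[random.gauss(0.0, 0.5) for _ in range(D_MODEL)] for _ in range(D_DICT)]
b_enc = [-0.2] * D_DICT  # negative bias lets many features sit at exactly zero
W_dec = [[W_enc[j][i] for j in range(D_DICT)] for i in range(D_MODEL)]  # tied: W_dec = W_enc^T


def encode(x):
    # ReLU keeps feature activations non-negative.
    return [max(0.0, sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(W_enc, b_enc)]


def decode(f):
    return [sum(w * fj for w, fj in zip(row, f)) for row in W_dec]


def sae_loss(x, l1_coeff=0.01):
    """Reconstruction error plus an L1 penalty that pushes activations toward sparsity."""
    f = encode(x)
    x_hat = decode(f)
    reconstruction = sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)
    sparsity = l1_coeff * sum(f)  # f >= 0, so sum(f) equals its L1 norm
    return reconstruction + sparsity, f


embedding = [0.3, -1.2, 0.8, 0.05]  # hypothetical PLM embedding of one protein
loss, features = sae_loss(embedding)
active = [j for j, v in enumerate(features) if v > 0]
print(f"loss = {loss:.3f}; {len(active)} of {D_DICT} features active: {active}")
```

Interpretation then amounts to collecting, for each dictionary feature, the proteins that activate it most strongly and checking whether they share an annotation such as family or function.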

Fitness Predictors: Concepts and Applications

Defining Fitness in Machine Learning Contexts

In biological ML, "fitness" can refer to an organism's evolutionary success or a protein's functional performance. ML models act as fitness predictors by establishing a mapping from a biological input (e.g., gene expression profile, protein sequence) to a quantitative measure of this fitness. A prominent example is the use of ML to predict organismal fitness, such as seed set in plants, based on gene expression data. This approach treats gene expression levels as a high-dimensional phenotypic intermediate between the genome and traditional fitness traits, allowing researchers to identify which genes and biological processes are most critical for survival and reproduction [26].

Fitness Prediction in the DBTL Cycle

Fitness predictors are crucial for the "Test" and "Learn" phases. High-throughput experimental data from the "Test" phase is used to train models that predict the fitness (e.g., growth, production yield) of designed variants. The learned insights then guide the next "Design" phase.

A study on optimizing dopamine production in E. coli illustrates this with a knowledge-driven DBTL cycle. Before the first full in vivo cycle, the researchers used in vitro cell-free systems to test different relative expression levels of pathway enzymes (HpaBC and Ddc). This preliminary "Test" provided data to build an initial model, informing the in vivo Design. They then employed high-throughput ribosome binding site (RBS) engineering to fine-tune the expression of these enzymes in living cells, effectively using the RBS variants as a means to scan a fitness landscape for dopamine production. This ML-guided optimization resulted in a strain producing 69.03 mg/L dopamine, a 2.6- to 6.6-fold improvement over previous state-of-the-art in vivo production methods [1].

Integrated Experimental Protocols

Protocol 1: Fine-Tuning a PLM for Functional Protein Design

This protocol outlines the steps for using a model like ProGen to design novel functional proteins, a process that forms a complete in silico DBTL cycle [29].

Design: Model Selection and Conditioning

- Select a pre-trained generative PLM (e.g., ProGen).

- Define the target protein family and desired properties. These properties are converted into control tags (e.g., [PFAM:Lysozyme], [TAXON:Chicken]).

- Assemble a fine-tuning dataset of sequences and their corresponding tags from relevant databases (e.g., UniProt, Pfam).

Build: Fine-Tuning the Model

- Continue training (fine-tune) the base ProGen model on your curated dataset. This teaches the model the specific "language" of your protein family and how to associate control tags with sequence features.

- Use standard deep learning frameworks (e.g., PyTorch, TensorFlow) and high-performance computing resources.

Test: In Silico Generation and Screening

- Generate a large library of novel protein sequences by sampling from the fine-tuned model, providing it with your desired control tags.

- Use independent computational tools (e.g., AlphaFold2 for structure prediction, docking software for binding affinity) to screen and rank the generated sequences based on stability and predicted function.
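Sampling from the fine-tuned model proceeds token by token. The sketch below uses nucleus (top-p) sampling, one common decoding strategy for autoregressive models. The tag strings follow the example in the Design step, but `toy_next_token_probs`, the `<end>` token, and the threshold `p = 0.9` are hypothetical placeholders rather than ProGen's actual vocabulary, interface, or settings.

```python
import random


def top_p_filter(probs, p=0.9):
    """Keep the smallest high-probability set whose total reaches p, then renormalize."""
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    kept, cumulative = [], 0.0
    for token, prob in ranked:
        kept.append((token, prob))
        cumulative += prob
        if cumulative >= p:
            break
    total = sum(prob for _, prob in kept)
    return {token: prob / total for token, prob in kept}


def toy_next_token_probs(tags, prefix):
    # HYPOTHETICAL stand-in for a fine-tuned conditional model. A real model
    # conditions on both the control tags and the prefix; this toy ignores tags.
    if not prefix:
        return {"M": 0.95, "K": 0.05}
    return {"K": 0.4, "V": 0.3, "L": 0.2, "<end>": 0.05, "W": 0.05}


def sample_sequence(next_token_probs, tags, max_len=30, p=0.9, seed=0):
    """Autoregressively sample residues after a prefix of control tags."""
    rng = random.Random(seed)
    seq = ""
    for _ in range(max_len):
        probs = top_p_filter(next_token_probs(tags, seq), p)
        tokens, weights = zip(*probs.items())
        token = rng.choices(tokens, weights=weights)[0]
        if token == "<end>":
            break
        seq += token
    return seq


tags = ["[PFAM:Lysozyme]", "[TAXON:Chicken]"]
library = [sample_sequence(toy_next_token_probs, tags, seed=s) for s in range(5)]
print(library)
```

Lowering `p` concentrates sampling on high-probability residues (more conservative sequences); raising it admits rarer residues and increases diversity at the cost of more implausible candidates for the downstream screen to remove.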

Learn: Analysis and Model Refinement

- Analyze the generated sequences to identify patterns and common features.

- The model's success is validated experimentally (see Protocol 2), and the experimental results can be used to further refine the fine-tuning dataset for subsequent cycles.

Protocol 2: ML-Guided Optimization of a Metabolic Pathway

This protocol details the knowledge-driven DBTL cycle for optimizing a metabolic pathway, as demonstrated for dopamine production [1].

Design: In Vitro Knowledge Gathering

- Goal: Determine the optimal relative expression levels of pathway enzymes before in vivo engineering.

- Method: Clone pathway genes (e.g., hpaBC, ddc) into individual plasmids under an inducible promoter. Express these proteins in a cell-free transcription-translation (CFPS) system or crude cell lysate.

- Testing: Combine the lysates in different ratios and measure the production of the final product (e.g., dopamine from L-tyrosine) to identify the most efficient enzyme ratio.

Build: In Vivo Library Construction

- Translate the optimal ratio into an in vivo context using RBS engineering.

- Design a library of constructs where the genes of the metabolic pathway are assembled in a polycistronic operon, with the RBS of each gene randomized (e.g., using degenerate primers) to create a vast spectrum of expression strengths.

- Use automated cloning techniques (e.g., Golden Gate assembly) to build the plasmid library and transform it into the production host (e.g., a high L-tyrosine producing E. coli strain).
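Before ordering degenerate primers, it helps to check the theoretical size of the library they encode. The sketch below expands IUPAC degenerate nucleotide codes; the RBS sequence is a hypothetical example, not a design from [1]. Because per-gene diversities multiply across an operon, even modest randomization quickly produces more variants than can be screened exhaustively, which is one motivation for the model-guided Learn step.

```python
from itertools import product

# IUPAC nucleotide codes -> the concrete bases each one stands for.
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT", "K": "GT", "M": "AC",
    "B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG", "N": "ACGT",
}


def library_size(degenerate):
    """Number of distinct sequences encoded by one degenerate oligo."""
    size = 1
    for base in degenerate.upper():
        size *= len(IUPAC[base])
    return size


def expand(degenerate):
    """Enumerate every concrete sequence encoded by a degenerate oligo."""
    for combo in product(*(IUPAC[b] for b in degenerate.upper())):
        yield "".join(combo)


rbs = "AAGGAGRRNNNNATG"  # hypothetical degenerate RBS region ending at the start codon
per_gene = library_size(rbs)
print(per_gene)  # → 1024
print(per_gene ** 2)  # → 1048576 RBS combinations for a hypothetical two-gene operon
```

The count is a theoretical upper bound; the diversity actually recovered depends on transformation efficiency and on how many clones are screened.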

Test: High-Throughput Screening

- Culture the library variants in microtiter plates under production conditions.

- Use high-throughput analytics (e.g., HPLC, LC-MS) or colorimetric/fluorometric assays to quantify the titer of the desired product (dopamine) for each variant.

Learn: Model Building and Prediction

- Data Collection: For each variant, collect the data: RBS sequence -> product titer (fitness).

- Model Training: Train an ML model (e.g., Random Forest or Gradient Boosting) to predict the production titer from the RBS sequence features (e.g., GC content, Shine-Dalgarno sequence).

- Analysis: The model identifies which RBS features are most predictive of high yield. This model can then be used to predict superior RBS combinations for the next DBTL cycle, further optimizing production.

Figure 2: The knowledge-driven DBTL cycle for metabolic pathway optimization. Insights from initial in vitro tests guide the construction of a smart library, and ML learns from high-throughput screening data to close the loop.
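The Learn step of Protocol 2 can be sketched end to end in plain Python. This is a minimal, hypothetical illustration: the two features (GC fraction and best match to an AGGAGG core), the synthetic titers, and the boosting settings are assumptions, not the feature set or model of the dopamine study [1], and the hand-rolled decision stumps stand in for a library implementation such as scikit-learn's `GradientBoostingRegressor`.

```python
import random

random.seed(4)
SD_CORE = "AGGAGG"  # canonical Shine-Dalgarno core


def features(rbs):
    """Two hypothetical features: GC fraction and best match count to the SD core."""
    gc = sum(b in "GC" for b in rbs) / len(rbs)
    k = len(SD_CORE)
    sd = max(sum(a == b for a, b in zip(rbs[i:i + k], SD_CORE)) for i in range(len(rbs) - k + 1))
    return [gc, sd]


def fit_stump(X, residuals):
    """Single split (feature, threshold) that minimizes squared error on the residuals."""
    best = None
    for f in range(len(X[0])):
        for t in sorted({x[f] for x in X}):
            left = [r for x, r in zip(X, residuals) if x[f] <= t]
            right = [r for x, r in zip(X, residuals) if x[f] > t]
            if not left or not right:
                continue
            lm, rm = sum(left) / len(left), sum(right) / len(right)
            sse = sum((r - lm) ** 2 for r in left) + sum((r - rm) ** 2 for r in right)
            if best is None or sse < best[0]:
                best = (sse, f, t, lm, rm)
    _, f, t, lm, rm = best
    return f, (lambda x: lm if x[f] <= t else rm)


def gradient_boost(X, y, n_rounds=30, lr=0.3):
    """Least-squares gradient boosting: each stump fits the current residuals."""
    base = sum(y) / len(y)
    pred, stumps, split_counts = [base] * len(y), [], [0] * len(X[0])
    for _ in range(n_rounds):
        residuals = [yi - pi for yi, pi in zip(y, pred)]  # negative gradient of squared loss
        feature, stump = fit_stump(X, residuals)
        split_counts[feature] += 1
        stumps.append(stump)
        pred = [pi + lr * stump(x) for pi, x in zip(pred, X)]
    return (lambda x: base + lr * sum(s(x) for s in stumps)), split_counts


# Hypothetical screening data: random 15-nt RBS regions with synthetic titers.
library = ["".join(random.choice("ACGT") for _ in range(15)) for _ in range(120)]
X = [features(s) for s in library]
y = [10.0 * sd + 5.0 * gc + random.gauss(0.0, 1.0) for gc, sd in X]
model, split_counts = gradient_boost(X, y)

mean_y = sum(y) / len(y)
mse_baseline = sum((yi - mean_y) ** 2 for yi in y) / len(y)
mse_model = sum((model(x) - yi) ** 2 for x, yi in zip(X, y)) / len(y)
print(f"training MSE {mse_baseline:.2f} -> {mse_model:.2f}; splits [gc, sd]: {split_counts}")
```

The split counts give only a crude view of which feature the model relies on; library implementations expose more principled importance measures, and held-out data (not training error) should decide whether the model is trustworthy enough to propose the next RBS designs.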

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Reagents and Tools for ML-Driven Biology

| Reagent / Tool | Function | Example Use Case |
|---|---|---|
| Pre-trained PLMs (e.g., ESM-2, ProGen) | Provides a foundational understanding of protein sequence space for prediction or generation. | Predicting the effect of a mutation (ESM-2) or generating a novel enzyme sequence (ProGen) [29] [28]. |
| Cell-Free Protein Synthesis (CFPS) System | An in vitro platform for rapid testing of protein expression and pathway function without cellular constraints. | Determining optimal enzyme expression ratios for a pathway before in vivo strain engineering [1]. |
| Ribosome Binding Site (RBS) Library | A collection of genetic variants with randomized RBS sequences to tune translation initiation rates and gene expression levels. | Creating a diverse population of production strains to map sequence-to-function relationships for ML [1]. |
| Sparse Autoencoders | An AI tool for interpreting the internal representations of complex deep learning models like PLMs. | Identifying which protein features (e.g., function, family) a PLM uses for its predictions, increasing trust and providing biological insights [28]. |
| pET / pJNTN Plasmid Systems | Expression vectors for controlled, high-level protein expression in E. coli. | Cloning and expressing target genes for in vitro testing or in vivo production [1]. |

Building the Self-Driving Lab: ML Methods and Real-World Applications

The traditional Design-Build-Test-Learn (DBTL) cycle has been the cornerstone of systematic engineering in synthetic biology and protein engineering. However, its effectiveness is often hampered by long development times and the limited predictive power of purely physical models. The integration of advanced machine learning (ML) technologies is now revolutionizing this cycle, enabling a more predictive and efficient approach to bioengineering. This shift is so profound that some propose reordering the cycle to "LDBT" (Learn-Design-Build-Test), where machine learning models that have internalized evolutionary and biophysical principles guide the initial design, potentially reducing the need for multiple iterative cycles [4]. This document provides application notes and detailed protocols for three key classes of ML technologies—Protein Language Models, Structure-Based Design Tools, and Fitness Predictors—that are critical for optimizing the DBTL cycle in modern protein research and drug development.

Protein Language Models (ESM and ProGen)

Protein Language Models (LMs) treat amino acid sequences as a language, learning evolutionary patterns from vast datasets of natural protein sequences. By learning to predict masked amino acids (ESM) or the next amino acid (ProGen) from their sequence context, these models develop a deep understanding of protein grammar and semantics without explicit biophysical modeling. Two leading examples are ESM (Evolutionary Scale Modeling) and ProGen [30] [4].

ESM from Meta FAIR is a state-of-the-art Transformer-based protein language model. ESM-2, one of its most advanced versions, is a single-sequence model that outperforms other tested single-sequence models across structure prediction tasks. ESMFold harnesses ESM-2 to generate accurate end-to-end structure predictions directly from sequence. The ESM Metagenomic Atlas provides hundreds of millions of predicted metagenomic protein structures, showcasing its scale [31].

ProGen is a 1.2 billion-parameter neural network trained on 280 million protein sequences from over 19,000 protein families. Its key innovation is conditional generation, where sequence generation is controlled by property tags (e.g., protein family, biological process) provided as input. This allows researchers to significantly constrain the sequence space for generation and improve quality [30].

Table 1: Key Specifications of ESM and ProGen Models

| Feature | ESM-2 | ProGen |
|---|---|---|
| Architecture | Encoder-only Transformer (masked language model) | Decoder-only Transformer (autoregressive) |
| Parameters | Up to 15B (esm2_t48_15B_UR50D) | 1.2 billion |
| Training Data | UR50/D (UniRef50) | 280 million sequences from UniParc, UniProtKB, Pfam |
| Key Capability | Structure/function prediction from sequence | Conditional generation via control tags |
| Unique Feature | ESMFold for end-to-end structure prediction | Fine-tuning to specific protein families |

Application Notes

Protein LMs excel in zero-shot prediction tasks, meaning they can make accurate predictions without additional training on specific targets. Applications include:

- De novo protein design: Generating novel protein sequences from scratch for desired functions [31] [30].

- Variant effect prediction: Predicting the functional consequences of sequence variations (ESM-1v) [31].

- Inverse folding: Designing sequences that fold into a given structure (ESM-IF1) [31].

- Function prediction: Inferring protein function and predicting properties like solubility and stability [4].
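For variant effect prediction, a widely used zero-shot heuristic with masked models such as ESM-1v is the masked-marginal score: at each mutated position, take the log-probability of the mutant residue minus that of the wild-type residue (with the position masked), and sum over all mutations. A positive score means the model prefers the variant over the wild type. The sketch below assumes those per-position log-probabilities have already been computed; the log_probs table mentioned afterwards is hypothetical toy data, not ESM output.

```python
import math


def parse_mutation(mut):
    """Parse a mutation string such as 'K2R' into (wt, 1-based position, mt)."""
    return mut[0], int(mut[1:-1]), mut[-1]


def masked_marginal_score(wt_seq, mutations, log_probs):
    """Sum of log p(mutant) - log p(wild type) over the mutated positions.

    log_probs[i][aa] is assumed to be the model's log-probability of amino
    acid `aa` at 0-based position i, computed with that position masked.
    """
    score = 0.0
    for mut in mutations:
        wt, pos, mt = parse_mutation(mut)
        i = pos - 1
        if wt_seq[i] != wt:
            raise ValueError(f"{mut}: residue at position {pos} is {wt_seq[i]}")
        score += log_probs[i][mt] - log_probs[i][wt]
    return score
```

With a toy table where position 2 of "MKV" has p(K) = 0.6 and p(R) = 0.3, the score for "K2R" is log(0.3) - log(0.6) = log(0.5), i.e. the substitution is predicted to be mildly unfavourable.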

In experimental validation, ProGen-generated artificial lysozymes showed activities and catalytic efficiencies similar to those of natural lysozymes (including hen egg white lysozyme), despite sharing as little as 31.4% sequence identity with any known natural protein. X-ray crystallography confirmed that one artificial protein recapitulated the conserved fold and active-site residue positioning found in natural proteins [30].

Protocol: Generating Novel Protein Sequences with Fine-Tuned ProGen

Purpose: To generate novel, functional protein sequences for a target protein family using ProGen's fine-tuning and conditional generation capabilities.

Materials:

- Pre-trained ProGen model (1.2B parameter version)

- Curated dataset of sequences from the target protein family (e.g., ≥10,000 sequences for effective fine-tuning)

- Computational resources: Hardware able to fine-tune and sample from a 1.2B-parameter model. For reference, pre-training ProGen took ~2 weeks on 256 Google Cloud TPU v3 cores; fine-tuning is far less demanding, and generation itself is rapid.

Procedure:

- Data Curation: Collect and clean a dataset of protein sequences belonging to your target family. Annotate sequences with relevant control tags (e.g., Pfam ID, biological process).

- Model Fine-Tuning: Perform computationally inexpensive gradient updates to the pre-trained ProGen model using the curated dataset. This adapts the model to the specific distribution of your target family [30].

- Sequence Generation:
  - Provide the Pfam ID or other relevant control tags as input to constrain generation.
  - Optionally, provide a few amino acids at the beginning of the sequence as a primer.
  - The model autoregressively generates the sequence left to right, token by token, until an "end of sequence" token is produced.

- Sequence Filtering and Ranking: Rank the generated sequences using a combination of:
  - Adversarial Discriminator: A model trained to distinguish real from generated sequences, used to score "naturalness" [30].
  - Model Log-Likelihood: The model's own probability assessment of the generated sequence [30].
  - Select top-ranked sequences that span a range of maximum sequence identities to any natural protein ("max ID", e.g., 40-90%) to explore diverse functional candidates.

- Experimental Validation: Proceed to in vitro or in vivo testing of selected artificial sequences.
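The filtering and selection steps can be sketched as below. Several pieces are assumptions for illustration: both scores are taken as precomputed inputs (higher meaning more natural-looking), they are combined by averaging ranks rather than by whatever weighting the original study used, and "max ID" is approximated with difflib's matching-block ratio instead of a proper alignment tool such as BLAST or MMseqs2. The bin edges and the per_bin count are hypothetical.

```python
from difflib import SequenceMatcher


def percent_identity(query, reference):
    """Rough identity proxy: residues in matching blocks / query length."""
    matcher = SequenceMatcher(None, query, reference, autojunk=False)
    matched = sum(block.size for block in matcher.get_matching_blocks())
    return 100.0 * matched / len(query)


def max_identity(query, natural_seqs):
    """'Max ID': highest identity of the query to any natural sequence."""
    return max(percent_identity(query, ref) for ref in natural_seqs)


def rank_and_select(candidates, natural_seqs,
                    bins=((40, 60), (60, 75), (75, 90)), per_bin=2):
    """candidates: dicts with 'seq', 'log_likelihood' and 'disc_score'
    (higher = more natural-looking for both). Returns up to per_bin
    top-ranked candidates from each max-ID bin [lo, hi)."""
    n = len(candidates)
    avg_rank = [0.0] * n
    for key in ("log_likelihood", "disc_score"):
        order = sorted(range(n), key=lambda i: candidates[i][key], reverse=True)
        for rank, i in enumerate(order):
            avg_rank[i] += rank / 2  # average of the two ranks (0 = best)
    ranked = [candidates[i] for i in sorted(range(n), key=lambda i: avg_rank[i])]
    scored = [(c, max_identity(c["seq"], natural_seqs)) for c in ranked]
    selected = []
    for lo, hi in bins:
        in_bin = [c for c, ident in scored if lo <= ident < hi]
        selected.extend(in_bin[:per_bin])
    return selected
```

Binning before taking the top candidates is what keeps the final set diverse: without it, the highest-scoring sequences tend to be near-copies of natural proteins.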

Structure-Based Design Tools (ProteinMPNN)

ProteinMPNN is a deep learning-based protein sequence design method that solves the inverse folding problem: given a protein backbone structure, it predicts amino acid sequences that will fold into that structure. It outperforms physically-based approaches like Rosetta in both computational speed and native sequence recovery [32].

ProteinMPNN is a message passing neural network (MPNN) that uses protein backbone features—distances between atoms (N, Cα, C, Cβ, O), relative frame orientations, and rotations—as input. Its key architectural features include:

- Order-agnostic autoregressive decoding: The decoding order is randomly sampled, enabling design of fixed regions and multi-chain complexes.

- Symmetry awareness: For homo-oligomers, logits can be averaged between symmetric positions to enforce identical sequences.

- Noise training: Models trained with Gaussian noise (std=0.02Å) added to backbone coordinates show improved performance on AlphaFold-generated models [32].
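These decoding features can be illustrated with a toy sampler. This is a sketch, not ProteinMPNN's implementation: the per-position logits are hypothetical static inputs (the real model recomputes them at every step, conditioned on the backbone and on residues already decoded), and the function name and argument layout are invented for illustration. It shows a randomly sampled decoding order, fixed positions, omitted amino acids, averaging of logits across tied (symmetric) positions, and temperature-scaled sampling.

```python
import math
import random

ALPHABET = "ACDEFGHIKLMNPQRSTVWY"


def sample_sequence(logits, native, fixed=(), omit="", tied=(), temperature=0.1, seed=0):
    """Toy order-agnostic decoder (illustrative, not ProteinMPNN's code).

    logits[i][a]: hypothetical static score for ALPHABET[a] at position i
    native:       sequence supplying residues for fixed positions
    fixed:        0-based positions copied from `native`
    omit:         amino acids that may never be sampled, e.g. "C"
    tied:         groups of positions that must share one residue
    """
    rng = random.Random(seed)
    seq = [None] * len(logits)
    for i in fixed:
        seq[i] = native[i]
    group_of = {i: tuple(group) for group in tied for i in group}
    order = list(range(len(logits)))
    rng.shuffle(order)  # decoding order is sampled at random
    for i in order:
        if seq[i] is not None:
            continue  # fixed, or already filled via a tied partner
        group = group_of.get(i, (i,))
        # Average logits across tied positions so they share one distribution.
        avg = [sum(logits[j][a] for j in group) / len(group) for a in range(len(ALPHABET))]
        allowed = [a for a, aa in enumerate(ALPHABET) if aa not in omit]
        top = max(avg[a] for a in allowed)  # subtract max for numerical stability
        weights = [
            math.exp((avg[a] - top) / temperature) if a in allowed else 0.0
            for a in range(len(ALPHABET))
        ]
        choice = rng.choices(ALPHABET, weights=weights, k=1)[0]
        for j in group:
            if seq[j] is None:  # never overwrite a fixed residue
                seq[j] = choice
    return "".join(seq)
```

Dividing logits by a small temperature sharpens the distribution toward the highest-scoring residue, which is why low temperatures give more conservative designs.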

Table 2: ProteinMPNN Performance Comparison with Rosetta

| Metric | ProteinMPNN | Rosetta |
|---|---|---|
| Native Sequence Recovery (PDB Test) | 52.4% | 32.9% |
| Computational Time (100 residues) | ~1.2 seconds | ~4.3 minutes |
| Core Residue Recovery | ~90-95% | Lower (exact % not specified) |
| Surface Residue Recovery | ~35% | Lower (exact % not specified) |

Application Notes

ProteinMPNN's flexibility makes it applicable to a wide range of design challenges:

- De novo protein design: Generating sequences for novel backbone scaffolds.

- Multi-chain protein complexes: Designing interfaces for heteromers and homomers with controlled symmetry.

- Rescue of failed designs: Successfully redesigning sequences for previously failed designs of monomers, cyclic homo-oligomers, tetrahedral nanoparticles, and target-binding proteins [32].

- Robust design on predicted structures: Effective performance on AlphaFold2-predicted backbone models when trained with backbone noise.

In one application, ProteinMPNN was combined with deep learning-based structure assessment (AlphaFold, RoseTTAFold), leading to a nearly 10-fold increase in protein design success rates [4].

Protocol: Fixed-Backbone Sequence Design with ProteinMPNN

Purpose: To design a novel amino acid sequence that folds into a given protein backbone structure, which can be experimentally determined (e.g., from PDB) or computationally predicted (e.g., from AlphaFold).

Materials:

- Input protein structure in PDB format

- ProteinMPNN implementation (available via NVIDIA NIM API or local installation)

- Specifications for any design constraints (e.g., fixed positions, omitted AAs, symmetry requirements)

Procedure:

- Input Preparation: Provide the input protein backbone structure via the input_pdb parameter (file or pre-uploaded asset). The structure should include at least Cα atoms, though full atomic detail is beneficial.

- Parameter Configuration:
  - Chain Selection: Specify input_pdb_chains to design specific chains; all chains are designed by default.
  - Number of Sequences: Set num_seq_per_target (default: 1) to generate multiple sequence candidates.
  - Diversity Control: Adjust sampling_temp (range 0.1-0.3); lower values produce more conservative designs.
  - Model Type: Choose between the soluble model (use_soluble_model=true) and the non-soluble model based on the target protein's intended environment [33].

- Apply Design Constraints (Optional):
  - Fixed Positions: Use fixed_positions_jsonl to specify residues that must remain unchanged (e.g., catalytic triad residues, binding site motifs).
  - Omitted Amino Acids: Use omit_AAs or omit_AA_jsonl to exclude specific amino acids (e.g., cysteine to prevent disulfide formation).
  - Symmetry Handling: For symmetric complexes, use tied_positions_jsonl to enforce identical residues at corresponding positions.
  - Evolutionary Guidance: Use a PSSM (pssm_jsonl) and associated parameters to bias designs toward natural conservation patterns [33].

- Run Sequence Design: Execute the model. The order-agnostic decoder will autoregressively generate sequences based on the input backbone features and constraints.

- Output Analysis: Review the generated sequences in multi-FASTA format (mfasta). Use the provided scores (log-probabilities) and probs (positional probabilities) to assess sequence quality and variability [33].
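For the output analysis step, a small helper can parse the multi-FASTA output and rank designs. Assumption: headers carry comma-separated key=value fields such as score=0.85, as in the FASTA files written by the local ProteinMPNN scripts. The exact header layout returned by a given deployment (including the NIM API) may differ, so check it before relying on this parser. The sketch also assumes, as in those scripts, that lower score values (an average negative log-probability) indicate designs the model considers more likely.

```python
def parse_header(header):
    """Split comma-separated 'key=value' fields (assumed header layout).
    Note: values that themselves contain commas, such as chain lists,
    would be split incorrectly by this simple parser."""
    fields = {}
    for part in header.split(","):
        if "=" in part:
            key, value = part.split("=", 1)
            fields[key.strip()] = value.strip()
    return fields


def parse_mfasta(text):
    """Return a list of (header_fields, sequence) pairs from multi-FASTA text."""
    records, header, chunks = [], None, []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            if header is not None:
                records.append((header, "".join(chunks)))
            header, chunks = parse_header(line[1:]), []
        else:
            chunks.append(line)  # sequences may wrap over several lines
    if header is not None:
        records.append((header, "".join(chunks)))
    return records


def rank_designs(records, key="score"):
    """Sort designs that carry `key` in ascending order (lower assumed better)."""
    return sorted((float(fields[key]), seq) for fields, seq in records if key in fields)
```

Records without the chosen key are skipped rather than guessed at, so a native-sequence record with a different header layout will not silently distort the ranking.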

Fitness Predictors and the Automated Recommendation Tool (ART)

Fitness predictors estimate the functional quality of protein sequences or strains, bridging the Learn and Design phases of the DBTL cycle. The Automated Recommendation Tool (ART) is a machine learning tool specifically tailored for synthetic biology that uses Bayesian ensemble approaches to predict strain production levels and recommend improved designs [34].

ART is designed for the data-sparse environments typical of biological research. Its key features include:

- Uncertainty Quantification: Provides full probability distributions for predictions, not just point estimates, enabling risk assessment.

- Adaptation to DBTL cycles: Recursively incorporates data from previous engineering cycles.

- Multiple objective support: Maximization, minimization, and target value optimization for metabolic engineering goals.

- Integration with data repositories: Can import data directly from the Experiment Data Depot (EDD) or from EDD-style .csv files [34].

Application Notes

ART and similar fitness predictors guide engineering campaigns when a direct sequence-to-function mapping is needed. Applications include:

- Metabolic engineering: Mapping proteomics data or promoter combinations to production titers of valuable molecules [34].

- Pathway optimization: Predicting optimal enzyme combinations and expression levels in biosynthetic pathways.

- Informed library design: Recommending which strains to build in the next DBTL cycle to maximize information gain and production.

In experimental validation, ART was used to improve tryptophan productivity in yeast by 106% from the base strain. It has also been successfully applied to projects involving renewable biofuels, fatty acids, and hoppy flavored beer without hops [34].

Protocol: Guiding Protein Engineering with ART

Purpose: To use the Automated Recommendation Tool (ART) to recommend and predict the performance of new protein or strain variants in an iterative DBTL cycle.

Materials:

- Dataset of characterized variants (inputs and corresponding response variables, e.g., production levels)

- ART software (leveraging scikit-learn and Bayesian ensemble methods)

- Specification of engineering objective (e.g., maximize production)

Procedure:

- Data Integration: Import your experimental dataset into ART, either directly from the Experiment Data Depot (EDD) or via EDD-style .csv files. The dataset should include both input variables (e.g., proteomics data, promoter combinations, sequence features) and the response variable (e.g., enzyme activity, product titer) [34].

- Model Training: Train ART's ensemble model on the available data. The model will learn the statistical relationships between your inputs and the response variable. ART is particularly tailored for small datasets (<100 instances) typical in early DBTL cycles [34].

- Define Objective: Specify the desired engineering objective (e.g., "Maximize limonene production").

- Generate Recommendations:
  - ART uses sampling-based optimization to provide a set of recommended inputs (e.g., proteomic profiles) predicted to achieve your goal.
  - Each recommendation comes with a probabilistic prediction of the outcome, allowing you to balance the potential reward against the prediction uncertainty [34].

- Build and Test Recommended Variants: Synthesize and experimentally characterize the top recommended variants.

- Iterate the DBTL Cycle: Incorporate the new experimental results back into ART's dataset and retrain the model to inform the next round of design, creating a closed-loop optimization system.
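The recommend-build-test loop above can be sketched without ART itself. The example below is a stand-in, not ART's API or algorithm: instead of ART's Bayesian ensemble of scikit-learn models, it uses a bootstrap ensemble of k-nearest-neighbour regressors to obtain a predictive mean and spread, then ranks candidate inputs by an optimistic bound (mean plus kappa times the standard deviation) to trade predicted reward against uncertainty. The data layout, kappa, and all function names are hypothetical.

```python
import random
import statistics


def knn_predict(train, x, k=3):
    """Mean response of the k training points closest to x (squared Euclidean)."""
    nearest = sorted(train, key=lambda row: sum((a - b) ** 2 for a, b in zip(row[0], x)))
    top = nearest[:k]
    return sum(y for _, y in top) / len(top)


def ensemble_predict(data, x, n_models=50, k=3, seed=0):
    """Bootstrap ensemble of k-NN regressors: returns (mean, std) at x."""
    rng = random.Random(seed)  # same seed -> same resamples for every candidate
    preds = []
    for _ in range(n_models):
        resample = [rng.choice(data) for _ in data]  # sample with replacement
        preds.append(knn_predict(resample, x, k))
    return statistics.mean(preds), statistics.pstdev(preds)


def recommend(data, candidates, n_recs=2, kappa=1.0, objective="maximize"):
    """Rank candidates by an optimistic bound: mean + kappa*std when maximizing,
    or -mean + kappa*std (lowest optimistic value first) when minimizing."""
    if objective not in ("maximize", "minimize"):
        raise ValueError("objective must be 'maximize' or 'minimize'")
    sign = 1.0 if objective == "maximize" else -1.0
    scored = []
    for x in candidates:
        mean, std = ensemble_predict(data, x)
        scored.append((sign * mean + kappa * std, x, mean, std))
    scored.sort(key=lambda item: item[0], reverse=True)
    return [(x, mean, std) for _, x, mean, std in scored[:n_recs]]
```

Here data holds (inputs, response) pairs from earlier cycles, for example normalized promoter strengths paired with a measured titer. After building and testing the recommended variants, appending the new pairs to data and calling recommend again closes the loop described in the last step. ART's target-value objective is not covered by this sketch.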

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for ML-Driven Protein Design

| Resource / Tool | Type | Primary Function | Access / Source |
|---|---|---|---|
| ESM Models | Pre-trained Protein Language Model | Protein structure/function prediction, variant effect, inverse folding | GitHub: facebookresearch/esm, HuggingFace, TorchHub [31] |
| ProGen | Pre-trained Protein Language Model | Conditional generation of novel protein sequences | Request from authors [30] |
| ProteinMPNN | Structure-Based Sequence Design Tool | Fixed-backbone sequence design for monomers & complexes | NVIDIA NIM API, GitHub [32] [33] |
| Automated Recommendation Tool (ART) | Fitness Prediction & Recommendation | Recommending high-performing strains/variants based on experimental data | Software library [34] |
| Cell-Free Expression System | Experimental Testing Platform | Rapid, high-throughput protein synthesis and testing without live cells | Commercially available kits (e.g., from Arbor Biosciences, NEB) [4] |
| Experiment Data Depot (EDD) | Data Management Platform | Standardized storage and management of experimental data and metadata | Online tool [34] |

Integrated Workflow: From Learning to Functional Proteins

The true power of these ML technologies is realized when they are integrated into a cohesive workflow. The proposed LDBT paradigm begins with the extensive prior knowledge encoded in pre-trained models, fundamentally accelerating the engineering process [4].

Integrated Protocol: ML-Driven DBTL Cycle for Enzyme Engineering