Accelerating Innovation: A Guide to Rapid Prototyping Workflows in Synthetic Biology

Abstract

This article provides a comprehensive overview of rapid prototyping workflows that are revolutionizing synthetic biology. Aimed at researchers, scientists, and drug development professionals, it explores the foundational principles of iterative Design-Build-Test-Learn (DBTL) cycles and their critical role in accelerating the development of genetic circuits, microbial cell factories, and therapeutic agents. The scope spans from core concepts and key tools like combinatorial optimization and AI-driven design to practical applications in metabolic engineering and cell-free systems. It further addresses common troubleshooting challenges, optimization strategies for enhanced yield and stability, and essential validation and comparative analysis techniques to ensure reproducibility and robust performance. This guide synthesizes current methodologies to empower scientists in building more predictable and efficient biological systems.

The Principles and Power of Rapid Prototyping in Bio-Design

Rapid prototyping is a foundational methodology in synthetic biology, enabling the accelerated development and optimization of biological systems. At its core, it involves the iterative application of the Design-Build-Test-Learn (DBTL) cycle, a framework that systematically guides the engineering of organisms to perform specific functions, such as producing therapeutics or valuable chemicals [1]. The traditional DBTL cycle begins with the Design of biological parts, proceeds to the physical Build of DNA constructs, moves to the experimental Test of function, and concludes with the analysis and Learn phase to inform the next design iteration [2] [3].

However, the landscape of biological prototyping is undergoing a significant transformation. The integration of artificial intelligence (AI) and machine learning (ML) is reshaping the classic DBTL cycle, with some proposing a new LDBT (Learn-Design-Build-Test) paradigm where machine learning, trained on vast biological datasets, precedes and guides the design phase [4]. Furthermore, the adoption of cell-free protein synthesis (CFPS) systems is dramatically accelerating the Build and Test phases by decoupling gene expression from living cells, enabling faster iteration and high-throughput experimentation [5] [4]. This convergence of computational and experimental technologies is pushing the field toward a future of more predictable biological engineering, where the first design might simply work—a "Design-Build-Work" ideal [6].

The Core DBTL Framework and Its Evolution

The Phases of the Traditional DBTL Cycle

The DBTL cycle is an iterative engineering framework that provides structure to the complex process of biological design [2] [3].

- Design: In this initial phase, researchers define the objectives for the desired biological function. This involves designing new genes, selecting genetic parts from libraries, or using computational models to simulate the anticipated behavior of the system. The design relies on domain knowledge, expertise, and computational tools [4] [3].

- Build: This phase concerns the physical construction of the designed DNA fragments and their introduction into a host cell or system. Key enabling technologies include gene synthesis and genome editing tools like CRISPR-Cas9 [3]. Automation and robotic liquid handling in biofoundries have standardized and increased the throughput of this stage [2].

- Test: Once constructs are built, they are rigorously characterized to measure performance against the desired outcome. High-throughput sequencing and various functional assays are used to collect performance data [3] [1]. This phase generates the critical data required for validation and learning.

- Learn: In the final phase, data from the Test phase is analyzed to understand the success or failure of the design. This learning informs the subsequent design round, and the DBTL cycle is repeated until the desired function is robustly achieved [4] [1].
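
The loop structure of the four phases can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not a real workflow: `measure` is a hypothetical stand-in for the Build and Test phases (its optimum at 0.6 is arbitrary), and the Learn step is a simple keep-the-best heuristic rather than a statistical or machine learning model.

```python
import random

def measure(strength: float) -> float:
    """Hypothetical stand-in for Build + Test: output peaks at strength 0.6."""
    return 1.0 - (strength - 0.6) ** 2

def dbtl(cycles: int = 5, library_size: int = 8, seed: int = 0) -> float:
    """Run a toy DBTL loop and return the best design parameter found."""
    rng = random.Random(seed)
    best, spread = 0.1, 0.5          # starting design and search width
    for _ in range(cycles):
        # Design: propose variants around the current best design
        designs = [min(1.0, max(0.0, best + rng.uniform(-spread, spread)))
                   for _ in range(library_size)]
        designs.append(best)         # keep the incumbent so results never regress
        # Build + Test: score every design
        results = {d: measure(d) for d in designs}
        # Learn: keep the top performer and narrow the next search
        best = max(results, key=results.get)
        spread *= 0.5
    return best
```

Because the incumbent design is carried into every round, the measured output of the returned design is never worse than that of the starting design.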

The LDBT Paradigm: A Machine-Learning First Approach

A paradigm shift is emerging where "Learning" precedes "Design" [4]. This LDBT cycle leverages powerful machine learning models that have been pre-trained on megascale biological datasets. These models can make zero-shot predictions—designing functional biological parts without the need for additional training or multiple DBTL iterations [4].

For instance, protein language models (e.g., ESM, ProGen) learn from evolutionary relationships embedded in millions of protein sequences, enabling them to predict beneficial mutations and infer function [4]. Structure-based tools like ProteinMPNN can design sequences that fold into a given protein backbone, leading to a nearly 10-fold increase in design success rates for applications such as engineering TEV protease variants with improved catalytic activity [4]. This approach, when combined with rapid cell-free testing, allows researchers to start with a large, in-silico-generated knowledge base, effectively compressing the traditional iterative cycle.

The Role of Biofoundries and Automation

Biofoundries are automated, high-throughput facilities that strategically integrate robotics, liquid handling systems, and bioinformatics to streamline the entire synthetic biology workflow [2]. They are physical hubs where the DBTL cycle is executed at scale and with high precision. These facilities consolidate foundational technologies to accelerate the engineering of biological systems, making the rapid exploration of vast design spaces feasible [2]. The establishment of the Global Biofoundry Alliance (GBA) underscores the importance of shared resources and standardized protocols in advancing the field's capabilities [2].

Accelerating Prototyping with Cell-Free Systems and AI

Cell-Free Protein Synthesis (CFPS) as a Prototyping Platform

Cell-free protein synthesis (CFPS) platforms use the transcriptional and translational machinery from cell lysates or purified components to express proteins in an open, test-tube environment [5]. This technology is transformative for rapid prototyping because it decouples gene expression from the constraints of cell viability and growth [5].

Key advantages of CFPS for prototyping include:

- Speed: CFPS reactions are rapid, yielding >1 g/L of protein in less than 4 hours, and they eliminate time-consuming cloning and transformation steps [4] [5].

- Direct Control: The open nature of the system allows direct manipulation of reaction conditions, including enzyme concentrations, cofactor levels, and energy sources [5].

- High-Throughput Compatibility: CFPS is easily miniaturized to picoliter scales and automated using liquid-handling robots, enabling the screening of thousands of protein variants or pathway combinations in parallel [4] [5].

- Expression of Toxic Proteins: It allows for the expression of proteins that would be lethal to a living host [5].

These features make CFPS particularly valuable for metabolic pathway prototyping, enzyme engineering, and biosensor development [5]. For example, the in vitro prototyping and rapid optimization of biosynthetic enzymes (iPROBE) method uses CFPS to generate training data for a neural network, which then predicts optimal pathway sets, leading to a more than 20-fold improvement in product yield [4].

Integrating Machine Learning for Predictive Design

Machine learning (ML) and deep learning (DL) are powerful catalysts for the DBTL cycle [3]. They address the core challenge of biological complexity by capturing non-linear, high-dimensional interactions within data that are intractable for traditional biophysical models [7] [3]. The synergy between ML and synthetic biology is mutually reinforcing: synthetic biology generates the large-scale datasets needed to train accurate models, and these models, in turn, inform and optimize biological design [3].

This integration is exemplified by context-aware biosensor design. In one study, a library of FdeR-based naringenin biosensors was built and characterized under different conditions. A biology-guided machine learning model was then developed to describe the biosensor's dynamic behavior and predict the optimal genetic and environmental combinations for a desired performance specification [7]. This creates a powerful, data-driven DBTL pipeline for optimizing biological parts for specific applications.

Table 1: Key Machine Learning Applications in Biological Prototyping

| ML Application | Function | Example Tool/Use Case |
|---|---|---|
| Protein Language Models | Predicts protein structure and function from sequence; enables zero-shot design. | ESM, ProGen; designing antibody sequences and predicting beneficial mutations [4]. |
| Structure-Based Design | Designs protein sequences that fold into a specific backbone structure. | ProteinMPNN; engineering stabilized variants of TEV protease [4]. |
| Fitness Landscape Mapping | Predicts the effect of mutations on protein properties like stability and solubility. | Prethermut, Stability Oracle; predicting ΔΔG of mutations for thermostability engineering [4]. |
| Context-Aware Modeling | Predicts the performance of genetic circuits under varying environmental conditions. | Mechanistic-guided ML for optimizing naringenin biosensor response in different media [7]. |

Application Note: Prototyping a Naringenin Biosensor

This application note details a protocol for developing and optimizing a transcription factor-based biosensor for naringenin, a valuable flavonoid compound. The workflow employs a DBTL cycle enhanced by biology-guided machine learning to account for context-dependent performance [7].

Experimental Protocol

Design and Build Phases: Biosensor Library Construction

Objective: Combinatorially assemble a library of biosensor constructs to explore a wide design space.

Materials:

- DNA Parts: A collection of 4 promoters (P1-P4) and 5 ribosome binding sites (RBSs) of different strengths [7].

- Backbone Vectors: Plasmids for the reporter module (fdeO-GFP) and the TF expression module.

- Enzymes: Restriction enzymes or assembly mix (e.g., Golden Gate assembly).

- Chassis: Escherichia coli strains for transformation and characterization.

Procedure:

- Design: Plan the combinatorial assembly of the FdeR transcription factor module using the 4 promoters and 5 RBSs. The resulting modules will be assembled with a second module containing the FdeR operator and a GFP reporter gene [7].

- Build: Perform automated DNA assembly according to the designed plan. In the referenced study, this process successfully built 17 distinct constructs out of the 20 possible promoter-RBS combinations [7].

- Transform: Introduce the assembled constructs into the E. coli chassis for testing.
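
The combinatorial design space described above can be enumerated with a short script. This is a minimal sketch: the promoter labels P1-P4 come from the materials list, while the RBS labels and the construct naming scheme are hypothetical placeholders chosen for illustration.

```python
from itertools import product

promoters = ["P1", "P2", "P3", "P4"]
rbs_sites = [f"RBS{i}" for i in range(1, 6)]   # hypothetical names for the 5 RBSs

# Full factorial design space: every promoter paired with every RBS
library = [f"{p}-{r}-FdeR" for p, r in product(promoters, rbs_sites)]

print(len(library))   # 20 possible TF-module designs
```

Enumerating the full space first makes it easy to track which designs were actually built and which dropped out during assembly.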

Test Phase: Characterizing Dynamic Response

Objective: Measure the biosensor's fluorescence output in response to naringenin under different environmental conditions.

Materials:

- Culture Media: A variety of media such as M9, SOB, and others [7].

- Carbon Sources/Supplements: Glucose, glycerol, sodium acetate [7].

- Inducer: Naringenin stock solution (e.g., 400 µM working concentration) [7].

- Equipment: Microplate reader, incubating shaker, automated liquid handler.

Procedure:

- Inoculation: Grow overnight cultures of the transformed biosensor strains.

- Experimental Setup: Use a D-optimal design of experiments (DoE) to plan informative combinations of genetic constructs (from the Build phase), media, and supplements. Set up deep-well plates with these condition combinations [7].

- Induction and Measurement: Dilute cultures into fresh media in assay plates. Add naringenin inducer. Incubate and measure optical density (OD600) and GFP fluorescence (e.g., excitation 485 nm, emission 520 nm) over 7 hours to capture dynamic response [7].

- Data Collection: Record fluorescence and OD measurements at regular intervals. Normalize fluorescence by OD to calculate normalized output.
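
The normalization step can be sketched as follows. The blank subtraction and the minimum-OD cutoff are assumptions added here as common plate-reader practice; they are not taken from the referenced study, and the function names are illustrative.

```python
def normalize(fluorescence, od600, blank_fluor=0.0, blank_od=0.0, min_od=0.05):
    """Blank-subtracted fluorescence per unit OD600 for one time series.

    Points where the corrected OD is below min_od are returned as None,
    since dividing by a near-zero OD amplifies noise.
    """
    out = []
    for f, od in zip(fluorescence, od600):
        od_corr = od - blank_od
        out.append((f - blank_fluor) / od_corr if od_corr >= min_od else None)
    return out

def fold_induction(induced, uninduced):
    """Ratio of normalized output with and without naringenin."""
    return induced / uninduced
```

For example, `normalize([100, 300], [0.02, 0.5])` skips the first time point (OD too low) and reports 600.0 for the second.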

Learn Phase: Model-Guided Analysis and Optimization

Objective: Analyze data to build a predictive model and identify optimal biosensor designs.

Materials: Computational resources, statistical software, machine learning frameworks.

Procedure:

- Data Analysis: Fit a mechanistic model of the biosensor's dynamic response to the collected data. Calibrate model parameters using bagging to create an ensemble of models [7].

- Machine Learning: Use the calibrated parameters to train a deep learning-based predictive model. This model will account for context-dependence (promoter strength, RBS, media) [7].

- Prediction and Validation: Use the trained model to predict the best combinations of genetic parts and growth conditions to achieve a desired biosensor specification (e.g., dynamic range, sensitivity). Validate the top predictions experimentally.
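
Bagging (bootstrap aggregating), mentioned in the Data Analysis step, can be illustrated with the standard library alone. In this sketch a straight-line least-squares fit stands in for the mechanistic biosensor model, which is not specified here; the function names and the default of 50 models are assumptions.

```python
import random
from statistics import mean

def fit_line(points):
    """Ordinary least-squares fit y = a*x + b; returns (a, b)."""
    xs, ys = zip(*points)
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    a = sum((x - mx) * (y - my) for x, y in points) / sxx
    return a, my - a * mx

def bagged_fits(points, n_models=50, seed=0):
    """Fit one model per bootstrap resample (sampling with replacement)."""
    rng = random.Random(seed)
    fits = []
    while len(fits) < n_models:
        sample = [rng.choice(points) for _ in points]
        if len({x for x, _ in sample}) > 1:   # need at least two distinct x values
            fits.append(fit_line(sample))
    return fits

def predict(fits, x):
    """Ensemble prediction: average over all bootstrap models."""
    return mean(a * x + b for a, b in fits)
```

The spread of the fitted parameters across the ensemble gives a rough indication of how well each parameter is constrained by the data.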

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Biosensor Prototyping

| Item | Function in the Protocol |
|---|---|
| FdeR Transcription Factor | Allosteric TF from Herbaspirillum seropedicae; activates gene expression in response to naringenin binding [7]. |
| Promoter Library (P1-P4) | Provides varying levels of transcriptional strength for the TF gene, tuning the biosensor's input sensitivity [7]. |
| RBS Library (5 variants) | Provides varying levels of translational strength for the TF gene, further fine-tuning the system's response [7]. |
| GFP Reporter Gene | Encodes a green fluorescent protein; its expression under the control of the FdeR operator provides a quantifiable output signal [7]. |
| Cell-Free Protein Synthesis (CFPS) System | An alternative platform for ultra-high-throughput testing of biosensor components without the need for transformation into live cells [5]. |

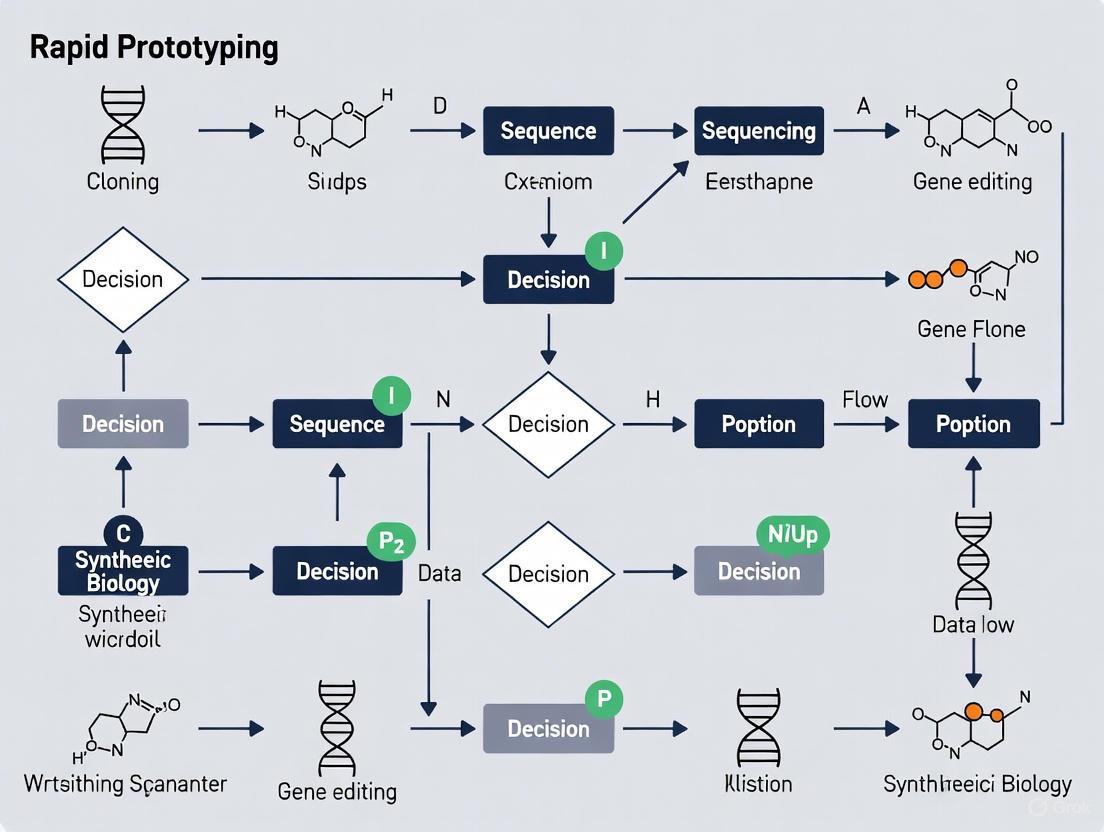

Workflow Visualization

The following diagrams illustrate the core workflows discussed in this application note.

Diagram: The DBTL Cycle in Biofoundries

Diagram: The LDBT Paradigm with Cell-Free Testing

Rapid prototyping in synthetic biology has evolved from a purely iterative DBTL process to an accelerated, intelligent workflow powered by cell-free systems and machine learning. The integration of CFPS enables the megascale testing necessary to generate high-quality data, while ML models turn this data into predictive power for future designs. This synergistic approach, often embodied in automated biofoundries, is reducing the time and cost of biological engineering. It is pushing the field closer to the ultimate goal of predictable and reliable "Design-Build-Work" outcomes, thereby accelerating the development of novel biologics, biosensors, and sustainable bioprocesses for drug development and beyond.

Synthetic biology has undergone a fundamental transformation, evolving from a discipline focused on characterizing individual genetic parts to one capable of designing and implementing complex, multi-component systems. This evolution has been driven by the adoption of engineering principles, particularly the Design-Build-Test-Learn (DBTL) cycle, and enabled by the rise of automated biofoundries. This application note details the protocols and methodologies that underpin this shift, providing researchers with a framework for implementing rapid prototyping workflows essential for advanced therapeutic development and biomanufacturing.

The foundational goal of synthetic biology is the application of engineering principles to design and construct new biological parts, devices, and systems [2]. Initially, research was constrained to the painstaking characterization of single parts, such as promoters and coding sequences, due to technological limitations. The transition to engineering complex systems was necessitated by the understanding that cellular functions inherently arise from interacting molecular networks, not isolated components [8]. Systems biology revealed that most cellular processes occur as networks controlled by sensors, signals, and effectors, creating a foundation for synthetic biology to build upon [8].

This shift was made possible by integrating automation, computational modeling, and machine learning into a standardized workflow. The DBTL cycle has emerged as the central paradigm for this systems-level approach, enabling the iterative optimization required to achieve robust function in complex biological systems [2]. This document outlines the key protocols and reagents that facilitate this modern, systems-oriented approach to synthetic biology.

The DBTL Cycle: Core Protocol for Systems Engineering

The DBTL cycle is the engine of modern synthetic biology. The following protocol, implemented in automated biofoundries, allows for the high-throughput engineering required to move from single parts to complex systems.

Protocol: Implementing an Automated DBTL Workflow

Objective: To complete a full DBTL cycle for the optimization of a multi-gene biosynthetic pathway in a microbial host.

Materials:

- Strain Chassis: Escherichia coli or Saccharomyces cerevisiae.

- DNA Parts: Library of promoter, RBS, coding sequence, and terminator parts.

- Software: DNA assembly design software (e.g., j5, Cello, SynBiopython) [2].

- Hardware: Automated liquid handling robots (e.g., Opentrons), plate readers, next-generation sequencers.

- Culture Vessels: 96-well or 384-well deep-well plates for cell culture.

Methodology:

Design (D) Phase:

- In silico Design: Use software to design genetic constructs. For metabolic pathways, tools like Cameo or RetroPath 2.0 can predict optimal pathways and flux [2].

- Parts Standardization: Design parts using standardized formats (e.g., BioBricks, Golden Gate assemblies) to ensure compatibility.

- Design of Experiments (DoE): Plan a library of constructs that vary key parameters (e.g., promoter strength, gene order) to efficiently explore the design space.

Build (B) Phase:

- Automated DNA Assembly: Use robotic liquid handlers to perform high-fidelity DNA assembly reactions (e.g., Gibson Assembly, Golden Gate) in a 96-well format.

- Transformation: Automatically transform assembled DNA into the microbial chassis and plate onto selective solid media.

- Colony Picking: Use a robotic colony picker to inoculate single colonies into liquid culture in deep-well plates. This protocol can be executed using affordable automation solutions like AssemblyTron, which integrates j5 designs with Opentrons robots [2].

Test (T) Phase:

- High-Throughput Screening: Grow cultures in controlled bioreactor blocks (e.g., BioLector) that monitor growth (OD600) and product formation via fluorescence or absorbance.

- Data Acquisition: Automatically collect samples for analytical methods like HPLC or MS to quantify target metabolite titers, yields, and productivities.
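
The three performance metrics named in the Data Acquisition step follow standard bioprocess definitions, sketched below. The function names and units are illustrative assumptions, not part of any particular software package.

```python
def yield_on_substrate(titer_g_per_l: float, substrate_consumed_g_per_l: float) -> float:
    """Product yield (g product per g substrate consumed)."""
    return titer_g_per_l / substrate_consumed_g_per_l

def volumetric_productivity(titer_g_per_l: float, hours: float) -> float:
    """Average volumetric productivity (g/L/h) over the cultivation time."""
    return titer_g_per_l / hours
```

As a made-up example, a culture reaching a titer of 2.0 g/L after consuming 20 g/L of glucose in 40 hours has a yield of 0.1 g/g and a productivity of 0.05 g/L/h.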

Learn (L) Phase:

- Data Analysis: Use statistical software (e.g., R, Python) to analyze screening data. Employ tools like SuperPlotsOfData to transparently visualize all data points and clearly communicate the results from biological replicates [9].

- Machine Learning: Train machine learning models (e.g., Graph Neural Networks, CNNs) on the collected data to predict the performance of new, untested designs [10] [11]. This model is then used to inform the designs for the next DBTL cycle.

Troubleshooting:

- Low Assembly Efficiency: Verify part purity and concentration; optimize assembly reaction incubation times.

- Poor System Performance: Revisit the DoE in the Design phase to explore a wider region of the genetic space. Check for metabolic burden or toxicity.

Workflow Visualization

The following diagram illustrates the iterative, automated nature of the DBTL cycle.

Quantitative Evolution: From Simple Calculators to Complex Interactomes

The complexity of a biological system can be qualitatively understood by comparing the number and interactions of its molecular components, analogous to comparing a simple calculator to a modern computer [8]. The transition in synthetic biology is quantifiable by the scaling of part counts and the emergence of network-level properties.

Table 1: Quantitative Comparison of Biological Complexity

| System Level | Exemplary Organism/System | Number of Protein Types | Total Molecular Components | Key Network Characteristics |
|---|---|---|---|---|
| Minimal Cell | Mycoplasma genitalium | ~400 [8] | ~1-2 million | Basic essential functions; minimal interactome. |
| Model Bacterium | Escherichia coli | 1,850 [8] | >25 million [8] | Dense metabolic networks; regulated feedback loops. |
| Eukaryotic Cell | Saccharomyces cerevisiae | ~4,300 | >50 million (est.) | Compartmentalization; complex signaling pathways. |
| Complex Biological System | Human PPI Network | ~20,000 | Trillions | Hierarchical community structure, high clustering, assortativity [10]. |

The data in Table 1 shows a dramatic increase in component count from minimal cells to complex organisms. This complexity is managed through interactomes—networks of protein-protein interactions where a single protein can interact with dozens of others, increasing system complexity exponentially [8]. Modern machine learning techniques can now reconstruct the evolution of these complex networks, revealing co-evolution mechanisms like preferential attachment and community structure that were previously difficult to model [10].

The Scientist's Toolkit: Essential Research Reagent Solutions

Engineering complex systems requires a specialized toolkit of reagents, software, and hardware.

Table 2: Key Research Reagent Solutions for Systems Synthetic Biology

| Item Name | Category | Function/Application | Example Product/Software |
|---|---|---|---|
| Standardized Genetic Parts | Biological Reagent | Interchangeable DNA sequences (promoters, RBS, etc.) for modular assembly. | BioBricks, Golden Gate MoClo Parts |
| DNA Assembly Master Mix | Chemical Reagent | Enzymatic mix for seamless and high-efficiency assembly of multiple DNA fragments. | Gibson Assembly Master Mix, Golden Gate Assembly Kit |
| Competent Cells | Biological Reagent | High-efficiency microbial cells for DNA transformation during the Build phase. | NEB 10-beta Competent E. coli |
| Fluorescent Reporters | Biological Reagent | Genes (e.g., GFP, mCherry) used to quantify gene expression and system output in real time. | eGFP, sfGFP |
| j5 DNA Assembly Design Software | Software | Open-source tool for automating the design of complex DNA assemblies [2]. | j5 |
| Cello | Software | Software for automatically designing genetic circuits based on a Verilog description [2]. | Cello |
| Graph Neural Network (GNN) Models | Computational Tool | Machine learning architecture for predicting network behavior and evolution [10] [11]. | Custom GNN Models |
| Opentrons Liquid Handling Robot | Hardware | Affordable, programmable robot for automating liquid transfers in the Build and Test phases. | OT-2 |

Visualizing System Complexity: From Pathways to Interactomes

The move to complex systems requires advanced visualization tools to represent network interactions. The following diagram contrasts a simple linear pathway with a complex interactome, highlighting the emergence of network-level properties.

Case Study Protocol: Rapid Prototyping a Biosynthetic Pathway

The following protocol is based on a real-world success story where a biofoundry was challenged to produce 10 target molecules in 90 days, demonstrating the power of integrated DBTL cycles [2].

Objective: To engineer a microbial strain for the production of a novel small molecule (e.g., a therapeutic precursor).

Experimental Protocol:

Pathway Discovery & Design:

- Use retrosynthesis software (e.g., RetroPath 2.0) to identify potential enzymatic pathways from a target molecule to host metabolites [2].

- Design a library of constructs varying codon usage, promoter strength, and gene order for the identified pathway enzymes.

High-Throughput Build & Test:

- Utilize an automated workflow to synthesize and assemble 1-2 Mb of DNA, constructing hundreds of strains across multiple microbial species (e.g., E. coli, S. cerevisiae, B. subtilis) [2].

- Grow strains in microtiter plates and use rapid, in-house assays (e.g., colorimetric, fluorescence-based) to screen for product formation. In the referenced study, 690 such assays were performed [2].

Machine Learning-Guided Learning:

- Input the screening data (genetic design + product titer) into a deep learning model. For sequence data, use one-hot encoding or more advanced embeddings to represent DNA or protein sequences as model inputs [11].

- Train a Convolutional Neural Network (CNN) or Graph Neural Network (GNN) to predict high-performing designs from sequence or network structure [11] [10].

- The model identifies successful design rules and recommends a new set of constructs for the next DBTL iteration, rapidly converging on an optimized strain.
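
One-hot encoding of a DNA sequence, as mentioned in the first step above, can be sketched without any external libraries. The function name and the choice to encode unknown bases as all-zero rows are assumptions made for this illustration.

```python
def one_hot(seq: str, alphabet: str = "ACGT") -> list[list[int]]:
    """Encode a DNA sequence as a len(seq) x 4 matrix of 0/1 values.

    Characters outside the alphabet (e.g. N) become an all-zero row.
    """
    index = {base: i for i, base in enumerate(alphabet)}
    matrix = []
    for base in seq.upper():
        row = [0] * len(alphabet)
        if base in index:
            row[index[base]] = 1
        matrix.append(row)
    return matrix

print(one_hot("ACGN"))
# [[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 0], [0, 0, 0, 0]]
```

The resulting matrix can be passed to a CNN as a sequence-length by four input; learned embeddings would replace this fixed encoding in more advanced setups.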

Key Outcome: This integrated approach enabled the production of 6 out of 10 target molecules within the aggressive 90-day timeline, showcasing the power of automated, systems-level synthetic biology [2].

The Design-Build-Test-Learn (DBTL) cycle is a foundational framework in synthetic biology and metabolic engineering that enables the systematic and iterative development of biological systems [1]. This engineering-based approach provides a structured methodology for engineering organisms to perform specific functions, such as producing biofuels, pharmaceuticals, or other valuable compounds [1]. The cycle's power lies in its iterative nature: after learning from initial experimental results, genetic constructs can be modified and refined, with the cycle repeated until a biological system is obtained that produces the desired function [1].

Recent technical advances, including rapid DNA assembly, genome editing, comprehensive pathway refactoring, high-throughput screening, and powerful pathway design tools, are enabling increased automation of microbial chemical production processes [12]. Academic and industrial biofoundries are increasingly adopting this engineering approach, which has long been a central element of product development in traditional engineering disciplines [12]. The DBTL cycle effectively organizes biofoundry activities into interoperable levels, streamlining the entire biological engineering process from concept to optimized system [13].

Core Components of the DBTL Cycle

Design Phase

The Design phase involves the in silico selection of candidate enzymes and biological parts to construct a theoretical pathway for the desired function. For any given target compound, bioinformatics tools enable automated pathway and enzyme selection [12]. Reusable DNA parts are then designed with simultaneous optimization of bespoke ribosome-binding sites and enzyme coding regions [12]. Genes and regulatory parts are combined in silico into large combinatorial libraries of pathway designs, which are statistically reduced using design of experiments (DoE) to smaller representative libraries, allowing efficient exploration of the design space with tractable numbers of samples for laboratory construction [12].

Build Phase

The Build stage begins with commercial DNA synthesis, followed by part preparation via PCR, and automated pathway assembly on robotics platforms [12]. After transformation into a suitable microbial chassis, candidate plasmid clones are quality checked by high-throughput automated purification, restriction digest, analysis by capillary electrophoresis, and sequence verification [12]. Automated biofoundries implement this phase as a series of unit operations, the smallest experimental steps that can be carried out by an automated instrument or software tool [13].

Test Phase

In the Test phase, constructs are introduced into selected production chassis and automated multi-well growth/induction protocols are run [12]. Detection of target products and key intermediates from cultures begins with automated extraction followed by quantitative screening, typically involving advanced analytical techniques such as fast ultra-performance liquid chromatography coupled to tandem mass spectrometry with high mass resolution [12]. Data extraction and processing are automated using custom-developed computational scripts, enabling high-throughput evaluation of prototype systems.

Learn Phase

The Learn phase involves identifying relationships between observed production levels and design factors through statistical methods and machine learning [12]. This stage provides critical insights that inform the next Design phase, creating the iterative cycle that progressively improves the biological system. The learning process incorporates both traditional statistical evaluations and model-guided assessments to refine system performance [14]. This knowledge-driven approach accelerates development by building mechanistic understanding while optimizing production strains [14].
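
A simple way to relate production levels to design factors is a main-effects calculation, sketched below. The four screening results are made-up numbers for illustration only, and the factor names are hypothetical; a real analysis would also test significance and fit a statistical or machine learning model.

```python
from statistics import mean

# Illustrative, made-up screening results: (design factors, titer in mg/L)
runs = [
    ({"copy_number": "high", "promoter": "strong"}, 9.0),
    ({"copy_number": "high", "promoter": "weak"},   7.0),
    ({"copy_number": "low",  "promoter": "strong"}, 3.0),
    ({"copy_number": "low",  "promoter": "weak"},   1.0),
]

def main_effect(runs, factor, high, low):
    """Mean titer at the `high` level minus mean titer at the `low` level."""
    def at(level):
        return mean(t for f, t in runs if f[factor] == level)
    return at(high) - at(low)
```

In this toy data set, copy number has the larger main effect (6.0 mg/L) compared with promoter strength (2.0 mg/L), so it would be prioritized in the next Design phase.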

Diagram 1: The iterative DBTL cycle for synthetic biology.

Quantitative Performance of DBTL-Engineered Systems

Table 1: Performance improvements through iterative DBTL cycling

| Application | Initial Titer | Optimized Titer | Fold Improvement | DBTL Cycles | Key Optimization Strategy |
|---|---|---|---|---|---|
| (2S)-Pinocembrin Production [12] | 0.14 mg/L | 88 mg/L | ~500-fold | 2 | Vector copy number optimization, promoter engineering |
| Dopamine Production [14] | 27 mg/L | 69 mg/L | 2.6-fold | 1+ | RBS engineering, host strain engineering |
| Cell-Free Prototyping [15] | Not specified | Significant reduction in cycle time | Not quantified | Multiple | In vitro compartmentalization, ultra-high-throughput screening |

Table 2: Statistical analysis of design factors affecting pinocembrin production

| Design Factor | P Value | Effect on Production | Implementation in Cycle 2 |
|---|---|---|---|
| Vector Copy Number | 2.00 × 10⁻⁸ | Strong positive | High copy number (ColE1) selected for all constructs |
| CHI Promoter Strength | 1.07 × 10⁻⁷ | Strong positive | Positioned at pathway beginning with strong promoter |
| CHS Promoter Strength | 1.01 × 10⁻⁴ | Moderate positive | Varied with no, low, or high strength promoters |
| 4CL Promoter Strength | 1.01 × 10⁻⁴ | Moderate positive | Varied with no, low, or high strength promoters |
| PAL Promoter Strength | 3.06 × 10⁻⁴ | Weak positive | Fixed at last position in operon |
| Gene Order | Not significant | Minimal | CHI fixed first, PAL fixed last, middle genes permuted |

Application Note: Implementation of an Automated DBTL Pipeline for Flavonoid Production

Background and Objective

Flavonoids represent a structurally diverse class of natural products with significant commercial potential, and pinocembrin serves as a key precursor to this diversity [12]. This application note describes the implementation of an automated DBTL pipeline for the rapid prototyping and optimization of a pinocembrin biosynthetic pathway in Escherichia coli.

Experimental Design and Workflow

Pathway Design

The four-enzyme pathway converts L-phenylalanine to (2S)-pinocembrin, requiring malonyl-CoA as a co-substrate [12]. The selected enzymes included phenylalanine ammonia-lyase (PAL), chalcone synthase (CHS) and chalcone isomerase (CHI) from Arabidopsis thaliana, and 4-coumarate:CoA ligase (4CL) from Streptomyces coelicolor [12].

Combinatorial Library Strategy

A comprehensive combinatorial library was designed with multiple engineering parameters:

- Four expression levels through vector backbone selection, combining:
  - Copy number: medium (p15a origin) or low (pSC101 origin)
  - Promoter strength: strong Ptrc or weak PlacUV5

- Intergenic region regulation (strong, weak, or no promoter)

- Gene position permutation (24 arrangements for four genes)

This approach generated 2592 possible configurations, compressed to 16 representative constructs using design of experiments based on orthogonal arrays combined with a Latin square for positional gene arrangement, achieving a compression ratio of 162:1 [12].
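The library size and compression ratio quoted above can be checked with a few lines of arithmetic. The sketch below assumes one plausible breakdown that reproduces the stated total: four vector-level expression settings, three intergenic positions with three promoter options each, and all orderings of four genes. The breakdown itself is an assumption for illustration; only the totals (2592 and 16) come from the text.

```python
from math import factorial

# Assumed breakdown (illustrative; only the totals are given in the text).
vector_levels = 2 * 2          # copy number (2 options) x vector promoter (2 options)
intergenic_options = 3 ** 3    # strong / weak / no promoter at each of 3 gene junctions
gene_orders = factorial(4)     # 24 permutations of four genes

library_size = vector_levels * intergenic_options * gene_orders
compression_ratio = library_size / 16  # 16 constructs were actually built

print(library_size)       # 2592
print(compression_ratio)  # 162.0
```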

Diagram 2: Automated DBTL pipeline for flavonoid production.

Detailed Protocol: Automated DBTL for Pathway Engineering

Design Phase Protocol

- Pathway Identification: Use RetroPath [12] and Selenzyme [12] tools for automated pathway and enzyme selection.

- Parts Design: Design reusable DNA parts with simultaneous optimization of ribosome-binding sites and enzyme coding regions using PartsGenie software [12].

- Library Design: Combine genes and regulatory parts into combinatorial libraries in silico.

- Library Compression: Apply design of experiments (DoE) based on orthogonal arrays to reduce library size to tractable numbers for laboratory construction.

- Worklist Generation: Use PlasmidGenie software to produce assembly recipes and robotics worklists for automated ligase cycling reaction for pathway assembly.
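To make the Library Compression step more concrete, the following sketch illustrates only the Latin-square idea for gene position: it picks four gene orders such that every gene occupies every position exactly once. The cyclic construction and the helper names are assumptions chosen for illustration; the text does not state which square or software routine was actually used, and the orthogonal-array part of the design is not shown.

```python
GENES = ["PAL", "4CL", "CHS", "CHI"]

def cyclic_latin_square(items):
    """Return an n x n Latin square in which row r is `items` rotated left by r."""
    n = len(items)
    return [[items[(r + c) % n] for c in range(n)] for r in range(n)]

def is_latin_square(square):
    """Check that every symbol appears exactly once in each row and each column."""
    n = len(square)
    symbols = set(square[0])
    if len(symbols) != n:
        return False
    rows_ok = all(len(row) == n and set(row) == symbols for row in square)
    cols_ok = all({square[r][c] for r in range(n)} == symbols for c in range(n))
    return rows_ok and cols_ok

# Four gene orders instead of all 24 permutations.
orders = cyclic_latin_square(GENES)
for order in orders:
    print(" -> ".join(order))
```

With this choice, positional effects of each gene can still be estimated because each gene is sampled at every position, while the number of gene orders to build drops from 24 to 4.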

Build Phase Protocol

- DNA Synthesis: Order commercial DNA synthesis of designed parts.

- Part Preparation: Perform part preparation via PCR amplification.

- Automated Assembly: Set up reactions for pathway assembly by ligase cycling reaction on robotics platforms.

- Transformation: Transform assembled constructs into E. coli production chassis.

- Quality Control:

  - Perform high-throughput automated plasmid purification

  - Conduct analytical restriction digest

  - Analyze by capillary electrophoresis

  - Verify by sequence confirmation

Test Phase Protocol

- Strain Preparation: Introduce verified constructs into selected production chassis.

- Cultivation: Execute automated 96-deepwell plate growth and induction protocols.

- Metabolite Extraction: Perform automated extraction of target products and intermediates.

- Quantitative Analysis:

  - Utilize fast UPLC-MS/MS with high mass resolution

  - Apply custom-developed R scripts for data extraction and processing

  - Quantify target product and key pathway intermediates

Learn Phase Protocol

- Statistical Analysis: Identify relationships between observed production levels and design factors using statistical methods.

- Machine Learning: Apply machine learning algorithms to identify non-intuitive relationships in large datasets.

- Hypothesis Generation: Formulate new design hypotheses based on statistical validation.

- Design Optimization: Implement rational redesign for subsequent DBTL cycle.
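As a rough illustration of the Statistical Analysis step, the sketch below computes main effects for a small two-level design: for each factor, the mean response at the high level minus the mean response at the low level. The factor names, the coded design, and all titer values are made up for demonstration. It does not reproduce the statistical tests or P values of the cited study, and it uses Python rather than the R scripts mentioned above.

```python
from statistics import mean

FACTORS = ["copy_number", "chi_promoter", "gene_order"]

# Hypothetical coded design (-1 = low level, +1 = high level) with
# invented titers in mg/L; none of these numbers come from the study.
runs = [
    ((-1, -1, -1), 1.0),
    ((-1, -1, +1), 1.2),
    ((-1, +1, -1), 3.0),
    ((-1, +1, +1), 2.8),
    ((+1, -1, -1), 5.0),
    ((+1, -1, +1), 5.2),
    ((+1, +1, -1), 9.0),
    ((+1, +1, +1), 8.8),
]

def main_effects(runs, factor_names):
    """Main effect of each factor: mean(high-level runs) - mean(low-level runs)."""
    effects = {}
    for i, name in enumerate(factor_names):
        high = [y for levels, y in runs if levels[i] > 0]
        low = [y for levels, y in runs if levels[i] < 0]
        effects[name] = mean(high) - mean(low)
    return effects

effects = main_effects(runs, FACTORS)
# Rank factors by the absolute size of their effect.
for name, effect in sorted(effects.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name}: {effect:+.2f}")
```

In a balanced design like this one, a factor with a large main effect is a natural candidate to fix at its better level in the next cycle, while a factor with a near-zero effect can be deprioritized.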

Results and Discussion

The initial DBTL cycle for pinocembrin production identified vector copy number as the strongest significant factor affecting production titers (P value = 2.00 × 10⁻⁸), followed by CHI promoter strength (P value = 1.07 × 10⁻⁷) [12]. Weaker but significant effects were observed for CHS, 4CL, and PAL promoter strengths [12]. Gene order effects were not statistically significant.

Based on these findings, a second DBTL cycle was implemented with specific design constraints:

- High copy number origin of replication (ColE1) selected for all constructs

- CHI positioned at the beginning of the pathway to ensure direct promoter placement

- 4CL and CHS expression levels varied with positional exchange and promoter strength modulation

- PAL fixed at the 3' end of the assembly as the pathway was not limited by this enzyme's activity

This knowledge-driven redesign successfully established a production pathway improved by 500-fold, with competitive titers up to 88 mg L⁻¹ [12].

Advanced DBTL Applications and Methodologies

Cyberloop for Controller Prototyping

The Cyberloop framework represents an advanced DBTL implementation that accelerates the design process for biomolecular controllers [16]. This testing platform interfaces cellular fluorescence measurements with computer-simulated candidate stochastic controllers in real-time, enabling rapid prototyping of synthetic genetic circuits [16].

Cyberloop Protocol:

- Cell Preparation: Engineer yeast cells with optogenetic tools and fluorescent reporters.

- Real-time Monitoring: Capture periodic microscopy images for automated cell segmentation, tracking, and quantification.

- In Silico Control: Pass quantified readouts from each cell to its own biomolecular controller simulation.

- Optogenetic Activation: Feed controller outputs back to cells via light stimulation using Digital Micromirror Device (DMD) based projection hardware.

- Performance Evaluation: Characterize controller function and derive conditions for optimal biomolecular controller performance.

This approach enables researchers to examine controller impacts, test effects of non-ideal circuit behaviors such as dilution, and qualitatively demonstrate performance improvements with specific network modifications before biological implementation [16].
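The measure, simulate, and actuate loop described in the protocol can be sketched with a toy model. The example below is a deliberately simplified, deterministic stand-in: each "cell" is a one-variable model whose fluorescence rises with light and decays by dilution, and each cell gets its own simulated integral controller. The model equations, parameter values, and function names are assumptions for illustration only; the actual Cyberloop platform uses stochastic controller simulations and live microscopy data.

```python
def simulate_cell(dilution, setpoint=10.0, gain=0.2, dt=0.1, steps=2000):
    """Run one toy cell in closed loop with its own in silico integral controller."""
    x = 0.0  # fluorescence readout of the toy cell (arbitrary units)
    z = 0.0  # integrator state of the simulated controller
    for _ in range(steps):
        error = setpoint - x              # "measure" the cell and compare to target
        z += dt * gain * error            # controller update, computed in silico
        light = max(0.0, z)               # light intensity cannot be negative
        x += dt * (light - dilution * x)  # toy cell response: production minus dilution
    return x

# Three toy cells with different dilution rates, each with its own controller.
finals = {d: simulate_cell(d) for d in (0.3, 0.5, 0.8)}
for dilution, final_x in finals.items():
    print(f"dilution={dilution}: final fluorescence={final_x:.3f}")
```

Even in this simple setting the integral controller drives every cell to the same setpoint despite different dilution rates, which is the kind of behavior one would want to confirm in simulation before committing to a biological implementation.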

Cell-Free Systems for Rapid Prototyping

Cell-free systems (CFS) serve as powerful platforms for rapid prototyping of genetic circuits, metabolic pathways, and enzyme functionality, offering numerous advantages including minimized metabolic interference, precise control of reaction conditions, and shorter DBTL cycles [15]. The introduction of in vitro compartmentalization strategies enables ultra-high-throughput screening in physically separated spaces, significantly enhancing prototyping efficiency [15].

Knowledge-Driven DBTL with In Vitro Investigation

A knowledge-driven DBTL cycle incorporating upstream in vitro investigation enables both mechanistic understanding and efficient strain optimization [14]. This approach uses cell-free protein synthesis systems to test different relative enzyme expression levels before implementing changes in vivo, accelerating strain development [14].

Knowledge-Driven DBTL Protocol:

- In Vitro Testing: Utilize crude cell lysate systems to assess enzyme expression levels and pathway interactions.

- Translation to In Vivo: Apply RBS engineering for precise fine-tuning of relative gene expression in synthetic pathways.

- High-Throughput Construction: Implement automated strain construction for library generation.

- Performance Validation: Evaluate production strains in controlled bioreactor systems.

This methodology has demonstrated successful optimization of dopamine production in E. coli, achieving concentrations of 69.03 ± 1.2 mg/L, a 2.6-fold improvement over previous state-of-the-art production [14].

Research Reagent Solutions

Table 3: Essential research reagents and materials for DBTL workflows

| Reagent/Material | Function/Application | Example Use Case |

|---|---|---|

| NEBuilder HiFi DNA Assembly [17] | DNA assembly method | Rapid one-day workflow from DNA construction to protein expression |

| NEBExpress Cell-free E. coli Protein Synthesis System [17] | Cell-free protein expression | Rapid, automated purification of diverse proteins for screening |

| Ribosome Binding Site (RBS) Libraries [14] | Fine-tuning gene expression | Optimization of relative enzyme expression levels in metabolic pathways |

| Optogenetic Tools [16] | Light-controlled gene expression | Cyberloop framework for testing biomolecular controllers |

| Automated Liquid Handlers [18] | High-throughput laboratory automation | Beckman Coulter Biomek FXP for DNA library construction |

| UPLC-MS/MS Systems [12] | Analytical quantification | High-throughput screening of target compounds and intermediates |

| Design of Experiments Software [12] | Statistical library design | Reduction of combinatorial libraries to tractable sizes |

| Fluorescent Reporters [16] | Real-time monitoring | mCherry and GFP for tracking promoter activity in biosensors |

The Design-Build-Test-Learn cycle represents a powerful, systematic framework for engineering biological systems, enabling rapid iteration and optimization of genetic designs. Through automation, statistical design, and increasingly sophisticated analytical techniques, DBTL cycles dramatically accelerate the development of microbial production strains for diverse applications. The implementation of integrated DBTL pipelines has demonstrated remarkable success in improving production titers by several hundred-fold through just a few iterative cycles. As synthetic biology continues to advance, the DBTL framework provides the foundational methodology for translating biological designs into functional systems with real-world applications in chemical production, therapeutics, and sustainable manufacturing.

The convergence of Clustered Regularly Interspaced Short Palindromic Repeats (CRISPR)-Cas9, advanced DNA synthesis, and sophisticated automated platforms is fundamentally accelerating synthetic biology research. These technologies collectively enable a new paradigm of rapid prototyping, allowing researchers to move quickly from digital design to functional biological systems. This integration is particularly powerful for applications in therapeutic development, where precision, speed, and scalability are paramount [19]. The workflow begins with in silico design of genetic constructs, proceeds to their physical synthesis, and culminates in automated, high-throughput testing and analysis—compressing development timelines that once required months into weeks [19] [20]. These integrated systems are underpinned by artificial intelligence (AI), which enhances the precision of gene editing and optimizes the design of synthetic DNA components, thereby improving the efficiency and success rate of the entire prototyping cycle [21] [22].

A quantitative understanding of the market and application landscape for these technologies is crucial for strategic planning and resource allocation in research and development.

Table 1: Global CRISPR-Based Gene Editing Market Forecast (2024-2034)

| Metric | 2024 Value | 2025 Value | 2034 Projected Value | CAGR (2025-2034) |

|---|---|---|---|---|

| Market Size | USD 4.04 Billion | USD 4.46 Billion | USD 13.39 Billion | 13.00% [22] |

| By Technology | | | | |

| ⋯ CRISPR/Cas9 Share | 55% | | | |

| ⋯ CRISPR/Cas12 Growth | 12.3% [22] | | | |

| By Modality | | | | |

| ⋯ Ex Vivo Editing Share | 53% | | | |

| ⋯ In Vivo Editing Growth | 12.5% [22] | | | |

Table 2: Global DNA Synthesis Market Forecast and Key Segments

| Category | 2024 Market Size | 2034 Projected Market Size | CAGR (2025-2034) |

|---|---|---|---|

| Overall DNA Synthesis Market | USD 4,980 Million | USD 30,320 Million | 19.8% [23] |

| Service Segment (2024 Share) | | | |

| ⋯ Oligonucleotide Synthesis | Dominant Segment | | |

| ⋯ Gene Synthesis | Fastest-Growing Segment [23] | | |

| Application Segment (2024) | | | |

| ⋯ Research & Development | Leading Application | | |

| ⋯ Therapeutics | Fastest-Growing Application [23] | | |

CRISPR-Cas9 Mechanism and Workflow

The CRISPR-Cas9 system functions as a programmable gene-editing tool derived from a bacterial immune mechanism. Its core components are the Cas9 nuclease, which acts as a "molecular scissor," and a guide RNA (gRNA), which directs Cas9 to a specific DNA sequence complementary to its own [24] [25]. The system's operation can be broken down into three critical stages. First, in the recognition and binding phase, the Cas9-gRNA complex scans the genome for a target DNA sequence adjacent to a short Protospacer Adjacent Motif (PAM) [25]. Upon locating a valid target, the complex binds, and Cas9 unwinds the DNA double helix. Second, in the cleavage phase, the bound Cas9 protein introduces a precise double-strand break (DSB) in the DNA [24] [25]. Finally, the cell's innate DNA repair machinery is activated to resolve this break, primarily through two pathways: the error-prone Non-Homologous End Joining (NHEJ), which often results in small insertions or deletions (indels) that disrupt the gene, or the more precise Homology-Directed Repair (HDR), which can be harnessed to insert a new DNA template to correct the gene or insert a new sequence [24] [25].

Diagram 1: CRISPR-Cas9 experimental workflow from gRNA design to analysis.

Protocol: CRISPR-Cas9 Mediated Gene Knockout in Mammalian Cells

This protocol outlines the key steps for generating a gene knockout in a mammalian cell line using the CRISPR-Cas9 system and the NHEJ repair pathway [24] [25].

gRNA Design and Validation

- Design: Select a 20-nucleotide target sequence specific to your gene of interest that is immediately 5' to a PAM sequence (NGG for SpCas9). Utilize AI-driven tools (e.g., CRISPRon, DeepSpCas9) to predict gRNA on-target activity and potential off-target effects [21].

- Synthesis: The designed gRNA sequence is typically ordered as a single-guide RNA (sgRNA). DNA synthesis services can provide clonal genes or ready-to-use sgRNAs [23].

- Validation: Confirm target specificity and absence of significant off-target sites via in silico analysis against the reference genome of your cell line.
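The basic Design rule (a 20-nucleotide target immediately 5' of an NGG PAM) is easy to express in code. The sketch below enumerates candidate SpCas9 sites on both strands of a short sequence. It is a minimal illustration with hypothetical function names and a made-up test sequence; it performs no on-target scoring or off-target search, which is what the dedicated prediction tools named above are for.

```python
import re

_COMPLEMENT = str.maketrans("ACGT", "TGCA")

def reverse_complement(seq):
    """Reverse complement of an uppercase DNA string containing only A, C, G, T."""
    return seq.translate(_COMPLEMENT)[::-1]

def find_spcas9_targets(seq):
    """List (strand, start, protospacer, pam) for each 20-nt site followed by NGG.

    `start` is the 0-based index on the scanned strand; for '-' hits it refers
    to the reverse-complemented sequence, not the input orientation.
    """
    seq = seq.upper()
    hits = []
    for strand, s in (("+", seq), ("-", reverse_complement(seq))):
        # A lookahead is used so that overlapping candidate sites are all reported.
        for m in re.finditer(r"(?=([ACGT]{20})([ACGT]GG))", s):
            hits.append((strand, m.start(), m.group(1), m.group(2)))
    return hits

# Made-up example: one protospacer followed by an AGG PAM.
example = "TTTT" + "ACGT" * 5 + "AGG" + "TTTT"
hits = find_spcas9_targets(example)
for strand, start, protospacer, pam in hits:
    print(strand, start, protospacer, pam)
```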

Delivery of CRISPR Components

- Method Selection: Choose a delivery method appropriate for your cell type.

  - Electroporation: Effective for hard-to-transfect cells like immune cells and stem cells. Mix Cas9 protein or mRNA with sgRNA and electroporate using optimized parameters [24].

  - Lipid Nanoparticles (LNPs): Suitable for in vivo delivery and sensitive cell types in vitro. Formulate CRISPR plasmids or ribonucleoproteins (RNPs) with commercial lipid transfection reagents [24] [26].

  - Viral Vectors (Lentivirus, AAV): Used for stable expression and hard-to-transfect cells. Note the packaging size limitations of AAV (~4.7 kb) [24].

- Preparation: For RNP delivery, pre-complex the purified Cas9 protein with sgRNA at a molar ratio of 1:1.2 to 1:1.5 and incubate at room temperature for 10-15 minutes to form the functional complex.


Cell Culture and Transfection

- Culture your mammalian cells (e.g., HEK293, CHO) according to standard protocols to achieve 70-80% confluency at the time of transfection.

- Perform transfection or electroporation with the prepared CRISPR complexes according to the manufacturer's protocol for your chosen delivery method.

- Include appropriate controls (e.g., cells only, mock transfection, non-targeting gRNA).

Editing Validation and Analysis

- Harvest Genomic DNA: 48-72 hours post-transfection, harvest cells and extract genomic DNA.

- Assay Editing Efficiency: Estimate the initial indel frequency at PCR-amplified target loci using a mismatch cleavage assay (e.g., T7E1) or Sanger trace decomposition (e.g., TIDE).

- Sequence Validation: Clone the PCR products and perform Sanger sequencing of multiple clones, or utilize Next-Generation Sequencing (NGS) for a deep, quantitative analysis of editing outcomes and to screen for potential off-target effects [27].

Advanced Gene Editing Modalities

Beyond traditional CRISPR-Cas9, newer editing technologies offer enhanced precision and expanded capabilities.

- Base Editing: This technology uses a catalytically impaired Cas protein (nCas9) fused to a deaminase enzyme. It does not create DSBs but instead chemically converts one base into another at the target site—Cytosine Base Editors (CBEs) convert C•G to T•A, and Adenine Base Editors (ABEs) convert A•T to G•C. This allows for precise point mutation corrections without relying on HDR [25].

- Prime Editing: Considered a "search-and-replace" technology, prime editing employs a Cas9 nickase fused to a reverse transcriptase and is directed by a specialized prime editing guide RNA (pegRNA). The pegRNA both specifies the target site and encodes the desired edit. This system can mediate all 12 possible base-to-base conversions, as well as small insertions and deletions, without requiring DSBs or donor DNA templates, thereby minimizing indel byproducts [21] [25].

DNA Synthesis and Automated Workflows

The ability to rapidly and accurately synthesize DNA oligonucleotides is the foundation for building genetic constructs for synthetic biology. The global DNA synthesis market is experiencing rapid growth, driven by demand from gene editing and synthetic biology [23]. Oligonucleotide synthesis via the phosphoramidite method remains the core technology, but innovations in enzymatic DNA synthesis and microfluidics are enabling longer, more accurate, and cheaper DNA constructs [23]. These synthesized DNA fragments are essential for creating gRNA sequences, HDR donor templates, and complex genetic circuits. The integration of AI is accelerating the design of these sequences, predicting optimal codons and secondary structure to maximize functional output [20].

Automation is the critical link that scales these processes. Strategic partnerships, like that between Integrated DNA Technologies (IDT) and Hamilton Company, are creating end-to-end, automation-friendly NGS workflows [27]. These integrated systems automate library preparation and other complex, manual steps, which drastically reduces hands-on time, minimizes human error, and enhances reproducibility. This is essential for the high-throughput validation required in rapid prototyping cycles [19] [27].

Diagram 2: Integrated rapid prototyping workflow from AI design to data analysis.

Protocol: Automated NGS Library Preparation for Editing Efficiency Analysis

This protocol describes an automated workflow for preparing NGS libraries to quantify CRISPR editing efficiency, building on automation-friendly integrations such as the IDT and Hamilton partnership [27].

Sample and Reagent Preparation

- Amplicon Generation: Design and synthesize PCR primers to amplify the genomic region surrounding the CRISPR target site. Perform a first-round PCR on purified genomic DNA from edited and control cells.

- Reagent Setup: Thaw and vortex IDT xGen NGS library preparation reagents (or equivalent). Dilute to working concentrations as required. Dispense all reagents, purified first-round PCR products, and unique dual indices (UDIs) into a designated microplate.

Automated Library Construction

- Platform Setup: Load the prepared reagent plate and a fresh PCR plate onto the Hamilton Microlab NIMBUS or STAR liquid handling platform.

- Run Script: Execute the pre-validated automation script for the NGS workflow. The system will automatically:

  - Perform enzymatic clean-up and normalization of the first-round PCR amplicons.

  - Set up the indexing PCR reaction, transferring the amplicons and adding a unique UDI pair to each sample.

  - Perform a second enzymatic clean-up to purify the final NGS libraries.

- Pooling: The automated system can combine equal volumes of each purified library into a single pool.

Sequencing and Data Analysis

- Quantify the pooled library using a fluorometric method and validate library size distribution using a fragment analyzer or bioanalyzer.

- Sequence the pool on an appropriate NGS platform to achieve sufficient coverage (e.g., >100,000x read depth per amplicon).

- Analyze the sequencing data using a CRISPR-specific analysis pipeline (e.g., CRISPResso2) to calculate the percentage of indels, base editing efficiency, or other relevant metrics.
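To show what "percentage of indels" means at the read level, the sketch below counts reads whose alignment to the reference amplicon contains a gap near the expected cut site. It is a toy calculation on hand-written alignments, not the CRISPResso2 algorithm: the function names, the window size, and the example reads are all assumptions for illustration, and a real pipeline also handles read quality filtering, alignment, and substitutions.

```python
def has_indel_near_cut(aligned_ref, aligned_read, cut_index, window=10):
    """True if the pairwise alignment has a gap within `window` reference bases of the cut.

    Both strings must have equal length, with '-' marking alignment gaps.
    """
    ref_pos = 0  # number of reference bases consumed so far
    for ref_base, read_base in zip(aligned_ref, aligned_read):
        near_cut = abs(ref_pos - cut_index) <= window
        if near_cut and (ref_base == "-" or read_base == "-"):
            return True
        if ref_base != "-":
            ref_pos += 1
    return False

def indel_percentage(alignments, cut_index, window=10):
    """Percentage of aligned reads carrying an insertion or deletion near the cut site."""
    edited = sum(has_indel_near_cut(r, q, cut_index, window) for r, q in alignments)
    return 100.0 * edited / len(alignments)

REF = "ACGTACGTACGTACGTACGT"
alignments = [
    (REF, REF),                                          # unedited read
    (REF, "ACGTACGTA--TACGTACGT"),                       # 2-bp deletion at the cut
    ("ACGTACGTAC-GTACGTACGT", "ACGTACGTACAGTACGTACGT"),  # 1-bp insertion at the cut
    (REF, "ACGTACGTACCTACGTACGT"),                       # substitution only, not an indel
]
print(indel_percentage(alignments, cut_index=10, window=3))  # 50.0
```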

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Integrated Genomics Workflows

| Item | Function | Example Applications |

|---|---|---|

| CRISPR-Cas9 Nuclease | Engineered Cas9 protein for complexing with sgRNA to form RNP for highly specific editing with reduced off-target effects. | Gene knockout, knock-in, and targeted mutation in cell lines and primary cells [24] [25]. |

| Custom sgRNA | Synthetic single-guide RNA designed for a specific genomic target; available as modified RNA for enhanced stability. | Guides Cas nuclease to the intended DNA sequence for cleavage [24] [23]. |

| DNA Oligos & Genes | Custom-synthesized oligonucleotides and clonal double-stranded DNA fragments. | gRNA cloning, PCR amplification, HDR template construction, and synthetic gene assembly [23]. |

| NGS Library Prep Kits | Automation-optimized kits for preparing sequencing-ready libraries from amplicons. | High-throughput analysis of editing efficiency and off-target assessment [27]. |

| Lipid Nanoparticles (LNPs) | Non-viral delivery vehicles for in vivo and in vitro transport of CRISPR RNPs or mRNA. | Efficient, low-immunogenicity delivery of editing components, enabling re-dosing [24] [26]. |

| Automated Liquid Handlers | Precision robotic platforms (e.g., Hamilton STAR/NIMBUS) for liquid handling. | Automates repetitive NGS and assay steps, ensuring reproducibility and scalability [27]. |

The Critical Role of Prototyping in De-risking Drug Discovery and Metabolic Engineering Projects

In the high-stakes fields of drug discovery and metabolic engineering, the traditional development pipeline is notoriously long, expensive, and prone to failure. The integration of rapid prototyping workflows from synthetic biology is fundamentally changing this paradigm by systematically de-risking projects from their earliest stages. Central to this transformation is the Design-Build-Test-Learn (DBTL) cycle, an iterative engineering framework that accelerates the development of biological systems while minimizing resource expenditure [2].

Biofoundries, which are integrated facilities combining robotic automation, computational analytics, and high-throughput screening, operationalize this cycle. They enable researchers to move swiftly from genetic designs to functional constructs, transforming biological engineering from an artisanal process into a scalable, predictable endeavor [2]. This application note details how these prototyping platforms, supported by advanced computational tools and standardized genetic parts, are being leveraged to de-risk critical phases of research, from initial genetic construct optimization to the development of complex microbial cell factories and therapeutic modalities.

Core Principles and Workflows

The DBTL Cycle in Biofoundries

The DBTL cycle provides a structured, iterative framework for biological engineering. Its power lies in the continuous loop of design, construction, experimentation, and data analysis, which rapidly converges on optimal solutions.

Figure 1. The iterative Design-Build-Test-Learn (DBTL) cycle, a core engineering framework in modern biofoundries [2].

- Design (D): This initial phase utilizes computer-aided design (CAD) software and bioinformatics tools for the in silico design of genetic sequences, biological circuits, and metabolic pathways. Open-source tools like Cameo (for metabolic engineering) and j5 (for DNA assembly design) are commonly employed. The phase also involves selecting standardized genetic parts from libraries, such as promoters, ribosomal binding sites, and coding sequences, often within a modular cloning (MoClo) framework [2].

- Build (B): In this phase, the designs are physically realized. Robotic liquid handling systems and automated workstations execute DNA synthesis, assembly, and transformation at a massive scale. For instance, an integrated workflow using AssemblyTron can automate the transition from a j5 design output to physical DNA assembly on an Opentrons liquid handling robot [2].

- Test (T): Constructs are subjected to high-throughput screening and characterization. This involves automated analytics to measure key performance indicators (KPIs) such as protein expression, metabolic flux, product titer, and growth. Methods range from fluorescence-activated cell sorting (FACS) to mass spectrometry-based metabolomics [2] [28].

- Learn (L): Data generated from the Test phase is analyzed using computational modeling and bioinformatic tools. Machine Learning (ML) algorithms are increasingly critical here, identifying non-intuitive correlations between genetic designs and performance outcomes to inform the next design iteration, thereby reducing the number of cycles needed to achieve the desired result [2].

A High-Throughput Platform for Chloroplast Engineering

The power of a specialized, automated DBTL workflow is exemplified by a recent effort to advance chloroplast synthetic biology using the microalga Chlamydomonas reinhardtii as a prototyping chassis [28].

Experimental Protocol 1: High-Throughput Characterization of Transplastomic Strains

- Objective: To systematically generate, handle, and analyze thousands of transplastomic C. reinhardtii strains in parallel to characterize genetic parts and pathways.

- Methodology:

  - Automated Strain Picking: Transformants are automatically picked and arrayed into a standardized 384-format using a screening robot.

  - Solid-Medium Cultivation: Strains are cultivated on solid medium in a 96-array format for reproducible biomass growth, a more efficient alternative to liquid culture for large numbers of strains.

  - Automated Homoplasmy Screening: Sixteen replicate colonies per construct are simultaneously screened on plates over approximately three weeks to achieve homoplasmy (where all chloroplast copies contain the transgene).

  - Liquid Transfer & Assay: A contact-free liquid handler transfers normalized biomass to multi-well plates for reporter gene assays (e.g., luminescence) and other phenotypic screens.

This automated pipeline reduced the time required for picking and restreaking by about eightfold and cut yearly maintenance spending by half, enabling the management of over 3,000 individual transplastomic strains in the cited study [28].

Application in Metabolic Engineering

Metabolic engineering has evolved through several waves, with the current wave heavily reliant on synthetic biology and prototyping to rewire cellular metabolism for the production of valuable chemicals [29]. The approach is hierarchical, tackling engineering at multiple levels of biological complexity.

Hierarchical Metabolic Engineering Strategies

A systematic, hierarchical approach allows for the rational rewiring of microbial cell factories. The strategies and their applications are summarized in the table below.

Table 1. Hierarchical metabolic engineering strategies and their application in developing microbial cell factories [29].

| Hierarchy Level | Engineering Strategy | Example Application | Key Outcome |

|---|---|---|---|

| Part Level | Enzyme engineering, promoter engineering, RBS optimization. | Improving catalytic efficiency and tuning expression levels of pathway enzymes. | Enhanced flux through a rate-limiting step; balanced expression to avoid toxic intermediate accumulation. |

| Pathway Level | Modular pathway engineering, decoupling growth from production, constructing synthetic pathways. | Production of artemisinin (antimalarial) and 1,4-butanediol (chemical intermediate). | De novo production of complex molecules not inherent to the host chassis. |

| Network Level | Cofactor engineering, transporter engineering, regulatory circuit engineering. | Engineering cofactor balance (NADPH/NAD) to support high flux through engineered pathways. | Improved overall pathway efficiency and host cell fitness. |

| Genome Level | Genome-scale modeling, CRISPR-based multiplex editing, tolerance engineering. | Gene knockout strategies predicted by models for lycopene overproduction in E. coli. | Systemic removal of metabolic bottlenecks and competitive pathways. |

| Cell Level | Consortium engineering, morphological engineering, in silico host selection. | Co-culturing multiple engineered strains to compartmentalize metabolic functions. | Division of labor to reduce the burden on a single strain and optimize overall system productivity. |

The following diagram illustrates the logical flow of applying these hierarchical strategies to a metabolic engineering project.

Figure 2. A logical workflow for applying hierarchical strategies in metabolic engineering projects [29].

Case Study: Prototyping a Chloroplast-Based Photorespiration Pathway

A practical implementation of this hierarchical and high-throughput approach is the introduction of a synthetic photorespiration pathway into the chloroplast of C. reinhardtii [28].

Experimental Protocol 2: Prototyping Metabolic Pathways in Plastids

- Objective: To install and optimize a synthetic metabolic pathway in the chloroplast genome to enhance biomass production.

- Key Reagents & Workflow:

  - Foundational Genetic Parts: Utilize a standardized library of over 300 genetic parts for plastome manipulation, including native and synthetic promoters, 5' and 3' untranslated regions (UTRs), and intercistronic expression elements (IEEs), all embedded in a MoClo framework [28].

  - Automated Assembly: Use Golden Gate cloning to assemble the genes encoding the synthetic photorespiration pathway with compatible regulatory elements.

  - Chloroplast Transformation: Introduce the assembled construct into the chloroplast genome of C. reinhardtii.

  - High-Throughput Screening: Employ the automated workflow (Protocol 1) to screen thousands of transplastomic strains for homoplasmy and desired phenotype.

  - Phenotypic Characterization: Measure biomass accumulation of engineered strains versus wild-type under controlled conditions.

- Result: The study reported a threefold increase in biomass production in strains harboring the optimized synthetic pathway, validating the success of the high-throughput prototyping approach [28].
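Golden Gate assembly within a MoClo framework generally requires that parts contain no internal recognition sites for the Type IIS enzymes used in the assembly. The sketch below checks a part sequence for such sites on both strands. It assumes BsaI (GGTCTC) and BpiI (GAAGAC), a common MoClo enzyme pair; the text does not state which enzymes the cited toolkit uses, and the example sequences are made up.

```python
# Assumed enzyme set (common in MoClo systems; not specified in the text).
TYPE_IIS_SITES = {"BsaI": "GGTCTC", "BpiI": "GAAGAC"}

_COMPLEMENT = str.maketrans("ACGT", "TGCA")

def reverse_complement(seq):
    """Reverse complement of an uppercase DNA string containing only A, C, G, T."""
    return seq.translate(_COMPLEMENT)[::-1]

def internal_sites(part_seq):
    """Return {enzyme: [0-based positions]} for recognition sites on either strand."""
    seq = part_seq.upper()
    found = {}
    for enzyme, site in TYPE_IIS_SITES.items():
        positions = []
        # Search for the site and its reverse complement to cover both strands.
        for motif in {site, reverse_complement(site)}:
            start = seq.find(motif)
            while start != -1:
                positions.append(start)
                start = seq.find(motif, start + 1)
        if positions:
            found[enzyme] = sorted(positions)
    return found

clean_part = "ATGGCTAGCAAAGGAGAA"      # made-up sequence without internal sites
dirty_part = "ATGGGTCTCAAAGTCTTCTAA"   # made-up sequence with one site per enzyme
print(internal_sites(clean_part))  # {}
print(internal_sites(dirty_part))  # {'BsaI': [3], 'BpiI': [12]}
```

Parts flagged by such a check would typically be "domesticated" (for example by silent mutation of the site) before being added to a standardized library.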

Application in Drug Discovery

The principles of rapid prototyping are equally transformative in drug discovery, where they are applied to de-risk the development of novel therapeutic modalities and streamline the entire development pipeline.

De-risking Novel Therapeutic Modalities

Industry leaders are leveraging prototyping to advance complex biological drugs:

- Genetic Medicines: Prototyping is enabling a paradigm shift from one-size-fits-all treatments to personalized genetic approaches in non-oncological diseases, such as cardiac and neurological conditions, by tailoring therapies to underlying genetic causes [30].

- Cell Therapies: While CAR-T therapies have proven successful in hematological cancers, prototyping is key to "cracking the code" for solid tumors. This involves iterative testing of construct designs and surface targets to improve both efficacy and safety [30].

- Surgical Precision: Prototyping of fluorescence-guided surgery tools allows for real-time illumination of critical structures like nerves and tumors during procedures, significantly improving surgical outcomes and patient quality of life [30].

Streamlining ADME and Pharmacokinetic Optimization

A critical area where prototyping de-risks drug development is in the optimization of a drug's Absorption, Distribution, Metabolism, and Excretion (ADME) properties. Advanced in vitro and in silico methods are used to predict human pharmacokinetics earlier and more accurately.

Table 2. Key technologies and approaches for ADME optimization in drug development [31].

| Technology | Application in ADME Prototyping | Function in De-risking |

|---|---|---|

| Complex Cell Models & Organ-on-a-Chip | In vitro ADME analysis using advanced hepatic (liver) models such as spheroids and flow systems. | Provides more physiologically relevant data on metabolism and potential hepatotoxicity earlier in development. |

| Accelerator Mass Spectrometry (AMS) | Ultra-sensitive analysis for human ADME studies and drug-drug interaction (DDI) studies, even at microdoses. | Enables safe clinical microdosing studies to obtain human PK data prior to large, expensive trials. |

| PBPK Modelling & Simulation | (Physiologically Based Pharmacokinetic) computer models simulating drug disposition in the body. | Predicts human pharmacokinetics, dose, formulation impact, and DDI potential, guiding clinical study design. |

| ICH M12 Guideline | Harmonized international guideline for the design of drug-drug interaction studies. | Standardizes DDI assessment, reducing regulatory risk and the need for costly study redesign. |

| Miniaturization & Microsampling | Reducing scale of in vivo PK studies (e.g., smaller volumes, automated assays). | Aligns with 3Rs (Replacement, Reduction, Refinement), lowers compound requirements, and increases data quality. |

The Impact of AI and Machine Learning

Artificial intelligence (AI) and machine learning are supercharging prototyping workflows across drug discovery. AI is poised to transform not only early-stage discovery but also clinical trials and regulatory documentation [30] [32].

- Target Identification & Validation: AI analyzes complex biological datasets to identify and validate novel drug targets.

- Molecule Design: Through molecular generation techniques, AI facilitates the creation of novel drug candidates with optimized properties, predicting their activities and streamlining virtual screening [32].

- Clinical Trial Efficiency: AI enhances clinical trials by predicting outcomes, optimizing trial design, and identifying suitable patient populations, thereby reducing the high failure rate and cost associated with this phase [30] [32].

The Scientist's Toolkit: Essential Research Reagent Solutions

The successful implementation of the workflows described above relies on a suite of key reagents, software, and hardware.

Table 3. Key research reagent solutions and tools for synthetic biology prototyping.

| Item | Function / Application |
|---|---|
| Modular Cloning (MoClo) Parts | Standardized, interchangeable genetic elements (promoters, UTRs, coding sequences, terminators) for rapid assembly of genetic constructs [28]. |
| Phytobrick-Compatible Vectors | Standardized acceptor vectors for the assembly of multigene constructs, ensuring compatibility and transferability between different biological systems [28]. |
| Automated Liquid Handling Robots | Robotic systems (e.g., Opentrons) that automate pipetting, plate preparation, and other repetitive tasks, enabling high-throughput Build and Test phases [2] [28]. |
| Open-Source DNA Design Software | Tools like j5 for DNA assembly design, Cello for genetic circuit design, and Cameo for metabolic modeling, which facilitate the in silico Design phase [2]. |
| Specialized Model Organisms | Engineerable chassis like Chlamydomonas reinhardtii for chloroplast prototyping [28] and Yarrowia lipolytica for metabolic engineering of lipids and chemicals [29]. |
| Advanced Reporter Genes | Fluorescent (e.g., GFP variants) and luminescent (e.g., luciferase) proteins for high-throughput screening and characterization of genetic constructs [28]. |
| Machine Learning Platforms | Integrated AI/ML software for analyzing complex DBTL cycle data, generating predictive models, and proposing optimized designs for subsequent iterations [2] [32]. |

The integration of rapid prototyping workflows, centered on the DBTL cycle and enabled by biofoundries, is fundamentally de-risking drug discovery and metabolic engineering. By facilitating the iterative testing of thousands of genetic designs in parallel, these approaches compress development timelines, reduce costs, and systematically replace uncertainty with data-driven decisions. The continued evolution of this paradigm—through the expansion of genetic toolkits, enhanced automation, and the deepening integration of artificial intelligence—promises to further accelerate the delivery of next-generation therapeutics and sustainable bio-based products.

Building and Implementing Modern Prototyping Workflows

Combinatorial optimization represents a core strategy in advanced synthetic biology for navigating the immense design space of biological systems. Unlike sequential optimization methods, which test one variable at a time, combinatorial approaches enable multivariate optimization by simultaneously testing numerous genetic variations. This methodology is particularly valuable because biological systems often exhibit nonlinear behaviors and complex interactions where optimal performance emerges from specific combinations of components that are difficult to predict theoretically [33]. The fundamental challenge in most metabolic engineering and genetic circuit projects centers on identifying the optimal expression levels and combinations of multiple genes to maximize desired outputs [33]. Combinatorial optimization addresses this by allowing automatic optimization without requiring prior knowledge of ideal combinations, instead generating diversity and employing high-throughput screening to identify high-performing variants [34] [33].
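The difference in scale between combinatorial and sequential exploration can be made concrete with a small enumeration. The sketch below is illustrative only: the part names, the number of genes, and the baseline-plus-one-change definition of a sequential screen are assumptions, not values taken from the cited studies.

```python
from itertools import product

# Hypothetical part names, chosen only for illustration.
GENES = ["geneA", "geneB", "geneC"]
PROMOTERS = ["P_weak", "P_medium", "P_strong"]
RBS_VARIANTS = ["RBS_low", "RBS_high"]


def enumerate_designs(genes, promoters, rbs_variants):
    """Return every construct as a tuple of (gene, promoter, RBS) cassettes."""
    cassette_options = [
        [(gene, promoter, rbs) for promoter, rbs in product(promoters, rbs_variants)]
        for gene in genes
    ]
    return list(product(*cassette_options))


def one_factor_at_a_time_count(n_genes, options_per_gene):
    """Constructs tested when one cassette is varied at a time from a baseline."""
    return 1 + n_genes * (options_per_gene - 1)


designs = enumerate_designs(GENES, PROMOTERS, RBS_VARIANTS)
options_per_gene = len(PROMOTERS) * len(RBS_VARIANTS)

print("Full combinatorial library:", len(designs))
print("One-factor-at-a-time screen:",
      one_factor_at_a_time_count(len(GENES), options_per_gene))
```

With three genes and six cassette options per gene, the full design space holds 6^3 = 216 constructs, while a one-factor-at-a-time screen from a single baseline visits only 16 of them and cannot observe interactions between cassettes.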

Key Strategies and Methodologies

Library Generation and Diversity Creation

The foundation of combinatorial optimization lies in creating comprehensive genetic diversity. Advanced synthetic biology tools enable the construction of complex libraries through several methods:

- Combinatorial Cloning Methods: These approaches generate multigene constructs from standardized genetic elements (promoters, coding sequences, terminators) using one-pot assembly reactions. Terminal homology between adjacent fragments enables diverse construct generation in single cloning reactions [33].

- CRISPR/Cas-based Editing: Implementing CRISPR/Cas systems allows multi-locus integration of gene modules into microbial genomes, facilitating rapid library generation across multiple genomic locations [33].

- VEGAS and COMPASS Systems: Specific methodologies like VEGAS (Versatile Genetic Assembly System) enable pathway construction in plasmids, while COMPASS (COMbinatorial Pathway ASSembly) facilitates single- or multi-locus integration into microbial host genomes to generate combinatorial libraries [33].

Table 1: Combinatorial Library Generation Techniques

| Method | Key Features | Applications |
|---|---|---|
| Combinatorial Cloning | One-pot assembly; terminal homology between fragments | Multigene construct generation |
| CRISPR/Cas Editing | Multi-locus integration; precise genome modifications | Library generation across genomic locations |
| VEGAS/COMPASS | Pathway construction in plasmids; chromosomal integration | Complex pathway optimization |

Model-Guided Optimization

Model-guided approaches combine computational modeling with experimental validation to optimize complex genetic systems. As demonstrated in the optimization of a proportional miRNA biosensor, predictive modeling can initiate a targeted search in the phase space of sensor genetic composition [35]. This strategy involves:

- Generating diverse sensor circuits using different genetic building blocks

- High-throughput screening to identify optimal parameter combinations

- Mechanistic interrogation of selected sensors for model validation

- Iterative refinement using validated models to guide further experimentation [35]

This approach has proven successful for optimizing dynamic range in gene circuits and enables biosensor reprogramming and integration into larger networks [35].

Machine Learning and the LDBT Paradigm

Recent advances are transforming the traditional Design-Build-Test-Learn (DBTL) cycle into a Learn-Design-Build-Test (LDBT) framework, where machine learning precedes design [4]. This paradigm shift leverages:

- Protein Language Models: Tools like ESM and ProGen trained on evolutionary relationships can predict beneficial mutations and infer protein function, enabling zero-shot prediction of diverse sequences [4].

- Structure-Based Design: Approaches like MutCompute and ProteinMPNN use deep neural networks trained on protein structures to predict stabilizing mutations and design sequences for specific backbones [4].

- Functional Prediction Models: Specialized tools like Prethermut, Stability Oracle, and DeepSol predict thermostability changes and solubility directly from sequence information [4].

When combined with rapid cell-free testing platforms, these machine learning approaches enable megascale data generation and model training, potentially reducing or eliminating iterative DBTL cycles [4].

Detailed Experimental Protocols

Protocol: Combinatorial Library Construction for Pathway Optimization

This protocol outlines the generation of combinatorial libraries for metabolic pathway optimization using advanced DNA assembly and integration techniques [33].

Materials and Reagents

- Library of standardized genetic elements (promoters, RBS, CDS, terminators)

- Type IIS restriction enzymes (BsaI, BsmBI) or homologous recombination systems

- CRISPR/Cas9 components for genomic integration (Cas9, guide RNAs)

- Microbial host strains (E. coli, S. cerevisiae)

- Selection markers (antibiotic resistance, auxotrophic markers)

- DNA assembly master mix

- Transformation equipment and reagents

Procedure

Modular DNA Part Preparation

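- Amplify individual genetic elements (regulators, coding sequences, terminators) with ends suited to the chosen assembly method: terminal homology regions (30-40 bp overlaps) for Gibson Assembly, or flanking Type IIS recognition sites (e.g., BsaI) for Golden Gate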

- Purify PCR products using column-based purification systems

- Quantify DNA concentration using fluorometric methods

Combinatorial Assembly Reaction

- Set up Golden Gate or Gibson Assembly reactions containing equimolar ratios of each part variant

- For Golden Gate: Combine 50-100 ng of each part with BsaI-HFv2 enzyme and T4 DNA ligase in 1× T4 ligase buffer

- Incubate assembly reaction: 25-30 cycles of (37°C for 2-3 minutes + 16-20°C for 3-5 minutes), followed by 50°C for 5 minutes and 80°C for 10 minutes
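Because parts differ in length, equal masses are not equal moles, so the mass of each part has to be scaled by its length to reach an equimolar mix. The following sketch shows that conversion. It assumes the common approximation of 650 g/mol per base pair of double-stranded DNA; the part lengths and the 50 fmol target are hypothetical values for illustration, not recommendations from the protocol.

```python
AVG_MASS_PER_BP = 650.0  # g/mol per bp of dsDNA (widely used approximation)


def ng_to_fmol(mass_ng, length_bp):
    """Convert a mass of dsDNA (ng) into an amount in femtomoles."""
    return mass_ng * 1e6 / (length_bp * AVG_MASS_PER_BP)


def ng_for_fmol(target_fmol, length_bp):
    """Mass of dsDNA (ng) needed to supply a target amount in femtomoles."""
    return target_fmol * length_bp * AVG_MASS_PER_BP / 1e6


# Hypothetical part lengths in base pairs.
part_lengths_bp = {
    "promoter": 200,
    "coding_sequence": 1500,
    "terminator": 300,
    "backbone": 3000,
}
TARGET_FMOL = 50.0  # assumed target amount per part

for name, length_bp in part_lengths_bp.items():
    mass_ng = ng_for_fmol(TARGET_FMOL, length_bp)
    print(f"{name}: {mass_ng:.1f} ng for {TARGET_FMOL:.0f} fmol")
```

Under these assumptions a 200 bp promoter needs far less mass than a 3000 bp backbone to reach the same molar amount, which is why a fixed mass per part only approximates an equimolar reaction when the parts are of similar length.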

Library Amplification and Validation

- Transform assembly reaction into competent E. coli cells via electroporation

- Plate on selective media and incubate overnight at 37°C

- Pool colonies and extract library plasmid DNA using maxiprep kits

- Assess library diversity by sequencing 20-50 random clones
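When interpreting the sequenced clones, it helps to know what an unbiased library would look like. The sketch below uses a simple sampling-with-replacement model in which every variant is equally likely, which is an idealizing assumption since real assemblies are often biased. The library size of 216 and the 95% threshold are hypothetical.

```python
import math


def coverage_probability(library_size, n_clones):
    """Probability that one given variant appears among n_clones picked clones."""
    return 1.0 - (1.0 - 1.0 / library_size) ** n_clones


def clones_for_coverage(library_size, probability):
    """Smallest number of clones giving a variant at least this chance of appearing."""
    if library_size < 2:
        return 1
    return math.ceil(math.log(1.0 - probability) / math.log(1.0 - 1.0 / library_size))


def expected_distinct(library_size, n_clones):
    """Expected number of different variants seen among n_clones picked clones."""
    return library_size * coverage_probability(library_size, n_clones)


LIBRARY_SIZE = 216  # hypothetical theoretical diversity

n_needed = clones_for_coverage(LIBRARY_SIZE, 0.95)
print("Clones needed for 95% per-variant coverage:", n_needed)
print("Expected distinct variants in 50 sequenced clones:",
      round(expected_distinct(LIBRARY_SIZE, 50), 1))
```

If markedly fewer distinct variants are observed among the sequenced clones than this uniform model predicts, that suggests assembly or transformation bias worth investigating before screening.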

Host Strain Engineering

- Design guide RNAs targeting specific genomic loci for pathway integration

- Co-transform library DNA with CRISPR/Cas9 components into host strain

- Select for successful integrants on appropriate selective media

- Verify integration by colony PCR across integration junctions

Library Storage and Management

- Array individual clones in 96-well or 384-well plates with cryoprotectant

- Store at -80°C for long-term preservation

- Create pooled library stocks for bulk screening approaches

Protocol: Model-Guided Circuit Optimization

This protocol details the model-guided optimization of genetic circuits, incorporating computational design and experimental validation [35].

Materials and Reagents

- Predictive modeling software (MATLAB, Python with appropriate libraries)

- DNA synthesis capability or modular genetic parts

- Fluorescent reporter genes (GFP, RFP, YFP)

- Flow cytometer or microplate reader for high-throughput characterization

- Cell-free protein expression system or appropriate host cells

- Quantitative PCR reagents for mechanistic interrogation

Procedure

Computational Model Construction

- Define circuit topology and key parameters (transcription rates, degradation rates, binding affinities)

- Implement ordinary differential equations describing circuit dynamics

- Perform parameter sensitivity analysis to identify critical components

- Define quantitative performance criteria (dynamic range, response time, leakiness)
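As a minimal illustration of the model-construction step, the sketch below implements a two-variable ODE model of constitutive expression (mRNA and protein) and integrates it with a forward Euler scheme. The topology, parameter names, and parameter values are all assumptions made for illustration; a real circuit model would add regulatory terms, and a dedicated ODE solver would normally replace the hand-written integrator.

```python
def simulate_expression(alpha, beta, delta_m, delta_p, t_end, dt=0.01):
    """Integrate dm/dt = alpha - delta_m*m and dp/dt = beta*m - delta_p*p.

    alpha: transcription rate, beta: translation rate per mRNA,
    delta_m / delta_p: mRNA and protein degradation rates (all per minute).
    Returns a list of (time, mRNA, protein) tuples.
    """
    m, p = 0.0, 0.0
    trajectory = [(0.0, m, p)]
    n_steps = int(round(t_end / dt))
    for step in range(1, n_steps + 1):
        dm = alpha - delta_m * m
        dp = beta * m - delta_p * p
        m += dm * dt
        p += dp * dt
        trajectory.append((step * dt, m, p))
    return trajectory


# Hypothetical parameter values (per-minute units).
params = {"alpha": 0.5, "beta": 2.0, "delta_m": 0.2, "delta_p": 0.02}

trajectory = simulate_expression(t_end=600.0, **params)
t_final, m_final, p_final = trajectory[-1]

# Analytical steady state, used to sanity-check the simulation.
m_ss = params["alpha"] / params["delta_m"]
p_ss = params["beta"] * m_ss / params["delta_p"]
print(f"simulated protein at t={t_final:.0f} min: {p_final:.1f} (steady state {p_ss:.1f})")
```

Comparing the simulated endpoint against the analytical steady state is a cheap check that the equations were implemented as intended before the model is used for sensitivity analysis.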

Design of Experiment

- Identify key genetic components for diversification (promoter strengths, RBS variants, protein degradation tags)

- Generate in silico library covering parameter space using Latin hypercube sampling or similar approaches

- Filter designs based on model predictions to focus on most promising regions of parameter space
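Latin hypercube sampling divides each parameter range into equal strata and places exactly one sample in each stratum per dimension, which spreads a small number of designs evenly across the space. The sketch below is a plain-Python version of that idea. The choice to sample in log10 space, the parameter bounds, and the sample count are assumptions for illustration.

```python
import random


def latin_hypercube(n_samples, bounds, seed=None):
    """Draw n_samples points with one point per stratum in every dimension.

    bounds: list of (low, high) tuples, one per parameter.
    Returns a list of tuples, each holding one value per parameter.
    """
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        # One uniform draw inside each of the n_samples equal-width strata.
        unit_points = [(i + rng.random()) / n_samples for i in range(n_samples)]
        # Shuffle so strata are paired randomly across dimensions.
        rng.shuffle(unit_points)
        columns.append([low + u * (high - low) for u in unit_points])
    return [tuple(column[i] for column in columns) for i in range(n_samples)]


# Hypothetical bounds, expressed as log10 of the parameter value.
LOG10_BOUNDS = [
    (-4.0, -1.0),  # promoter strength
    (3.0, 5.0),    # RBS strength
]

samples = latin_hypercube(8, LOG10_BOUNDS, seed=0)
for log_promoter, log_rbs in samples:
    print(f"promoter = {10 ** log_promoter:.2e}, RBS = {10 ** log_rbs:.0f}")
```

Sampling on a log scale is a design choice suited to parameters that span several orders of magnitude; for narrow ranges, linear bounds can be passed instead.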

Experimental Implementation

- Synthesize or assemble selected circuit variants using high-throughput DNA assembly methods

- Transform circuits into host cells or express in cell-free systems

- Characterize circuit performance using fluorescence measurements across relevant input conditions

- Collect data for at least 3 biological replicates per circuit variant
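A simple way to turn replicate fluorescence readings into a performance metric is to compute the fold change between induced and uninduced states. The sketch below does this while enforcing the three-replicate minimum. The readings are hypothetical, and background or autofluorescence subtraction is deliberately left out.

```python
from statistics import mean, stdev

MIN_REPLICATES = 3


def summarize(values):
    """Return (mean, sample standard deviation) for a set of replicates."""
    if len(values) < MIN_REPLICATES:
        raise ValueError(f"need at least {MIN_REPLICATES} biological replicates")
    return mean(values), stdev(values)


def dynamic_range(off_values, on_values):
    """Fold change between the mean ON and mean OFF signal."""
    off_mean, _ = summarize(off_values)
    on_mean, _ = summarize(on_values)
    return on_mean / off_mean


# Hypothetical fluorescence readings (arbitrary units) for one circuit variant.
off_state = [100.0, 110.0, 90.0]
on_state = [1000.0, 1100.0, 900.0]

off_mean, off_sd = summarize(off_state)
on_mean, on_sd = summarize(on_state)
print(f"OFF: {off_mean:.0f} +/- {off_sd:.0f} AU")
print(f"ON:  {on_mean:.0f} +/- {on_sd:.0f} AU")
print(f"Dynamic range: {dynamic_range(off_state, on_state):.1f}-fold")
```

The mean OFF signal doubles as a leakiness estimate, so the same summary covers two of the performance criteria defined during model construction.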

Model Validation and Refinement

- Compare experimental results with model predictions

- Refine model parameters using Bayesian inference or similar approaches

- Identify discrepancies and potential missing biological mechanisms

- Select top-performing circuits for detailed mechanistic interrogation using qPCR and other molecular analyses
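Parameter refinement by Bayesian inference can be illustrated with a one-parameter grid approximation. The sketch below estimates a protein degradation rate from steady-state measurements, assuming a known synthesis rate, Gaussian measurement noise, and a flat prior over the grid. The model, the noise level, and the data are all hypothetical; practical workflows typically use multi-parameter samplers rather than a grid.

```python
import math

K_SYN = 5.0  # assumed known protein synthesis rate (molecules/min)


def predict_steady_state(delta_p):
    """Steady-state protein level for degradation rate delta_p (per minute)."""
    return K_SYN / delta_p


def grid_posterior(observations, grid, sigma):
    """Normalized posterior over grid values, with a flat prior and Gaussian noise."""
    log_post = [
        sum(-0.5 * ((y - predict_steady_state(theta)) / sigma) ** 2
            for y in observations)
        for theta in grid
    ]
    # Subtract the maximum before exponentiating for numerical stability.
    peak = max(log_post)
    weights = [math.exp(lp - peak) for lp in log_post]
    total = sum(weights)
    return [w / total for w in weights]


# Hypothetical steady-state measurements (molecules/cell) and noise level.
observations = [240.0, 255.0, 250.0]
SIGMA = 10.0

grid = [0.010 + 0.0005 * i for i in range(41)]  # candidate rates, 0.010 to 0.030
posterior = grid_posterior(observations, grid, SIGMA)

map_index = max(range(len(grid)), key=lambda i: posterior[i])
map_estimate = grid[map_index]
posterior_mean = sum(theta * w for theta, w in zip(grid, posterior))
print(f"MAP estimate: {map_estimate:.4f} per min")
print(f"Posterior mean: {posterior_mean:.4f} per min")
```

The width of the resulting posterior indicates how well the data constrain the parameter, which helps decide whether further measurements are needed before designing the next library.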

Iterative Optimization

- Use validated model to design second-generation library with refined parameter sampling

- Focus diversity on components identified as most critical for performance

- Repeat experimental characterization and model refinement until performance criteria are met

Table 2: Key Parameters for Genetic Circuit Optimization

| Parameter | Typical Range | Optimization Strategy | Measurement Method |
|---|---|---|---|
| Promoter Strength | 10^-4 to 10^-1 transcripts/sec | Library of natural/synthetic promoters | Fluorescent reporter assay |
| RBS Strength | 1000-100,000 AU | RBS library with varying sequence | Flow cytometry, western blot |
| Protein Degradation Rate | Half-life 10 min to 10 hours | Degradation tags (ssrA, LVA, etc.) | Time-course after inhibition |
| Transcript Stability | Half-life 1-60 minutes | 5' and 3' UTR engineering | RNA sequencing time course |
| Transcription Factor Expression | 10-10,000 molecules/cell | Tunable promoters, RBS variants | Quantitative western blot |
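The table reports stability as half-lives, whereas ODE models use first-order rate constants. For first-order decay the two are related by k = ln(2) / t_half. The short sketch below applies that conversion to the range endpoints from the table; the function names are illustrative.

```python
import math


def rate_from_half_life(half_life_min):
    """First-order degradation rate constant (per minute) from a half-life."""
    return math.log(2) / half_life_min


def half_life_from_rate(rate_per_min):
    """Half-life (minutes) from a first-order degradation rate constant."""
    return math.log(2) / rate_per_min


# Range endpoints taken from the table, expressed in minutes.
half_lives_min = {
    "protein, fast (10 min)": 10.0,
    "protein, slow (10 h)": 600.0,
    "transcript, fast (1 min)": 1.0,
    "transcript, slow (60 min)": 60.0,
}

for label, t_half in half_lives_min.items():
    print(f"{label}: k = {rate_from_half_life(t_half):.4f} per min")
```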

Analytical and Visualization Methods

Data Analysis and Visualization

Comprehensive data analysis is crucial for interpreting combinatorial optimization results. The SuperPlotsOfData web app provides accessible tools for transparent data visualization and statistical analysis [9].