A Practical Guide to Calibrating Simulation Parameters from Experimental Data for Biomedical Research

Abstract

This article provides a comprehensive framework for researchers and drug development professionals on calibrating simulation parameters from experimental data. It covers foundational principles, explores established and emerging methodological approaches, addresses common troubleshooting and optimization challenges, and outlines rigorous validation techniques. By synthesizing current best practices from fields including cancer modeling and clinical trial simulation, this guide aims to enhance the reliability, efficiency, and regulatory acceptance of simulation-based research in biomedical applications.

The Critical Role of Parameter Calibration in Scientific Simulation

In computational sciences, calibration is the essential process of adjusting a model's input parameters so that its outputs align with observed, experimental data [1]. This process transforms a theoretical construct into a relevant tool capable of providing quantitative insights and predictions. For researchers and drug development professionals, effective calibration is a critical step in developing useful models, from simulations of complex biological systems that track disease progression to quantitative structure-activity relationship (QSAR) models that predict drug efficacy [1] [2]. Without proper calibration, even the most sophisticated models risk producing unreliable results that can misdirect valuable research resources.

This article details the practical application of calibration protocols, focusing on methodologies that address the challenges inherent in calibrating complex models with large parameter spaces and stochastic outputs. The process differs from traditional parameter estimation; instead of finding a single optimal parameter set, calibration typically identifies a robust parameter space—a continuous region where model simulations recapitulate the broad range of outcomes captured by experimental data [1] [3].

Core Calibration Methodologies

Calibration methods can be broadly categorized by their approach to handling uncertainty and their computational strategies. The table below compares several key methodologies applicable to computational modeling in drug discovery.

Table 1: Key Calibration Methodologies for Scientific Models

| Method | Core Principle | Best Application Context | Key Output |
|---|---|---|---|
| CaliPro (Calibration Protocol) [1] [3] | Iterative parameter density estimation using stratified sampling and user-defined pass/fail criteria. | Complex models where likelihoods are unobtainable and the goal is to find parameter ranges that capture a full range of experimental outcomes. | Robust, continuous regions of parameter space. |
| Approximate Bayesian Computation (ABC) [1] | A Bayesian approach that accepts parameter sets producing simulations within a specified distance of the observed data. | Models where prior parameter distributions can be estimated and summary statistics effectively compare simulation to data. | Weighted posterior parameter distributions. |
| Platt Scaling [2] | A post-hoc calibration method that fits a logistic regression model to the output scores of a classifier. | Correcting overconfident or underconfident probabilistic predictions from machine learning models, like neural networks. | Calibrated, reliable probability estimates. |
| Bayesian Neural Networks [2] | Treats model parameters as random variables, using approximation methods to estimate posterior distributions. | Providing reliable uncertainty estimates for neural network predictions, crucial for risk assessment in drug discovery. | Predictive distributions that quantify uncertainty. |
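To make the ABC row of Table 1 concrete, here is a minimal sketch of the simplest ABC variant, rejection sampling, using only the Python standard library. The exponential waiting-time model, the uniform prior, the sample mean as summary statistic, and the tolerance are all illustrative assumptions rather than anything taken from [1]. Plain rejection ABC also returns unweighted accepted draws; the weighted posteriors mentioned in the table come from more elaborate schemes that are not shown here.

```python
import random
import statistics

def simulate(rate, n_obs, rng):
    """Hypothetical stochastic model: n_obs exponential waiting times."""
    return [rng.expovariate(rate) for _ in range(n_obs)]

def abc_rejection(observed, n_draws, tolerance, prior_range, seed=0):
    """Keep prior draws whose simulated summary statistic (the mean)
    lies within `tolerance` of the observed summary statistic."""
    rng = random.Random(seed)
    obs_summary = statistics.mean(observed)
    accepted = []
    for _ in range(n_draws):
        rate = rng.uniform(*prior_range)              # draw from a uniform prior
        sim_summary = statistics.mean(simulate(rate, len(observed), rng))
        if abs(sim_summary - obs_summary) <= tolerance:
            accepted.append(rate)                     # approximate posterior sample
    return accepted

# Synthetic "experiment" generated with a known rate of 2.0 (assumed for the demo).
observed = simulate(2.0, 200, random.Random(42))
posterior = abc_rejection(observed, n_draws=5000, tolerance=0.02,
                          prior_range=(0.1, 5.0))
print(len(posterior), statistics.mean(posterior))
```

Tightening `tolerance` moves the accepted draws closer to the true posterior at the cost of a lower acceptance rate, which is the central trade-off when tuning this kind of sampler.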

The CaliPro Protocol: A Detailed Workflow

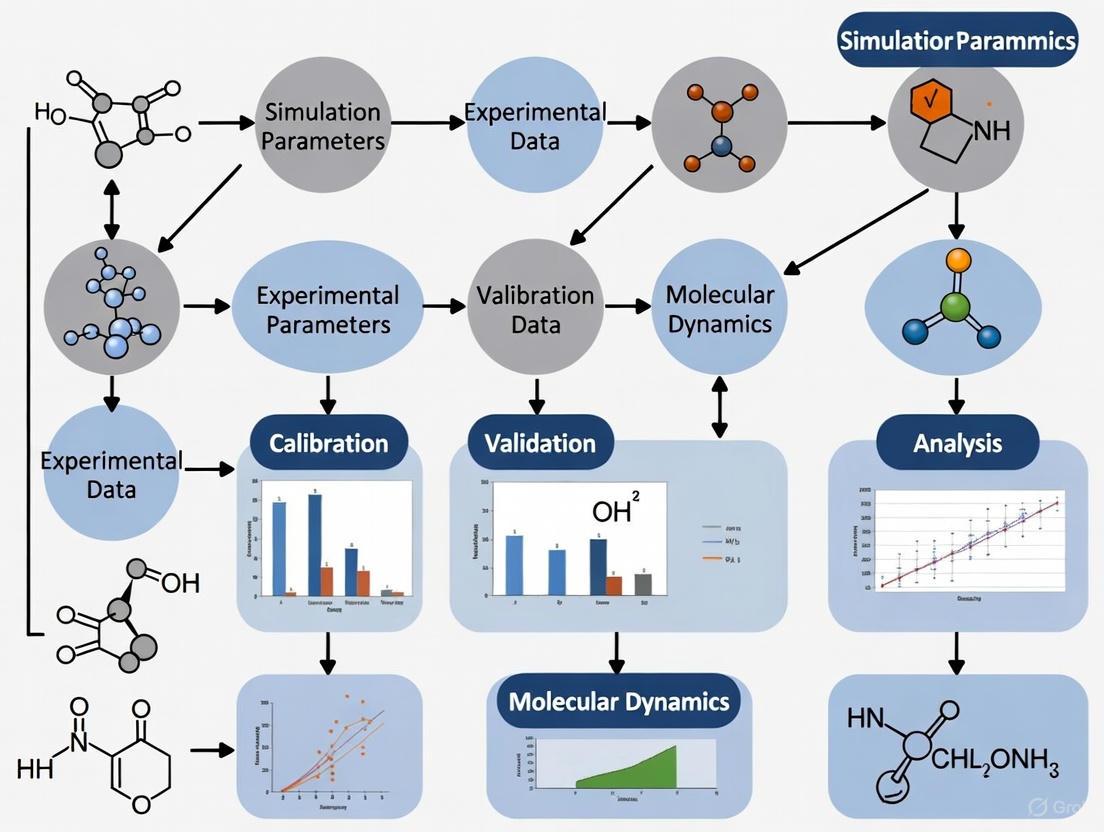

CaliPro is designed for complex models, such as those involving hybrid multi-scale methods (e.g., ODEs, PDEs, and agent-based models) where standard optimization techniques fall short [1] [3]. The following diagram illustrates the iterative workflow of the CaliPro protocol.

The protocol consists of the following detailed steps:

- Define Initial Parameter Ranges: The modeler assigns the widest biologically feasible ranges for all parameters, informed by literature and experimental data. Well-constrained parameters may have narrow ranges, while others representing phenomenological processes may have very wide initial bounds [3].

- Stratified Sampling: The high-dimensional parameter space is sampled using efficient algorithms like Latin Hypercube Sampling (LHS) or Sobol sequences. These schemes spread samples evenly across the initial parameter space instead of relying on purely random draws, which is crucial given the combinatorial complexity of a high-dimensional hypercube [1] [3].

- Model Execution: The model is run for each sampled parameter combination to generate a set of simulation outcomes. In parallel computing environments, a batch sequential design can be employed to evaluate multiple parameter sets simultaneously, enhancing efficiency [4].

- Model Evaluation and Pass Set Definition: This is a crucial step. Each simulation is compared to the experimental data. Instead of minimizing a single objective function, the modeler defines a "pass set" based on how the model should successfully recapitulate the data. For example, a simulation might "pass" if all its output trajectories at various timepoints fall within the bounds of the experimental data [3].

- Iterative Refinement: The density of the passing parameter sets is estimated. Parameter ranges are then refined to focus on regions of the parameter space with a high density of successful simulations, effectively "zooming in" on the robust parameter space. The sampling, execution, evaluation, and refinement steps are repeated until a continuous, robust parameter space is identified [3].
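The steps above can be sketched in a few lines of standard-library Python. This is a toy illustration in the spirit of CaliPro, not the published implementation: the exponential-decay `model`, the ±40% envelope, the 90% pass-rate target, and especially the `trimmed_span` refinement (which simply keeps the central span of passing values) are assumptions standing in for the density-based range adjustment described in [3].

```python
import math
import random

def latin_hypercube(ranges, n, rng):
    """One draw per equal-width stratum in every dimension, shuffled per dimension."""
    columns = []
    for low, high in ranges:
        width = (high - low) / n
        col = [low + (i + rng.random()) * width for i in range(n)]
        rng.shuffle(col)                      # decouple strata across dimensions
        columns.append(col)
    return list(zip(*columns))                # n parameter tuples

def model(params, times):
    """Hypothetical stand-in model: exponential decay a * exp(-b * t)."""
    a, b = params
    return [a * math.exp(-b * t) for t in times]

def passes(trajectory, envelope):
    """Pass criterion: every timepoint lies inside the experimental envelope."""
    return all(lo <= y <= hi for y, (lo, hi) in zip(trajectory, envelope))

def trimmed_span(values, trim=0.1):
    """Central span of the passing values (simplified refinement rule)."""
    s = sorted(values)
    k = int(trim * (len(s) - 1))
    return s[k], s[len(s) - 1 - k]

def calibrate(ranges, times, envelope, n=400, max_iter=10, target=0.9, seed=1):
    """Returns the final ranges and the pass fraction of the last sampled batch."""
    rng = random.Random(seed)
    frac = 0.0
    for _ in range(max_iter):
        samples = latin_hypercube(ranges, n, rng)
        pass_set = [p for p in samples if passes(model(p, times), envelope)]
        frac = len(pass_set) / n
        if frac >= target or not pass_set:    # done, or nothing to refine from
            break
        ranges = [trimmed_span([p[d] for p in pass_set])
                  for d in range(len(ranges))]
    return ranges, frac

times = [0.0, 1.0, 2.0, 4.0]
reference = model((10.0, 0.5), times)          # synthetic "experimental" trajectory
envelope = [(0.6 * y, 1.4 * y) for y in reference]
final_ranges, pass_fraction = calibrate([(1.0, 30.0), (0.05, 2.0)], times, envelope)
print(final_ranges, pass_fraction)
```

Because the two toy parameters compensate for each other, the passing region is a slanted band instead of a box, so axis-aligned ranges must shrink well inside it before most samples pass. Real models show the same effect, which is one reason the choice of refinement rule matters.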

Calibration in Practice: A Drug Discovery Case Study

In drug discovery, machine learning models that predict drug-target interactions are valuable but often poorly calibrated, meaning their confidence scores do not reflect the true probability of a prediction being correct [2]. An overconfident model can lead to costly pursuit of false leads.

A 2025 calibration study investigated methods to improve the reliability of uncertainty estimates for neural network-based bioactivity models [2]. The study compared hyperparameter tuning strategies and uncertainty quantification methods, including a proposed method called HBLL (HMC Bayesian Last Layer).

Research Reagent Solutions for Model Calibration

The following table details key computational and data "reagents" essential for such a calibration study.

Table 2: Essential Research Reagents for a Drug-Target Interaction Calibration Study

| Reagent / Resource | Function in the Calibration Process |
|---|---|
| Bioactivity Datasets (e.g., ChEMBL) | Provides the experimental "ground truth" data (e.g., Ki, IC50) against which the model's predictions are calibrated. |
| Neural Network Architecture (e.g., Multi-Layer Perceptron) | Serves as the base model for making initial, uncalibrated predictions of drug-target interactions. |
| Hamiltonian Monte Carlo (HMC) Sampler | A core component of the HBLL method; used to obtain high-quality samples from the posterior distribution of the last layer's weights, improving uncertainty estimation [2]. |
| Platt Scaling Calibrator | A post-hoc calibration method that adjusts the model's output probabilities using a logistic regression model fitted on a separate calibration dataset [2]. |
| Calibration Error Metric (e.g., ECE) | Quantifies the difference between the model's confidence and its actual accuracy, serving as a key performance indicator for calibration. |

Workflow for Uncertainty Estimation and Calibration

The process for developing a well-calibrated predictive model in this context involves integrating training with specific uncertainty quantification and post-hoc calibration techniques, as illustrated below.

This workflow highlights two key stages for achieving reliable models:

- Train-time Uncertainty Quantification: Methods like MC Dropout, Deep Ensembles, or the specialized HBLL method are applied during or after training to capture epistemic (model) uncertainty. These methods treat model parameters as distributions rather than fixed values [2].

- Post-hoc Calibration: Even with uncertainty quantification, a model's probabilities may still be misaligned. Techniques like Platt Scaling are applied as a final step to correct overconfidence or underconfidence, ensuring that a predicted probability of 70% truly corresponds to a 70% likelihood of the event occurring [2].
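As a rough illustration of the post-hoc stage, the sketch below fits Platt scaling, p = sigmoid(a * score + b), by plain gradient descent on the log loss. It is a minimal standard-library version under stated assumptions: the synthetic "overconfident" scores (true logits multiplied by 3), the learning rate, and the epoch count are invented for the demo, and the data are treated as a held-out calibration set. It is not the procedure used in [2].

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def log_loss(probs, labels, eps=1e-12):
    """Mean negative log-likelihood, with clipping to avoid log(0)."""
    total = 0.0
    for p, y in zip(probs, labels):
        p = min(max(p, eps), 1.0 - eps)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(probs)

def fit_platt(scores, labels, lr=0.1, epochs=500):
    """Fit p = sigmoid(a * score + b) by gradient descent on the mean log loss."""
    a, b = 1.0, 0.0                      # start from the uncalibrated mapping
    n = len(scores)
    for _ in range(epochs):
        grad_a = grad_b = 0.0
        for s, y in zip(scores, labels):
            err = sigmoid(a * s + b) - y  # derivative of the loss w.r.t. the logit
            grad_a += err * s
            grad_b += err
        a -= lr * grad_a / n
        b -= lr * grad_b / n
    return a, b

# Synthetic calibration set: labels follow the true logits, but the "model"
# reports logits that are three times too large (overconfident).
rng = random.Random(0)
true_logits = [rng.gauss(0.0, 1.0) for _ in range(1000)]
labels = [1 if rng.random() < sigmoid(t) else 0 for t in true_logits]
scores = [3.0 * t for t in true_logits]

a, b = fit_platt(scores, labels)
raw_loss = log_loss([sigmoid(s) for s in scores], labels)
cal_loss = log_loss([sigmoid(a * s + b) for s in scores], labels)
print(a, b, raw_loss, cal_loss)
```

In this setup the fitted slope should land near 1/3, undoing the artificial inflation. In practice the calibrator is fitted on data that was not used to train the underlying model, as Table 2 notes.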

Performance Metrics and Analysis

Evaluating calibration requires specific metrics beyond pure accuracy. The following table summarizes key quantitative measures used to assess the quality of a model's calibration, particularly in a classification context.

Table 3: Key Metrics for Evaluating Model Calibration

| Metric | Measures | Interpretation |
|---|---|---|
| Accuracy | The overall correctness of the model's class predictions. | Necessary but insufficient for assessing calibration; a model can be accurate but overconfident [2]. |
| Calibration Error (CE) | The average difference between model confidence and empirical accuracy. | A lower CE indicates better calibration. Often visualized with a reliability plot [2]. |
| Brier Score | The mean squared difference between predicted probabilities and actual outcomes. | A composite measure that assesses both calibration and refinement (sharpness); lower scores are better. |

Studies have shown that the choice of hyperparameter tuning strategy significantly impacts calibration. Optimizing for accuracy alone can lead to poorly calibrated models, whereas directly optimizing for calibration metrics like the Brier Score can yield models that are both accurate and well-calibrated [2]. Furthermore, combining train-time uncertainty methods like HBLL with post-hoc Platt scaling can synergistically boost both model accuracy and calibration [2].
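For readers who want to compute the metrics in Table 3 directly, the following sketch implements the Brier score and a binned calibration error for binary outcomes. The equal-width binning on the predicted positive-class probability is one common convention and is an assumption here; [2] may define its calibration error differently.

```python
def brier_score(probs, labels):
    """Mean squared difference between predicted probability and outcome (0/1)."""
    return sum((p - y) ** 2 for p, y in zip(probs, labels)) / len(probs)

def expected_calibration_error(probs, labels, n_bins=10):
    """Binned calibration error: size-weighted average of
    |mean predicted probability - observed positive rate| over equal-width bins."""
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        idx = min(int(p * n_bins), n_bins - 1)   # p == 1.0 falls in the last bin
        bins[idx].append((p, y))
    ece = 0.0
    for members in bins:
        if members:
            conf = sum(p for p, _ in members) / len(members)
            freq = sum(y for _, y in members) / len(members)
            ece += len(members) / len(probs) * abs(conf - freq)
    return ece

# Small hand-checkable example: four predictions at 0.9 (three correct)
# and two predictions at 0.1 (both negative).
probs = [0.9, 0.9, 0.9, 0.9, 0.1, 0.1]
labels = [1, 1, 1, 0, 0, 0]
print(brier_score(probs, labels))                 # 0.86 / 6, about 0.1433
print(expected_calibration_error(probs, labels))  # 4/6 * 0.15 + 2/6 * 0.10, about 0.1333
```

The example shows why the two metrics are reported together: the Brier score penalizes the single confident miss heavily, while the calibration error only measures how far each bin's average confidence sits from its observed frequency.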

Calibration is the critical bridge between theoretical models and observed reality. For researchers in drug development and computational biology, employing robust protocols like CaliPro for complex mechanistic models, or advanced uncertainty quantification with probability calibration for machine learning models, is essential for generating trustworthy, actionable insights. A rigorously calibrated model provides not just predictions, but reliable estimates of its own uncertainty, enabling well-informed, risk-aware decision-making in the costly and high-stakes process of scientific discovery and therapeutic development.

In modern computational science, the terms reproducibility and replicability are fundamental to the validation of scientific findings, yet they are often used inconsistently across disciplines. Within the context of this article, we adopt the following critical definitions:

- Reproducibility refers to the ability to obtain consistent computational results using the same input data, computational steps, methods, code, and conditions of analysis [5]. It is the computational cornerstone that allows other researchers to confirm that the original analysis was performed correctly.

- Replicability refers to the ability to obtain consistent results across studies aimed at answering the same scientific question, each of which has obtained its own data [5]. This involves independent investigators testing the original scientific hypothesis using new data or new experimental setups.

Calibration serves as the critical bridge between computational models and empirical reality. It is the systematic process of adjusting a model's parameters to minimize the discrepancy between its predictions and experimental observations. When calibrating simulation parameters from experimental data, researchers ensure that their computational tools are not merely producing output, but are generating scientifically valid, meaningful results that can reliably inform drug development and other research domains. The evolving practices of science, including increased data availability and computational complexity, have made these concepts more critical than ever [5].

The Role of Calibration in Scientific Workflows

Calibration transforms abstract computational models into quantitatively accurate tools for prediction and analysis. In computational science, particularly when parameters are derived from experimental data, a well-calibrated model ensures that simulations reflect underlying physical, chemical, or biological realities rather than computational artifacts.

A failure to properly calibrate can lead to models that are precisely wrong—producing consistent but inaccurate results that undermine both reproducibility and replicability. The pressure to publish in high-impact journals can sometimes create incentives to overstate results or overlook proper calibration practices, increasing the risk of bias [5]. Proper calibration mitigates this risk by providing a systematic, documented methodology for aligning models with data.

Table 1: Contrasting Calibrated and Uncalibrated Research Approaches

| Aspect | Well-Calibrated Research | Poorly Calibrated Research |
|---|---|---|
| Parameter Estimation | Parameters are systematically tuned against reliable experimental datasets. | Parameters are arbitrarily selected or tuned to fit limited data. |
| Result Reproducibility | High; same inputs and methods yield consistent results. | Variable; results may be sensitive to undocumented factors. |
| Result Replicability | High; underlying model accurately captures phenomena for new data. | Low; model fails when applied to new experimental conditions. |
| Uncertainty Quantification | Explicitly characterized and reported. | Often ignored or inadequately addressed. |
| Model Robustness | Performs well across a range of validated conditions. | May fail outside very specific training conditions. |

Experimental Protocols for Effective Calibration

Protocol: A General Framework for Calibrating Simulation Parameters

This protocol provides a methodological approach for calibrating computational models using experimental data, with a focus on ensuring reproducibility and replicability.

Define the Calibration Objective and Experimental Data

- Clearly state the specific model outputs that require calibration.

- Identify and curate the high-quality experimental dataset that will serve as the calibration target. Document all relevant experimental conditions and metadata.

- Establish quantitative metrics (e.g., Mean Squared Error, Kling-Gupta Efficiency [6]) that will measure the agreement between model outputs and experimental data.
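The two agreement metrics named above are short enough to write out. The sketch below uses the standard 2009 form of the Kling-Gupta Efficiency, KGE = 1 - sqrt((r - 1)^2 + (alpha - 1)^2 + (beta - 1)^2), where r is the Pearson correlation, alpha the ratio of standard deviations, and beta the ratio of means (simulated over observed). Whether [6] uses this form or a later variant is not stated in the text, so treat that choice as an assumption.

```python
import math
import statistics

def mse(sim, obs):
    """Mean squared error between simulated and observed values."""
    return sum((s - o) ** 2 for s, o in zip(sim, obs)) / len(obs)

def kge(sim, obs):
    """Kling-Gupta Efficiency (2009 form); 1.0 is a perfect match."""
    mu_s, mu_o = statistics.fmean(sim), statistics.fmean(obs)
    sd_s, sd_o = statistics.pstdev(sim), statistics.pstdev(obs)
    cov = sum((s - mu_s) * (o - mu_o) for s, o in zip(sim, obs)) / len(obs)
    r = cov / (sd_s * sd_o)          # Pearson correlation
    alpha = sd_s / sd_o              # variability ratio
    beta = mu_s / mu_o               # bias ratio
    return 1.0 - math.sqrt((r - 1) ** 2 + (alpha - 1) ** 2 + (beta - 1) ** 2)

obs = [1.0, 2.0, 3.0, 4.0]
sim = [2.0 * o for o in obs]         # perfectly correlated, but twice as large
print(mse(sim, obs), kge(sim, obs))  # MSE = 7.5, KGE = 1 - sqrt(2)
```

The doubled series illustrates the difference in emphasis: its correlation is perfect, yet both metrics flag it, with KGE attributing the penalty separately to the variability and bias terms. Note that KGE is undefined when the observations have zero variance or zero mean.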

Parameter Selection and Uncertainty Specification

- Identify the subset of model parameters to be calibrated. Justify this selection based on sensitivity analysis or domain knowledge.

- Define plausible physical ranges (priors) for each parameter based on literature or theoretical constraints.

Execute the Calibration Procedure

- Employ appropriate optimization or sampling algorithms to find the parameter set that minimizes the discrepancy metric.

- For stochastic models, ensure multiple runs are performed to account for variability [6].

- Maintain a detailed log of all parameter sets evaluated and their corresponding performance metrics.

Validate the Calibrated Model

- Test the calibrated model against a separate, held-out set of experimental data not used during the calibration process. This is critical for assessing replicability.

- Perform uncertainty analysis to quantify confidence in the model predictions.

Document and Archive for Reproducibility

- Record the final calibrated parameter values, the software environment, and the exact version of the code and data used.

- Archive all scripts, data, and computational workflows to enable other researchers to reproduce the calibration process exactly [5].
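One lightweight way to act on these documentation steps is to write a machine-readable provenance record next to the calibrated parameters. The sketch below is only an illustration: the field names, the `code_version` string, and the idea of hashing input files with SHA-256 are assumptions, not a format prescribed by [5].

```python
import hashlib
import json
import platform
import sys
from datetime import datetime, timezone

def sha256_of(path, chunk_size=1 << 20):
    """Hash a data file in chunks so large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def build_record(params, data_paths, code_version):
    """Collect what is needed to re-run the calibration (hypothetical schema)."""
    return {
        "created_utc": datetime.now(timezone.utc).isoformat(),
        "python": sys.version,
        "platform": platform.platform(),
        "code_version": code_version,          # e.g. a commit hash or release tag
        "calibrated_parameters": params,
        "data_sha256": {path: sha256_of(path) for path in data_paths},
    }

def save_record(record, out_path):
    """Write the record as indented JSON so it can be archived and diffed."""
    with open(out_path, "w", encoding="utf-8") as handle:
        json.dump(record, handle, indent=2, sort_keys=True)
```

A typical call would look like `save_record(build_record({"k_growth": 0.12}, ["targets.csv"], "abc1234"), "calibration_record.json")`, where the parameter name, file name, and version string are placeholders. Recording file hashes alongside the code version makes it possible to detect later whether the data or the code changed between runs.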

Protocol: Radar Calibration Using a Target Simulator

This protocol, adapted from Schneebeli et al. (2025), exemplifies a high-precision end-to-end calibration methodology using an electronically generated reference [7].

Setup and Instrumentation

- Equipment: Polarimetric Radar Target Simulator, the radar system under test, lifting platform (if required to elevate the simulator and minimize ground reflections).

- Configuration: Precisely measure the distance between the radar and the target simulator.

Generate Reference Targets

- Use the target simulator to create virtual point targets with well-defined Radar Cross-Section, polarimetric signature, and Doppler shift.

- The power re-emitted by the simulator is controlled by the scaling factor

$$k = \frac{2\pi\sqrt{\sigma_b}\, r_s^2}{G_{RTS}\, \lambda\, r_t^2},$$

where $\sigma_b$ is the desired RCS, $r_s$ is the radar-simulator distance, $G_{RTS}$ is the simulator antenna gain, $\lambda$ is the wavelength, and $r_t$ is the virtual target range [7].
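A quick numerical check can help when setting up such a simulator. The function below transcribes the scaling-factor expression exactly as it is printed above and should be verified against [7] before any real use. It assumes SI units (metres, square metres) and a linear, not decibel, antenna gain, and the example values are invented.

```python
import math

def rts_scaling_factor(sigma_b, r_s, g_rts, wavelength, r_t):
    """Scaling factor k as written in the text.

    sigma_b    desired radar cross-section (m^2)
    r_s        radar-simulator distance (m)
    g_rts      simulator antenna gain (linear, assumed)
    wavelength radar wavelength (m)
    r_t        virtual target range (m)
    """
    return (2 * math.pi * math.sqrt(sigma_b) * r_s ** 2) / (g_rts * wavelength * r_t ** 2)

# Hypothetical setup: 1 m^2 target, simulator 100 m away, gain 100 (20 dB),
# 5 cm wavelength, virtual target placed at 1 km.
k = rts_scaling_factor(sigma_b=1.0, r_s=100.0, g_rts=100.0, wavelength=0.05, r_t=1000.0)
print(k)  # about 0.01257
```

With the expression in this form, doubling the virtual target range reduces k by a factor of four, and k is dimensionless when all lengths share the same unit.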

Data Acquisition

- Direct the radar antenna toward the target simulator.

- Capture radar measurements (reflectivity, differential reflectivity, Doppler velocity) of the generated virtual targets.

Analysis and Bias Correction

- Compare the measured radar variables against the known properties of the virtual targets.

- Quantify any systematic bias in the radar's measurements.

- Apply necessary corrections to the radar's data processing chain to eliminate the identified biases.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Tools and Resources for Calibrated Computational Research

| Tool / Resource | Function | Relevance to Calibration |
|---|---|---|
| ArgyllCMS with DisplayCAL | An open-source color management system used for display calibration and profiling [8]. | Ensures visual output is accurate and consistent across different hardware, which is critical for image-based analysis. |
| Radar Target Simulator (RTS) | Generates electronic point targets with known radar cross-sections for end-to-end radar calibration [7]. | Provides a precise reference standard for calibrating complex instrumentation, eliminating positioning uncertainties. |
| Reproducible Research Compendium | A complete collection of data, code, and environment specifications needed to reproduce results [5]. | The foundational artifact for achieving computational reproducibility by allowing others to regenerate results exactly. |
| Material Symbols | A library of over 2,500 icons with adjustable design axes (weight, grade, optical size) [9]. | Provides consistently rendered visual elements for user interfaces and scientific dashboards, ensuring clarity. |
| WCAG Contrast Checkers | Tools that verify text and visual elements meet minimum contrast ratios (e.g., 4.5:1 for Level AA) [10] [11]. | Ensures that all visual scientific communication is accessible and that information is not lost due to poor color choice. |

Visualizing the Calibration-Replicability Relationship

The following diagram illustrates the central role of calibration in the iterative cycle of scientific discovery, connecting computational work with experimental validation.

Calibration is not merely a technical step in data processing; it is a fundamental scientific practice that upholds the pillars of modern research: reproducibility and replicability. By rigorously aligning computational models with empirical data, calibration ensures that scientific findings are both trustworthy and transferable. The protocols and tools outlined herein provide a roadmap for researchers, particularly in fields like drug development, to build robust, reliable, and ultimately more impactful scientific workflows. As computational methods continue to grow in complexity and influence, a steadfast commitment to rigorous calibration will remain essential for ensuring that our digital tools accurately reflect the realities they are designed to explore.

Calibration is a fundamental process in scientific modeling, defined as the adjustment of a model's unobservable parameters to ensure its outputs align closely with observed empirical data [12] [13]. In the context of computer simulation models, calibration serves as a critical step for estimating parameters that cannot be directly measured, particularly when direct data are unavailable for certain components of a biological or physical system [12]. This process is especially vital in complex fields like cancer research and drug development, where models must accurately represent natural history disease progression or predict clinical outcomes despite significant knowledge gaps.

The calibration process functions as an inverse solution, where researchers work backward from known outcomes to determine the input parameters that would produce those results [14]. This approach is particularly valuable when forward modeling is infeasible due to system complexity or unobservable processes. In health technology assessment and clinical research, proper calibration enables models to inform critical decisions about screening guidelines, treatment efficacy, and resource allocation [12] [13]. The credibility of these models hinges on rigorous calibration and subsequent validation against independent data sources [13].

Common Calibration Targets Across Research Domains

Calibration Targets in Cancer Modeling

In cancer simulation models, calibration targets are typically population-level epidemiological outcomes derived from large-scale observational studies and registries. These targets provide the empirical benchmarks against which model outputs are compared during the calibration process. The most frequently used targets include disease incidence, mortality rates, and disease prevalence, which collectively capture the population burden of cancer over time [12]. These data are commonly sourced from comprehensive cancer registries such as the National Cancer Institute's Surveillance, Epidemiology, and End Results (SEER) program, which provides high-quality, population-based information on cancer incidence and survival [12] [15].

Additional important targets in cancer modeling include stage distribution at diagnosis and survival statistics, which reflect both the natural history of the disease and the impact of early detection and treatment interventions [12]. For example, the Cancer Intervention and Surveillance Modeling Network (CISNET) models, which inform U.S. preventive services screening recommendations, rely heavily on these calibration targets to ensure their projections align with observed population patterns [12]. The table below summarizes the most common calibration targets used in cancer simulation modeling.

Table 1: Common Calibration Targets in Cancer Simulation Models

| Calibration Target | Description | Common Data Sources |
|---|---|---|
| Incidence | Rate of new cancer cases within a specific time period | Cancer registries (e.g., SEER), observational studies |
| Mortality | Death rate due to cancer | Vital statistics records, cancer registries |
| Prevalence | Proportion of individuals with cancer at a specific point in time | Cancer registries, population health studies |
| Stage Distribution | Breakdown of cancer cases by stage at diagnosis | Cancer registries, diagnostic imaging databases |
| Survival Statistics | Proportion of patients living for a certain time after diagnosis | Clinical trials, cohort studies, cancer registries |

Calibration Targets in Clinical Trial Contexts

In clinical trial research and drug development, calibration targets shift toward more specific efficacy and safety endpoints. For oncology trials, time-to-event endpoints such as overall survival (OS) and progression-free survival (PFS) serve as critical calibration targets [16] [15]. These endpoints are particularly important when reconciling differences between randomized controlled trial results and real-world evidence, where measurement error and differences in assessment protocols can introduce significant bias [16].

The emergence of real-world data (RWD) from electronic health records, claims databases, and registry studies has created new opportunities and challenges for calibration in clinical research [16] [15]. When using RWD to construct external control arms for single-arm trials or to contextualize trial results in broader populations, researchers must calibrate for systematic differences in outcome measurement between highly controlled trial settings and routine clinical practice [16]. This often requires specialized statistical methods, such as survival regression calibration (SRC), which addresses measurement error in time-to-event outcomes [16].

Table 2: Common Calibration Targets in Clinical Trial Contexts

| Calibration Target | Description | Application Context |
|---|---|---|
| Median Survival Times | Median overall survival or progression-free survival | Oncology trials, comparative effectiveness research |
| Restricted Mean Survival Time | Average survival time up to a predefined timepoint | Trial emulation, real-world evidence generation |
| Hazard Ratios | Relative risk of event between treatment groups | Cross-study comparisons, meta-analyses |
| Response Rates | Proportion of patients achieving clinical response | Dose optimization studies, biomarker validation |
| Safety Endpoints | Incidence of adverse events, treatment discontinuation | Benefit-risk assessment, pharmacovigilance |

Methodological Frameworks for Calibration

Goodness-of-Fit Metrics and Acceptance Criteria

Selecting appropriate goodness-of-fit (GOF) metrics is essential for quantifying the alignment between model outputs and calibration targets. The choice of GOF metric depends on the statistical properties of the calibration targets and the modeling context. In cancer simulation models, the most commonly used GOF measure is mean squared error (MSE), which calculates the average squared difference between model outputs and observed data [12]. Weighted MSE is often employed when calibration targets have different degrees of uncertainty or variable importance [12].

Other frequently used GOF metrics include likelihood-based measures, which evaluate the probability of observing the calibration targets given the model parameters, and confidence interval scores, which assess whether model outputs fall within the confidence intervals of the observed data [12]. The ISPOR-SMDM Modeling Good Research Practices Task Force emphasizes that GOF metrics should be appropriate for the mathematical structure of the model and should account for uncertainty in both the empirical data and model outputs [13].

Acceptance criteria define the thresholds for determining whether a model's fit to calibration targets is sufficient for its intended purpose [12]. These criteria may include statistical significance levels, absolute difference thresholds, or relative error limits. For example, a model might be considered calibrated if the MSE falls below a predetermined value or if a specified percentage of model outputs fall within the confidence intervals of the observed data [12].
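The GOF metrics and acceptance criteria described in this section can be combined into a single check. The sketch below is illustrative only: the function names, the threshold arguments, and the incidence figures in the example are hypothetical, and weighting each target by the inverse of its variance is just one plausible weighting scheme.

```python
def weighted_mse(outputs, targets, weights):
    """Weighted mean squared error; larger weights mark more trusted targets."""
    total_weight = sum(weights)
    return sum(w * (m - t) ** 2
               for m, t, w in zip(outputs, targets, weights)) / total_weight

def ci_coverage(outputs, intervals):
    """Fraction of model outputs that fall inside the targets' confidence intervals."""
    inside = sum(1 for m, (lo, hi) in zip(outputs, intervals) if lo <= m <= hi)
    return inside / len(outputs)

def is_calibrated(outputs, targets, weights, intervals, max_wmse, min_coverage):
    """Acceptance rule: both the weighted MSE and the CI coverage must pass."""
    return (weighted_mse(outputs, targets, weights) <= max_wmse
            and ci_coverage(outputs, intervals) >= min_coverage)

# Hypothetical incidence targets per 100,000 with 95% confidence intervals.
targets = [50.0, 62.0, 75.0]
intervals = [(47.0, 53.0), (59.0, 65.0), (71.0, 79.0)]
# Inverse-variance weights, taking the standard error as half-width / 1.96.
weights = [(1.96 / ((hi - lo) / 2)) ** 2 for lo, hi in intervals]
outputs = [51.0, 60.5, 80.0]         # model outputs for one parameter set

print(weighted_mse(outputs, targets, weights))
print(ci_coverage(outputs, intervals))   # two of the three outputs are inside
print(is_calibrated(outputs, targets, weights, intervals,
                    max_wmse=10.0, min_coverage=0.9))
```

In this example the parameter set meets the weighted MSE threshold but is still rejected because only two of three outputs fall inside their intervals, which shows why the two criteria are not interchangeable.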

Parameter Search Algorithms

Parameter search algorithms identify combinations of unobservable parameters that minimize the GOF metric, effectively searching the parameter space for optimal solutions [12]. The choice of algorithm depends on the model's complexity, the number of parameters requiring estimation, and computational constraints.

The simplest approach is grid search, which involves discretizing continuous parameters and evaluating all possible combinations within the defined parameter space [12]. While straightforward to implement, this method becomes computationally prohibitive for models with many parameters due to the "curse of dimensionality." For instance, one study noted that calibrating a breast cancer simulation model using grid search would require approximately 70 days to evaluate all parameter combinations [12].

Random search represents another common approach, where parameter values are randomly sampled from predefined distributions [12]. This method often proves more efficient than grid search for high-dimensional problems. More sophisticated approaches include the Nelder-Mead simplex method, Bayesian optimization, and various machine learning algorithms [12]. Despite advances in machine learning, these methods remain underutilized in cancer modeling, presenting an opportunity for methodological improvement [12].
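The cost argument behind the "curse of dimensionality" is easy to verify numerically, and both basic search strategies fit in a few lines. In the sketch below, the three-parameter `gof` surface and its optimum are invented purely for illustration; a real calibration would replace it with a full model run followed by a GOF calculation.

```python
import itertools
import random

def grid_points(levels_per_param):
    """Number of model runs a full grid needs: the product of the level counts."""
    total = 1
    for levels in levels_per_param:
        total *= levels
    return total

def gof(params):
    """Hypothetical GOF surface: squared distance to an assumed optimum."""
    optimum = (0.3, 0.7, 0.5)
    return sum((p - o) ** 2 for p, o in zip(params, optimum))

def grid_search(levels):
    """Evaluate every combination of `levels` evenly spaced values per parameter."""
    axis = [i / (levels - 1) for i in range(levels)]
    return min(itertools.product(axis, repeat=3), key=gof)

def random_search(n_draws, seed=0):
    """Evaluate n_draws parameter sets sampled uniformly from the unit cube."""
    rng = random.Random(seed)
    draws = (tuple(rng.random() for _ in range(3)) for _ in range(n_draws))
    return min(draws, key=gof)

print(grid_points([10] * 3))   # 1000 runs for 3 parameters
print(grid_points([10] * 8))   # 100000000 runs for 8 parameters
print(grid_search(11))         # 1331 runs on an 11-level grid
print(random_search(1331))     # same budget, randomly placed
```

At ten levels per parameter, every added parameter multiplies the number of runs by ten, which is how a search that is trivial for three parameters becomes impractical for eight. Random search decouples the budget from the dimensionality, at the price of no longer guaranteeing coverage of any particular grid point.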

Diagram 1: General calibration workflow showing the iterative process of comparing model outputs to calibration targets and adjusting parameters until acceptance criteria are met.

Experimental Protocols for Calibration

Protocol 1: Calibrating Cancer Natural History Models

Purpose: To estimate unobservable natural history parameters in cancer simulation models using population-level epidemiological targets.

Materials and Methods:

- Data Sources: Obtain cancer incidence, mortality, and prevalence data from high-quality registries (e.g., SEER). Gather stage distribution and survival statistics from observational studies or clinical databases.

- Parameter Selection: Identify unobservable parameters requiring estimation through calibration (e.g., tumor growth rates, proportion of indolent tumors, transition probabilities between disease states).

- Goodness-of-Fit Metric Selection: Choose appropriate GOF metrics (typically MSE or weighted MSE for time-series data).

- Parameter Search: Implement efficient search algorithms (random search, Bayesian optimization, or Nelder-Mead method) to explore parameter space.

Procedure:

- Define calibration targets and their associated uncertainty measures.

- Specify plausible ranges for each parameter based on biological constraints or prior knowledge.

- Initialize parameter values within predefined ranges.

- Run the simulation model with current parameter values.

- Calculate GOF between model outputs and calibration targets.

- Apply parameter search algorithm to update parameter values.

- Repeat the simulation, GOF calculation, and parameter update steps until a stopping rule is triggered (e.g., maximum iterations, computation time, or convergence criteria).

- Apply acceptance criteria to determine if calibration is successful.

- Document all calibrated parameter values and their fit to calibration targets.
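The procedure above can be sketched end to end on a toy problem. Everything in the block is an assumption made for illustration: the three-state cohort model (healthy, preclinical, clinically detected), the synthetic targets generated from known parameter values, the tolerance, and the search itself, which is a simple adaptive random-perturbation scheme in the spirit of a (1+1) evolution strategy, not any of the specific algorithms surveyed in [12].

```python
import random

def simulate_incidence(p_onset, p_progress, years=10, cohort=100_000.0):
    """Toy natural-history model: yearly clinical incidence in a closed cohort."""
    healthy, preclinical = cohort, 0.0
    incidence = []
    for _ in range(years):
        new_clinical = preclinical * p_progress      # preclinical -> detected
        new_preclinical = healthy * p_onset          # healthy -> preclinical
        healthy -= new_preclinical
        preclinical += new_preclinical - new_clinical
        incidence.append(new_clinical)
    return incidence

def mse(outputs, targets):
    return sum((m - t) ** 2 for m, t in zip(outputs, targets)) / len(targets)

def calibrate(targets, ranges, max_iter=20000, tol=1.0, seed=0):
    """Perturb the current parameters, keep improvements, adapt the step size."""
    rng = random.Random(seed)
    current = [rng.uniform(lo, hi) for lo, hi in ranges]     # initialize in range
    best = mse(simulate_incidence(*current), targets)
    sigma = 0.1                                   # step size as fraction of range
    for iteration in range(max_iter):
        if best <= tol:                           # stopping rule: convergence
            return current, best, iteration
        proposal = [min(hi, max(lo, v + rng.gauss(0.0, sigma * (hi - lo))))
                    for v, (lo, hi) in zip(current, ranges)]
        gof = mse(simulate_incidence(*proposal), targets)
        if gof < best:
            current, best = proposal, gof
            sigma *= 1.5                          # success: take larger steps
        else:
            sigma *= 1.5 ** -0.25                 # failure: take smaller steps
    return current, best, max_iter                # stopping rule: iteration cap

# Synthetic targets from known "true" values, so recovery can be checked.
targets = simulate_incidence(0.02, 0.4)
ranges = [(0.001, 0.1), (0.1, 0.9)]               # plausible ranges (assumed)
fitted, final_gof, n_iter = calibrate(targets, ranges)
print(fitted, final_gof, n_iter)
```

One detail worth noting is that the toy model is symmetric in its two rates: swapping the onset and progression probabilities yields the same incidence curve. The plausible ranges are what make the parameters identifiable here, which is a small-scale version of why range specification matters in real natural-history models.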

Validation: Following calibration, validate the model using data not used in the calibration process (temporal, geographic, or conceptual validation) [13].

Protocol 2: Survival Regression Calibration for Time-to-Event Outcomes

Purpose: To correct for measurement error in time-to-event outcomes when combining randomized trial data with real-world evidence.

Materials and Methods:

- Data Sources: Internal validation sample with both "true" (trial-like) and "mismeasured" (real-world-like) outcome assessments. Full study sample with mismeasured outcomes only.

- Statistical Models: Weibull regression models for time-to-event data. Standard regression calibration for continuous outcomes.

Procedure:

- In the validation sample, fit separate Weibull regression models using the true outcome (Y) and the mismeasured outcome (Y*) as dependent variables [16].

- Estimate the relationship between the parameters of the true and mismeasured Weibull models.

- Using this relationship, calibrate the mismeasured outcome values in the full study sample [16].

- Compare the calibrated versus uncalibrated estimates of the survival endpoint of interest (e.g., median PFS).

- Evaluate reduction in measurement error bias through simulation or comparison to known benchmarks.

Application: This method is particularly valuable when using real-world data to construct external control arms for single-arm trials, where outcomes are measured without error in the trial but potentially with error in the real-world cohort [16].
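To give a feel for the mechanics, the sketch below implements one simplified reading of the procedure: fit Weibull models to the true and mismeasured outcomes in the validation sample, take the ratio of the fitted parameters as the "relationship", and apply that ratio to the Weibull fit of the mismeasured outcomes in the full sample. This is an assumption-laden illustration and not the published SRC estimator from [16]. It ignores censoring and covariates, and the synthetic data (a 25% inflation of event times with multiplicative noise) are invented.

```python
import math
import random

def fit_weibull(times):
    """Maximum-likelihood Weibull fit for uncensored times; returns (shape, scale)."""
    logs = [math.log(t) for t in times]
    mean_log = sum(logs) / len(logs)

    def score(k):
        # Profile-likelihood equation for the shape; zero at the MLE, increasing in k.
        powered = [t ** k for t in times]
        weighted = sum(p * l for p, l in zip(powered, logs))
        return weighted / sum(powered) - 1.0 / k - mean_log

    lo, hi = 0.05, 20.0
    for _ in range(60):                       # bisection on the shape parameter
        mid = 0.5 * (lo + hi)
        if score(mid) > 0:
            hi = mid
        else:
            lo = mid
    shape = 0.5 * (lo + hi)
    scale = (sum(t ** shape for t in times) / len(times)) ** (1.0 / shape)
    return shape, scale

def weibull_median(shape, scale):
    return scale * math.log(2.0) ** (1.0 / shape)

def calibrate_parameters(val_true, val_mis, full_mis):
    """Correct the full-sample fit by the true/mismeasured parameter ratios
    learned in the validation sample (simplified, hypothetical scheme)."""
    k_true, s_true = fit_weibull(val_true)
    k_mis, s_mis = fit_weibull(val_mis)
    k_full, s_full = fit_weibull(full_mis)
    return k_full * (k_true / k_mis), s_full * (s_true / s_mis)

rng = random.Random(7)

def draw_true(n):
    return [rng.weibullvariate(12.0, 1.5) for _ in range(n)]   # scale 12, shape 1.5

def mismeasure(t):
    return 1.25 * t * math.exp(rng.gauss(0.0, 0.1))            # inflated, noisy time

val_true = draw_true(300)                       # validation sample: both versions
val_mis = [mismeasure(t) for t in val_true]
full_mis = [mismeasure(t) for t in draw_true(2000)]   # full sample: mismeasured only

true_median = weibull_median(1.5, 12.0)
naive_median = weibull_median(*fit_weibull(full_mis))
calibrated_median = weibull_median(*calibrate_parameters(val_true, val_mis, full_mis))
print(true_median, naive_median, calibrated_median)
```

In this synthetic setting the uncalibrated median is biased upward by roughly the inflation factor, and the calibrated estimate should sit much closer to the true value. A real analysis would need to handle censoring, which requires a survival-specific likelihood in place of the uncensored fit used here.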

Diagram 2: Survival regression calibration workflow for addressing measurement error in time-to-event outcomes when combining trial and real-world data.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for Calibration Studies

| Tool/Reagent | Function | Application Context |
|---|---|---|
| Cancer Registry Data | Provides population-level incidence, mortality, and survival data for calibration targets | Cancer natural history model calibration |
| Structured Query Language (SQL) | Extracts and transforms electronic health record data for analysis | Real-world evidence generation for clinical trial emulation |
| Gradient Boosting Machine (GBM) | Machine learning algorithm for prognostic phenotyping of real-world patients | Risk stratification in trial emulation frameworks |
| Weibull Regression Models | Parametric survival models for time-to-event data | Survival regression calibration for measurement error correction |
| Bayesian Optimization | Efficient parameter search algorithm for high-dimensional problems | Calibration of complex simulation models with many parameters |
| Inverse Probability of Treatment Weighting | Statistical method for balancing covariates between treatment groups | Trial emulation using observational data |
| Platt Scaling | Post-hoc calibration method for correcting probabilistic predictions | Machine learning model calibration in drug-target interaction prediction |
| Monte Carlo Dropout | Approximation to Bayesian inference for uncertainty quantification | Neural network calibration in cheminformatics applications |

Advanced Applications and Future Directions

Machine Learning for Prognostic Phenotyping in Trial Emulation

Advanced machine learning techniques are increasingly employed to address challenges in translating clinical trial results to real-world populations. The TrialTranslator framework exemplifies this approach, using gradient boosting machines (GBMs) to risk-stratify real-world oncology patients into distinct prognostic phenotypes before emulating landmark phase 3 trials [15]. This method has revealed significant heterogeneity in treatment effects across risk groups, with high-risk phenotypes showing substantially shorter survival times and smaller treatment benefits than RCT populations [15].

The implementation involves developing cancer-specific prognostic models optimized for predictive performance at clinically relevant timepoints (e.g., 1-year survival for advanced NSCLC, 2-year survival for other solid tumors) [15]. The top-performing model – typically GBM based on time-dependent AUC metrics – is then used to calculate mortality risk scores for real-world patients, enabling their stratification into low-, medium-, and high-risk phenotypes [15]. This approach facilitates more nuanced assessment of trial generalizability beyond simple eligibility criteria matching.

Probability Calibration in Drug Discovery Applications

In drug discovery, neural network-based structure-activity models often exhibit poor calibration, where their confidence estimates do not reflect true predictive uncertainty [2]. This problem is particularly consequential in high-stakes decision processes where inaccurate uncertainty estimates can lead to costly misallocations of experimental resources.

Multiple approaches have emerged to address this challenge, including post-hoc calibration methods like Platt scaling and train-time uncertainty quantification methods such as Monte Carlo dropout [2]. The HMC Bayesian Last Layer (HBLL) approach represents a promising advancement, generating Hamiltonian Monte Carlo trajectories to obtain samples for the parameters of a Bayesian logistic regression fitted to the hidden layer of a baseline neural network [2]. This method combines the benefits of uncertainty estimation and probability calibration while maintaining computational efficiency.

The selection of hyperparameter tuning metrics significantly impacts model calibration properties. Studies demonstrate that combining post-hoc calibration with well-performing uncertainty quantification approaches can boost both model accuracy and calibration, emphasizing the importance of comprehensive calibration strategies in cheminformatics applications [2].
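To make the post-hoc idea concrete, the following sketch applies Platt-style logistic recalibration to a synthetic, overconfident classifier. The data generator, the factor of three on the logits, and the plain gradient-descent fit are assumptions for illustration; the sketch omits the label smoothing of Platt's original formulation and is unrelated to the HBLL method of [2].

```python
import math
import random

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def fit_platt(scores, labels, learning_rate=0.1, epochs=1000):
    """Fit p = sigmoid(a * score + b) by gradient descent on the log loss."""
    a, b = 1.0, 0.0
    n = len(scores)
    for _ in range(epochs):
        grad_a = grad_b = 0.0
        for s, y in zip(scores, labels):
            error = sigmoid(a * s + b) - y
            grad_a += error * s
            grad_b += error
        a -= learning_rate * grad_a / n
        b -= learning_rate * grad_b / n
    return a, b

def log_loss(probs, labels):
    eps = 1e-12
    total = sum(y * math.log(max(p, eps)) + (1 - y) * math.log(max(1 - p, eps))
                for p, y in zip(probs, labels))
    return -total / len(labels)

# Synthetic, overconfident model: its logits are three times too large.
rng = random.Random(0)
true_logits = [rng.gauss(0.0, 1.5) for _ in range(1000)]
labels = [1 if rng.random() < sigmoid(z) else 0 for z in true_logits]
scores = [3.0 * z for z in true_logits]

# Fit on a calibration split, evaluate on the remaining held-out examples.
a, b = fit_platt(scores[:600], labels[:600])
test_scores, test_labels = scores[600:], labels[600:]
raw_loss = log_loss([sigmoid(s) for s in test_scores], test_labels)
calibrated_loss = log_loss([sigmoid(a * s + b) for s in test_scores], test_labels)
print(round(a, 3), round(b, 3), round(raw_loss, 3), round(calibrated_loss, 3))
```

The fitted slope shrinks the inflated logits back toward their true scale, which lowers the held-out log loss without changing the ranking of predictions.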

Calibration methodologies form a critical bridge between theoretical models and empirical reality across biomedical research domains. From population-level cancer simulations to individual-level prediction of drug-target interactions, appropriate calibration targets and methods ensure that models generate reliable, actionable evidence. The continued refinement of calibration techniques – particularly through machine learning approaches and specialized statistical methods for addressing measurement error – promises to enhance the credibility and utility of models in informing clinical and policy decisions. As modeling grows in complexity and scope, rigorous calibration remains fundamental to the responsible application of models in health research and drug development.

Mechanistic computational models are indispensable for interrogating biological theories, providing a structured approach to decipher complex cellular and physiological processes across multiple scales [3] [17]. Before these models can yield useful, predictive insights, they must first be calibrated—a process where model inputs and parameters are adjusted until outputs recapitulate existing experimental datasets [3] [1]. However, biological systems are inherently characterized by heterogeneity, polyfunctionality, and interactions across spatiotemporal scales, leading to high-dimensional parameter spaces with many degrees of freedom [18]. This complexity is compounded by limited and noisy data, as well as structurally unidentifiable parameters that cannot be uniquely determined from available observations [1] [17]. Navigating this complex parameter space is a fundamental challenge in quantitative biology. This Application Note provides a structured framework and practical protocols for tackling this challenge, enabling researchers to calibrate models robustly and derive biologically meaningful insights.

Foundational Concepts and Challenges

The Nature of the Problem

Calibrating biological models differs significantly from traditional parameter estimation. The goal is not to find a single optimal parameter set, but to identify ranges of biologically plausible parameter values that cause model simulations to fit within the boundaries of experimental data [1]. This is crucial for capturing the natural variability observed in biological systems, from single-cell measurements to population-level heterogeneity [3].

Key challenges include:

- High-dimensionality: Models often contain dozens of parameters, creating a vast hypercube of parameter space that cannot be exhaustively sampled [1].

- Practical non-identifiability: Available data may be insufficient to constrain parameters, even when models are structurally identifiable [17].

- Multi-scale dynamics: Biological systems evolve across multiple time scales, making accurate system identification particularly challenging [19].

A Taxonomy of Parameter Space Navigation Strategies

Table 1: Classification of Calibration Approaches for Biological Systems

| Approach | Core Principle | Ideal Use Case | Key Advantages |
|---|---|---|---|
| CaliPro [3] [1] | Iterative parameter density estimation using user-defined success criteria | Calibrating to full data distributions, not just median trends | Model-agnostic; finds robust parameter spaces; works with deterministic and stochastic models |
| Bayesian Multimodel Inference (MMI) [20] | Combines predictions from multiple candidate models using weighted averaging | When multiple plausible model structures exist for the same pathway | Increases predictive certainty; robust to model selection bias |
| Expert-Guided Multi-Objective Optimization [21] | Incorporates domain knowledge as hard and soft constraints in an optimization framework | Data-limited settings with strong prior knowledge from domain experts | Mitigates overfitting; improves biological relevance of solutions |
| SINDy with Multi-Scale Analysis [19] | Data-driven discovery of governing equations from multi-scale datasets | Systems where governing equations are unknown but rich time-series data exists | Discovers interpretable, parsimonious models directly from data |
| Bayesian Optimization [22] | Sample-efficient global optimization using Gaussian processes | Expensive-to-evaluate experiments (e.g., bioreactor conditions) | Dramatically reduces experimental resource requirements |

Core Methodologies and Application Protocols

Protocol 1: The CaliPro Framework for Temporal Data

The Calibration Protocol (CaliPro) is an iterative, model-agnostic method that utilizes parameter density estimation to refine parameter space when calibrating to temporal biological datasets [3].

Workflow Overview

Step-by-Step Procedure

1. Define Initial Parameter Ranges: Compile literature values and establish the widest biologically feasible range for each parameter. Well-constrained parameters should have narrower bounds [3] [1].

2. Establish Pass Set Definition: Critically, define what constitutes a "successful" simulation. Instead of minimizing a single metric, specify how simulations must recapitulate the full range of experimental outcomes (e.g., "must fall within the 5th-95th percentile of observed data") [3].

3. Perform Stratified Sampling: Use Latin Hypercube Sampling (LHS) or similar techniques to efficiently explore the high-dimensional parameter space, ensuring good coverage [3].

4. Execute Model & Evaluate: Run the model for each parameter combination and classify each simulation as a "pass" or "fail" based on the predefined criteria.

5. Estimate Parameter Densities: Calculate the density of passing parameters. The goal is to find a continuous, robust region of parameter space, not just individual points [3].

6. Refine Parameter Ranges: Use the density estimates to narrow the parameter bounds for the next iteration, focusing on regions with high density of passing simulations.

7. Iterate Until Convergence: Repeat steps 3-6 until the parameter ranges stabilize and the majority of sampled parameters within these ranges produce simulations that pass the criteria.
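The sample, classify, refine cycle can be sketched as follows. This is a simplified illustration rather than the CaliPro implementation of [3]: the logistic growth model, the `ENVELOPE` bounds, and the parameter names are hypothetical, and the density-estimation step is replaced by shrinking each range to the span of passing values.

```python
import math
import random

# Hypothetical data envelope: (lower, upper) bounds of observed values per time point.
ENVELOPE = {2.0: (25.0, 90.0), 5.0: (200.0, 600.0), 10.0: (800.0, 1200.0)}
INITIAL_RANGES = {"growth_rate": (0.1, 2.0), "capacity": (100.0, 2000.0)}
Y0 = 10.0  # assumed initial population size

def run_model(p, t):
    """Toy logistic growth model standing in for the simulation."""
    k = p["capacity"]
    return k / (1.0 + (k / Y0 - 1.0) * math.exp(-p["growth_rate"] * t))

def passes(p):
    """Pass set definition: every output must lie inside the data envelope."""
    return all(lo <= run_model(p, t) <= hi for t, (lo, hi) in ENVELOPE.items())

def latin_hypercube(n, ranges, rng):
    columns = []
    for lo, hi in ranges.values():
        # One draw from each of n equal-width strata, then shuffle the column.
        column = [lo + (hi - lo) * (i + rng.random()) / n for i in range(n)]
        rng.shuffle(column)
        columns.append(column)
    return [dict(zip(ranges, row)) for row in zip(*columns)]

def calibrate(ranges, n=500, target_pass_rate=0.5, max_iter=5, seed=3):
    rng = random.Random(seed)
    pass_rates = []
    for _ in range(max_iter):
        samples = latin_hypercube(n, ranges, rng)
        passing = [s for s in samples if passes(s)]
        pass_rates.append(len(passing) / n)
        if not passing or pass_rates[-1] >= target_pass_rate:
            break
        # Simplified refinement: shrink each range to the span of passing values.
        ranges = {name: (min(s[name] for s in passing), max(s[name] for s in passing))
                  for name in ranges}
    return ranges, pass_rates

final_ranges, pass_rates = calibrate(INITIAL_RANGES)
print(final_ranges, pass_rates)
```

The pass rate per iteration is the quantity to watch: it should rise as the ranges contract around the region that reproduces the data envelope.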

Protocol 2: Bayesian Multimodel Inference for Model Uncertainty

When multiple model structures can describe the same biological pathway, Bayesian Multimodel Inference (MMI) provides a disciplined approach to increase predictive certainty [20].

Workflow Overview

Step-by-Step Procedure

- Assemble Candidate Models: Curate a set of models \( \{ \mathcal{M}_1, \ldots, \mathcal{M}_K \} \) that represent the same biological pathway but differ in structure or simplifying assumptions [20].

- Calibrate Individual Models: For each model \( \mathcal{M}_k \), use Bayesian parameter estimation to infer posterior parameter distributions given training data \( d_{\text{train}} \). This yields model-specific predictive densities \( \text{p}(q_k \mid \mathcal{M}_k, d_{\text{train}}) \) for a Quantity of Interest (QoI) [20].

- Calculate Model Weights: Choose a weighting scheme based on the research goal:

  - Bayesian Model Averaging (BMA): Weights are the posterior model probabilities, \( w_k = \text{p}(\mathcal{M}_k \mid d_{\text{train}}) \), which are proportional to the marginal likelihood of the data under each model multiplied by that model's prior probability [20].

  - Stacking: Weights are chosen to maximize the posterior predictive accuracy of the combined model, often providing better predictive performance than BMA [20].

- Construct MMI Predictor: Form the multimodel prediction as a weighted combination: \( \text{p}(q \mid d_{\text{train}}, \mathfrak{M}_K) = \sum_{k=1}^K w_k \, \text{p}(q_k \mid \mathcal{M}_k, d_{\text{train}}) \) [20].

- Validate: Assess the predictive performance and robustness of the MMI predictor on held-out test data. MMI predictions are typically more robust to changes in the model set and data uncertainty than any single model [20].
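The weighting and combination steps reduce to a few lines of arithmetic. In the sketch below the log marginal likelihoods and the Gaussian predictive densities are made-up numbers, equal prior model probabilities are assumed, and only BMA weights are shown (stacking would need an additional optimization over held-out predictions).

```python
import math

def bma_weights(log_evidences, log_priors=None):
    """Posterior model probabilities from log marginal likelihoods (softmax)."""
    if log_priors is None:
        log_priors = [0.0] * len(log_evidences)  # equal prior model probabilities
    logs = [e + p for e, p in zip(log_evidences, log_priors)]
    top = max(logs)  # subtract the maximum for numerical stability
    unnormalized = [math.exp(v - top) for v in logs]
    total = sum(unnormalized)
    return [u / total for u in unnormalized]

def normal_pdf(q, mean, sd):
    return math.exp(-0.5 * ((q - mean) / sd) ** 2) / (sd * math.sqrt(2.0 * math.pi))

def mmi_density(q, weights, components):
    """Weighted mixture of model-specific predictive densities evaluated at q."""
    return sum(w * normal_pdf(q, m, s) for w, (m, s) in zip(weights, components))

def mmi_mean_sd(weights, components):
    """Mean and standard deviation of the mixture predictive distribution."""
    mean = sum(w * m for w, (m, _) in zip(weights, components))
    second = sum(w * (s ** 2 + m ** 2) for w, (m, s) in zip(weights, components))
    return mean, math.sqrt(second - mean ** 2)

# Hypothetical inputs for three candidate models.
log_evidences = [-10.0, -11.0, -13.0]
predictive = [(1.0, 0.2), (1.4, 0.3), (0.6, 0.5)]  # (mean, sd) of each model's QoI

weights = bma_weights(log_evidences)
mean, sd = mmi_mean_sd(weights, predictive)
print([round(w, 4) for w in weights], round(mean, 4), round(sd, 4))
```

The mixture standard deviation includes both the within-model spread and the disagreement between model means, which is why the MMI predictor reports wider, more honest uncertainty than the best single model.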

Protocol 3: Expert-Guided Multi-Objective Optimization

For settings with limited data, incorporating domain knowledge can critically constrain the parameter search.

Step-by-Step Procedure

- Formalize Expert Knowledge:

  - Hard Constraints: Define absolute biological boundaries based on direct measurements (e.g., "maximum cell doubling time must be < 24 hours").

  - Soft Constraints: Encode qualitative domain expectations (e.g., "calcium response curve should be monophasic") as additional optimization objectives [21].

- Set Up Multi-Objective Optimization: Use an algorithm such as NSGA-II to simultaneously optimize multiple objectives: 1) fit to quantitative data, and 2) adherence to soft qualitative constraints [21].

- Iterate and Refine: The resulting Pareto front represents trade-offs between quantitative fit and biological plausibility. Experts can review these solutions to further refine constraints and weights.
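The distinction between hard constraints, soft objectives, and the Pareto front can be shown without a full NSGA-II run. The sketch below only filters a fixed list of hypothetical candidate parameter sets; the candidate values, the `shape_penalty` objective, and the field names are invented for illustration, and no evolutionary search is performed.

```python
# Hypothetical candidate parameter sets with precomputed objective values.
candidates = [
    {"name": "A", "doubling_time_h": 20.0, "data_misfit": 0.10, "shape_penalty": 0.80},
    {"name": "B", "doubling_time_h": 18.0, "data_misfit": 0.20, "shape_penalty": 0.30},
    {"name": "C", "doubling_time_h": 22.0, "data_misfit": 0.40, "shape_penalty": 0.10},
    {"name": "D", "doubling_time_h": 30.0, "data_misfit": 0.05, "shape_penalty": 0.05},
    {"name": "E", "doubling_time_h": 21.0, "data_misfit": 0.30, "shape_penalty": 0.50},
]
MAX_DOUBLING_TIME_H = 24.0  # hard constraint from the example in the text

def objectives(candidate):
    """Both objectives are minimized: quantitative misfit and soft-constraint penalty."""
    return (candidate["data_misfit"], candidate["shape_penalty"])

def dominates(a, b):
    """True if a is no worse than b in every objective and better in at least one."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

# Hard constraints remove candidates outright; soft constraints stay as objectives.
feasible = [c for c in candidates if c["doubling_time_h"] < MAX_DOUBLING_TIME_H]
front = [c for c in feasible
         if not any(dominates(objectives(o), objectives(c)) for o in feasible)]
front_names = sorted(c["name"] for c in front)
print(front_names)
```

Candidate D has the best scores on both objectives but violates the hard constraint, so it never reaches the front; this is the mechanism by which expert knowledge guards against biologically implausible fits.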

Practical Applications and Case Studies

Case Study: CaliPro in Infectious Disease Modeling

CaliPro has been successfully applied to calibrate an infectious disease transmission model to temporal incidence data [3]. The pass set definition required that model simulations capture the rising, peak, and falling phases of an outbreak within the confidence intervals of reported case data. The protocol identified a robust parameter space that could recapitulate the observed epidemic trajectory, revealing key insights into the plausible range of the basic reproduction number \( R_0 \) and the duration of infectiousness. This approach is particularly valuable for policy planning, as it provides a family of plausible parameter sets for forecasting, rather than a single, potentially overfitted, prediction [3] [1].

Case Study: MMI for ERK Signaling Pathway

Ten different ordinary differential equation models of the core ERK signaling pathway were integrated using MMI [20]. The MMI consensus predictor was more robust to increases in data uncertainty and changes in the composition of the model set than any single, "best-fit" model. When applied to subcellular location-specific ERK activity data, MMI suggested that differences in both Rap1 activation and negative feedback strength were necessary to explain the observed dynamics—a conclusion not reliably reached by any single model in the set [20].

Table 2: Summary of Key Outcomes from Featured Case Studies

| Case Study | Biological System | Calibration Method | Key Outcome |
|---|---|---|---|
| Infectious Disease Modeling [3] | Disease transmission dynamics | CaliPro | Identified a robust range for \( R_0 \) and infectious period, capturing full outbreak trajectory. |
| ERK Signaling Prediction [20] | Intracellular kinase signaling | Bayesian MMI | Achieved predictions robust to model uncertainty and data noise; identified mechanism for localized activity. |
| Metabolic Engineering [22] | Limonene/Astaxanthin production in E. coli | Bayesian Optimization | Converged to optimal inducer concentrations in ~22% of the experiments required by a grid search. |

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 3: Key Research Reagent Solutions for Parameter Space Analysis

| Reagent / Resource | Type | Function in Workflow | Example/Note |
|---|---|---|---|
| Latin Hypercube Sampling (LHS) | Algorithm | Efficient, stratified sampling of high-dimensional parameter spaces. | Provides better coverage than random sampling with fewer samples [3]. |
| Gaussian Process (GP) | Probabilistic Model | Serves as a surrogate for the expensive objective function; models mean and uncertainty. | Core component of Bayesian Optimization [22]. |
| Expected Improvement (EI) | Acquisition Function | Guides the search in Bayesian Optimization by balancing exploration and exploitation. | Determines the next most informative point to sample [22]. |
| NSGA-II | Optimization Algorithm | Solves multi-objective optimization problems, finding a Pareto-optimal front of solutions. | Used in expert-guided frameworks to balance data fit and biological constraints [21]. |
| SINDy | System Identification Framework | Discovers parsimonious governing equations directly from time-series data. | Effective when combined with multi-scale analysis (CSP) [19]. |
| Marionette-wild E. coli [22] | Biological Chassis | Engineered strain with orthogonal inducible promoters enabling multi-dimensional optimization of gene expression. | Used for validating Bayesian Optimization of a 10-step astaxanthin pathway. |
| BioKernel [22] | Software | No-code Bayesian Optimization interface designed for biological experimental campaigns. | Features heteroscedastic noise modeling and modular kernel architecture. |

The calibration of simulation parameters from experimental data is a fundamental process in scientific research, particularly in fields like drug development. Calibration involves identifying input parameter values that produce model outputs which best predict observed empirical data [23]. This process is critical for ensuring that computational models provide accurate, reliable, and meaningful predictions for real-world decision making.

The quality, or "goodness," of a calibration is measured by how well the model's predictions match the experimental data [24]. Selecting appropriate metrics to evaluate this goodness-of-fit is therefore paramount, as different metrics can lead to different conclusions about model validity and performance. The choice of metric should be driven by the specific goals of the analysis and the nature of the data, rather than historical precedent or convenience.

Core Goodness-of-Fit Metrics

Various metrics are available to quantify the agreement between model predictions and experimental data. The most appropriate metric depends on whether the research aims to minimize absolute or relative error across the calibration range.

Limitations of the Coefficient of Determination (R²)

The coefficient of determination (R²) has historically been used to evaluate calibration curves but has critical flaws for this purpose [25]. Because it is computed from absolute squared deviations, R² is inherently biased toward the high end of the calibration curve [24] [25]. It weights absolute errors equally, so a 1% error at a high concentration has the same impact on R² as a 100% error at a concentration 100 times lower [25]. Consequently, a calibration curve with an excellent R² value may still have unacceptably high relative errors at the lower end, which is often the critical region in analytical chemistry and biological simulation [24].

Table 1: Comparison of Key Goodness-of-Fit Metrics

| Metric | Calculation | Primary Use Case | Advantages | Disadvantages |
|---|---|---|---|---|
| R² (Coefficient of Determination) | Ratio of the variance of fitted values to the variance of true values [25]. | Limited use; not recommended for calibration acceptance [25]. | Single, familiar metric. | Prioritizes high-end accuracy; ignores relative error; can mask poor low-end fit [24] [25]. |
| %RE (Percent Relative Error) | %RE = (Measured Value − True Value) / True Value × 100%, evaluated for each calibration point [24]. | Critical for ensuring accuracy across all concentration levels, especially at the low end [24]. | Direct, intuitive measure of accuracy at a specific point; identifies non-linearity [24] [25]. | Multiple values to assess; requires setting acceptance criteria for each point. |
| %RSE (Percent Relative Standard Error) | %RSE = √[ Σ((x'ᵢ − xᵢ) / xᵢ)² / (n − k) ] × 100%, where x'ᵢ is the calculated concentration, xᵢ is the true concentration, n is the number of standards, and k is the number of fitted coefficients in the calibration model, so that n − k is the degrees of freedom [24] [25]. | Providing a single, overall metric for the quality of the entire calibration curve [25]. | Single metric for the whole curve; consistent with RSD for Average RF calibrations; applicable to regression models [24]. | Does not identify which specific point(s) may be problematic. |

Recommended Metrics for Calibration Acceptance

For robust model calibration, the following metrics are preferred:

- Percent Relative Error (%RE): This metric calculates the relative error at each individual calibration point [24]. It is exceptionally valuable for identifying specific regions where the calibration model fails, such as significant under- or over-prediction at the curve's ends [25]. Acceptance criteria typically specify a maximum absolute %RE (e.g., within ±15% or ±20%) for each point [24].

- Percent Relative Standard Error (%RSE): This metric provides a single value that summarizes the total relative error across all calibration points [24] [25]. It is an extension of the relative standard deviation (RSD) used for Average Response Factor calibrations to regression-type calibrations. A lower %RSE indicates a better overall fit in relative terms [24].
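A small numerical example shows why these two metrics are preferred over R². The concentrations and back-calculated values below are hypothetical, the number of fitted coefficients is assumed to be 2 (a straight line with intercept), and R² is computed here as 1 − SS_res / SS_tot on the back-calculated concentrations.

```python
import math

def percent_relative_error(calculated, true):
    """%RE for each calibration point."""
    return [100.0 * (c - t) / t for c, t in zip(calculated, true)]

def percent_rse(calculated, true, n_coefficients):
    """%RSE over all points; n_coefficients is the number of fitted model terms."""
    n = len(true)
    total = sum(((c - t) / t) ** 2 for c, t in zip(calculated, true))
    return 100.0 * math.sqrt(total / (n - n_coefficients))

def r_squared(calculated, true):
    """Coefficient of determination from absolute squared deviations."""
    mean_true = sum(true) / len(true)
    ss_res = sum((t - c) ** 2 for c, t in zip(calculated, true))
    ss_tot = sum((t - mean_true) ** 2 for t in true)
    return 1.0 - ss_res / ss_tot

# Hypothetical true and back-calculated concentrations (arbitrary units).
true_conc = [1.0, 5.0, 10.0, 50.0, 100.0]
calc_conc = [1.5, 5.4, 10.3, 50.5, 99.0]

re_values = percent_relative_error(calc_conc, true_conc)
rse = percent_rse(calc_conc, true_conc, n_coefficients=2)
r2 = r_squared(calc_conc, true_conc)
print([round(v, 1) for v in re_values], round(rse, 1), round(r2, 5))
```

In this example R² exceeds 0.999 even though the lowest standard is off by 50%, while both %RE (per point) and %RSE (overall) flag the problem.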

Experimental Protocols for Calibration and Validation

A rigorous, iterative approach is required to transition from data collection to a validated model. The workflow below outlines this high-level process.

Protocol 1: Selecting and Weighting a Calibration Model

Objective: To choose and execute a regression model that minimizes relative error across the entire calibration range.

- Define the Range and Levels: Establish the calibration range based on expected sample concentrations. Prepare standards at a minimum of 5-6 concentration levels across this range [26].

- Run Initial Calibration: Analyze calibration standards and record the instrument response (e.g., peak area, intensity) for each concentration level.

- Fit Multiple Models: Calculate calibration curves using at least three common models:

  - Ordinary Least Squares Regression (OLSR): Unweighted linear regression.

  - Weighted Least Squares Regression (WLSR), 1/x: Linear regression weighted by the reciprocal of the concentration.

  - Weighted Least Squares Regression (WLSR), 1/x²: Linear regression weighted by the reciprocal of the concentration squared [26].

- Calculate %RE for Each Point: For each model, calculate the percent relative error (%RE) for every calibration standard [24].

- Select the Optimal Model: The model that produces the most consistent %RE values across the concentration range, with all values falling within pre-defined acceptance limits (e.g., ±15%), should be selected. Weighted models (1/x or 1/x²) are often necessary to achieve this for wide calibration ranges [24].
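The model comparison in this protocol can be sketched with a closed-form weighted linear fit. The standards and responses below are invented (a roughly proportional error of a few percent at every level), the ±15% limit is the example value from the text, and "smallest worst-case |%RE|" is used as a simple stand-in for the "most consistent %RE" selection rule.

```python
def fit_line(x, y, weights):
    """Weighted least-squares fit of y = slope * x + intercept (closed form)."""
    sw = sum(weights)
    swx = sum(w * xi for w, xi in zip(weights, x))
    swy = sum(w * yi for w, yi in zip(weights, y))
    swxx = sum(w * xi * xi for w, xi in zip(weights, x))
    swxy = sum(w * xi * yi for w, xi, yi in zip(weights, x, y))
    slope = (sw * swxy - swx * swy) / (sw * swxx - swx ** 2)
    intercept = (swy - slope * swx) / sw
    return slope, intercept

# Hypothetical calibration standards and instrument responses.
conc = [1.0, 2.0, 5.0, 10.0, 50.0, 100.0]
resp = [2.10, 3.84, 10.30, 19.60, 104.0, 190.0]

schemes = {
    "OLS": lambda c: 1.0,            # unweighted
    "1/x": lambda c: 1.0 / c,        # reciprocal of concentration
    "1/x^2": lambda c: 1.0 / c ** 2, # reciprocal of concentration squared
}
LIMIT = 15.0  # acceptance limit on |%RE|, taken from the example in the text

worst_re = {}
for name, weight_fn in schemes.items():
    slope, intercept = fit_line(conc, resp, [weight_fn(c) for c in conc])
    back_calc = [(r - intercept) / slope for r in resp]
    re_values = [100.0 * (b - c) / c for b, c in zip(back_calc, conc)]
    worst_re[name] = max(abs(v) for v in re_values)

acceptable = sorted(name for name, worst in worst_re.items() if worst <= LIMIT)
best = min(worst_re, key=worst_re.get)
print({k: round(v, 1) for k, v in worst_re.items()}, acceptable, best)
```

With errors that scale with concentration, the unweighted fit is pulled toward the highest standards and fails the limit at the low end, whereas both weighted fits keep every point within it.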

Protocol 2: Assessing Goodness-of-Fit and Model Acceptance

Objective: To quantitatively evaluate the chosen calibration model against acceptance criteria to determine its suitability for use.

- Apply Individual Point Criteria: Examine the %RE for every calibration standard. The calibration is typically rejected if any individual point exceeds the specified acceptance criterion (e.g., ±15% or ±20%) [24] [25].

- Apply Overall Fit Criteria: Calculate the %RSE for the entire calibration curve. Compare the value against the pre-defined method acceptance criterion.

- Document and Report: Report the selected model, all calculated %RE values, and the final %RSE. The calibration is accepted only if both individual and overall criteria are met.

Protocol 3: Integration with Broader Model Validation

Objective: To position the calibrated model within a comprehensive validation framework, establishing its credibility for intended use.

- Face Validity (First Order): Have domain experts review the model structure, input parameters, and outputs to ensure they are plausible and align with current scientific understanding [23].

- Internal Validation (Second Order): Verify the correctness of the computer code and check that the model behaves as expected under controlled conditions [23].

- External Validation (Third Order): Compare the calibrated model's predictions against a new, independent dataset that was not used in the model development or calibration process. This is a stringent test of model performance [23].

- Prospective/Predictive Validation (Fourth Order): Assess the model's ability to accurately predict future outcomes or outcomes from a study that was completed after the model was developed [23].

The following diagram illustrates the decision-making process for selecting the appropriate goodness-of-fit metric based on the error structure of the data.

The Scientist's Toolkit: Essential Research Reagents and Materials

This section details key computational and methodological "reagents" essential for conducting rigorous model calibration.

Table 2: Essential Reagents and Materials for Calibration Studies

| Item Name | Function/Brief Explanation | Example Use Case |
|---|---|---|
| Weighted Least Squares (WLSR) Regression | A statistical method that applies weights to data points to minimize the sum of relative squared residuals, ensuring balanced influence across the concentration range [24]. | Calibrating over wide concentration ranges where low-concentration accuracy is as important as high-concentration accuracy. |
| Percent Relative Error (%RE) | A diagnostic reagent used to quantify the accuracy of the model's prediction at a specific, individual concentration level [24]. | Identifying a single, poorly performing calibration standard that might be an outlier or indicate model failure at a specific range. |
| Percent Relative Standard Error (%RSE) | A summary reagent that aggregates the relative error from all calibration points into a single metric for overall model quality assessment [24] [25]. | Providing a single, method-wide acceptance criterion for a calibration curve, as required in some modern analytical methods [25]. |
| Probabilistic Calibration | A framework that integrates calibration with probabilistic sensitivity analysis by identifying sets of input parameter values that produce outputs fitting observed data [27]. | Health economic modeling where input parameters are defined by probability distributions to account for uncertainty. |
| Experimental Data for External Validation | A critical resource consisting of empirical observations that were not used in model development or calibration, used for the highest level of model testing [23]. | Testing the predictive power and generalizability of a calibrated model before its use in real-world decision-making. |

Methodologies in Practice: From Traditional Algorithms to AI-Driven Approaches

Parameter calibration is a fundamental process in scientific computing and computational modeling, wherein the parameters of a simulation or numerical model are systematically adjusted to ensure its outputs align with observed experimental or historical data [28]. The objective is to identify a set of parameter values that enables the model to accurately replicate the behavior of the real-world system under study [28]. This process is crucial across numerous fields, including systems pharmacology, geomorphology, quantum device control, and drug-target interaction prediction [29] [28] [30].

In computational research, particularly when calibrating simulation parameters from experimental data, the choice of optimization algorithm can significantly impact the accuracy, efficiency, and generalizability of the resulting model. Traditional parameter search algorithms such as Grid Search, Random Search, and the Nelder-Mead Simplex Algorithm form the cornerstone of model calibration, each offering distinct strategies for navigating complex parameter spaces. These gradient-free approaches are especially valuable in contexts where the objective function is noisy, non-differentiable, or computationally expensive to evaluate [30]. This article provides detailed application notes and experimental protocols for employing these classic algorithms within the context of calibrating simulation parameters from experimental data.

Table 1: Overview of Traditional Parameter Search Algorithms

| Algorithm | Core Principle | Key Strengths | Primary Limitations | Typical Use Cases |
|---|---|---|---|---|
| Grid Search | Exhaustive evaluation of all parameter combinations within a predefined discrete set. | Conceptually simple, inherently parallelizable, guarantees coverage of the grid. | Curse of dimensionality; computationally prohibitive for high-dimensional spaces. | Initial coarse exploration of low-dimensional parameter spaces. |
| Random Search | Random sampling of parameter values from specified distributions over the parameter space. | More efficient than Grid Search for many problems; samples are drawn independently, so the search cannot be trapped in a local minimum. | Performance depends on luck and the number of iterations; may miss subtle optima. | Calibration problems with moderate dimensionality and when computational budget is limited. |
| Nelder-Mead | A direct search method that uses a simplex (geometric shape) to explore and converge towards a minimum. | Derivative-free, can converge quickly to a local minimum with relatively few function evaluations. | Tends to get stuck in local minima; performance can be sensitive to the initial simplex. | Local refinement of parameters in smooth, low-dimensional problems. |

Algorithm Fundamentals and Comparative Analysis

Grid Search

Grid Search, also known as a parameter sweep, operates by defining a finite set of possible values for each parameter. The algorithm then evaluates the model's performance for every possible combination of these parameter values. While this approach is systematic and ensures coverage of the defined grid, it suffers from the "curse of dimensionality," as the number of required evaluations grows exponentially with the number of parameters [29]. Its application is therefore typically limited to coarse exploration of models with a small number of critical parameters. For instance, in pharmacological models, a hybrid approach might use adaptive methods for linear parameters while reserving a coarse-to-fine grid search for optimal values of a limited set of non-linear parameters [29].
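A minimal sketch of a parameter sweep, together with the evaluation count that drives the curse of dimensionality, is shown below. The two-parameter `goodness_of_fit` surface and the grid values are hypothetical placeholders for a real model and its GOF metric.

```python
import itertools

def goodness_of_fit(a, b):
    """Hypothetical GOF surface (lower is better) with its minimum at a=0.3, b=2.0."""
    return (a - 0.3) ** 2 + 0.1 * (b - 2.0) ** 2

# Finite set of candidate values for each parameter.
grid = {
    "a": [i / 10 for i in range(11)],  # 0.0, 0.1, ..., 1.0
    "b": [i / 2 for i in range(11)],   # 0.0, 0.5, ..., 5.0
}

# Evaluate every combination and keep the best one.
combos = list(itertools.product(*grid.values()))
best = min(combos, key=lambda values: goodness_of_fit(*values))
n_evals = len(combos)

# Number of model runs needed with 10 levels per parameter as dimension grows.
growth = {d: 10 ** d for d in (2, 4, 8)}
print(best, n_evals, growth)
```

Two parameters with 11 levels each already need 121 model runs; at 10 levels per parameter, eight parameters would need 100 million, which is why grid search is reserved for a handful of critical parameters.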

Random Search

Random Search addresses a key limitation of Grid Search by sampling parameter sets randomly from the search space, often using techniques like Latin Hypercube Sampling (LHS) to ensure good coverage [31]. This method often finds good solutions faster than Grid Search because it has a higher chance of stumbling upon promising regions of the parameter space without being constrained by a fixed grid. A key protocol involves first defining the parameter ranges, generating a LHS sample within a unit hypercube, and then rescaling these samples to the specified parameter bounds [31]. This algorithm is particularly useful in the early stages of calibration for models with a moderate number of parameters, such as in the calibration of transition probabilities in multi-state health models like a "Cancer Relative Survival Model" [31].

Nelder-Mead Simplex Algorithm

The Nelder-Mead (NM) algorithm is a popular direct search method for finding a local optimum of a function. It operates on a simplex—a geometric shape defined by n+1 vertices in an n-dimensional parameter space. Through an iterative process, the simplex reflects, expands, or contracts away from points with poor performance, gradually moving towards and contracting around a minimum [32]. A significant strength of Nelder-Mead is its simplicity and its ability to converge quickly to a local minimum without requiring gradient information. However, its major weakness is its tendency to converge to local minima and its sensitivity to the initial starting simplex [32] [30]. It is well-suited for fine-tuning parameters in smooth, low-dimensional problems after a global search has identified a promising region.
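The reflect, expand, contract, and shrink moves can be sketched compactly. This is a simplified variant written for illustration (standard coefficients, inside contraction only), not the reference algorithm of [32]; the two-parameter decay model, the noise-free targets, and the starting point are assumptions.

```python
import math

TIMES = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
OBSERVED = [2.0 * math.exp(-0.5 * t) for t in TIMES]  # noise-free synthetic targets

def sse(params):
    """Sum of squared errors for the toy model y = a * exp(-b * t)."""
    a, b = params
    return sum((a * math.exp(-b * t) - y) ** 2 for t, y in zip(TIMES, OBSERVED))

def nelder_mead(f, x0, step=0.5, max_iter=1000, tol=1e-12):
    n = len(x0)
    # Initial simplex: the start point plus one offset vertex per dimension.
    simplex = [list(x0)]
    for i in range(n):
        vertex = list(x0)
        vertex[i] += step
        simplex.append(vertex)
    for _ in range(max_iter):
        simplex.sort(key=f)
        best, worst = simplex[0], simplex[-1]
        if abs(f(worst) - f(best)) < tol:
            break
        # Centroid of all vertices except the worst one.
        centroid = [sum(v[i] for v in simplex[:-1]) / n for i in range(n)]
        reflected = [c + (c - w) for c, w in zip(centroid, worst)]
        if f(reflected) < f(best):
            expanded = [c + 2.0 * (c - w) for c, w in zip(centroid, worst)]
            simplex[-1] = expanded if f(expanded) < f(reflected) else reflected
        elif f(reflected) < f(simplex[-2]):
            simplex[-1] = reflected
        else:
            contracted = [c + 0.5 * (w - c) for c, w in zip(centroid, worst)]
            if f(contracted) < f(worst):
                simplex[-1] = contracted
            else:
                # Shrink every vertex halfway toward the best one.
                simplex = [best] + [[b + 0.5 * (x - b) for b, x in zip(best, v)]
                                    for v in simplex[1:]]
    return min(simplex, key=f)

solution = nelder_mead(sse, [1.0, 1.0])
print([round(v, 4) for v in solution], sse(solution))
```

Because the result depends on the starting simplex, a common pattern is to launch this local refinement from the best few points found by a preceding global search.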

Table 2: Performance Characteristics in Different Contexts

| Application Context | Grid Search | Random Search | Nelder-Mead |
|---|---|---|---|
| Pharmacological Model Calibration [29] | Used in hybrid methods for non-linear parameters. | Not explicitly discussed. | Prone to local minima; chaos synchronization is suggested as an alternative. |
| Landscape Evolution Model (IMC) [28] | Implicitly compared against; found less efficient. | Implicitly compared against; found less efficient. | Outperformed by a specialized Gaussian neighborhood algorithm. |
| Quantum Device Calibration [30] | Not recommended due to high dimensionality. | Not recommended due to high dimensionality. | Widely used but outperformed by modern algorithms like CMA-ES. |
| Health State Transition Model [31] | Not used. | Effective for calibrating 2 parameters with 1000 samples. | Can be used for local refinement after random search. |

Figure 1: A strategic workflow for selecting and sequencing traditional parameter search algorithms.

Detailed Experimental Protocols

Protocol for Random Search with Latin Hypercube Sampling

This protocol outlines the steps for calibrating a model using a Random Search, as applied in a health state transition model calibration [31].

Objective: To find the parameter set that minimizes the difference between model-predicted survival and observed survival data.

Materials: Model code (markov_crs.R), target data (CRSTargets.RData), computational environment (R).

Define Calibration Parameters and Bounds:

- Identify the parameters to be calibrated (e.g., p.Mets, p.DieMets).

- Set plausible lower (lb) and upper (ub) bounds for each parameter based on domain knowledge.

Generate Parameter Samples:

- Set a random seed for reproducibility (e.g., set.seed(1010)).

- Specify the number of samples (e.g., rs.n.samp <- 1000).

- Generate a Latin Hypercube Sample (LHS) within a unit hypercube: m.lhs.unit <- randomLHS(rs.n.samp, n.param).

- Rescale the unit LHS to the actual parameter bounds using the quantile function for a uniform distribution.

Define Goodness-of-Fit Metric:

- Select a metric to quantify the fit between model output and target data. A common choice is the log-likelihood.

- Implement a function, e.g., gof_norm_loglike, that calculates this metric.
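
As one possible form of such a metric, the log-likelihood can be written out under the assumption of independent, normally distributed target errors. The symbols below are introduced here for illustration and are not taken from [31]: y_i is the i-th target value, sigma_i its standard error, and m_i(theta) the corresponding model output for parameter set theta.

```latex
\ell(\theta) \;=\; \sum_{i=1}^{n} \left[ -\tfrac{1}{2}\log\!\left(2\pi\sigma_i^{2}\right) \;-\; \frac{\bigl(y_i - m_i(\theta)\bigr)^{2}}{2\sigma_i^{2}} \right]
```

Under this form, a higher value indicates a better fit, which matches the ranking from highest to lowest GOF used later in the protocol.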

Run the Calibration Loop:

- Initialize a vector to store the goodness-of-fit (GOF) value for each parameter set.

- For each sampled parameter set j:

  a. Run the simulation model with the parameter set: model.res = markov_crs(v.params = rs.param.samp[j, ]).

  b. Calculate the GOF between the model output (model.res$Surv) and the target data (CRS.targets$Surv$value).

  c. Store the GOF value.

Identify Best-Fitting Parameters:

- After the loop, combine the parameter sets and their GOF scores into a matrix.

- Order the parameter sets from best (highest GOF) to worst.

- The top-ranked set (e.g., rs.calib.res[1, c("p.Mets", "p.DieMets")]) is the calibrated solution.
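
The five steps above can be sketched end to end in a few lines. The example below is a Python analogue, not the R code of [31]: the three-state cohort model `toy_markov_survival`, the bounds, the synthetic targets, and the standard error of 0.02 are all hypothetical stand-ins chosen so the script runs on its own. It relies on `scipy.stats.qmc` for the Latin Hypercube Sample and its rescaling.

```python
import numpy as np
from scipy.stats import norm, qmc


def toy_markov_survival(p_mets, p_die_mets, n_cycles=30):
    """Hypothetical 3-state cohort model (healthy -> metastatic -> dead).

    Returns the proportion of the cohort still alive after each cycle.
    """
    transition = np.array([
        [1.0 - p_mets, p_mets, 0.0],
        [0.0, 1.0 - p_die_mets, p_die_mets],
        [0.0, 0.0, 1.0],
    ])
    state = np.array([1.0, 0.0, 0.0])
    surv = np.empty(n_cycles)
    for t in range(n_cycles):
        state = state @ transition
        surv[t] = 1.0 - state[2]
    return surv


def gof_norm_loglike(model_surv, target_surv, target_se):
    """Normal log-likelihood of the targets given the model output (higher is better)."""
    return np.sum(norm.logpdf(target_surv, loc=model_surv, scale=target_se))


# Step 1: parameters and bounds (illustrative values, not from [31]).
param_names = ["p_mets", "p_die_mets"]
lb = np.array([0.04, 0.04])
ub = np.array([0.16, 0.16])

# Step 2: seeded LHS in the unit hypercube, rescaled to the bounds.
n_samp = 1000
sampler = qmc.LatinHypercube(d=len(param_names), seed=1010)
lhs_unit = sampler.random(n=n_samp)
param_samp = qmc.scale(lhs_unit, lb, ub)

# Synthetic targets generated from known parameters (assumption for the demo).
target_surv = toy_markov_survival(0.10, 0.05)
target_se = np.full_like(target_surv, 0.02)

# Steps 3-4: evaluate the goodness of fit for every sampled parameter set.
gof = np.empty(n_samp)
for j in range(n_samp):
    model_surv = toy_markov_survival(*param_samp[j])
    gof[j] = gof_norm_loglike(model_surv, target_surv, target_se)

# Step 5: rank from best (highest GOF) to worst; the first row is the best fit.
order = np.argsort(-gof)
calib_res = np.column_stack([param_samp[order], gof[order]])
best_params = calib_res[0, :2]
best_curve = toy_markov_survival(*best_params)
print(dict(zip(param_names, best_params)))
```

In this toy model, swapping the two probabilities yields the same survival curve, so several parameter sets fit the targets almost equally well. Inspecting the top-ranked rows of `calib_res` together, rather than only the first, is one way to notice such non-identifiability.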

Protocol for the Nelder-Mead Simplex Algorithm

This protocol describes the steps for employing the Nelder-Mead algorithm for local parameter refinement [32].

Objective: To refine an initial parameter guess to a local optimum.

Materials: Objective function, initial parameter guess, NM algorithm implementation (e.g., optim in R or scipy.optimize in Python).

Initialize the Simplex:

- Define an initial starting point x_0 in the parameter space. This can be a best guess or an output from a global search like Random Search.

- The algorithm constructs an initial simplex around x_0, often by perturbing each parameter dimension.

Evaluate and Order Vertices:

- Evaluate the objective function (e.g., a loss or error function) at each vertex of the simplex.

- Order the vertices from best (lowest loss) to worst (highest loss).

Iterative Refinement:

- Calculate Centroid: Compute the centroid of the simplex, excluding the worst point.

- Reflection: Reflect the worst point through the centroid. If the reflected point is better than the second-worst but not the best, replace the worst point with it.

- Expansion: If the reflected point is the best point so far, expand the reflection further in the same direction. If the expansion is successful, replace the worst point with the expanded point.

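- Contraction: If the reflected point is no better than the second-worst point, perform a contraction.

  - Outside Contraction: If the reflected point is better than the worst point, contract toward the reflected point by testing a point between the centroid and the reflected point.

  - Inside Contraction: If the reflected point is no better than the worst point, contract toward the worst point instead by testing a point between the centroid and the worst point.

- Reduction (Shrink): If the contracted point does not improve on the point it was tested against, shrink the entire simplex towards the best point.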

Check Convergence Criteria:

- Iterate until a stopping condition is met. Common criteria include:

  - The simplex size becomes smaller than a specified tolerance.

  - The change in the function value between iterations is negligible.

  - A maximum number of iterations is reached.

Output Result:

- The best vertex of the final simplex is returned as the locally optimal parameter set.
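
In practice the simplex operations are rarely coded by hand. The sketch below shows one way to run the protocol with `scipy.optimize.minimize` and `method="Nelder-Mead"`. The exponential-decay model, the synthetic noise-free data, and the starting guess are hypothetical. The options `xatol`, `fatol`, and `maxiter` correspond to the three stopping criteria listed above.

```python
import numpy as np
from scipy.optimize import minimize

# Synthetic, noise-free observations from a hypothetical decay model
# y = amplitude * exp(-rate * t) with amplitude = 2.0 and rate = 0.3.
t_obs = np.linspace(0.0, 10.0, 21)
y_obs = 2.0 * np.exp(-0.3 * t_obs)


def loss(theta):
    """Sum of squared errors between model output and observations."""
    amplitude, rate = theta
    y_model = amplitude * np.exp(-rate * t_obs)
    return np.sum((y_model - y_obs) ** 2)


# Starting point x_0; SciPy builds the initial simplex by perturbing each
# coordinate unless an explicit "initial_simplex" option is supplied.
x0 = np.array([1.0, 1.0])

result = minimize(
    loss,
    x0,
    method="Nelder-Mead",
    options={
        "xatol": 1e-8,    # tolerance on simplex size
        "fatol": 1e-8,    # tolerance on change in function value
        "maxiter": 2000,  # cap on the number of iterations
    },
)

print(result.x, result.fun, result.nit, result.success)
```

Because the method is local, rerunning from several starting points (for example, the top-ranked sets from a random search) is a simple check on whether the returned optimum depends on the initial guess.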

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Materials for Parameter Calibration

| Item Name | Function / Description | Example Use Case |
|---|---|---|
| Latin Hypercube Sampling (LHS) | A statistical method for generating a near-random sample of parameter values from a multidimensional distribution, ensuring good space-filling properties. | Creating the initial population for a Random Search to ensure broad coverage of the parameter space [31]. |
| Goodness-of-Fit Function (GOF) | A function (e.g., Likelihood, Mean Squared Error, Root Mean Square Error) that quantifies the discrepancy between model predictions and observed data. | Serving as the objective for optimization algorithms to minimize/maximize during calibration [31] [33]. |
| Discrete Element Method (DEM) Software | Software that models the behavior of granular materials, requiring calibration of particle interaction parameters. | Simulating the motion and interaction of organic fertilizer particles for agricultural machinery design [34] [33]. |
| Response Surface Methodology (RSM) | A collection of statistical and mathematical techniques for empirical model building and optimization, often used to approximate the response of a complex system. | Building a surrogate model to understand the relationship between DEM parameters and the angle of repose in fertilizer particles [34] [33]. |
| Particle Swarm Optimization (PSO) | A population-based optimization method in which candidate solutions (particles) move through the search space, each guided by its own best-known position and the swarm's best-known position. | Often hybridized with other algorithms (e.g., NM) for global optimization in problems like non-contact blood pressure estimation [35]. |

Figure 2: The iterative logic and decision flow of the Nelder-Mead Simplex algorithm.

Advanced Applications and Hybrid Strategies

While the traditional algorithms are powerful, a significant trend in modern research is the development of hybrid strategies that combine their strengths to overcome their individual limitations. The most common pairing integrates a global explorer with a local refiner.

A prominent example is the Genetic and Nelder-Mead Algorithm (GANMA), which integrates the global search capabilities of a Genetic Algorithm (a population-based method akin to an advanced random search) with the local refinement strength of the Nelder-Mead method [32]. In this hybrid, the GA first performs a broad exploration of the parameter space. Once the GA population converges or after a set number of generations, the best solution(s) are passed to the NM algorithm for intensive local refinement. This synergy helps the GA overcome its weakness in fine-tuning solutions near an optimum, while the NM is saved from getting stuck in poor local minima far from the global solution [32].
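
The explore-then-refine pattern can be illustrated with a short sketch. This is not the GANMA algorithm of [32]: SciPy's `differential_evolution` (another population-based evolutionary method) is swapped in for the Genetic Algorithm, and the Rastrigin function serves as a hypothetical multi-peak loss. The generation budget and population size are arbitrary choices.

```python
import numpy as np
from scipy.optimize import differential_evolution, minimize


def multi_peak_loss(theta):
    """Hypothetical multi-peak loss (Rastrigin); global minimum of 0 at the origin."""
    theta = np.asarray(theta, dtype=float)
    return 10.0 * theta.size + np.sum(theta**2 - 10.0 * np.cos(2.0 * np.pi * theta))


bounds = [(-5.12, 5.12), (-5.12, 5.12)]

# Stage 1: population-based global exploration with a fixed generation budget.
# polish=False disables SciPy's built-in local step so the hand-off is explicit.
global_res = differential_evolution(
    multi_peak_loss, bounds, maxiter=40, popsize=15, polish=False, seed=1
)

# Stage 2: Nelder-Mead refinement started from the best individual found.
local_res = minimize(
    multi_peak_loss,
    global_res.x,
    method="Nelder-Mead",
    options={"xatol": 1e-8, "fatol": 1e-8},
)

print("after global stage:", global_res.x, global_res.fun)
print("after local stage: ", local_res.x, local_res.fun)
```

The local stage can only refine within the basin that the global stage hands over, so the budget given to the first stage largely determines whether the final answer is the global optimum or merely a well-polished local one.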

Another innovative hybrid is the Nelder-Mead Particle Swarm Optimization (NM-PSO) algorithm, applied in non-contact blood pressure estimation [35]. Here, the PSO algorithm conducts a global search. When PSO's progress stagnates, the NM algorithm is invoked to perform a local search around the best particle found, helping to refine the solution and escape local plateaus. This combination enhances computational efficiency and the likelihood of finding the global optimum in complex, multi-peak problems [35].

Furthermore, traditional algorithms are increasingly being benchmarked and sometimes enhanced by machine learning. For instance, in calibrating Discrete Element Method (DEM) parameters for organic fertilizer, a Particle Swarm Optimization-Backpropagation (PSO-BP) neural network was shown to achieve a better fitting effect and higher prediction accuracy compared to a standard Response Surface Methodology (RSM) model [34]. Similarly, Random Forest and Artificial Neural Network models have been demonstrated to outperform RSM in calibrating DEM parameters for cohesive materials [33]. These ML models can act as highly accurate and fast-to-evaluate surrogate models, which are then optimized using traditional search algorithms, drastically reducing the computational cost of calibration.
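
A minimal sketch of this surrogate workflow follows. It does not reproduce the PSO-BP, Random Forest, or neural network models of [34] and [33]: a radial basis function interpolator from SciPy is used as a simple stand-in surrogate, and `expensive_loss` is a hypothetical cheap function playing the role of a costly simulation. The design size of 80 runs is an arbitrary choice.

```python
import numpy as np
from scipy.interpolate import RBFInterpolator
from scipy.optimize import minimize
from scipy.stats import qmc


def expensive_loss(theta):
    """Hypothetical stand-in for a costly simulation-based loss; minimum at (0.3, 0.7)."""
    a, b = theta
    return (a - 0.3) ** 2 + 2.0 * (b - 0.7) ** 2 + (a - 0.3) ** 4


lb = np.array([0.0, 0.0])
ub = np.array([1.0, 1.0])

# Step 1: run the "expensive" model on a space-filling design (80 runs in total).
design = qmc.scale(qmc.LatinHypercube(d=2, seed=7).random(n=80), lb, ub)
loss_values = np.array([expensive_loss(theta) for theta in design])

# Step 2: fit a fast surrogate to the input-output pairs
# (SciPy's default kernel here is the thin-plate spline).
surrogate = RBFInterpolator(design, loss_values)


def surrogate_loss(theta):
    """Evaluate the surrogate at a single parameter vector."""
    return float(surrogate(np.atleast_2d(theta))[0])


# Step 3: optimize the surrogate with a traditional search algorithm,
# starting from the best design point; no further simulator runs are needed.
start = design[np.argmin(loss_values)]
res = minimize(
    surrogate_loss, start, method="Nelder-Mead", bounds=list(zip(lb, ub))
)

print("surrogate optimum:", res.x)
print("true loss at surrogate optimum:", expensive_loss(res.x))
```

A common follow-up is to evaluate the real simulator at the surrogate optimum, as in the last line, and to add that point to the training set if the surrogate and simulator disagree.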

Calibrating simulation parameters against experimental data is a fundamental challenge across scientific disciplines, from climate modeling to pharmaceutical development. This process is crucial for reducing uncertainty and improving the predictive accuracy of physics-based models [36]. Bayesian methods provide a principled framework for this calibration, enabling researchers to combine prior knowledge with observational data while rigorously quantifying uncertainty. The integration of Machine Learning (ML), particularly through surrogate models, has emerged as a powerful strategy to overcome the computational constraints associated with complex simulations. Within drug development, these advanced optimization techniques are formalized through Model-Informed Drug Development (MIDD), an essential framework for advancing therapeutic candidates and supporting regulatory decision-making [37].

Key Bayesian Calibration Methods

Bayesian calibration methods offer diverse strategies for integrating model simulations with experimental data. The choice of method depends on the specific calibration goals, computational resources, and the need for uncertainty quantification.

Table 1: Comparison of Bayesian Calibration Methods

| Method | Key Principle | Advantages | Limitations | Best-Suited Applications |
|---|---|---|---|---|
| Calibrate-Emulate-Sample (CES) | Uses surrogate models to emulate computer model outputs, then samples from the posterior [36]. | Excellent performance and rigorous uncertainty quantification [36]. | High computational expense [36]. | Problems where accurate UQ is critical and resources permit. |
| Goal-Oriented Bayesian Optimal Experimental Design (GBOED) | Leverages information-theoretic criteria to select data that is most relevant for calibration [36]. | Achieves comparable accuracy to CES using fewer model evaluations [36]. | Implementation complexity. | Problems with very expensive simulations, guiding data collection. |
| History Matching (HM) | Rules out regions of parameter space that are inconsistent with data, without full posterior characterization [36]. | Moderate effectiveness; can be useful as a precursor to other methods [36]. | Does not provide a full posterior distribution. | Initial screening of vast parameter spaces. |
| Computer Model Mixture Calibration | Represents the real system as a mixture of multiple computer models with input-dependent weights [38]. | Aggregates unique features from different models, often leading to more accurate predictions [38]. | Increased complexity from managing multiple models. | Situations where multiple competing models or physical theories exist. |
| Bayesian Optimal Experimental Design (BOED) | Uses Bayesian inference to design experiments that maximize information gain. | Provides a formal framework for experimental design. | Standard BOED may underperform regarding calibration accuracy [36]. | Designing experiments for parameter estimation or model discrimination. |
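
To make the History Matching row concrete, the sketch below computes a commonly used implausibility measure for a single scalar output and discards parameter values that exceed a cutoff. Everything here is an illustrative assumption rather than the setup of [36]: the simulator, the observation, the two variance terms, and the conventional cutoff of 3. An emulator variance term, which would normally be added when a surrogate replaces the simulator, is omitted because the toy simulator is evaluated directly.

```python
import numpy as np


def simulator(theta):
    """Hypothetical scalar simulator output as a function of one parameter."""
    return 10.0 * np.exp(-theta)


z_obs = 5.0           # observed value (assumed)
var_obs = 0.2 ** 2    # observation error variance (assumed)
var_disc = 0.1 ** 2   # model discrepancy variance (assumed)
cutoff = 3.0          # conventional implausibility threshold

# Candidate parameter values covering the prior range.
theta_grid = np.linspace(0.0, 2.0, 201)

# Implausibility: standardized distance between observation and model output.
implausibility = np.abs(z_obs - simulator(theta_grid)) / np.sqrt(var_obs + var_disc)

# Keep only the values that are "not ruled out yet".
not_ruled_out = theta_grid[implausibility <= cutoff]

print(f"retained {not_ruled_out.size} of {theta_grid.size} candidates")
print(f"retained range: [{not_ruled_out.min():.2f}, {not_ruled_out.max():.2f}]")
```

The retained set is a region of parameter space, not a posterior distribution, which is why the method is typically used to narrow the search before a full Bayesian calibration.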

Machine Learning in Optimization and Calibration

Machine learning revolutionizes optimization and calibration by enabling a "predict-then-make" paradigm, shifting the focus from physical experimentation to in silico prediction [39].

Core Machine Learning Techniques

Supervised Learning acts as a workhorse for predictive modeling, where an algorithm is trained on a labeled dataset to map inputs (e.g., chemical structures) to known outputs (e.g., biological activity) [39]. This is ideal for classification and regression tasks, such as predicting compound properties or binding affinity.

Unsupervised Learning finds hidden structures within unlabeled data, helping to identify novel patterns or group similar data points without predefined categories [39].

Surrogate Models (Emulators) are a critical application of ML in calibration. They are inexpensive statistical models trained on the input-output data of a computationally expensive simulator. Once built, they can rapidly approximate the simulator's output, making iterative Bayesian calibration procedures like CES feasible [36].

Addressing Data Quality and Economic Challenges