A Comprehensive Framework for Benchmarking Synthetic Biology Simulation Tools

Abstract

This article provides researchers, scientists, and drug development professionals with a structured framework for the rigorous benchmarking of synthetic biology simulation tools. It covers foundational principles for defining study scope and selecting methods, details the application of combinatorial optimization and high-throughput screening, addresses common troubleshooting and performance bottlenecks, and establishes robust validation and comparative analysis strategies using challenge-based assessments. The goal is to empower the community to perform unbiased, reproducible evaluations that accelerate the reliable design of biological systems.

Laying the Groundwork: Core Principles for Defining Your Benchmarking Study

Synthetic biology is rapidly transitioning from an artisanal practice to a disciplined engineering field, a shift powered by the adoption of robust benchmarking frameworks. These frameworks are not monolithic; they serve distinct strategic purposes. Neutral benchmarks act as independent arbiters for fair tool comparison on common ground, while method development benchmarks are tailored proving grounds designed to showcase a new method's specific advanced capabilities. Understanding this critical distinction enables researchers to select the right evaluation strategy, properly interpret performance claims, and accelerate the development of reliable biological simulation tools.

Neutral Benchmarks: The Independent Arbiters

Neutral benchmarks provide a standardized, community-vetted foundation for the objective comparison of different computational methods. Their primary purpose is to create a level playing field, free from the biases of any single development team, to assess how tools perform on realistic, representative tasks.

Benchmarking Machine Learning for Synthetic Lethality Prediction

A landmark 2024 study exemplifies the neutral benchmark approach, conducting a comprehensive evaluation of 12 machine learning methods for predicting synthetic lethal (SL) gene pairs in cancer [1]. The goal was to provide unbiased guidance to biologists on model selection.

- Experimental Protocol: The study established a rigorous pipeline assessing methods on both classification (identifying SL pairs) and ranking (prioritizing potential SL partners for a gene) tasks [1]. To test generalizability, researchers used three data-splitting methods (DSMs) of increasing difficulty:

  - CV1: Random pairs held out (easiest).

  - CV2: For each pair, one gene is unseen (semi-cold start).

  - CV3: Both genes in a pair are unseen (full cold start, most realistic) [1].

- Performance Metrics: Models were evaluated using F1 scores for classification and NDCG@10 for ranking quality [1].
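The three splitting schemes and the ranking metric can be sketched in a few lines. This is a minimal illustration rather than the study's actual pipeline: `split_pairs`, `ndcg_at_k`, the 20% hold-out fraction, and the rule of discarding pairs that would leak a held-out gene into training are all assumptions made here for clarity. NDCG is computed with linear gain, which is one of several common conventions.

```python
import math
import random


def split_pairs(pairs, scheme, test_frac=0.2, seed=0):
    """Split gene pairs into (train, test) under CV1, CV2, or CV3."""
    if scheme not in {"CV1", "CV2", "CV3"}:
        raise ValueError(f"unknown scheme: {scheme}")
    rng = random.Random(seed)
    pairs = list(pairs)

    if scheme == "CV1":
        # Hold out random pairs; both genes may still appear in training.
        rng.shuffle(pairs)
        n_test = max(1, int(len(pairs) * test_frac))
        return pairs[n_test:], pairs[:n_test]

    # CV2 and CV3 hold out genes rather than pairs.
    genes = sorted({g for pair in pairs for g in pair})
    rng.shuffle(genes)
    held_out = set(genes[: max(1, int(len(genes) * test_frac))])

    train, test = [], []
    for a, b in pairs:
        n_unseen = (a in held_out) + (b in held_out)
        if n_unseen == 0:
            train.append((a, b))
        elif scheme == "CV2" and n_unseen == 1:
            test.append((a, b))  # exactly one gene is held out
        elif scheme == "CV3" and n_unseen == 2:
            test.append((a, b))  # both genes are held out
        # Remaining pairs are dropped so no held-out gene reaches training.
    return train, test


def ndcg_at_k(relevances, k=10):
    """NDCG@k for relevance labels listed in the model's ranked order."""

    def dcg(rels):
        return sum(r / math.log2(i + 2) for i, r in enumerate(rels[:k]))

    ideal = dcg(sorted(relevances, reverse=True))
    return dcg(relevances) / ideal if ideal > 0 else 0.0
```

Because CV2 and CV3 discard pairs, their test sets are usually much smaller than under CV1, which is worth keeping in mind when comparing scores across schemes.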

Table 1: Top-Performing Models in Synthetic Lethality Benchmarking (Classification Task, F1 Score)

| Model | Architecture | Key Data Inputs | Classification Score (F1) |
|---|---|---|---|
| SLMGAE | Graph Autoencoder | PPI, Gene Ontology, Pathways | 0.842 |
| GCATSL | Graph Neural Network | PPI, Knowledge Graph | 0.839 |
| PiLSL | Graph Neural Network | PPI, Gene Expression | 0.817 |

(Source: Adapted from results in Nature Communications 15, 9058 (2024) [1])

A key finding was that data quality affected performance more than model architecture did. All methods performed better when trained on high-confidence negative samples and when computationally derived SL labels were excluded [1]. The benchmark concluded that SLMGAE delivered the best overall performance, giving researchers a data-driven basis for choosing a tool [1].

The DUBS Framework for Virtual Screening

In drug discovery, the Directory of Useful Benchmarking Sets (DUBS) framework addresses the critical lack of standardization in virtual screening benchmarks. DUBS provides a simple, flexible tool to rapidly create standardized benchmarking sets from the Protein Data Bank, ensuring different docking methods can be compared fairly [2].

- Experimental Protocol: DUBS uses a simple text-based input format alongside the Lemon data mining framework to access and organize protein and ligand data. It outputs cleaned and standardized files in common formats (PDB, SDF) for virtual screening software, a process that takes less than two minutes [2].

- Core Function: It solves the problem of incompatible file formats and preprocessing steps in existing benchmarks (like DUD-E and PINC), which can lead to irreproducible results and unfair comparisons [2].

Diagram: The DUBS Neutral Benchmarking Workflow. This process standardizes inputs to ensure fair comparisons between computational methods [2].

Method Development Benchmarks: Showcasing Innovation

In contrast, method development benchmarks are intrinsically linked to demonstrating the superiority of a new tool or technique. They are often designed around the unique capabilities of the new method, highlighting its performance on tasks where existing approaches fall short.

The T-Pro Wetware-Software Suite for Genetic Circuits

Research on "compressed" genetic circuits for higher-state decision-making presents a prime example of a method development benchmark. The team created a new wetware (biological parts) and software (design tools) suite to overcome the limited modularity and high metabolic burden of complex circuits [3].

- Experimental Protocol: The benchmark involved designing 3-input Boolean logic circuits (256 distinct truth tables) using novel synthetic transcription factors and promoters. The key was comparing new "compressed" circuits against traditional, larger canonical circuits [3].

- Performance Metrics: The primary metrics were genetic footprint (number of parts, a measure of compression) and quantitative performance prediction error (fold-error between predicted and measured expression) [3].
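The two numbers behind this benchmark, the size of the 3-input design space and the fold-error metric, are easy to make concrete. The sketch below is illustrative only: the source does not give a formula for fold-error, so the symmetric ratio used in `fold_error` and the arithmetic mean in `mean_fold_error` are assumptions, and all function names are hypothetical.

```python
def count_truth_tables(n_inputs):
    """Number of distinct Boolean functions of n inputs: 2 ** (2 ** n)."""
    return 2 ** (2 ** n_inputs)


def fold_error(predicted, measured):
    """Symmetric fold-error: 1.0 means a perfect prediction.

    Assumed definition: the larger of predicted/measured and
    measured/predicted, so over- and under-prediction score alike.
    """
    if predicted <= 0 or measured <= 0:
        raise ValueError("expression values must be positive")
    return max(predicted / measured, measured / predicted)


def mean_fold_error(predictions, measurements):
    """Arithmetic mean of per-circuit fold-errors (an assumed aggregate)."""
    errors = [fold_error(p, m) for p, m in zip(predictions, measurements)]
    return sum(errors) / len(errors)
```

With three inputs there are 2^3 = 8 input combinations, and each can map to 0 or 1, which gives the 256 truth tables cited above. A geometric mean is a common alternative aggregate for ratio-scale errors and may be preferable when a few circuits have very large errors.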

Table 2: Performance of T-Pro Method Development Benchmarking

| Benchmarking Aspect | Traditional Approach | New T-Pro "Compressed" Approach | Performance Gain |
|---|---|---|---|
| Circuit Size | Large canonical circuits | ~4x smaller footprint | 4x compression |
| Prediction Error | High (qualitative) | Average error < 1.4-fold | High quantitative accuracy |
| Design Scope | Intuitive, by eye | Algorithmic enumeration of >100 trillion designs | Guaranteed minimal circuit |

(Source: Adapted from Nature Communications 16, 9414 (2025) [3])

This benchmark successfully demonstrated that the new T-Pro method could design complex circuits that were significantly more efficient and predictable than what was previously possible, a claim validated by direct, side-by-side comparison with the old standard [3].

CZI's Community-Driven Benchmarking for AI Biology

The Chan Zuckerberg Initiative (CZI) released a benchmarking suite for AI-driven virtual cell models, designed to accelerate the entire field. This initiative has characteristics of both a neutral community resource and a method development enabler. It addresses the bottleneck of poorly standardized evaluation, which forces researchers to spend weeks building custom pipelines instead of focusing on discovery [4].

- Experimental Protocol: The suite includes six community-defined tasks for single-cell analysis, such as cell clustering and perturbation prediction. Each task is evaluated with multiple metrics for a thorough performance view [4].

- Strategic Purpose: It functions as a "living" resource where researchers can contribute data and models. This design prevents overfitting to static benchmarks and ensures that models are optimized for biological relevance, not just benchmark scores [4].

Comparative Analysis: Objectives and Outcomes

The fundamental differences between these benchmarking approaches shape their design, implementation, and interpretation.

Table 3: Strategic Comparison of Benchmarking Paradigms in Synthetic Biology

| Aspect | Neutral Benchmarks | Method Development Benchmarks |
|---|---|---|
| Primary Goal | Fair, objective tool comparison; community standard | Showcase a new method's superiority & capabilities |
| Typical Custodian | Academic consortia, non-profits (e.g., CZI) [4] | Individual research teams or companies [3] |
| Design Focus | Standardization, reproducibility, real-world relevance [2] [1] | Highlighting specific advantages (e.g., speed, accuracy, novel function) [3] |
| Outcome | Guides user choice; sets field-wide standards [1] | Validates a specific new tool; defines a new state-of-the-art [3] |
| Inherent Risk | Can become outdated, leading to overfitting [4] | Potential for cherry-picked tasks that favor the new method |

Diagram: Strategic Choice Between Benchmarking Paradigms. The researcher's primary goal dictates the most appropriate benchmarking path.

The Scientist's Toolkit: Essential Research Reagent Solutions

The advancement of benchmarking relies on a suite of critical reagents and software tools.

Table 4: Key Reagents and Tools for Synthetic Biology Benchmarking

| Item | Function | Relevance to Benchmarking |
|---|---|---|
| Synthetic Transcription Factors (T-Pro) | Engineered repressors/anti-repressors for genetic circuits [3] | Core wetware for building and testing genetic circuit performance. |
| DUBS Framework | Standardized dataset generation for virtual screening [2] | Provides the neutral, standardized inputs for fair method comparison. |
| CZI cz-benchmarks | Python package & web interface for model evaluation [4] | Enables reproducible, community-driven benchmarking of AI biology models. |
| Enzymatic DNA Synthesis | Low-cost, rapid production of long DNA constructs [5] | Accelerates the "build" phase of DBTL cycles, enabling larger-scale testing. |
| AI-Guided Protein Design | De novo creation of proteins with atom-level precision [6] | Provides novel, previously non-existent components for testing design tools. |
| Machine Learning Models (e.g., SLMGAE) | Predict synthetic lethal gene pairs in cancer [1] | The tools being evaluated in neutral benchmarks to guide end-users. |

The choice between neutral and method development benchmarks is fundamental, shaping a project's trajectory from its inception. Neutral benchmarks like the SL prediction study and DUBS framework provide the trusted, common ground necessary for validating existing tools and establishing field-wide standards. Conversely, method development benchmarks, such as the one for T-Pro genetic circuits, are the engines of innovation, providing the controlled environment to demonstrate a new paradigm's value. For the field of synthetic biology to continue its rapid ascent, researchers must not only leverage both types of benchmarks but also contribute to their evolution, ensuring that the tools of tomorrow are built on a foundation of rigorous, reproducible, and relevant evaluation.

The establishment of a robust benchmarking framework for synthetic biology simulation tools is a cornerstone for advancing reproducible and reliable research. The selection of which methods or tools to include in a comparative study is a critical methodological step that directly determines a benchmark's comprehensiveness, utility, and freedom from bias. A poorly selected set of alternatives can lead to skewed conclusions, invalidate the benchmarking effort, and misdirect future research and resource allocation. This guide provides a structured, objective approach for researchers aiming to compile a representative and unbiased collection of methods for comparison, ensuring that the resulting analysis truly reflects the state-of-the-art in the field. Drawing on established practices from rigorous benchmark studies and principles of objective data presentation, we outline a protocol for method selection that mitigates common pitfalls and reinforces the integrity of scientific evaluation.

A Framework for Comprehensive Method Selection

A comprehensive benchmark requires a systematic search and selection strategy to ensure all relevant tools are considered. This involves leveraging multiple information channels to create an initial long-list of candidates.

Systematic Search and Discovery of Tools

The first step is to cast a wide net to identify as many relevant tools and methods as possible. Relying on a single source introduces a significant risk of omission bias. A multi-pronged approach is essential, utilizing bibliographic databases, specialized repositories, and community knowledge.

Table 1: Channels for Method Discovery

| Discovery Channel | Description | Utility in Building a Long-List |
|---|---|---|
| Bibliographic Databases (Scopus, Web of Science, PubMed) [7] [8] | Search for articles describing tool development using keywords related to synthetic biology simulation (e.g., "synthetic biology simulation", "genetic circuit modeling"). | Identifies peer-reviewed, published tools. Allows analysis of publication venues to find other relevant tools. |
| Reference Management Software (Zotero, Mendeley) [9] | Filter your saved literature database by keywords and journal titles to quickly identify frequently occurring tools. | Provides a quick, personalized overview of the tools prominent in your own literature review. |
| Specialized Repositories (GitHub, GitLab, BioTools) | Search for software tools that may not yet have an associated formal publication but are used in the community. | Captures cutting-edge and development-stage tools that are part of the current research landscape. |
| Preprint Servers (bioRxiv, arXiv) | Scan for recent manuscripts that describe new methods before they appear in traditional journals. | Ensures the benchmark includes the very latest methodological advances. |

Defining Inclusion and Exclusion Criteria

Once a long-list is assembled, objective, pre-defined criteria must be applied to determine final inclusion. These criteria should be established before the performance evaluation begins and be based on the benchmark's specific goals.

Key Criteria for Consideration:

- Technical Scope: Does the tool perform the specific type of simulation relevant to the benchmark (e.g., stochastic, deterministic, whole-cell)?

- Availability: Is the tool's source code or executable publicly accessible? Is there a working web service?

- Maintenance Status: Is the tool actively maintained? (This can be assessed via repository activity or recent citations).

- Documentation: Is there sufficient documentation to install, run, and interpret the tool's output?

- Computational Requirements: Can the tool be feasibly run within the computational environment of the benchmark study?

The application of these criteria should be documented meticulously, as demonstrated in rigorous benchmark studies. For example, a benchmark of methods for identifying perturbed subnetworks in cancer clearly defined its selection process to ensure a comprehensive and fair comparison [8].
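One way to keep the selection auditable is to encode the criteria as named checks and record which ones each candidate fails. The sketch below is a hypothetical illustration: the `Tool` fields, the thresholds (24 months since last update, 64 GB of memory), and the focus on stochastic simulation are placeholder assumptions that a real study would replace with its own pre-registered values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    """Metadata gathered for each long-listed tool (hypothetical fields)."""
    name: str
    simulation_types: frozenset
    publicly_available: bool
    months_since_last_update: int
    documented: bool
    peak_memory_gb: float


# Criteria are fixed before any performance results are seen.
CRITERIA = {
    "technical_scope": lambda t: "stochastic" in t.simulation_types,
    "availability": lambda t: t.publicly_available,
    "maintenance": lambda t: t.months_since_last_update <= 24,
    "documentation": lambda t: t.documented,
    "compute": lambda t: t.peak_memory_gb <= 64,
}


def screen(tools, criteria=CRITERIA):
    """Return {tool name: [failed criteria]}; an empty list means included."""
    return {
        tool.name: [name for name, check in criteria.items() if not check(tool)]
        for tool in tools
    }


def included(decisions):
    """Names of tools that passed every criterion."""
    return [name for name, failed in decisions.items() if not failed]
```

Publishing the full `screen` output alongside the benchmark, including the reasons for each exclusion, gives readers the documented selection trail described above.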

Mitigating Bias in the Comparison Process

Bias can be introduced not only in which methods are selected but also in how they are configured, applied, and evaluated. A robust benchmarking framework requires proactive steps to minimize these biases.

Types of Bias and Counteractive Strategies

Table 2: Common Biases in Method Comparison and Mitigation Strategies

| Type of Bias | Description | Mitigation Strategy |
|---|---|---|
| Selection Bias | The set of compared methods is non-representative, favoring a particular type of approach or well-known tools. | Use the systematic discovery and objective criteria outlined in the method selection framework above. Justify the final selection set transparently. |
| Configuration Bias | Methods are not run with their optimal parameters or settings, unfairly disadvantaging some tools. | Contact original tool authors for recommended configurations. Perform a hyperparameter sensitivity analysis for key tools to ensure fair tuning. |
| Dataset Bias | The benchmark uses datasets that are structurally biased toward the strengths of a subset of methods. | Use a wide range of dataset types, including simulated "ground-truth" data and real-world experimental data with varying levels of noise and complexity [8]. |
| Interpretation Bias | Results are presented in a way that visually or numerically highlights a pre-determined conclusion. | Use unbiased data visualization principles, such as avoiding misleading axes and employing color schemes accessible to all readers [10] [11]. |

The Role of Data Visualization in Objective Reporting

The presentation of results is a final, critical stage where bias can be introduced, even unintentionally. Adhering to principles of clear and accessible data visualization is paramount.

- Use Active Titles: Chart titles should state the finding (e.g., "Tool B achieved 15% faster simulation speed than the next best alternative") rather than merely describing the content (e.g., "Simulation speed comparison") [10].

- Employ Strategic Contrast: Use color and callouts to direct the viewer's attention to key findings, but avoid using these techniques to mislead or obscure inconvenient data [10]. All findings must be reported with transparency.

- Ensure Color Accessibility: Approximately 8% of men and 0.5% of women have some form of color vision deficiency [11]. Using a color-blind-friendly palette ensures your results are interpretable by the entire audience. Avoid problematic combinations like red/green and instead use palettes with varying lightness and saturation.

Table 3: Color-Blind-Friendly Palette (adapted from [12])

| Color Name | Hex Code | Recommended Use |
|---|---|---|
| Vermillion | #D55E00 | Highlighting a key outlier or top performer. |
| Blue | #0072B2 | Representing a baseline or control method. |
| Bluish Green | #009E73 | General use, good for data series. |
| Yellow | #F0E442 | General use, provides good contrast. |
| Dark Pink | #CC79A7 | General use, good for categorical data. |
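The advice to vary lightness can be checked numerically. The sketch below keys the palette's hex codes by their recommended role (the role names are labels invented here) and computes each color's relative luminance using the standard sRGB formula from the WCAG guidelines. It is a rough sanity check under those assumptions, not a substitute for testing figures with a color vision deficiency simulator.

```python
# Hex codes from the palette above, keyed by illustrative role names.
PALETTE = {
    "highlight": "#D55E00",
    "baseline": "#0072B2",
    "series_a": "#009E73",
    "series_b": "#F0E442",
    "series_c": "#CC79A7",
}


def hex_to_rgb(hex_code):
    """Convert '#RRGGBB' to an (R, G, B) tuple of 0-255 integers."""
    value = hex_code.lstrip("#")
    return tuple(int(value[i:i + 2], 16) for i in (0, 2, 4))


def relative_luminance(hex_code):
    """Relative luminance (0 = black, 1 = white) per the WCAG sRGB formula."""

    def linearize(channel):
        c = channel / 255
        return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

    r, g, b = (linearize(c) for c in hex_to_rgb(hex_code))
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def by_lightness(palette):
    """Role names ordered from darkest to lightest."""
    return sorted(palette, key=lambda role: relative_luminance(palette[role]))
```

If two colors that must be told apart have nearly identical luminance, they are likely to be hard to distinguish in grayscale print or for some readers, and one of them is worth swapping.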

Experimental Protocol for a Benchmarking Study

The following workflow diagram and detailed protocol outline the key steps for executing a fair and comprehensive method comparison, from initial planning to final dissemination.

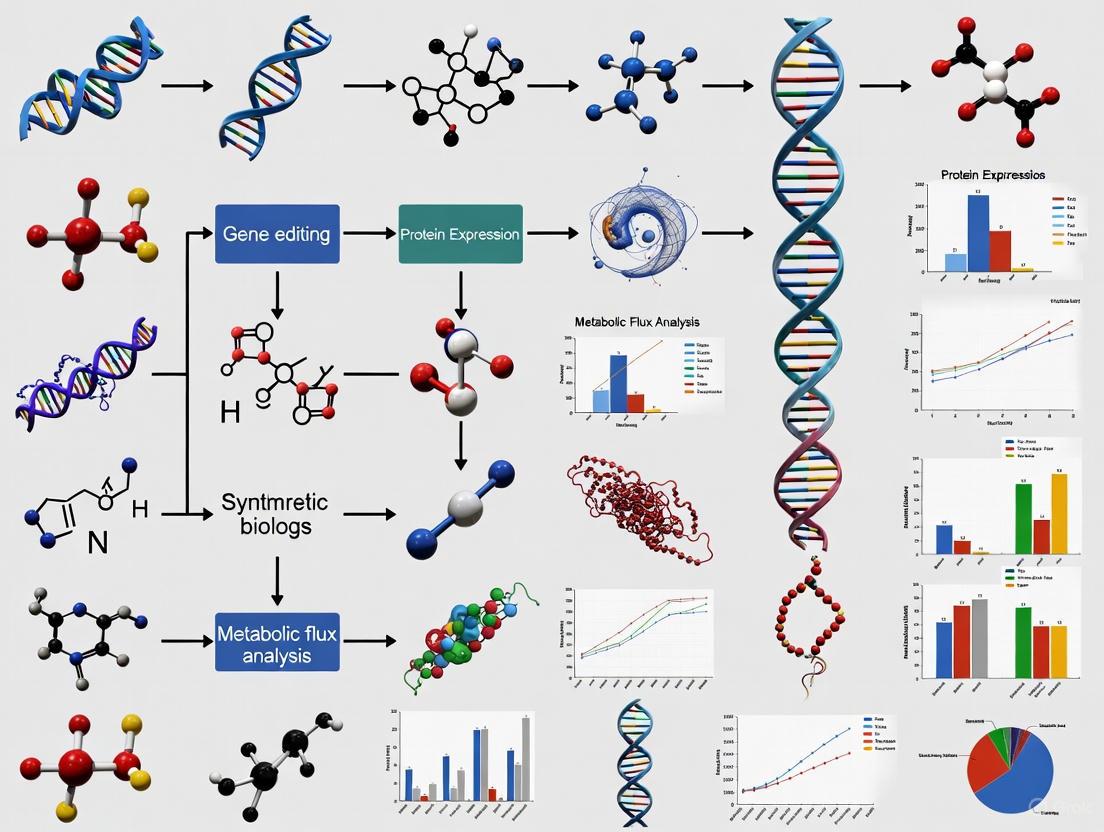

Figure 1: A generalized workflow for executing a benchmarking study, highlighting the sequential phases of planning, execution, analysis, and dissemination.

Detailed Methodological Steps

- Define Scope and Objectives: Clearly articulate the biological question and the specific capabilities the benchmark will assess (e.g., prediction accuracy of gene expression levels, computational speed, scalability with model complexity).

- Method Discovery & Selection: Execute the systematic search and filtering process described in the method selection framework above to finalize the list of methods for inclusion.

- Dataset Curation: Assemble a diverse set of benchmark datasets. This should include both:

  - Synthetic Data: Simulated data where the "ground truth" is known, allowing for precise quantification of accuracy [8].

  - Experimental Data: Real-world data from synthetic biology experiments (e.g., from repositories related to the cited de novo protein design study [6]) to assess practical performance.

- Method Configuration and Execution:

  - For each tool, document the version and all parameters used.

  - Where possible, run tools in a containerized environment (e.g., Docker, Singularity) to ensure consistency and reproducibility.

  - Execute multiple runs for stochastic methods to account for random variation.

- Performance Metric Calculation: Calculate a predefined set of quantitative metrics for each tool and dataset. Examples include:

  - Accuracy Metrics: Root Mean Square Error (RMSE), Pearson Correlation, Area Under the Precision-Recall Curve (AUPRC).

  - Efficiency Metrics: Wall-clock time, CPU time, memory usage.

  - Robustness Metrics: Performance consistency across different datasets or under varying noise levels.

- Data Synthesis and Reporting: Aggregate results into structured tables. Visualize comparisons using the principles of contrast and color accessibility outlined in the data visualization guidance above. Discuss results in the context of each method's algorithmic approach.
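The execution and metric steps can be sketched as a small harness that runs a tool several times with different seeds, times each run, and scores the output against a known ground truth. Everything here is a hypothetical stand-in: `noisy_tool` plays the role of a stochastic simulator, the `tool(inputs, seed)` calling convention is an assumption, and a real study would wrap containerized tool invocations instead.

```python
import math
import random
import statistics
import time


def rmse(pred, truth):
    """Root mean square error between predictions and ground truth."""
    return math.sqrt(sum((p - t) ** 2 for p, t in zip(pred, truth)) / len(truth))


def pearson(x, y):
    """Pearson correlation; undefined (division by zero) for constant input."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def noisy_tool(inputs, seed):
    """Hypothetical stochastic tool: doubles each input and adds noise."""
    rng = random.Random(seed)
    return [2.0 * x + rng.gauss(0.0, 0.1) for x in inputs]


def benchmark(tool, inputs, truth, n_runs=5, base_seed=0):
    """Run a tool n_runs times, recording the seed, accuracy, and timing."""
    records = []
    for run in range(n_runs):
        seed = base_seed + run
        start = time.perf_counter()
        pred = tool(inputs, seed)
        elapsed = time.perf_counter() - start  # wall-clock seconds
        records.append({
            "seed": seed,
            "rmse": rmse(pred, truth),
            "pearson": pearson(pred, truth),
            "seconds": elapsed,
        })
    return records


def summarize(records, key):
    """Mean and sample standard deviation of one metric across runs."""
    values = [r[key] for r in records]
    spread = statistics.stdev(values) if len(values) > 1 else 0.0
    return statistics.fmean(values), spread
```

Recording the seed with every result means any individual run can be reproduced later, and reporting the spread across runs alongside the mean keeps a single lucky run from deciding the ranking.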

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key resources and tools essential for conducting a rigorous benchmarking study in computational synthetic biology.

Table 4: Key Research Reagent Solutions for Benchmarking Studies

| Item / Resource | Function in Benchmarking | Example Tools / Sources |
|---|---|---|
| High-Performance Computing (HPC) Cluster | Provides the computational power to run multiple simulation tools and large parameter sweeps in parallel. | Local institutional HPC, cloud computing services (AWS, Google Cloud). |
| Containerization Platform | Ensures software dependencies are met and the computational environment is identical for every run, guaranteeing reproducibility. | Docker, Singularity. |
| Bibliographic Database | Used for the systematic discovery of published tools and for retrieving citation metrics for journal quality assessment [7] [9]. | Scopus, Web of Science, PubMed. |
| Reference Management Software | Aids in organizing the literature found during the discovery phase and can help identify key journals and tools [9]. | Zotero, Mendeley, EndNote. |
| Data Visualization Library | Enables the generation of clear, accessible, and publication-quality figures and charts for presenting benchmark results. | Matplotlib (Python), ggplot2 (R). |
| Code and Data Repository | A platform for sharing the scripts, results, and datasets of the benchmark, fulfilling the mandate for open science and reproducibility. | Zenodo, GitHub, GitLab. |

Selecting methods for comparison in synthetic biology benchmarking is a non-trivial exercise that demands a structured, transparent, and bias-aware approach. By implementing a systematic framework for method discovery, applying objective inclusion criteria, and adhering to principles of rigorous experimental design and accessible data presentation, researchers can produce benchmark studies that are both comprehensive and fair. Such high-quality comparisons are indispensable for guiding the development of more powerful and reliable synthetic biology simulation tools, ultimately accelerating progress in biomedicine and biotechnology.

The establishment of robust benchmarking frameworks is a critical pillar of methodological progress in synthetic biology. The core of any such framework is the reference dataset used to evaluate and compare the performance of computational tools and analytical pipelines. A fundamental choice researchers must make is whether to use real experimental data, with all its inherent complexity and noise, or simulated data, where the ground truth is known and parameters are controlled. This guide objectively compares the performance of methods using these different dataset types, detailing the trade-offs to inform researchers, scientists, and drug development professionals. Within the broader thesis on benchmarking for synthetic biology simulation tools, this discussion underscores that the choice between real and simulated data is not a matter of selecting a superior option, but of strategically aligning dataset strengths with specific benchmarking goals.

The Core Trade-offs: A Comparative Analysis

The decision between simulated and real experimental data involves balancing control against authenticity. The table below summarizes the fundamental characteristics and trade-offs of each dataset type.

Table 1: Fundamental Trade-offs Between Simulated and Real Experimental Data

| Aspect | Simulated Data | Real Experimental Data |
|---|---|---|
| Ground Truth | Known and perfectly defined [13] [14] | Unknown or partially inferred; requires validation via "gold-standard" datasets [13] |
| Control & Flexibility | High; allows for controlled scenarios with parameters of arbitrary complexity [13] | Low; constrained by the realities of experimental conditions and cost |
| Bias Assessment | Excellent for identifying algorithmic biases under controlled conditions [13] | Limited for pinpointing specific algorithmic biases, but reveals real-world performance issues |
| Data Fidelity | Risk of failing to capture all properties of experimental data, affecting evaluation validity [14] | High; inherently reflects true biological and technical variation |
| Primary Application | Method development, debugging, and performance evaluation; power analysis [15] [13] [14] | Final validation and confirmation of method utility in real-world scenarios [13] |
| Cost & Scalability | Low cost to generate vast amounts of data; highly scalable [13] | High cost and effort to generate; scalability is limited |

The Critical Role of Data Fidelity in Simulations

A key challenge with simulated data is whether it faithfully reflects the characteristics of experimental data. A benchmark of single-cell RNA-seq simulation methods found that simulator performance varies significantly, and that deviations from experimental data properties can compromise the validity of downstream evaluations [14]. The reliability of a benchmarking exercise using simulated data therefore depends directly on the simulator's ability to capture relevant data properties, such as mean-variance relationships and gene-gene correlations [14]. Consequently, the simulation tool itself should be checked against real data to confirm it is fit for purpose.

Domain-Specific Applications and Experimental Protocols

The trade-offs between dataset types manifest concretely across different biological domains. The following examples illustrate how benchmarking studies are conducted in practice and what they reveal about tool performance.

Benchmarking Genomic Short-Read Simulators

Evaluating tools for processing Next-Generation Sequencing (NGS) data is a classic application of simulations. A systematic review of 23 genomic NGS simulators highlights their use in comparing analytical pipelines [15]. The typical experimental protocol involves:

- Input Selection: A reference genome sequence is used as the foundation [15] [13].

- Parameterization: Simulators require parameters defining the sequencing experiment (e.g., read length, error distribution) [15]. These can be "basic" (pre-defined within the tool) or "advanced" (custom-estimated from an empirical dataset to mimic its characteristics more closely) [13].

- Data Generation: The tool generates synthetic sequencing reads in standard formats like FASTQ or BAM [15].

- Pipeline Evaluation: The simulated reads, with their known ground truth, are processed by the computational methods under test (e.g., variant callers). The outputs are compared against the known truth to evaluate accuracy, sensitivity, and specificity [13].
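The final comparison step reduces to set arithmetic once variants are represented as comparable records. The sketch below is a simplified illustration: variants are assumed to be `(chrom, pos, ref, alt)` tuples that match only when identical, and `evaluate_calls` and `candidate_sites` are hypothetical names. Production comparisons normally also normalize variant representation, which is omitted here.

```python
def evaluate_calls(truth, called, candidate_sites=None):
    """Compare called variants with the simulated ground truth.

    truth, called: iterables of (chrom, pos, ref, alt) tuples.
    candidate_sites: optional iterable of every site that was evaluated;
    it is needed for specificity, which depends on true negatives.
    """
    truth, called = set(truth), set(called)
    tp = len(truth & called)   # true variants that were called
    fp = len(called - truth)   # calls that are not in the truth set
    fn = len(truth - called)   # true variants that were missed

    metrics = {
        "sensitivity": tp / (tp + fn) if tp + fn else 0.0,
        "precision": tp / (tp + fp) if tp + fp else 0.0,
    }
    if candidate_sites is not None:
        negatives = set(candidate_sites) - truth
        tn = len(negatives - called)  # non-variant sites left uncalled
        metrics["specificity"] = tn / len(negatives) if negatives else 0.0
    return metrics
```

Specificity is reported only when a universe of evaluated sites is supplied, because without it the number of true negatives is not defined. For genome-scale data, where negatives vastly outnumber positives, precision is often the more informative companion to sensitivity.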

A performance evaluation of six popular short-read simulators (ART, DWGSIM, InSilicoSeq, Mason, NEAT, and wgsim) demonstrated that the choice of simulator significantly impacts the characteristics of the output data, such as genomic coverage and GC-coverage bias [13]. This finding underscores the importance of selecting a simulator that accurately models the features most relevant to the benchmarking task.

Benchmarking Single-Cell Data Integration Methods

In single-cell biology, benchmarking often relies on real experimental data where ground truth is inferred from cell annotations. A benchmark of 16 deep learning-based integration methods used datasets from immune cells, pancreas cells, and the Bone Marrow Mononuclear Cells (BMMC) dataset from the NeurIPS 2021 competition [16]. The protocol was:

- Data Curation: Collect well-annotated scRNA-seq datasets with known batch and cell-type labels [16].

- Method Training: Apply integration methods (e.g., scVI, scANVI) using batch labels to remove technical variation and cell-type labels to preserve biological information [16].

- Performance Quantification: Use benchmarking metrics like the single-cell integration benchmarking (scIB) score to quantitatively evaluate two competing objectives: batch correction (how well batch effects are removed) and biological conservation (how well real biological variation is preserved) [16].
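To show how two competing objectives can be folded into one ranking, the sketch below combines a batch-correction score and a biological-conservation score with a weighted mean. It does not use the scIB package or reproduce its metrics. The function names, the example scores, and the 40/60 default weighting are all assumptions made for illustration, and the weighting in particular should be confirmed against the scIB documentation before being relied on.

```python
def overall_score(batch_correction, bio_conservation, batch_weight=0.4):
    """Weighted mean of two scores that each lie in [0, 1]."""
    if not 0.0 <= batch_weight <= 1.0:
        raise ValueError("batch_weight must be between 0 and 1")
    return batch_weight * batch_correction + (1.0 - batch_weight) * bio_conservation


def rank_methods(scores, batch_weight=0.4):
    """Rank methods by overall score, best first.

    scores: {method name: (batch_correction, bio_conservation)}
    """
    totals = {
        name: overall_score(batch, bio, batch_weight)
        for name, (batch, bio) in scores.items()
    }
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


# Hypothetical scores: "aggressive" removes batch effects very well but
# loses biological signal, while "balanced" gives up some batch correction.
EXAMPLE_SCORES = {
    "aggressive": (0.95, 0.50),
    "balanced": (0.80, 0.85),
}
```

Reporting both component scores next to the aggregate matters, because a single number can hide exactly the situation described here, where strong batch correction comes at the cost of biological signal. Re-running `rank_methods` with a different `batch_weight` is a quick check of how sensitive the ranking is to the weighting.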

This benchmark revealed that methods optimized for batch correction can sometimes inadvertently remove biologically meaningful signal, a trade-off that is best quantified using real data with trusted annotations [16].

The Emergence of Living Synthetic Benchmarks

A proposed solution to the fragmentation and potential bias in method evaluation is the concept of "living synthetic benchmarks." This framework seeks to disentangle method development from simulation study design by creating a neutral, cumulative, and continuously updated benchmark [17]. The blueprint involves:

- Initialization: Collecting existing methods, Data-Generating Mechanisms (DGMs), and Performance Measures (PMs) into an initial benchmark [17].

- Separation: Allowing new methods, DGMs, and PMs to be developed and introduced independently [17].

- Continuous Integration: Systematically adding every new element (method, DGM, PM) to the benchmark, enabling comprehensive and neutral comparisons over time [17].
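The blueprint maps naturally onto three registries and an update step that evaluates only the combinations not yet computed. The sketch below is a toy illustration of that idea, not an implementation from the cited proposal: the `LivingBenchmark` class, its method names, and the example DGM, methods, and measure are all hypothetical.

```python
import random
import statistics
from itertools import product


class LivingBenchmark:
    """Toy registry of methods, data-generating mechanisms, and measures."""

    def __init__(self):
        self._registries = {"method": {}, "dgm": {}, "measure": {}}
        self.results = {}  # (method, dgm, measure) -> score

    def register(self, kind, name, fn):
        """Add a new element independently of the other two kinds."""
        self._registries[kind][name] = fn

    def update(self):
        """Evaluate every combination not yet scored; return how many."""
        methods = self._registries["method"]
        dgms = self._registries["dgm"]
        measures = self._registries["measure"]
        new = 0
        for m, d, p in product(methods, dgms, measures):
            if (m, d, p) in self.results:
                continue
            data, truth = dgms[d]()  # seeded, so identical on every call
            self.results[(m, d, p)] = measures[p](methods[m](data), truth)
            new += 1
        return new


def normal_mean_dgm(seed=0, n=200):
    """Example DGM: normal samples whose true mean (5.0) is known."""
    rng = random.Random(seed)
    return [rng.gauss(5.0, 1.0) for _ in range(n)], 5.0


def trimmed_mean(data, fraction=0.1):
    """Example method: mean after dropping a fraction from each tail."""
    ordered = sorted(data)
    cut = int(len(ordered) * fraction)
    return statistics.fmean(ordered[cut:len(ordered) - cut])


def abs_error(estimate, truth):
    """Example performance measure: absolute estimation error."""
    return abs(estimate - truth)
```

Registering a new method and calling `update()` fills in only the missing cells of the method by DGM by measure grid, so earlier results stay untouched and comparable, which is the cumulative property the proposal emphasizes.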

This approach, inspired by benchmarks in machine learning (e.g., ImageNet) and computational biology (e.g., CASP), aims to make method evaluation more objective, reproducible, and cumulative [17].

A Strategic Framework for Dataset Selection

The two dataset types are not mutually exclusive, and the most robust benchmarking strategies combine both. The following workflow provides a logical pathway for deciding when to use each.

Diagram 1: A strategic decision workflow for choosing between simulated and real experimental data for benchmarking, based on the primary research goal.

The Scientist's Toolkit: Key Reagent Solutions

The table below catalogs essential resources and tools mentioned in this guide that are instrumental for constructing and executing benchmarking studies in synthetic biology.

Table 2: Key Research Reagent Solutions for Benchmarking Studies

| Tool or Resource Name | Type | Primary Function in Benchmarking |
|---|---|---|
| SynBioTools [18] | Tool Registry | A one-stop facility for searching and selecting synthetic biology databases, computational tools, and experimental methods. |
| Genome In A Bottle (GIAB) [13] | Gold-Standard Empirical Dataset | Provides a high-quality reference dataset for human genomics, serving as a benchmark for validating variant calls and other genomic analyses. |
| Single-Cell Integration Benchmarking (scIB) [16] | Benchmarking Metric | A framework providing quantitative scores to evaluate how well single-cell data integration methods correct for batch effects and conserve biological information. |
| Living Synthetic Benchmark [17] | Benchmarking Framework | A proposed neutral and cumulative framework for simulation studies, disentangling method development from evaluation to ensure impartial comparisons. |
| Short-Read Simulators (e.g., ART, NEAT) [15] [13] | Simulation Tool | Generate synthetic NGS data for controlled benchmarking of computational pipelines for read mapping, variant calling, and assembly. |
| Single-Cell Simulators (e.g., Splat, SymSim) [14] | Simulation Tool | Generate synthetic scRNA-seq data with known ground truth for evaluating computational methods for clustering, trajectory inference, and differential expression. |
| SimBench [14] | Evaluation Framework | A comprehensive framework for benchmarking scRNA-seq simulation methods themselves, assessing their ability to capture properties of experimental data. |

The choice between simulated and real experimental data for benchmarking synthetic biology tools is foundational. Simulated data offers unparalleled control and knowledge of ground truth, making it ideal for method development, power analysis, and stress-testing algorithms under specific, controlled scenarios. Its primary weakness is the potential failure to capture the full complexity of real biological systems, which can lead to optimistic but misleading performance estimates. Real experimental data provides the ultimate test of a method's practical utility, ensuring performance under real-world conditions of noise and biological variation, though it is often costly and its "ground truth" is rarely perfect. The most rigorous benchmarking strategy is a hybrid one: leveraging simulated data for extensive initial testing and refinement, followed by final validation on multiple real-world datasets. Furthermore, the adoption of community-driven, living synthetic benchmarks promises to reduce bias and foster more cumulative, comparable, and neutral evaluation of methodological progress in the field.

The rapid expansion of synthetic biology has led to a proliferation of computational tools for designing and analyzing biological systems. For researchers, developers, and drug discovery professionals, selecting the appropriate tool is crucial yet challenging. Benchmarking studies provide a rigorous framework for this selection process by objectively comparing tool performance using reference datasets with known "ground truth" [19]. Establishing this ground truth is the foundational challenge in benchmarking, as the true biological processes underlying real experimental data are often unknown or incompletely characterized [20]. Without a known ground truth, it becomes difficult to quantitatively assess whether a computational method is performing accurately.

Two primary approaches have emerged to address this challenge: using synthetic data from computer simulations where all parameters are predefined, and employing experimentally-derived gold standards that incorporate physical controls like spiked-in molecules [19]. Simulation-based benchmarking allows for generating unlimited data with completely known properties, while experimental gold standards provide authentic biological contexts but often with only partially known truths. This guide examines both approaches, focusing on their implementation, relative strengths, and practical applications in benchmarking synthetic biology tools, with particular emphasis on sequencing-based analyses.

Establishing Ground Truth with Spiked-in Controls

Spiked-in controls are synthetic molecules of known sequence and quantity added to biological samples during experimental processing. They serve as internal standards that travel the entire experimental pathway alongside native biological molecules, enabling researchers to track technical performance and detect artifacts that may arise during sample processing.

Synthetic DNA Spike-Ins (SDSIs) for Amplicon Sequencing

Amplicon-based sequencing methods, widely used in SARS-CoV-2 genomic surveillance, are highly sensitive to contamination due to extensive PCR amplification. Synthetic DNA spike-ins (SDSIs) have been developed to track samples and detect inter-sample contamination throughout the sequencing workflow [21].

The SDSI + AmpSeq protocol utilizes 96 distinct synthetic DNA sequences derived from uncommon Archaea genomes, minimizing homology with common human pathogens and reducing false positives. Each SDSI consists of a unique core sequence flanked by constant priming regions, allowing co-amplification with target sequences using a single primer pair added to existing multiplexed PCR reactions [21].

Table 1: Synthetic DNA Spike-in (SDSI) System Characteristics

| Feature | Specification | Function/Benefit |

|---|---|---|

| Core Sequence Source | Uncommon Archaea genomes | Minimizes false positives from homology with common pathogens |

| Number of Variants | 96 distinct sequences | Enables multiplexed sample tracking |

| Priming Regions | Constant flanking sequences | Enables co-amplification with a single primer pair |

| GC Content Range | 33-65% | Similar to viral genomes (e.g., SARS-CoV-2: 37±5%) |

| Optimal Concentration | 600 copies/μL | Reliable detection without impacting target amplification |

| Compatibility | ARTIC Network primers | Works with widely used amplicon sequencing designs |

Experimental Protocol: Implementing SDSIs in Sequencing Workflows

The following protocol outlines the steps for incorporating SDSIs into amplicon sequencing workflows:

SDSI Selection and Preparation: Select a unique SDSI from the 96-plex library for each sample. Prepare SDSI stocks at 600 copies/μL in nuclease-free water [21].

Sample Processing: Add selected SDSI to sample cDNA prior to the multiplexed PCR amplification step. The constant priming regions enable simultaneous amplification with target-specific primers [21].

Library Preparation and Sequencing: Continue with standard library preparation protocols. The SDSIs will be co-amplified and sequenced alongside biological targets.

Data Analysis and Contamination Detection: After sequencing, map reads to both the target reference genome and the SDSI reference sequences. The presence of the expected SDSI confirms sample identity, while detection of unexpected SDSIs indicates inter-sample contamination [21].
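The final analysis step can be sketched in a few lines of Python. This is a minimal illustration rather than the published pipeline: the function name `classify_sdsi_reads`, the SDSI labels, and both thresholds (`min_reads`, `min_fraction`) are assumptions chosen for the example, and the input is assumed to be a table of read counts per SDSI reference obtained after mapping.

```python
def classify_sdsi_reads(sdsi_counts, expected_sdsi, min_reads=10, min_fraction=0.01):
    """Flag sample identity and possible contamination from per-SDSI read counts.

    sdsi_counts   : dict mapping SDSI name -> number of mapped reads
    expected_sdsi : the SDSI that was spiked into this sample
    min_reads, min_fraction : hypothetical noise thresholds (not from the source)
    """
    total = sum(sdsi_counts.values())
    expected_reads = sdsi_counts.get(expected_sdsi, 0)

    # An unexpected SDSI is reported only if it clears both thresholds,
    # so that a handful of stray reads does not trigger a contamination call.
    contaminants = sorted(
        name
        for name, n in sdsi_counts.items()
        if name != expected_sdsi
        and n >= min_reads
        and total > 0
        and n / total >= min_fraction
    )
    return {
        "identity_confirmed": expected_reads >= min_reads,
        "contaminating_sdsis": contaminants,
    }


# Illustrative counts for one sample that was spiked with "SDSI_07".
example = {"SDSI_07": 5200, "SDSI_12": 140, "SDSI_33": 2}
print(classify_sdsi_reads(example, expected_sdsi="SDSI_07"))
```

In practice the thresholds would need to be calibrated against negative controls for the specific sequencing run.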

Validation and Performance Metrics

Extensive validation of the SDSI + AmpSeq approach demonstrated several key performance characteristics:

No Impact on Target Sequencing: At optimal concentration (600 copies/μL), SDSIs yielded >96% of reads mapping to SARS-CoV-2 with no significant difference in coverage uniformity across the genome compared to standard protocols [21].

High Specificity: Each of the 96 SDSIs produced robust, specific signals without cross-mapping or misidentification in clinical samples spanning a range of viral loads (CT values 25-33) [21].

Genome Concordance: Comparison with unbiased metagenomic sequencing showed 100% genome concordance in processed samples, demonstrating that SDSI addition does not compromise variant calling accuracy [21].

Figure 1: SDSI Workflow for Contamination Detection. Synthetic DNA spike-ins (SDSIs) are added to samples before amplification and sequencing. Bioinformatics analysis detects expected and unexpected SDSIs to confirm sample identity or identify contamination.

Gold Standard Databases and Reference Materials

While spiked-in controls provide internal standards for individual experiments, gold standard databases offer community-wide reference points for method validation. These resources include experimentally validated datasets and carefully curated reference materials that serve as benchmarks for comparing computational tool performance.

Experimentally-Derived Gold Standards

Several approaches have been developed to create experimental datasets with known characteristics:

Fluorescence-Activated Cell Sorting (FACS): Cells are sorted into known subpopulations prior to single-cell RNA-sequencing, creating defined cell type mixtures with known composition [19].

Spiked-in RNA Molecules: Synthetic RNA molecules at known relative concentrations are added to samples before RNA-sequencing, enabling precise assessment of differential expression detection accuracy [19].

Cell Line Mixtures: Different cell lines are mixed to create 'pseudo-cells' with known genomic characteristics, providing controlled systems for method validation [19].

Sex Chromosome Genes: Genes located on sex chromosomes serve as proxies for validating epigenetic silencing patterns in DNA methylation studies [19].

Several organizations maintain gold standard references for benchmarking:

Genome in a Bottle (GIAB): Maintained by the National Institute of Standards and Technology (NIST), GIAB provides reference materials and high-confidence variant calls for human genomes, serving as benchmarks for variant calling pipelines [13].

MAQC/SEQC Consortia: These community-wide initiatives establish standards for microarray and sequencing quality control, generating extensively validated datasets for assessing reproducibility across platforms and laboratories [19].

Single Cell Portal: Provides curated single-cell RNA-seq datasets with experimental validation, enabling benchmarking of computational methods for single-cell analysis [22].

Simulation-Based Ground Truth for Method Validation

Computer simulation provides a powerful alternative for establishing ground truth by generating synthetic datasets with completely known properties. Simulations allow researchers to create controlled scenarios with predefined parameters, enabling precise assessment of computational method performance.

Numerous specialized tools have been developed to simulate next-generation sequencing (NGS) data, each with distinct capabilities and applications:

Table 2: Comparison of Popular Short-Read Sequencing Simulators

| Simulator | Supported Technologies | Variant Simulation | Error Models | Primary Applications |

|---|---|---|---|---|

| ART | Illumina, 454, SOLiD | No | Built-in platform-specific | Method validation, experimental design |

| DWGSIM | Illumina, SOLiD, IonTorrent | Yes (SNPs, indels) | User-defined or empirical | Variant detection benchmarking |

| InSilicoSeq | Illumina | No | Built-in or custom from data | Metagenomic simulations, method comparison |

| Mason | Illumina, 454 | Yes (SNPs, indels) | Built-in platform-specific | Large-scale genomic studies |

| NEAT | Illumina | Yes (SNPs, indels) | Built-in or empirical | Variant detection, error model evaluation |

| wgsim | Illumina | Yes (SNPs, indels) | Simple uniform model | Rapid prototyping, basic simulations |

Benchmarking Single-Cell RNA-seq Simulation Methods

Single-cell RNA sequencing presents unique computational challenges due to its high sparsity, technical noise, and complex data structures. A comprehensive benchmark study (SimBench) evaluated 12 scRNA-seq simulation methods across 35 experimental datasets, assessing their ability to reproduce key data properties and biological signals [20].

The evaluation framework examined four critical aspects of simulator performance:

Data Property Estimation: Accuracy in capturing 13 distinct data characteristics including mean-variance relationships, dropout rates, and gene-gene correlations.

Biological Signal Preservation: Ability to maintain biologically meaningful patterns such as differentially expressed genes and cell-type markers.

Computational Scalability: Efficiency in terms of runtime and memory consumption as dataset size increases.

Method Applicability: Flexibility in simulating complex experimental designs including multiple cell groups and differential expression patterns.

The benchmark revealed significant performance differences among methods, with no single simulator outperforming others across all criteria. ZINB-WaVE, SPARSim, and SymSim excelled at capturing data properties, while scDesign and zingeR performed better at preserving biological signals despite lower overall accuracy in data property estimation [20]. This highlights the importance of selecting simulators based on specific benchmarking needs rather than assuming universal superiority.

Experimental Protocol: Using Simulated Data for Method Benchmarking

A robust protocol for using simulated data in benchmarking computational methods includes these key steps:

Simulator Selection: Choose simulators based on the specific benchmarking goals, considering the trade-offs between biological accuracy, computational efficiency, and implementation complexity [20].

Parameter Estimation: Use real experimental datasets to estimate parameters for the simulation, ensuring that simulated data reflects relevant properties of biological systems [20].

Ground Truth Implementation: Introduce known signals (e.g., differentially expressed genes, specific mutations, or cell subpopulations) with controlled effect sizes and prevalences.

Method Evaluation: Apply computational methods to the simulated data and compare outputs to the known ground truth using appropriate performance metrics.

Sensitivity Analysis: Assess method performance across a range of conditions (e.g., varying sequencing depths, effect sizes, or noise levels) to identify operating boundaries and failure modes.
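The protocol above can be illustrated with a deliberately small, self-contained Python sketch. It is not one of the simulators discussed here: expression values are drawn from a Gaussian on a log2 scale, the "method" under test is a plain Welch-style t statistic with a fixed cutoff, and all names and parameter values (`simulate_counts`, `call_de`, the cutoff of 4.0) are assumptions made for illustration.

```python
import random
import statistics


def simulate_counts(n_genes=200, n_de=20, n_per_group=10, log2_fc=2.0, seed=0):
    """Simulate log2 expression for two groups; the first n_de genes are truly DE."""
    rng = random.Random(seed)
    truth = set(range(n_de))  # known ground truth
    group_a, group_b = [], []
    for g in range(n_genes):
        base = rng.uniform(4.0, 10.0)            # baseline log2 expression
        shift = log2_fc if g in truth else 0.0   # controlled effect size
        group_a.append([rng.gauss(base, 0.5) for _ in range(n_per_group)])
        group_b.append([rng.gauss(base + shift, 0.5) for _ in range(n_per_group)])
    return group_a, group_b, truth


def call_de(group_a, group_b, threshold=4.0):
    """Toy method under evaluation: call a gene DE if |Welch t| exceeds a cutoff."""
    called = set()
    for g, (a, b) in enumerate(zip(group_a, group_b)):
        se = (statistics.variance(a) / len(a) + statistics.variance(b) / len(b)) ** 0.5
        t = (statistics.mean(b) - statistics.mean(a)) / se
        if abs(t) > threshold:
            called.add(g)
    return called


def precision_recall(called, truth):
    """Compare calls against the ground truth."""
    tp = len(called & truth)
    precision = tp / len(called) if called else 1.0
    recall = tp / len(truth) if truth else 1.0
    return precision, recall


# Sensitivity analysis: sweep the effect size and watch recall change.
for fc in (0.25, 0.5, 1.0, 2.0):
    a, b, truth = simulate_counts(log2_fc=fc, seed=1)
    p, r = precision_recall(call_de(a, b), truth)
    print(f"log2FC={fc}: precision={p:.2f}, recall={r:.2f}")
```

A real study would replace the Gaussian generator with a simulator whose parameters are estimated from experimental data, as described in the parameter estimation step.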

Comparative Analysis of Ground Truth Approaches

Both experimental controls and computational simulations offer distinct advantages and limitations for establishing ground truth in benchmarking studies. The choice between approaches depends on the specific research questions, available resources, and desired applications.

Table 3: Comparison of Ground Truth Establishment Methods

| Characteristic | Spiked-in Controls | Gold Standard Databases | Synthetic Simulations |

|---|---|---|---|

| Ground Truth Certainty | High for spiked molecules | Variable (depends on validation) | Complete (by definition) |

| Biological Relevance | High (in biological context) | High (real biological samples) | Limited (model-dependent) |

| Implementation Cost | Moderate (reagent costs) | Low (existing resources) | Low (computational only) |

| Scalability | Limited by experimental scale | Fixed (limited datasets) | Unlimited (arbitrary data size) |

| Technical Artifacts | Captures real experimental noise | Includes real technical variation | Modeled (may miss complexities) |

| Primary Applications | Contamination detection, normalization | Method validation, reproducibility | Method development, power analysis |

Figure 2: Ground Truth Approaches for Benchmarking. Experimental and computational approaches provide complementary methods for establishing ground truth. Combining multiple approaches enables comprehensive benchmarking.

Table 4: Research Reagent Solutions for Ground Truth Establishment

| Resource Type | Specific Examples | Function in Benchmarking |

|---|---|---|

| Synthetic Spike-ins | SDSIs [21], ERCC RNA Spike-in Mix | Sample tracking, contamination detection, normalization control |

| Reference Materials | Genome in a Bottle (GIAB) [13], MAQC samples | Method validation, inter-laboratory reproducibility |

| Cell Line References | Mixed cell lines, FACS-sorted populations [19] | Controlled cellular inputs with known composition |

| Sequence Simulators | ART, DWGSIM, InSilicoSeq [13], scRNA-seq simulators [20] | Generating data with completely known ground truth |

| Curated Databases | Single Cell Portal [22], GEO, dbGaP | Access to experimentally validated datasets |

| Analysis Workflows | ARTIC Network pipeline, nf-core/sarek | Standardized processing for comparative studies |

Establishing reliable ground truth through spiked-in controls, gold standard databases, and synthetic simulations is fundamental to rigorous benchmarking of synthetic biology tools. Each approach offers complementary strengths: experimental controls provide biological context and capture real technical variation, while computational simulations offer complete knowledge of underlying truths and unlimited scalability.

The most comprehensive benchmarking strategies integrate multiple approaches, using experimental gold standards to validate findings from synthetic data and vice versa. As the field advances, developing more sophisticated spike-in systems that better mimic native biomolecules and improving simulation methods to capture biological complexity more accurately will further enhance our ability to critically evaluate computational tools. For researchers and drug development professionals, understanding these ground truth establishment methods enables more informed tool selection and more robust computational analyses, ultimately accelerating scientific discovery and therapeutic development.

From Theory to Practice: Implementing Combinatorial Optimization and High-Throughput Workflows

Leveraging Combinatorial Optimization for Multivariate Pathway Tuning

A fundamental question in most metabolic engineering projects is determining the optimal expression levels of multiple enzymes to maximize the output of a desired pathway [23]. However, engineering microorganisms for industrial-scale production remains challenging due to the enormous complexity of living cells, where the nonlinearity of biological systems and low-throughput characterization methods create significant bottlenecks [23]. Traditional sequential optimization methods, which test only one part or a small number of parts at a time, prove time-consuming and expensive for complex multivariate systems [23]. Combinatorial optimization has emerged as a powerful alternative approach that allows rapid generation of diverse genetic constructs without requiring prior knowledge of optimal expression levels for each individual gene in a multi-enzyme pathway [23].

This review compares contemporary combinatorial optimization strategies for multivariate pathway tuning, evaluating their performance characteristics, implementation requirements, and applicability across different synthetic biology contexts. As the field advances toward more complex genetic circuits and biosystems, establishing robust benchmarking frameworks for these optimization approaches becomes increasingly critical for the synthetic biology community [24]. By objectively comparing the capabilities of different optimization methodologies, researchers can select appropriate strategies for their specific pathway engineering challenges, accelerating the design-build-test-learn cycle in synthetic biology.

Comparative Analysis of Combinatorial Optimization Approaches

Table 1: Comparison of Combinatorial Optimization Approaches for Pathway Engineering

| Optimization Approach | Key Methodology | Experimental Requirements | Scalability | Best-Suited Applications |

|---|---|---|---|---|

| Combinatorial Library Screening [23] | Generation of diverse genetic constructs via standardized part assembly | High-throughput screening; Biosensors; Flow cytometry | Moderate (library size limitations) | Metabolic pathway optimization; Enzyme expression tuning |

| Model-Based Optimization (DIOPTRA) [25] | Mathematical optimization using mixed-integer linear programming (MILP) | RNA-Seq data; Pathway annotation; Phenotype labels | High (computationally intensive) | Disease subtype classification; Biomarker identification |

| Quantum-Inspired Algorithms [26] | Quantum annealing and coherent Ising machines | Specialized hardware; Problem mapping to Ising model | Emerging technology | Maximum cut problems; Spin glass systems |

| Transcriptional Programming (T-Pro) [3] | Algorithmic enumeration of genetic circuits with compression | Synthetic transcription factors; Promoter engineering | High (wetware-software integration) | Genetic circuit design; Biocomputing applications |

Performance Metrics and Experimental Data

Table 2: Performance Comparison of Optimization Methods

| Method | Optimization Efficiency | Experimental Validation | Key Performance Metrics | Limitations |

|---|---|---|---|---|

| Combinatorial Library Screening [23] | Moderate to High | Strain libraries with metabolite production | Metabolite titers; Production yields; Screening throughput | Library size constraints; Screening bottlenecks |

| Model-Based Optimization (DIOPTRA) [25] | High (subtype classification accuracy) | Cancer transcriptome datasets | Prediction accuracy: ~70-90% for cancer subtypes; Robustness to noise | Requires large training datasets; Computational complexity |

| Quantum-Inspired Algorithms [26] | Variable (problem-dependent) | MaxCut problem instances | Time-to-solution (TTS); Scaling efficiency | Early-stage development; Specialized implementation |

| Transcriptional Programming (T-Pro) [3] | High (circuit compression) | Genetic circuit implementations in microbial hosts | Prediction error: <1.4-fold for >50 test cases; 4x size reduction vs. canonical circuits | Limited to transcriptional networks; Requires specialized wetware |

Experimental Protocols and Methodologies

Combinatorial Library Generation and Screening

The workflow for combinatorial optimization begins with in vitro construction and in vivo amplification of combinatorially assembled DNA fragments to generate gene modules [23]. In each module, gene expression is controlled by a library of regulators, with terminal homology between adjacent assembly fragments and plasmids enabling diverse construct generation in single cloning reactions. CRISPR/Cas-based editing strategies facilitate multi-locus integration of multiple module groups into genomic loci, with each group integrated into a single locus of different microbial cells [23]. Sequential cloning rounds enable entire pathway construction in plasmids, which can be transformed into hosts or used for single/multi-locus genomic integration to generate combinatorial libraries.

For high-throughput screening, biosensors combined with laser-based flow cytometry technologies transduce chemical production into detectable fluorescence signals [23]. This approach enables rapid identification of microbial strains producing the highest levels of target metabolites. Advanced screening techniques utilize genetically encoded whole-cell biosensors to overcome limitations of traditional, time-consuming metabolite screening methods [23].

Mathematical Optimization Framework (DIOPTRA Methodology)

The DIOPTRA (Disease OPTimisation for biomaRker Analysis) model employs mathematical optimization principles to infer pathway activity as a weighted linear combination of pathway constituent gene expressions [25]. The methodology follows these key steps:

Data Preparation: RNA-Seq count data are normalized using upper quartile FPKM (FPKM-UQ). Genes with high missingness (>30% zero expression values across samples) are removed.

Pathway Activity Definition: For each pathway p and sample s, pathway activity is calculated as:

\( pa_s = \sum_{m} G_{sm} \cdot (rp_m - rn_m) \)

where \( G_{sm} \) represents the gene expression value for sample s and gene m, while \( rp_m \) and \( rn_m \) are positive continuous variables modeling positive and negative gene weights determined by the optimization model [25].

Optimization Constraints: Binary variables \( L_m \) ensure that for each gene m, at most one of \( rp_m \) or \( rn_m \) takes positive values:

\( rp_m \leq L_m \), \( rn_m \leq (1 - L_m) \)

Objective Function: The model minimizes distances between samples and their corresponding class intervals, deriving pathway activity features that cluster samples with the same label together while separating them from samples of different classes [25].
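To make the pathway activity definition concrete, the following Python sketch evaluates the weighted sum for a toy pathway. It does not solve the mixed-integer program: the weights `rp` and `rn` are supplied by hand as hypothetical values, and the function only checks the exclusivity condition (at most one of the two weights is positive per gene) before computing the activity of each sample.

```python
def pathway_activity(expression, rp, rn):
    """Compute pa_s = sum_m G_sm * (rp_m - rn_m) for every sample s.

    expression : dict sample -> dict gene -> expression value (G_sm)
    rp, rn     : dict gene -> non-negative weight (hypothetical values here;
                 in DIOPTRA they are decided by the optimization model)
    """
    for gene in rp:
        if rp[gene] < 0 or rn[gene] < 0:
            raise ValueError(f"weights for {gene} must be non-negative")
        # Mirrors the role of the binary variable L_m: a gene contributes
        # either positively or negatively, never both.
        if rp[gene] > 0 and rn[gene] > 0:
            raise ValueError(f"{gene} has both a positive and a negative weight")

    return {
        sample: sum(genes[gene] * (rp[gene] - rn[gene]) for gene in rp)
        for sample, genes in expression.items()
    }


# Toy data: two samples, three genes in one pathway.
expression = {
    "s1": {"g1": 2.0, "g2": 1.0, "g3": 4.0},
    "s2": {"g1": 1.0, "g2": 3.0, "g3": 0.5},
}
rp = {"g1": 0.5, "g2": 0.0, "g3": 0.0}   # positive weights
rn = {"g1": 0.0, "g2": 0.25, "g3": 0.0}  # negative weights

print(pathway_activity(expression, rp, rn))
```

With these illustrative weights, sample s1 receives a positive activity and s2 a negative one, which is the kind of separation the objective function is designed to produce between classes.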

Transcriptional Programming (T-Pro) Workflow

The T-Pro workflow for genetic circuit compression involves both wetware and software components [3]:

Wetware Expansion: Engineering synthetic repressor/anti-repressor transcription factor sets responsive to orthogonal signals (IPTG, D-ribose, cellobiose).

Algorithmic Enumeration: Modeling circuits as directed acyclic graphs and systematically enumerating circuits in sequential order of increasing complexity to identify the most compressed circuit for a given truth table.

Predictive Design Workflow: Accounting for genetic context to quantitatively predict expression levels, enabling prescriptive performance design.

Experimental Validation: Implementing designed circuits in microbial hosts and measuring performance against predictions using fluorescence-based assays and sorting via FACS [3].
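The idea behind the algorithmic enumeration step, searching candidate circuits in order of increasing complexity and stopping at the first one that matches the truth table, can be shown with a generic Boolean analogue. The sketch below is not the T-Pro algorithm: it assumes two inputs, uses abstract NOT and NOR gates instead of repressor and anti-repressor parts, and counts gates in tree-shaped formulas without sharing. All function names are illustrative.

```python
from itertools import product

N_INPUTS = 2
ROWS = 1 << N_INPUTS          # number of truth-table rows
FULL = (1 << ROWS) - 1        # bitmask with every row set


def input_mask(i):
    """Truth table of input i as a bitmask (bit r set if the input is 1 in row r)."""
    return sum(1 << r for r in range(ROWS) if (r >> (N_INPUTS - 1 - i)) & 1)


def truth_table_to_mask(outputs):
    """Convert a list of 0/1 outputs, ordered by row 00, 01, 10, 11, to a bitmask."""
    return sum(1 << r for r, out in enumerate(outputs) if out)


def min_gate_count(target, max_gates=8):
    """Smallest number of NOT/NOR gates (formula size) realizing `target`."""
    best = {input_mask(i): 0 for i in range(N_INPUTS)}
    by_cost = {0: list(best)}
    if target in best:
        return 0
    for k in range(1, max_gates + 1):
        # NOT applied to every function that needs k-1 gates.
        candidates = [FULL & ~f for f in by_cost.get(k - 1, [])]
        # NOR applied to every pair whose costs add up to k-1.
        for i in range(k):
            j = k - 1 - i
            for f, g in product(by_cost.get(i, []), by_cost.get(j, [])):
                candidates.append(FULL & ~(f | g))
        # Costs are visited in increasing order, so the first hit is minimal.
        by_cost[k] = []
        for c in candidates:
            if c not in best:
                best[c] = k
                by_cost[k].append(c)
        if target in best:
            return best[target]
    return None  # not reachable within max_gates


for name, table in [("OR", [0, 1, 1, 1]), ("AND", [0, 0, 0, 1]), ("XOR", [0, 1, 1, 0])]:
    print(name, min_gate_count(truth_table_to_mask(table)))
```

Because candidates are generated strictly by increasing gate count, the search returns the most compact formula it can express, which mirrors the compression goal described above in a much simpler setting.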

Visualization of Optimization Workflows

Figure: Combinatorial Optimization Screening Pipeline (diagram not available).

Figure: Mathematical Optimization Framework (diagram not available).

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagents for Combinatorial Optimization Experiments

| Reagent/Resource | Function | Application Examples | Key Characteristics |

|---|---|---|---|

| Synthetic Transcription Factors [3] | Regulation of gene expression in genetic circuits | T-Pro circuit design; Orthogonal regulation | Ligand responsiveness; DNA binding specificity; Modular design |

| Biosensors [23] | Detection of metabolite production | High-throughput screening; Metabolic engineering | Fluorescence output; Sensitivity; Dynamic range |

| CRISPR/Cas Systems [23] | Genome editing; Multiplex integration | Library generation; Pathway integration | Editing efficiency; Multiplexing capability; Orthogonality |

| Orthogonal Inducers [3] | Control of synthetic genetic circuits | IPTG; D-ribose; Cellobiose in T-Pro | Orthogonality; Cell permeability; Non-toxicity |

| Fluorescent Reporters [23] [3] | Quantification of gene expression | Circuit characterization; Screening | Brightness; Stability; Spectral properties |

| Pathway Databases [25] | Source of biological pathway information | KEGG; Model construction | Coverage; Annotation quality; Currency |

Combinatorial optimization approaches for multivariate pathway tuning represent a powerful paradigm shift from traditional sequential optimization methods in synthetic biology [23]. The comparative analysis presented here demonstrates that method selection depends critically on the specific application context, available resources, and desired outcomes. For metabolic pathway optimization, combinatorial library screening approaches offer established, practical solutions, while emerging mathematical optimization frameworks like DIOPTRA show promise for analysis of complex biological systems [25]. Meanwhile, novel approaches like Transcriptional Programming (T-Pro) demonstrate how integrated wetware-software solutions can achieve predictive design with minimal genetic footprint [3].

As synthetic biology continues to advance toward more complex systems, establishing comprehensive benchmarking frameworks for these optimization methodologies becomes increasingly important [24]. Future developments will likely focus on improving computational efficiency, expanding the scope of biological systems that can be effectively optimized, and enhancing the integration between computational design and experimental implementation. The convergence of artificial intelligence with synthetic biology promises to further accelerate these developments, potentially enabling fully automated design-build-test-learn cycles for multivariate pathway optimization [27].

Integrating Biosensors and Flow Cytometry for High-Throughput Screening

The convergence of biosensor technology and advanced flow cytometry is revolutionizing high-throughput screening (HTS) in synthetic biology and drug development. This integration creates a powerful framework for analyzing cellular function with unprecedented depth and speed. Biosensors function as intracellular sentinels, converting specific biological events into detectable signals, while modern flow cytometry platforms, particularly spectral and imaging flow cytometers, provide the multi-parameter, high-throughput detection capability to read these signals across thousands of cells per second [28] [29] [30]. Within synthetic biology, this synergy is particularly valuable for benchmarking genetic circuits and metabolic pathways, enabling researchers to move beyond static endpoint measurements to dynamic, real-time monitoring of cellular processes in live cells [31] [28].

The core value of this integration lies in its ability to close the "design-build-test-learn" cycle central to synthetic biology. By employing biosensors as reporting tools within a flow cytometric readout, researchers can rapidly prototype and iteratively improve synthetic biological systems [31]. This approach provides quantitative, single-cell resolution data that is essential for characterizing the performance and variability of synthetic biology tools, from engineered promoters and riboswitches to complex genetic circuits [28].

Biosensor Classes for Cytometric Detection

Biosensors suitable for integration with flow cytometry can be broadly categorized into two classes based on their molecular architecture: protein-based and nucleic acid-based sensors. Each class offers distinct advantages for monitoring different types of intracellular events.

Table 1: Key Biosensor Classes for Flow Cytometric Integration

| Category | Biosensor Type | Sensing Principle | Key Advantages | Common Cytometric Applications |

|---|---|---|---|---|

| Protein-Based | Transcription Factors (TFs) | Ligand binding induces conformational change, regulating gene expression [28]. | Suitable for high-throughput screening; broad analyte range [28]. | Metabolite sensing, stress response profiling [28]. |

| Protein-Based | Two-Component Systems (TCSs) | Sensor kinase autophosphorylates and transfers phosphate to a response regulator [28]. | High adaptability; environmental signal detection [28]. | Sensing extracellular ions, pH, small molecules [28]. |

| Protein-Based | G-Protein Coupled Receptors (GPCRs) | Ligand binding activates intracellular G-proteins and downstream pathways [28]. | High sensitivity; complex signal amplification [28]. | Ligand screening, signal transduction studies [28]. |

| RNA-Based | Riboswitches | Ligand-induced RNA conformational change affects translation or transcription [28]. | Compact genetic footprint; reversible response [28]. | Real-time regulation of metabolic fluxes [28]. |

| RNA-Based | Toehold Switches | Base-pairing with a trigger RNA activates translation of a downstream reporter gene [28]. | High specificity; programmable logic gates [28]. | RNA-level diagnostics, logic-gated pathway control [28]. |

The performance of these biosensors is quantified by several critical metrics. The dynamic range refers to the span between the minimal and maximal detectable signals, while the operating range defines the concentration window of the analyte where the biosensor performs optimally [28]. For high-throughput screening, the response time—the speed at which the biosensor reacts to changes—is crucial for capturing rapid cellular dynamics. Finally, the signal-to-noise ratio determines the clarity and reliability of the output, directly impacting the sensitivity and statistical power of the screen [28].
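Two of these metrics can be estimated directly from per-cell fluorescence values. The Python sketch below uses one common convention, which is an assumption here since the source does not fix formulas: dynamic range is reported as the fold change between the mean induced and mean uninduced signal, and signal-to-noise ratio as the difference in means divided by the standard deviation of the uninduced population. The fluorescence values are invented for illustration.

```python
import statistics


def biosensor_metrics(uninduced, induced):
    """Summarize a biosensor from fluorescence readings of two populations.

    uninduced, induced : lists of fluorescence values (arbitrary units)
    Returns fold-change dynamic range and a simple signal-to-noise ratio.
    """
    mean_off = statistics.mean(uninduced)
    mean_on = statistics.mean(induced)
    sd_off = statistics.stdev(uninduced)  # noise of the background population

    return {
        "dynamic_range_fold": mean_on / mean_off,
        "signal_to_noise": (mean_on - mean_off) / sd_off,
    }


# Illustrative readings, not measured data.
off_state = [100, 110, 90, 105, 95]
on_state = [1000, 1100, 900, 1050, 950]
print(biosensor_metrics(off_state, on_state))
```

Other definitions are in use, for example reporting the dynamic range as an absolute signal difference, so the convention should be stated alongside any benchmark result.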

Technological Platforms and Performance Benchmarking

Advanced Flow Cytometry Modalities

The choice of flow cytometry platform significantly impacts the quality and quantity of data that can be acquired from integrated biosensors.

- Spectral Flow Cytometry: This technology represents a significant advancement over conventional cytometry. Instead of using optical filters to direct narrow wavelength bands to specific detectors, spectral cytometers capture the full emission spectrum of every fluorophore across a wide range of wavelengths using a prism or diffraction grating and an array of highly sensitive detectors [29]. This allows for improved signal resolution and the ability to multiplex more fluorophores, even with significant spectral overlap. It is particularly beneficial for complex screens involving multiple biosensors reporting on different pathway activities simultaneously [29].

- Imaging Flow Cytometry (IFC): IFC merges the high-throughput capabilities of conventional flow cytometry with high-resolution morphological imaging. Instruments like the Amnis ImageStreamX or the Thermo Fisher Attune CytPix capture images of each cell as it flows through the detection system [30]. This provides not only quantitative fluorescence data from biosensors but also enables spatial analysis of signal localization within the cell, which is critical for biosensors that report on subcellular events such as transcription factor nuclear translocation or organelle-specific metabolite pools [30].
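The spectral unmixing step used by spectral cytometers can be illustrated with an ordinary least-squares fit. The sketch below is a simplified stand-in for what instrument software does: it assumes exactly two fluorophores, noiseless reference spectra, and an unweighted fit, and the spectra themselves are invented numbers across four hypothetical detectors.

```python
def unmix_two(reference_a, reference_b, observed):
    """Least-squares abundances (xa, xb) such that xa*A + xb*B approximates observed.

    Solves the 2x2 normal equations for two reference spectra measured
    on the same detector array.
    """
    aa = sum(a * a for a in reference_a)
    bb = sum(b * b for b in reference_b)
    ab = sum(a * b for a, b in zip(reference_a, reference_b))
    ay = sum(a * y for a, y in zip(reference_a, observed))
    by = sum(b * y for b, y in zip(reference_b, observed))

    det = aa * bb - ab * ab
    if det == 0:
        raise ValueError("reference spectra are collinear and cannot be unmixed")

    xa = (ay * bb - by * ab) / det
    xb = (by * aa - ay * ab) / det
    return xa, xb


# Hypothetical, heavily overlapping emission profiles over four detectors.
spectrum_a = [0.1, 0.6, 0.3, 0.0]
spectrum_b = [0.0, 0.3, 0.5, 0.2]

# A cell carrying 3 units of fluorophore A and 2 units of fluorophore B.
mixed = [3 * a + 2 * b for a, b in zip(spectrum_a, spectrum_b)]
print(unmix_two(spectrum_a, spectrum_b, mixed))
```

Even though the two profiles overlap on the middle detectors, the full-spectrum fit separates their contributions, which is why overlapping biosensor reporters can be multiplexed on these instruments.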

Table 2: Comparative Analysis of Flow Cytometry Platforms for Biosensor Screening

| Platform Characteristic | Conventional Flow Cytometry | Spectral Flow Cytometry | Imaging Flow Cytometry (IFC) |

|---|---|---|---|

| Key Principle | One detector-one fluorophore via optical filters [29]. | Full-spectrum capture with spectral unmixing [29]. | High-speed cellular imaging during flow [30]. |

| Multiplexing Capacity | Moderate (typically 10-20 parameters) [29]. | High (40+ parameters demonstrated) [29]. | Moderate, limited by camera sensitivity and speed [30]. |

| Primary Advantage with Biosensors | High-throughput, well-established protocols. | Superior resolution for complex fluorescent panels [29]. | Spatial context of biosensor activity [30]. |

| Typical Throughput | Very High (>10,000 cells/sec) [30]. | High (~10,000 cells/sec) [29]. | Moderate (up to 5,000 cells/sec) [30]. |

| Best Suited For | Rapid screening of well-separated fluorophores. | Complex screens with spectral overlap [29]. | Subcellular localization and morphological analysis [30]. |

Benchmarking Framework and Performance Metrics

Rigorous benchmarking is essential for evaluating the performance of integrated biosensor-flow cytometry platforms. The foundational guidelines for such benchmarking involve clearly defining the purpose, selecting appropriate methods and reference datasets, and using standardized evaluation criteria [19].

Performance is typically assessed using a combination of the following metrics:

- Sensitivity and Limit of Detection (LOD): The lowest analyte concentration that produces a statistically significant signal change. For example, a novel electrochemical biosensor for CD4+ T-cells demonstrated a LOD of 1.41×10^5 cells/mL, adequate for clinical HIV monitoring [32].

- Dynamic Range: The concentration range over which the biosensor produces a quantifiable response to the analyte; the linear range is the portion of it over which the response is proportional to concentration. The aforementioned CD4+ sensor showed a linear range from 1.25×10^5 to 2×10^6 cells/mL, covering both healthy and diseased states [32].

- Specificity and Signal-to-Noise Ratio: The ability to distinguish the target signal from background or off-target interference. This is often validated by testing against non-target cell types (e.g., monocytes, neutrophils) [32].

- Temporal Resolution: For dynamic studies, the response time of the biosensor and the sampling rate of the cytometer determine the ability to track fast biological processes.
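
The first three metrics can be estimated from a simple calibration series. The sketch below is illustrative only: it assumes a linear calibration, uses the common convention LOD = 3.3 × SD(blank) / slope, and all function names are hypothetical rather than taken from any cited study.

```python
from statistics import mean, stdev


def linear_fit(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


def limit_of_detection(blank_signals, slope, k=3.3):
    """LOD in concentration units: k * SD of blank replicates / calibration slope.

    k = 3.3 is a widely used convention, assumed here; adjust to your guideline.
    """
    return k * stdev(blank_signals) / slope


def signal_to_noise(sample_signals, blank_signals):
    """Background-subtracted mean signal divided by the blank standard deviation."""
    return (mean(sample_signals) - mean(blank_signals)) / stdev(blank_signals)
```

In use, `linear_fit` would be applied to the calibration standards inside the linear range, and the resulting slope passed to `limit_of_detection` together with replicate blank measurements.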

Experimental Protocols for Integrated Workflows

Workflow for Metabolic Flux Analysis Using TF-Based Biosensors

This protocol details the use of transcription factor-based biosensors in yeast to screen a library of metabolic engineering variants for enhanced metabolite production [28].

1. Biosensor and Strain Preparation:

- Clone a biosensor construct where the output of a metabolite-responsive transcription factor (e.g., LysR-type for organic acids) drives the expression of a fluorescent protein (e.g., GFP) [28].

- Transform this biosensor plasmid into a host microbial chassis (e.g., S. cerevisiae) harboring a diverse library of engineered metabolic pathways.

2. Cultivation and Induction:

- Grow transformed cells in a 96-well or 384-well deep-well plate with appropriate selective medium.

- Induce pathway expression during mid-log phase if using inducible promoters.

3. Flow Cytometric Analysis:

- Dilute cultures to an optimal density for cytometer acquisition (e.g., ~10^6 cells/mL).

- Acquire data on a spectral or conventional flow cytometer. For a GFP reporter, a 488-nm laser and standard FITC filter set (530/30 nm) are typical.

- Record fluorescence intensity together with forward and side scatter for a minimum of 10,000 events per sample so that population statistics are robust.

4. Data Analysis and Hit Identification:

- Gate cells based on forward and side scatter to exclude debris and aggregates.

- Analyze the fluorescence distribution of the biosensor output.

- Isolate the top-performing "hit" strains (e.g., the top 1-5% of the population with the highest fluorescence) for further validation and pathway characterization.

Diagram 1: Biosensor-based metabolic screening workflow.
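
Step 4 of this workflow can be sketched in a few lines. The example assumes each event is a dictionary with hypothetical keys `fsc`, `ssc`, and `gfp`, uses a simple rectangular scatter gate, and treats the top fraction as a per-event cutoff; real analyses typically use polygon gates and dedicated cytometry software.

```python
def gate_events(events, fsc_range, ssc_range):
    """Keep events whose scatter values fall inside a rectangular gate."""
    return [
        e for e in events
        if fsc_range[0] <= e["fsc"] <= fsc_range[1]
        and ssc_range[0] <= e["ssc"] <= ssc_range[1]
    ]


def top_fraction_cutoff(values, fraction):
    """Smallest value that still belongs to the brightest `fraction` of events."""
    ranked = sorted(values, reverse=True)
    n_hits = max(1, round(len(ranked) * fraction))
    return ranked[n_hits - 1]


def select_hits(events, fraction=0.05, channel="gfp"):
    """Return gated events at or above the top-fraction fluorescence cutoff."""
    cutoff = top_fraction_cutoff([e[channel] for e in events], fraction)
    return [e for e in events if e[channel] >= cutoff]
```

A call such as `select_hits(gate_events(events, (50, 200), (10, 100)), fraction=0.05)` mirrors the "gate, then take the top 1-5%" logic; the gate bounds shown are placeholders.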

Workflow for RNA Logic Gate Validation Using Toehold Switches

This protocol uses RNA-based toehold switch biosensors to validate the operation of synthetic RNA circuits inside cells, read out via flow cytometry.

1. Circuit and Sensor Co-Design:

- Design a toehold switch biosensor where the trigger RNA sequence is the output of an upstream synthetic RNA circuit [28].

- The toehold switch, upon binding the trigger RNA, undergoes a conformational change that allows translation of a downstream reporter protein (e.g., mCherry).

2. Cell Transfection and Culture:

- Co-transfect mammalian cells (e.g., HEK293T) with plasmids encoding the RNA circuit and the toehold switch reporter.

- Include appropriate controls: a circuit with a non-functional mutation and a sensor without a trigger.

3. Flow Cytometry and Data Analysis:

- After 24-48 hours, harvest cells and resuspend in a suitable buffer for flow analysis.

- Use a cytometer equipped with a yellow-green (561 nm) laser and a 610/20 nm bandpass filter for mCherry detection.

- The fraction of mCherry-positive cells and the mean fluorescence intensity (MFI) directly report on the activity and output strength of the RNA logic gate.
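
The two readouts in the last step can be computed as follows. This is a minimal sketch that assumes the positive gate is set at a chosen quantile of the sensor-only negative control (99% by default); that gating rule and the function names are assumptions, not part of the cited protocol.

```python
from statistics import mean


def positive_threshold(negative_control, quantile=0.99):
    """Gate placed at the given quantile of the negative-control intensities."""
    ranked = sorted(negative_control)
    index = min(len(ranked) - 1, int(quantile * len(ranked)))
    return ranked[index]


def summarize_reporter(sample, threshold):
    """Fraction of reporter-positive cells and mean fluorescence intensity (MFI)."""
    positives = [v for v in sample if v > threshold]
    return {
        "fraction_positive": len(positives) / len(sample),
        "mfi_all": mean(sample),
        "mfi_positive": mean(positives) if positives else 0.0,
    }
```

Comparing these summaries between the functional circuit, the mutated circuit, and the sensor-only control gives a simple ON/OFF estimate for the gate.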

The Scientist's Toolkit: Key Reagents and Materials

Successful integration of biosensors with flow cytometry requires a carefully selected suite of reagents and instruments.

Table 3: Essential Research Reagent Solutions for Integrated Workflows

| Item Name | Function/Benefit | Example Application |
|---|---|---|
| Fluorescent Dyes (e.g., eFluor dyes, Spark PLUS) | Bright, photostable labels for biosensor outputs. Multiplexing with minimal spillover [29]. | Multi-analyte detection in spectral cytometry [29]. |
| Characterized DNA Parts (from BIOFAB) | Well-characterized promoters/RBSs for predictable biosensor construction [31]. | Standardized biosensor assembly and tuning [31]. |
| Anti-CD4 Antibody (Functionalized) | Immobilization on electrode surface for specific cell capture [32]. | Functionalizing electrochemical microfluidic sensors [32]. |
| Cell Separation Chips (DFF Chip) | Label-free separation of cell populations (e.g., monocytes from PBMC) [32]. | Sample preprocessing to reduce interference in complex samples [32]. |
| Spectral Unmixing Software (e.g., SpectroFlo) | Algorithmic separation of overlapping fluorescence signals [29]. | Analyzing data from highly multiplexed biosensor panels [29]. |
| Microfluidic Electrochemical Chip | Integrated, portable platform for cell detection and enumeration [32]. | Point-of-care diagnostic development and in-field screening [32]. |

Implementation and Benchmarking Considerations

Implementing a robust biosensor-flow cytometry screening platform requires careful planning. A major consideration is biosensor characterization prior to large-scale screening. Key parameters that must be empirically determined include the dose-response curve, dynamic range, response time, and specificity in the intended host chassis [28]. Furthermore, chassis effects can significantly influence biosensor performance; the same genetic construct may behave differently in E. coli, yeast, or mammalian cells due to variations in transcription/translation machinery, metabolic background, and growth conditions [31].

From a benchmarking perspective, the selection of appropriate reference datasets and ground truths is critical for validating the integrated platform [19]. For metabolic biosensors, this could involve correlating fluorescence output with intracellular metabolite concentrations measured via LC-MS. For cell-based biosensors, comparison with established techniques like ELISA or manual microscopy provides a performance baseline [19]. The integration of AI and machine learning for data analysis is becoming increasingly important, helping to deconvolve complex multiparameter data, identify subtle patterns, and improve the accuracy of high-throughput screening outcomes [33] [30].
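
As one way to quantify agreement with a ground truth, biosensor fluorescence can be correlated with LC-MS metabolite measurements taken on the same strains. The sketch below computes a Pearson coefficient in plain Python; the choice of Pearson (rather than, say, a rank correlation) and the function name are assumptions made for illustration.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation between paired measurements, e.g. MFI vs. LC-MS titer."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equally sized samples with at least 2 points")
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    var_x = sum((xi - mx) ** 2 for xi in x)
    var_y = sum((yi - my) ** 2 for yi in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)
```

A high coefficient supports using fluorescence as a proxy for metabolite concentration only within the concentration range that was actually sampled.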

Diagram 2: Biosensor signal transduction and detection.

Utilizing Barcoding Strategies to Track Library Diversity in Silico

In synthetic biology, tracking the diversity of large genetic libraries underpins work ranging from metabolic engineering to the development of novel therapeutic agents. Barcoding strategies, which incorporate unique DNA sequences into library members, have emerged as a powerful experimental solution. The in silico analysis of these barcodes is what turns raw sequencing data into reliable, biologically meaningful results. As barcoding experiments grow in scale and complexity, the choice of computational tools and benchmarking frameworks becomes increasingly important. This guide compares the performance and capabilities of contemporary software tools for the extraction, filtering, and analysis of cellular barcodes, giving researchers the data needed to inform their analytical workflows.

Benchmarking Computational Tools for Barcode Analysis

The core challenge in barcode analysis is distinguishing true, biological barcodes from erroneous sequences introduced by PCR amplification and sequencing. Several computational strategies have been developed to address this, each with distinct strengths and limitations. The following table summarizes the key features and performance metrics of available tools.

Table 1: Comparison of Cellular Barcoding Analysis Tools

| Tool Name | Supported Barcode Types | Key Filtering Strategies | Performance Highlights | Applicable Data |
|---|---|---|---|---|
| CellBarcode [34] | Fixed-length, variable-length (with flanking sequence) | Reference, Threshold, Cluster, UMI-based | Barcode extraction & cluster filtering are 20x and 70x faster than genBaRcode, respectively [34] | Bulk DNA-seq, scRNA-seq |
| CellBarcodeSim [34] | Simulated libraries (e.g., lentiviral, VDJ) | Simulation-based ground truth for strategy validation | High Pearson correlation with experimental data structure [34] | Simulated bulk DNA-seq |
| BARtab / bartools [35] | Diverse cellular barcodes (lineage tracing) | End-to-end analysis pipeline | Designed for flexibility and scalability in single-cell & spatial transcriptomics [35] | Single-cell RNA-seq, Spatial transcriptomics |
| genBaRcode [34] | Restricted diversity of barcode types | Not Specified | Serves as a performance benchmark for CellBarcode [34] | Bulk sequencing |
| Bartender [34] | Not Specified | Not Specified | Less versatile in analysis strategies [34] | Bulk sequencing |
| CellTagR [34] | Restricted diversity of barcode types | Not Specified | Less versatile in analysis strategies [34] | scRNA-seq |

Performance benchmarking, using simulated data from CellBarcodeSim, reveals that the effectiveness of filtering strategies is highly dependent on experimental parameters. For instance, threshold filtering involves a fundamental trade-off between recall (finding true barcodes) and precision (avoiding false positives) [34]. Surprisingly, biological factors like the variation in clone size can have a greater impact on filtering performance than technical factors, with lower clone size variation leading to significantly better precision-recall outcomes [34].
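
The precision-recall trade-off of threshold filtering can be made concrete with a small sweep. The sketch below assumes a dictionary of barcode read counts and a known set of true barcodes (as a simulation would provide); the function names and example data are hypothetical.

```python
def precision_recall(called, truth):
    """Precision and recall of a called barcode set against the ground truth."""
    called, truth = set(called), set(truth)
    true_positives = len(called & truth)
    precision = true_positives / len(called) if called else 1.0
    recall = true_positives / len(truth) if truth else 1.0
    return precision, recall


def threshold_sweep(counts, truth, thresholds):
    """Evaluate threshold filtering at several read-count cutoffs."""
    results = []
    for t in thresholds:
        called = [bc for bc, n in counts.items() if n >= t]
        results.append((t, *precision_recall(called, truth)))
    return results


# Hypothetical counts: two true barcodes plus two low-count error sequences.
example_counts = {"AAAA": 100, "CCCC": 5, "AAAT": 3, "GGGG": 1}
example_truth = {"AAAA", "CCCC"}
```

Running `threshold_sweep(example_counts, example_truth, [1, 4, 50])` shows the pattern described above: a low cutoff keeps every true barcode but admits errors, while a high cutoff removes errors at the cost of dropping the small clone.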

Experimental Protocols for Barcode Analysis

Adopting a standardized and rigorous protocol is essential for reproducible barcode analysis. The following section details the methodologies employed by key studies and tools.

Protocol 1: High-Throughput Barcode Extraction and Filtering with CellBarcode

The CellBarcode package provides a comprehensive workflow for processing barcode sequencing data [34]:

- Quality Control and Read Filtering: Assess sequencing quality using the package's functions and remove low-quality sequences.

- Barcode Extraction: Define a regular expression that matches the barcode's structure and its flanking sequences. CellBarcode can extract both fixed-length and variable-length barcodes from FASTQ or BAM files, allowing for mismatches in flanking regions for bulk analysis.

- Filtering Spurious Barcodes: Apply one or more filtering strategies:

  - Reference Filtering: Eliminate barcodes not found in a predefined reference list.

  - Threshold Filtering: Retain barcodes with read counts above a specific threshold, which can be set manually or determined automatically.

  - Cluster Filtering: Remove barcodes that are within a small edit distance of a more abundant barcode.

  - UMI Filtering: If Unique Molecular Identifiers are present, apply additional filters based on UMI counts.

- Visualization and Export: Use package functions to visualize barcode read count distributions and export the final count matrix.
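
The extraction and filtering logic of this protocol can be illustrated independently of any package. The following Python sketch does not use CellBarcode's own functions; the flanking sequences, the 8-nt barcode length, and all function names are hypothetical, and Hamming distance stands in for edit distance, which is only appropriate for fixed-length barcodes.

```python
import re
from collections import Counter

# Hypothetical construct: 5' flank ACGT, 8-nt barcode, 3' flank TTAG.
BARCODE_PATTERN = re.compile(r"ACGT([ACGT]{8})TTAG")


def extract_barcodes(reads, pattern=BARCODE_PATTERN):
    """Count barcodes captured by the first group of a flank-anchored regex."""
    counts = Counter()
    for read in reads:
        match = pattern.search(read)
        if match:
            counts[match.group(1)] += 1
    return counts


def threshold_filter(counts, min_reads):
    """Keep barcodes supported by at least `min_reads` reads."""
    return {bc: n for bc, n in counts.items() if n >= min_reads}


def hamming(a, b):
    """Number of mismatching positions between two equal-length sequences."""
    return sum(x != y for x, y in zip(a, b))


def cluster_filter(counts, max_dist=1):
    """Drop barcodes lying within `max_dist` of a strictly more abundant barcode."""
    kept = {}
    for bc, n in counts.items():
        has_bigger_neighbor = any(
            m > n and len(other) == len(bc) and hamming(bc, other) <= max_dist
            for other, m in counts.items()
        )
        if not has_bigger_neighbor:
            kept[bc] = n
    return kept
```

The pairwise comparison in `cluster_filter` is quadratic in the number of barcodes, which is acceptable for a demonstration but not for large libraries.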

Protocol 2: In Silico Benchmarking with CellBarcodeSim

The CellBarcodeSim kit allows for the simulation of barcoding experiments to optimize filtering strategies [34]:

- Library Production: Generate an in silico library of barcode sequences.

- Cell Labeling and Clonal Expansion: Model the process of cells being labeled with barcodes and subsequent clonal expansion, introducing biological variation in clone sizes.

- Read Construction: Simulate the construction of sequencing reads, including the incorporation of flanking sequences and UMIs if desired.

- PCR and Sequencing: Model the technical noise of PCR amplification and sequencing, including the introduction of errors.

- Strategy Evaluation: Compare the known ground-truth barcodes from the simulation with the output of CellBarcode after applying different filtering strategies. This allows for the quantitative evaluation of precision and recall for each strategy under controlled conditions.
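
A toy version of this simulate-then-evaluate loop is sketched below. It is not CellBarcodeSim: the log-normal clone-size model, the uniform substitution error model, the default parameters, and the function names are all simplifying assumptions chosen to keep the example short.

```python
import random
from collections import Counter


def simulate_reads(n_clones=50, barcode_len=12, sigma=1.0, n_reads=5000,
                   error_rate=0.01, seed=0):
    """Return (true barcode set, observed read counts) for a toy experiment."""
    rng = random.Random(seed)
    # Library production: unique random barcodes.
    library = set()
    while len(library) < n_clones:
        library.add("".join(rng.choice("ACGT") for _ in range(barcode_len)))
    library = sorted(library)
    # Clonal expansion: log-normal sizes; sigma sets the clone-size variation.
    sizes = [rng.lognormvariate(0.0, sigma) for _ in library]
    # PCR and sequencing: sample reads by clone size, then add substitutions.
    counts = Counter()
    for barcode in rng.choices(library, weights=sizes, k=n_reads):
        read = "".join(
            rng.choice("ACGT".replace(base, "")) if rng.random() < error_rate
            else base
            for base in barcode
        )
        counts[read] += 1
    return set(library), counts


def evaluate(counts, truth, threshold):
    """Precision and recall of threshold filtering against the simulated truth."""
    called = {bc for bc, n in counts.items() if n >= threshold}
    true_positives = len(called & truth)
    precision = true_positives / len(called) if called else 1.0
    return precision, true_positives / len(truth)
```

Repeating `evaluate` over a grid of `sigma` and `threshold` values is one way to explore how clone-size variation shifts the precision-recall balance under these assumptions.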

Protocol 3: Microscopy-Readable Barcoding (MiCode) for Phenotypic Screening

This experimental method, which requires subsequent computational analysis, uses fluorescent proteins to create a visual barcode [36]: